Using AI for Automation: A Practical Guide for Teams

Learn how to plan, pilot, and scale AI-driven automation across business processes with practical steps, governance, and real-world workflows. A comprehensive how-to for developers, product teams, and leaders.

This guide outlines a practical, step-by-step path to using AI for automation in your organization. You’ll identify high-impact processes, design safe, scalable agent-driven workflows, and pilot one workflow with governance and measurement in place. Expect a phased approach that reduces risk while enabling rapid learning and iteration.

Why using AI for automation matters

In the modern enterprise, using AI for automation is less about replacing humans and more about augmenting decision-making and execution at scale. AI agents can handle repetitive data tasks, triage routine issues, and coordinate across systems with less human intervention. When paired with clear governance and guardrails, AI-powered automation can reduce cycle times, improve consistency, and free teams to focus on higher-value work. From a strategic perspective, organizations that invest in AI-driven automation often unlock faster feedback loops, which enable continuous improvement. According to Ai Agent Ops, the most successful teams align automation initiatives with business outcomes, not just technology capabilities, and they build capabilities that can adapt as needs evolve.

This section grounds the discussion in practical terms: you’ll learn how to recognize candidate workflows, assess readiness, and design initial experiments that keep risk manageable while delivering tangible learnings. You’ll see that the goal is not to automate every task at once but to create a repeatable pattern for automating decisions, actions, and orchestration across systems. As you proceed, keep your focus on measurable impact, data quality, and privacy considerations.

Core capabilities of AI agents for automation

AI agents bring a blend of language understanding, structured reasoning, and action execution. In automation contexts, these capabilities translate into several concrete functions: interpreting user or system inputs, retrieving and validating data from multiple sources, and orchestrating actions across tools or services. You can compose agent-based automation with components such as task automation engines, API integrations, and governance layers to ensure predictable behavior. A key pattern is modular orchestration: small, testable agent functions that can be combined into end-to-end workflows. For teams exploring this space, it’s essential to map each capability to a business objective, such as reducing manual handoffs or accelerating decision cycles. The Ai Agent Ops framework emphasizes that you should start with a single end-to-end workflow and then progressively extend coverage as confidence grows.
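The modular orchestration pattern described above can be sketched as a chain of small functions that each take a shared context and return an updated copy. This is a minimal illustration, not a reference implementation: the step names, the keyword check standing in for language understanding, and the in-memory order table are all hypothetical.

```python
from typing import Callable, Dict, List

# A step takes the shared context and returns an updated copy of it.
Step = Callable[[Dict], Dict]

def interpret_input(ctx: Dict) -> Dict:
    # Hypothetical stand-in for a language-understanding call.
    text = ctx["message"].lower()
    return {**ctx, "intent": "refund" if "refund" in text else "other"}

def fetch_record(ctx: Dict) -> Dict:
    # Hypothetical in-memory lookup standing in for an API integration.
    orders = {"A-100": {"status": "delivered", "amount": 42.0}}
    return {**ctx, "order": orders.get(ctx["order_id"])}

def decide_action(ctx: Dict) -> Dict:
    # Act automatically only when the intent and the data are both clear.
    if ctx["intent"] == "refund" and ctx["order"] is not None:
        return {**ctx, "action": "open_refund_request"}
    return {**ctx, "action": "route_to_human"}

def run_workflow(steps: List[Step], ctx: Dict) -> Dict:
    # Each step is independently testable; the workflow is just their composition.
    for step in steps:
        ctx = step(ctx)
    return ctx

STEPS = [interpret_input, fetch_record, decide_action]
result = run_workflow(STEPS, {"message": "I want a refund", "order_id": "A-100"})
print(result["action"])
```

Because each step is a plain function, you can unit test it in isolation and reorder or replace steps without touching the rest of the workflow.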

Defining success metrics for AI-driven automation

Before you begin, define what success looks like. Typical metrics include time saved, error rate reduction, and improved throughput, but the best measures tie directly to business outcomes like customer satisfaction, revenue impact, or cost avoidance. Set guardrails for data quality, model reliability, and safety checks, and specify thresholds that trigger human review. Establish a lightweight experimentation framework so you can compare automated vs. manual performance under similar conditions. In practice, you’ll want to document baseline performance, track changes during the pilot, and create a simple dashboard that makes progress visible to stakeholders. Remember to consider governance and privacy requirements from the outset to prevent downstream compliance issues.
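One way to make the baseline-versus-pilot comparison concrete is sketched below. The figures are invented purely for illustration, and the `REVIEW_THRESHOLD` value and the idea of a per-decision confidence score are assumptions; your own thresholds should come from your risk assessment.

```python
from dataclasses import dataclass

@dataclass
class RunStats:
    cases: int
    errors: int
    total_minutes: float

    @property
    def error_rate(self) -> float:
        return self.errors / self.cases

    @property
    def minutes_per_case(self) -> float:
        return self.total_minutes / self.cases

def compare(baseline: RunStats, pilot: RunStats) -> dict:
    # Positive minutes saved and negative error-rate change both mean improvement.
    return {
        "minutes_saved_per_case": baseline.minutes_per_case - pilot.minutes_per_case,
        "error_rate_change": pilot.error_rate - baseline.error_rate,
    }

# Assumed threshold: decisions below this confidence go to a human reviewer.
REVIEW_THRESHOLD = 0.80

def needs_human_review(confidence: float) -> bool:
    return confidence < REVIEW_THRESHOLD

# Made-up numbers, used only to show the calculation.
baseline = RunStats(cases=200, errors=10, total_minutes=2400.0)
pilot = RunStats(cases=200, errors=6, total_minutes=1000.0)
report = compare(baseline, pilot)
print(report)
```

Feeding `report` into a dashboard on a regular schedule gives stakeholders the visible progress the paragraph above calls for.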

Practical workflows and examples of AI-driven automation

Consider a customer support scenario where AI agents triage inquiries, fetch relevant data, and route cases to the right humans or systems. Another example is procurement, where AI can parse supplier quotes, extract key terms, and generate approval requests automatically. In operational contexts, AI agents can monitor dashboards, detect anomalies, and trigger corrective actions in collaboration with human operators. The most successful pilots address a well-defined pain point, such as a bottleneck in a manual process, and demonstrate a clear path from data input to final action, including error handling. Document the steps, data dependencies, and decision criteria so future iterations can reuse and extend the workflow without rebuilding from scratch.
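As a rough sketch of the procurement example, the snippet below extracts two terms from a quote and produces an approval request, falling back to manual review when a term is missing. The quote layout (`Total:` and `Delivery: N days` lines) is a hypothetical format, and the regular expressions stand in for whatever extraction model or service you actually use.

```python
import re
from typing import Optional

def parse_quote(text: str) -> Optional[dict]:
    # Hypothetical quote format: "Total: $1,250.50" and "Delivery: 14 days".
    price = re.search(r"total:\s*\$?([\d,]+(?:\.\d+)?)", text, re.IGNORECASE)
    days = re.search(r"delivery:\s*(\d+)\s*days", text, re.IGNORECASE)
    if not price or not days:
        return None
    return {
        "total": float(price.group(1).replace(",", "")),
        "delivery_days": int(days.group(1)),
    }

def build_request(supplier: str, text: str) -> dict:
    terms = parse_quote(text)
    if terms is None:
        # Error-handling path: never guess missing terms, hand off to a person.
        return {"supplier": supplier, "status": "manual_review", "reason": "missing terms"}
    return {"supplier": supplier, "status": "pending_approval", **terms}

request = build_request("Acme", "Total: $1,250.50\nDelivery: 14 days")
print(request)
```

The explicit `manual_review` branch is the part worth copying: it shows the path from data input to final action, including what happens when the input is incomplete.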

Architecture patterns for automation: choosing the right mix

Effective automation architectures balance autonomy with control. A typical pattern involves orchestrated agents that perform discrete tasks and pass results to a central coordinator. This coordinator implements business rules, logging, and escalation paths. For more complex needs, a hybrid approach combines RPA with AI agents and API integrations, enabling structured processes to be enhanced by intelligent decisions. When evaluating options, consider ease of integration, data locality, latency, and security. The goal is to assemble a practical stack that supports reliable operation, observability, and governance while remaining adaptable to evolving requirements.
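The central-coordinator pattern can be sketched in a few lines. Everything here is an assumption made for illustration: the `Coordinator` class, the single business rule (an auto-approval limit), and the `refund_agent` function are hypothetical, and a real coordinator would persist escalations rather than keep them in memory.

```python
import logging
from typing import Callable, Dict, List

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("coordinator")

Agent = Callable[[dict], dict]

class Coordinator:
    """Routes tasks to agents, applies one business rule, logs, and escalates."""

    def __init__(self, max_auto_amount: float) -> None:
        self.max_auto_amount = max_auto_amount
        self.agents: Dict[str, Agent] = {}
        self.escalations: List[dict] = []

    def register(self, task_type: str, agent: Agent) -> None:
        self.agents[task_type] = agent

    def handle(self, task: dict) -> dict:
        agent = self.agents.get(task["type"])
        if agent is None:
            return self._escalate(task, "no agent registered")
        try:
            result = agent(task)
        except Exception as exc:
            return self._escalate(task, f"agent failed: {exc}")
        # Business rule: large amounts always need a human decision.
        if result.get("amount", 0) > self.max_auto_amount:
            return self._escalate(task, "amount above auto-approval limit")
        log.info("completed task %s", task["id"])
        return {"status": "done", **result}

    def _escalate(self, task: dict, reason: str) -> dict:
        log.warning("escalating task %s: %s", task["id"], reason)
        record = {"status": "escalated", "task_id": task["id"], "reason": reason}
        self.escalations.append(record)
        return record

def refund_agent(task: dict) -> dict:
    # Hypothetical agent: echoes the amount and proposes a refund.
    return {"amount": task["amount"], "decision": "refund"}

coordinator = Coordinator(max_auto_amount=100.0)
coordinator.register("refund", refund_agent)
small = coordinator.handle({"id": "T1", "type": "refund", "amount": 40.0})
large = coordinator.handle({"id": "T2", "type": "refund", "amount": 500.0})
print(small["status"], large["status"])
```

Keeping rules, logging, and escalation in one place means agents stay simple and the control points remain easy to audit.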

Data readiness and governance for automation projects

Data quality and provenance are the foundation for successful AI-driven automation. Start by inventorying data sources, documenting data schemas, and identifying any sensitive fields. Implement data access controls, audit trails, and versioning so changes to data or models are traceable. Establish a lightweight governance model that defines who can deploy, monitor, and modify automation workflows. Pay attention to data drift, model decay, and external dependencies that might affect performance. By prioritizing data governance early, you reduce the risk of data misuse, privacy violations, and regulatory problems as your automation footprint grows.
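A small sketch of two of these controls, masking sensitive fields and writing an audit entry, might look like the following. The `SENSITIVE_FIELDS` set, the field names, and the audit entry layout are assumptions; in practice the set would come from your data inventory and the entries would go to an append-only store.

```python
import hashlib
import json
from datetime import datetime, timezone

# Assumed output of the data inventory: field names flagged as sensitive.
SENSITIVE_FIELDS = {"ssn", "email"}

def mask_record(record: dict) -> dict:
    # Return a copy with sensitive values masked; the input is not modified.
    return {k: ("***" if k in SENSITIVE_FIELDS else v) for k, v in record.items()}

def audit_entry(actor: str, action: str, record: dict, workflow_version: str) -> dict:
    # Store a digest of the masked record so the entry is traceable
    # without copying personal data into the audit log.
    payload = json.dumps(mask_record(record), sort_keys=True)
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "actor": actor,
        "action": action,
        "workflow_version": workflow_version,
        "record_digest": hashlib.sha256(payload.encode("utf-8")).hexdigest(),
    }

record = {"name": "Ada", "email": "ada@example.com", "plan": "pro"}
entry = audit_entry("onboarding-agent", "update_plan", record, "v1.2.0")
print(mask_record(record))
```

Recording the workflow version alongside each action is what makes later changes to data or models traceable.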

Risk management, ethics, and compliance in automation

Automation introduces new risk profiles, including operational failures, biased outcomes, and security vulnerabilities. Build guardrails such as input validation, exception handling, and rollback procedures. Conduct impact assessments for new automations and maintain a clear audit trail. Align automation initiatives with ethical guidelines and industry regulations, and establish a process for incident response and remediation. Engaging legal, compliance, and security teams from the start helps prevent costly rework later and fosters trust with customers and stakeholders.
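The rollback guardrail can be illustrated with a simple compensating-action runner: each action carries an undo function, and a failure undoes completed actions in reverse order. This is a sketch under stated assumptions; the action names and the in-memory `state` list are hypothetical stand-ins for real systems.

```python
from typing import Callable, List, Tuple

# Each action is (name, do, undo). The undo callable reverses the do callable.
Action = Tuple[str, Callable[[], None], Callable[[], None]]

def run_with_rollback(actions: List[Action]) -> dict:
    completed = []
    for name, do, undo in actions:
        try:
            do()
            completed.append((name, undo))
        except Exception as exc:
            # Undo already-completed actions in reverse order.
            rolled_back = []
            for done_name, done_undo in reversed(completed):
                done_undo()
                rolled_back.append(done_name)
            return {
                "ok": False,
                "failed_step": name,
                "error": str(exc),
                "rolled_back": rolled_back,
            }
    return {"ok": True, "completed": [n for n, _ in completed]}

state: List[str] = []

def failing_mailbox() -> None:
    raise RuntimeError("mailbox service unavailable")

outcome = run_with_rollback([
    ("create_account", lambda: state.append("account"), lambda: state.remove("account")),
    ("create_mailbox", failing_mailbox, lambda: None),
])
print(outcome)
```

The returned dictionary doubles as an audit record: it names the failed step, the error, and exactly what was undone.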

Getting started: a phased plan to begin using AI for automation

A practical plan starts with selecting a single, high-potential workflow, building a minimal viable automation, and iterating based on lessons learned. Develop a high-level map of inputs, decisions, actions, and outputs, then translate that map into a pilot that can be executed in a controlled environment. Set up observability, including logs and metrics, so you can quantify improvements and spot issues early. Expand coverage gradually, refining data pipelines, error handling, and governance rules with each iteration. The key is to maintain momentum while keeping risk in check through careful scoping and continuous learning.
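For the observability piece, a lightweight starting point is a decorator that counts calls, failures, and elapsed time per step. The sketch below keeps metrics in a module-level dictionary, which is an assumption made for brevity; `classify_ticket` is a hypothetical step, and a production pilot would export these numbers to a real metrics backend.

```python
import time
from collections import defaultdict
from functools import wraps

# In-memory metrics store, keyed by step name.
metrics = defaultdict(lambda: {"calls": 0, "failures": 0, "seconds": 0.0})

def observed(step_name: str):
    """Record calls, failures, and elapsed time for the wrapped step."""
    def decorator(func):
        @wraps(func)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            metrics[step_name]["calls"] += 1
            try:
                return func(*args, **kwargs)
            except Exception:
                metrics[step_name]["failures"] += 1
                raise
            finally:
                metrics[step_name]["seconds"] += time.perf_counter() - start
        return wrapper
    return decorator

@observed("classify_ticket")
def classify_ticket(text: str) -> str:
    # Hypothetical step: a keyword check standing in for a model call.
    if not text.strip():
        raise ValueError("empty ticket")
    return "billing" if "invoice" in text.lower() else "general"

label = classify_ticket("Invoice #12 is wrong")
try:
    classify_ticket("   ")
except ValueError:
    pass  # The failure is still counted in the metrics.

print(dict(metrics["classify_ticket"]))
```

Because the decorator re-raises exceptions, adding it does not change how the workflow handles errors; it only makes them countable.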

Case study sketch: a hypothetical onboarding automation project

In this hypothetical scenario, a team aims to streamline the onboarding process for new hires. The automation sequence includes collecting documents, verifying identity, provisioning accounts, and notifying stakeholders. The AI agent coordinates with HRIS, IT services, and security tooling, while human approvers review exception cases. The project emphasizes data protections, auditing, and rollback strategies, ensuring that if a step fails, the workflow gracefully retries or escalates. Though simplified, this example illustrates the end-to-end pattern you can replicate in your own environment to validate benefits before broad rollout.
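The "retry or escalate" behavior in this scenario could be sketched as follows. The `run_step` helper, the attempt limit, and the flaky `provision_account` function are hypothetical; a real integration would call your IT service and would likely add backoff between attempts.

```python
import time
from typing import Callable

def run_step(name: str, step: Callable[[], str],
             max_attempts: int = 3, delay_seconds: float = 0.0) -> dict:
    """Try a step up to max_attempts times, then escalate to a human."""
    last_error = ""
    for attempt in range(1, max_attempts + 1):
        try:
            return {"step": name, "status": "ok", "result": step(), "attempts": attempt}
        except Exception as exc:
            last_error = str(exc)
            time.sleep(delay_seconds)
    return {"step": name, "status": "escalated", "error": last_error,
            "attempts": max_attempts}

calls = {"n": 0}

def provision_account() -> str:
    # Hypothetical flaky service: fails twice, then succeeds.
    calls["n"] += 1
    if calls["n"] < 3:
        raise ConnectionError("IT service timeout")
    return "account-created"

def verify_identity() -> str:
    # Hypothetical step that always fails, to show the escalation path.
    raise ValueError("document unreadable")

provisioned = run_step("provision_account", provision_account)
verified = run_step("verify_identity", verify_identity, max_attempts=2)
print(provisioned["status"], verified["status"])
```

An escalated result is where the human approvers mentioned above come in: the workflow stops retrying and hands the exception case to a person with the last error attached.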

Tools & Materials

- Project goals and success criteria document (Defines scope, outcomes, and constraints for the automation effort)

- Sandbox environment with test data access (Isolated space to pilot workflows without impacting production)

- Access to data sources and APIs (Provide necessary read/write permissions for integration points)

- Evaluation metrics template (Template to track ROI, time savings, and quality improvements)

- Security and compliance guidelines (Optional, but recommended for governance)

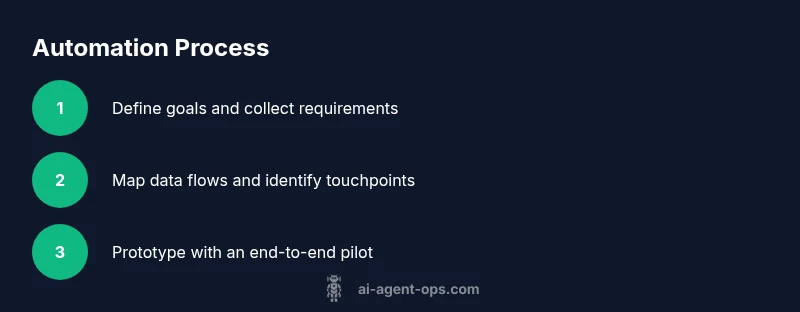

Steps

Estimated time: 4-6 weeks

1. Assess goals and constraints

Identify candidate workflows that are repetitive, data-rich, and time-sensitive. Define what success looks like in measurable terms and set guardrails for risk and data governance. Include a plan for the pilot and the criteria for expanding beyond it.

Tip: Start with a single end-to-end workflow to learn patterns and avoid scope creep.

2. Map current workflows

Document each step, data flow, and decision point in the chosen process. Identify data sources, owners, and potential bottlenecks. This map becomes the blueprint for the automation design and helps reveal where AI is most impactful.

Tip: Use simple flow diagrams to visualize handoffs and failure points.

3. Select AI capabilities and tools

Choose a combination of AI reasoning, automation orchestration, and API integrations that fit the workflow. Prioritize no-code or low-code options if your team lacks deep developer resources.

Tip: Aim for a minimal viable tech stack that you can extend later.

4. Design agent orchestration

Define how agents coordinate, when they should hand off to humans, and how errors are recovered. Create guardrails, retry logic, and clear escalation paths.

Tip: Document responsibilities and decision boundaries between automation and human work.

5. Build a pilot in a controlled environment

Implement a contained version of the workflow in a sandbox. Include logging, observability, and version control so you can measure impact and roll back if needed.

Tip: Put new features behind a toggle so you can test them safely.

6. Monitor, test, and iterate

Run automated tests, monitor performance, and adjust data pipelines and decision rules based on results. Collect feedback from users and stakeholders to refine the workflow.

Tip: Automate anomaly detection to catch issues early.

7. Scale responsibly

Expand the automation to additional workflows only after demonstrating stability, governance, and measurable benefits. Update playbooks and governance artifacts as you scale.

Tip: Keep the governance model lightweight but enforceable.
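The anomaly detection tip from the monitoring step can start very simply. The sketch below flags values whose z-score exceeds a threshold; the z-score method, the threshold of 2.0, and the sample processing times are all assumptions chosen for illustration, and noisier or seasonal metrics would need a more robust method.

```python
from statistics import mean, stdev
from typing import List

def find_anomalies(values: List[float], threshold: float = 2.0) -> List[int]:
    """Return indexes of values more than `threshold` sample standard
    deviations away from the mean."""
    if len(values) < 2:
        return []
    mu = mean(values)
    sigma = stdev(values)
    if sigma == 0:
        return []
    return [i for i, v in enumerate(values) if abs(v - mu) / sigma > threshold]

# Made-up processing times in seconds; the last run is clearly off.
processing_seconds = [30, 31, 29, 30, 32, 30, 31, 29, 30, 120]
anomalies = find_anomalies(processing_seconds)
print(anomalies)
```

Running a check like this on each batch of pilot metrics gives you an early signal to pause the workflow or route cases to a human before problems spread.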

Questions & Answers

What is AI-driven automation?

AI-driven automation uses intelligent agents and models to perform tasks with limited human input. It combines perception, reasoning, and action to coordinate across systems and data sources. The goal is to reduce manual effort while maintaining control and governance.

AI-driven automation uses intelligent agents to perform tasks with limited human input, coordinating actions across systems.

How do I decide where to start?

Begin with a high-impact, data-rich workflow that has clear handoffs. Validate the concept with a pilot, measure the outcomes, and only scale after demonstrating value and governance readiness.

Start with a high-impact workflow and validate it with a pilot before scaling.

Do I need deep AI expertise to begin?

You don’t necessarily need deep AI expertise to start. Many platforms offer no-code or low-code options that let you wire together AI capabilities with existing systems. Build competency gradually and rely on governance for safety.

No deep AI expertise is required to begin; use no-code options and grow capabilities with governance.

What are the main risks with automation?

Key risks include data privacy breaches, incorrect decisions, and system outages. Use guardrails, testing, and audit trails to mitigate these risks and ensure quick rollback if issues arise.

Risks include privacy and incorrect decisions; guardrails and testing help manage them.

How long before I see results?

Results vary by workflow and data readiness. Start with a pilot to establish baselines, then iterate. Expect gradual improvements as you scale governance and refine the automation.

Results depend on the workflow, but a pilot helps you learn and iterate quickly.

How should I measure ROI?

Measure ROI by linking automation outcomes to business impact, such as time saved, error reductions, or improved customer outcomes. Use a simple dashboard to track these metrics over time.

Link outcomes to business impact and track with a simple dashboard.
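To make the ROI link explicit, one common formulation is net benefit divided by cost. The sketch below assumes benefit can be approximated from hours saved and errors avoided; the parameter names and the figures are invented for illustration, and your finance team may define benefit differently.

```python
def automation_roi(hours_saved: float, hourly_cost: float,
                   errors_avoided: int, cost_per_error: float,
                   automation_cost: float) -> float:
    """ROI as (benefit - cost) / cost, where benefit is the assumed value
    of time saved plus the assumed value of errors avoided."""
    if automation_cost <= 0:
        raise ValueError("automation_cost must be positive")
    benefit = hours_saved * hourly_cost + errors_avoided * cost_per_error
    return (benefit - automation_cost) / automation_cost

# Made-up figures, used only to show the calculation.
roi = automation_roi(hours_saved=400, hourly_cost=50.0,
                     errors_avoided=40, cost_per_error=25.0,
                     automation_cost=15000.0)
print(f"ROI: {roi:.0%}")
```

Tracking the inputs separately on the dashboard, rather than only the final ratio, makes it easier to see which driver is changing over time.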


Key Takeaways

- Define clear automation goals and success metrics.

- Choose architecture patterns that match your workload.

- Prioritize data governance and security from day one.

- Pilot before scaling to minimize risk and maximize learning.

- Measure outcomes with observable business impact, not vanity metrics.