AI Agents vs Cybersecurity Pros: A Side-by-Side

An analytical comparison of AI agents and cybersecurity professionals, outlining strengths, limits, use cases, and governance for blended teams.

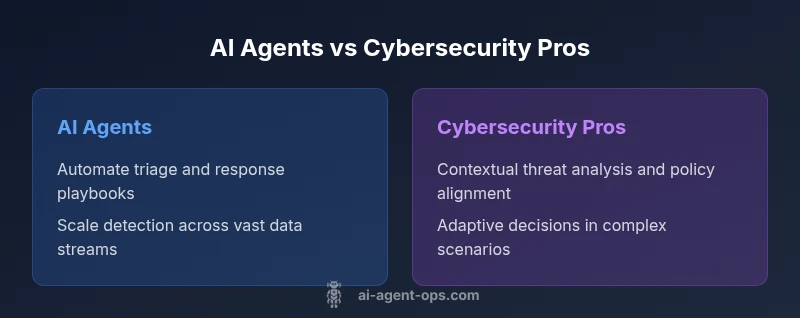

AI agents and cybersecurity professionals serve complementary roles. This side-by-side comparison shows how automation accelerates alert triage and data correlation, while human experts handle strategic defense, policy alignment, and complex incident response. The best outcomes come from a blended approach that scales capability without sacrificing judgment.

Defining AI agents in security operations and cybersecurity professionals

In cyber defense, AI agents are autonomous software entities that monitor, analyze, and respond to signals across networks, endpoints, and cloud services. They use machine learning, anomaly detection, and rule-based orchestration to triage alerts, correlate events, and trigger automated playbooks. Cybersecurity professionals, by contrast, are human experts who interpret data, apply context, and make strategic decisions about risk, policy, and incident response. When comparing AI agents to cybersecurity professionals, the core question is where automation adds value and where human judgment is indispensable. According to Ai Agent Ops, the most effective security postures combine capable agents with skilled practitioners to cover both scale and nuance. In practice, a blended model starts with clear roles, governance, and auditable workflows that prevent automation from outrunning oversight. Recognizing the strengths and limits of each side helps teams design defense strategies that scale without sacrificing critical thinking. This analysis treats the two as working in concert rather than as mutually exclusive.

Core capabilities of AI agents in threat detection and automation

AI agents excel at processing vast data streams in real time, identifying patterns that would overwhelm human analysts. They can ingest telemetry from endpoints, network sensors, identity and access management systems, and cloud environments, then perform rapid triage to determine which alerts warrant human attention. Beyond detection, AI agents can automate repetitive tasks such as enrichment, correlation, and the execution of predefined response playbooks. This automation reduces mean time to containment and improves consistency across incidents. However, capabilities are bounded by the quality of data, feature engineering, and governance controls. The best outcomes arise when AI agents are configured with explainable logic, auditable logs, and throttles that prevent automated actions from escalating beyond defined risk tolerances. In the broader landscape, AI agents also enable proactive defense—predictive analytics that highlight potential attack surfaces before exploitation—yet these insights require human validation before formal action.
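To make the idea of throttled, auditable triage concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not a reference to any particular product: the `Alert` fields, the 1 to 10 severity scale, the thresholds, and the names `TriagePolicy` and `route` are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Alert:
    alert_id: str
    severity: int      # hypothetical scale: 1 (low) to 10 (critical)
    confidence: float  # detector confidence in [0, 1]


@dataclass
class TriagePolicy:
    """Routes alerts to automation or to a human, within a risk tolerance."""
    max_auto_severity: int = 4    # above this, always escalate (assumed threshold)
    min_confidence: float = 0.8   # below this, always escalate (assumed threshold)
    max_auto_actions: int = 3     # throttle: cap on automated actions per window
    audit_log: list = field(default_factory=list)
    _auto_count: int = field(default=0, init=False)

    def route(self, alert: Alert) -> str:
        within_tolerance = (
            alert.severity <= self.max_auto_severity
            and alert.confidence >= self.min_confidence
        )
        if within_tolerance and self._auto_count < self.max_auto_actions:
            self._auto_count += 1
            decision = "auto_playbook"
        else:
            decision = "human_review"
        # Every decision is recorded so it can be reviewed later.
        self.audit_log.append((alert.alert_id, decision))
        return decision


policy = TriagePolicy(max_auto_actions=1)
decisions = [
    policy.route(Alert("a1", severity=2, confidence=0.9)),   # within tolerance
    policy.route(Alert("a2", severity=2, confidence=0.9)),   # throttle reached
    policy.route(Alert("a3", severity=9, confidence=0.99)),  # too severe to automate
]
```

In this sketch the throttle and the severity ceiling both fail toward human review, so a noisy detector cannot trigger an unbounded number of automated actions.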

The value of human expertise in cybersecurity

Cybersecurity professionals bring context, critical thinking, and judgment that automated systems cannot fully replicate. They interpret anomalies within business contexts, assess risk tolerance, and align technical actions with organizational policies and regulatory requirements. Humans excel at scenario planning, red team–blue team exercises, and decision-making under uncertainty. They can adapt to novel threat surfaces, evolving attacker techniques, and complex multi-vector campaigns that require holistic reasoning. Moreover, professionals steward governance, compliance, and explainability—areas where traceability and accountability are essential. The interplay between humans and AI agents is thus a matter of balancing speed with discernment, routine with nuance, and automation with oversight. The ongoing skill development of security teams should emphasize both machine-assisted workflows and the cultivation of strategic thinking, risk assessment, and policy design.

Speed, scale, and reliability: contrasting strengths and limits

Automation excels at speed and scale: AI agents can monitor thousands of signals concurrently, apply standardized playbooks, and generate auditable artifacts in near real time. This yields faster triage, higher throughput, and more consistent tuning of detection rules. Humans, however, remain essential for handling ambiguous signals, adversarial tactics, and complex decisions where context matters. Reliability is a function of data quality, model drift, and governance. Poor data feeds or misconfigurations can propagate false positives or, in worst cases, automated actions that aggravate a security incident. Therefore, a robust approach pairs deterministic, tested automation with human-in-the-loop review for high-stakes actions. For teams, the objective is not to replace humans with machines but to shift the workload toward automation for routine tasks while preserving human oversight for critical decisions and policy implications.
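A human-in-the-loop gate for high-stakes actions can be sketched in a few lines of Python. The action names, the `HIGH_STAKES` set, and the `execute_action` function are assumptions made for illustration; a real deployment would define its own list of actions that require sign-off.

```python
# Hypothetical set of actions considered too risky to run without approval.
HIGH_STAKES = {"isolate_host", "disable_account"}


def execute_action(action, target, approved_by=None):
    """Run routine actions directly; hold high-stakes ones until a human approves."""
    if action in HIGH_STAKES and approved_by is None:
        return {"action": action, "target": target, "status": "pending_approval"}
    return {
        "action": action,
        "target": target,
        "status": "executed",
        "approved_by": approved_by or "automation",
    }


routine = execute_action("enrich_alert", "host-1")
held = execute_action("isolate_host", "host-1")
approved = execute_action("isolate_host", "host-1", approved_by="analyst")
```

The design choice here is that the default path for a high-stakes action is to wait, so a misconfiguration is more likely to produce a delay than an unreviewed containment step.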

Scenarios: threat hunting, incident response, and governance

Threat hunting benefits from AI agents that surface patterns across large data sets, enabling analysts to chase high-signal leads more efficiently. In incident response, automation can run containment playbooks, collect evidence, and coordinate across teams, reducing response time and letting responders focus on complex decisions. Governance and compliance require auditable trails and explainability for automated actions, ensuring policy alignment with industry standards. In all these scenarios, AI agents provide a force multiplier, but human expertise remains crucial for interpretation, ethical considerations, and strategic direction. The best practice is to define clear use cases where automation reliably augments expertise and to establish escalation procedures for when human judgment is indispensable.

Deployment patterns: when to automate and when to rely on humans

A practical deployment pattern starts with a risk-based assessment to identify high-volume, low-complexity tasks suitable for automation, such as log enrichment, correlation, and routine containment steps. As teams mature, automation can take on more sophisticated workflows, including patch orchestration and adaptive response based on evolving attack patterns. However, scenarios that demand adaptability, strategic decision-making, or policy alignment benefit from human involvement. A phased approach with measurable milestones—coverage, accuracy, response time, and auditability—helps organizations scale safely. Regular governance reviews, red-teaming, and redacted incident reports support continuous improvement. In short, automation should complement human expertise, not replace it, to sustain resilient security operations.
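The risk-based selection of high-volume, low-complexity tasks described above could be approximated as a simple filter. This is a sketch only: the `Task` fields, the 1 to 5 complexity scale, the thresholds, and the sample numbers are hypothetical and not drawn from any real environment.

```python
from typing import NamedTuple


class Task(NamedTuple):
    name: str
    weekly_volume: int  # how often the task occurs (made-up figures below)
    complexity: int     # hypothetical scale: 1 (mechanical) to 5 (needs judgment)


def automation_candidates(tasks, min_volume=100, max_complexity=2):
    """Keep high-volume, low-complexity tasks, busiest first."""
    picked = [
        t for t in tasks
        if t.weekly_volume >= min_volume and t.complexity <= max_complexity
    ]
    return sorted(picked, key=lambda t: t.weekly_volume, reverse=True)


tasks = [
    Task("log enrichment", weekly_volume=5000, complexity=1),
    Task("event correlation", weekly_volume=1200, complexity=2),
    Task("policy exception review", weekly_volume=15, complexity=5),
    Task("routine containment", weekly_volume=300, complexity=2),
]
candidates = [t.name for t in automation_candidates(tasks)]
```

Tasks that fall outside the filter, such as the policy review in this example, stay with human analysts until the team decides the thresholds can be relaxed.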

Cost, ROI, and governance considerations

Investing in AI agents involves more than upfront tooling costs; it requires ongoing data management, model maintenance, and governance infrastructure. A blended model can reduce per-incident costs by accelerating triage and standardizing responses, while preserving high-value human labor for decisions that carry organizational risk. ROI should be evaluated in terms of time-to-detection improvements, reduction in manual toil, and overall risk posture gains, rather than solely on cost savings. Governance is essential: establish standard operating procedures, audit trails, explainability, and risk controls. These elements ensure that automation aligns with regulatory expectations and internal risk tolerance. Ai Agent Ops analysis shows that when governance and analytics are integrated, automation yields sustainable improvements without compromising safety or accountability.

Deployment roadmaps and blended-team strategies

A practical blueprint begins with stakeholder alignment: define success metrics, governance boundaries, and escalation paths. Next, inventory existing data sources, determine data quality requirements, and design a modular automation stack that can be iteratively extended. Build a core set of playbooks for triage, enrichment, and containment, then layer in more advanced analytics such as anomaly detection, user-behavior monitoring, and threat intelligence integration. Train security staff to work with AI agents, focusing on data interpretation, model governance, and incident coordination. Finally, implement continuous evaluation: monitor false positive rates, incident outcomes, and policy compliance. A well-executed blended strategy reduces workload on engineers, accelerates response, and results in a stronger security posture.
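For the continuous-evaluation step, the false positive rate can be computed from reviewed outcomes. The sketch below assumes each outcome is a `(flagged, malicious)` pair recorded after analyst review; the function names and the sample data are illustrative assumptions.

```python
def false_positive_rate(outcomes):
    """Share of benign events that were flagged: FP / (FP + TN)."""
    outcomes = list(outcomes)
    fp = sum(1 for flagged, malicious in outcomes if flagged and not malicious)
    tn = sum(1 for flagged, malicious in outcomes if not flagged and not malicious)
    benign = fp + tn
    return fp / benign if benign else 0.0


def alert_precision(outcomes):
    """Share of flagged events that were truly malicious: TP / (TP + FP)."""
    outcomes = list(outcomes)
    tp = sum(1 for flagged, malicious in outcomes if flagged and malicious)
    fp = sum(1 for flagged, malicious in outcomes if flagged and not malicious)
    flagged_total = tp + fp
    return tp / flagged_total if flagged_total else 0.0


# Made-up review results: (flagged by the agent, confirmed malicious by an analyst)
reviewed = [(True, True), (True, False), (False, False), (False, False), (False, True)]
fpr = false_positive_rate(reviewed)
precision = alert_precision(reviewed)
```

Tracking both numbers over time is useful because teams sometimes use "false positive rate" loosely to mean the share of alerts that turn out benign, which is one minus the precision computed here.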

Comparison

| Feature | AI Agents | Cybersecurity Professionals |
|---|---|---|
| Capabilities | Automates triage, correlation, and routine responses at scale | Interprets context, makes policy-aligned decisions, and handles nuanced incidents |
| Response Speed | Rapid automation for ticketing, enrichment, and containment playbooks | Deliberate, context-rich decision-making with strategic oversight |
| Learning & Adaptation | Continuous data-driven improvements with evolving datasets | Tacit knowledge, experience, and expert intuition influencing outcomes |
| Cost & Resource Use | Lower marginal cost per incident with scalability | Higher ongoing cost but essential for risk-aware decision-making |
| Risk & Reliability | Reduces human toil and offers consistent playbooks with governance | High reliability for complex decisions but potential fatigue and bias risks |
| Compliance & Auditability | Traceable automation logs and repeatable processes | Policy-aware decisions with documented rationale and audits |

Positives

- Scales defense operations through automation

- Reduces repetitive workload for human staff

- Improves speed and consistency of routine responses

- Provides data-driven insights for strategic planning

What's Bad

- Potential overreliance on automation leading to skills erosion

- Risk of bias, misconfiguration, and false positives

- Requires ongoing governance, data quality, and validation

A blended approach wins: AI agents accelerate routine work while cybersecurity professionals handle complex reasoning and policy.

Use AI to scale detection and automation; rely on humans for nuanced threat analysis and governance. This combination delivers speed, scale, and accountable decision-making.

Questions & Answers

Can AI agents fully replace cybersecurity professionals?

No. AI agents automate routine tasks and augment decision-making, but complex threat analysis, policy decisions, and strategic defense require human expertise.

AI helps with routine tasks, but humans are still essential for complex threat analysis and policy decisions.

Which tasks are best left to AI agents?

Triage alerts, data correlation, enrichment, and execution of standardized response playbooks are ideal for automation, freeing humans to focus on analysis and strategy.

AI is great for fast triage and routine responses while humans handle the complex decisions.

What are the risks of relying on AI in cybersecurity?

Overreliance, false positives, data privacy concerns, and potential bias or misconfigurations. Governance and validation reduce these risks.

There are real risks with automation; governance and validation help manage them.

How should teams blend AI agents and human experts?

Start with defined roles, implement governance, and monitor performance. Adjust the balance as capabilities mature and threat landscapes evolve.

Define clear playbooks and keep humans in the loop for critical decisions.

What about compliance and auditing when using AI?

Maintain logs, explainability, and traceability for automated decisions; align with industry standards and regulatory requirements.

Keep thorough records of AI-driven actions for audits.

What skills should cybersecurity staff develop to work with AI agents?

Data literacy, model governance, incident coordination, and the ability to interpret AI outputs within business context.

Learn to work with data, understand models, and coordinate responses with automation.

Key Takeaways

- Define clear automation vs. human roles

- Use AI for triage, correlation, and playbooks

- Maintain governance and auditability

- Invest in team training to bridge skills

- Assess cost, risk, and ROI in tandem