n8n AI Agent vs LLM Chain: A Comprehensive 2026 Comparison

An analytical comparison of the n8n AI agent and the LLM chain, covering architecture, use cases, performance, pricing, and integration outcomes for developers and business leaders.

Context and Definitions

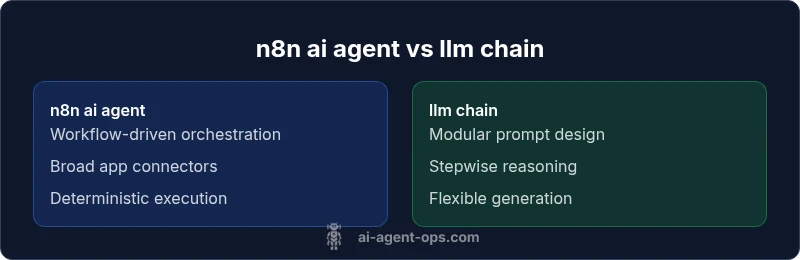

In AI-powered automation, two patterns recur in discussions about efficiency, reliability, and scale: the n8n AI agent and the LLM chain. An n8n AI agent uses n8n, the open-source workflow automation platform, to orchestrate a sequence of actions across apps, services, and data sources. It emphasizes flow control, state management, retries, and robust error handling. In contrast, an LLM chain is a modular sequence of prompts and model invocations that breaks a complex task into discrete steps, with the output of each step feeding into the next in a chain-of-thought-like fashion. The phrase "n8n AI agent vs LLM chain" comes up frequently as teams decide between a deterministic, rule-based workflow and a prompt-driven reasoning approach. According to Ai Agent Ops, the decision is less about universal superiority and more about matching the architecture to the problem: deterministic workflows for predictable, repeatable processes, and prompt chains for tasks that need nuanced reasoning and generation. This article examines the differences, tradeoffs, and practical implications for developers, product teams, and business leaders planning 2026 automation initiatives.
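To make the two patterns concrete, here is a minimal Python sketch of each idea. It does not use the n8n API or any real model SDK: `fake_model`, `run_chain`, and `run_with_retries` are hypothetical names invented for illustration, and the model call is a stub that only echoes its prompt.

```python
from typing import Callable, List, Optional


def fake_model(prompt: str) -> str:
    # Hypothetical stand-in for an LLM call; a real chain would call a model API here.
    return f"[model output for: {prompt}]"


def run_chain(
    templates: List[str],
    user_input: str,
    model: Callable[[str], str] = fake_model,
) -> str:
    """LLM-chain pattern: the output of each step becomes the input of the next."""
    text = user_input
    for template in templates:
        text = model(template.format(input=text))
    return text


def run_with_retries(action: Callable[[], str], max_attempts: int = 3) -> str:
    """Workflow pattern: wrap one action in explicit retry and error handling."""
    last_error: Optional[Exception] = None
    for _ in range(max_attempts):
        try:
            return action()
        except RuntimeError as exc:
            # Remember the failure and try again until attempts run out.
            last_error = exc
    raise RuntimeError(f"failed after {max_attempts} attempts") from last_error


if __name__ == "__main__":
    steps = ["Summarize: {input}", "Translate to French: {input}"]
    print(run_chain(steps, "quarterly sales report"))
```

The chain function carries the reasoning in its prompts and has no control logic beyond passing text forward, while the retry wrapper carries the reliability logic and is indifferent to what the action does. In practice the two can be combined, for example by running each chain step inside a retry wrapper.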