n8n LLM Chain vs AI Agent: Practical Comparison Guide

A detailed, analytical comparison of the n8n basic LLM chain and the AI agent, focusing on architecture, use cases, cost, governance, and integration for developers and leaders.

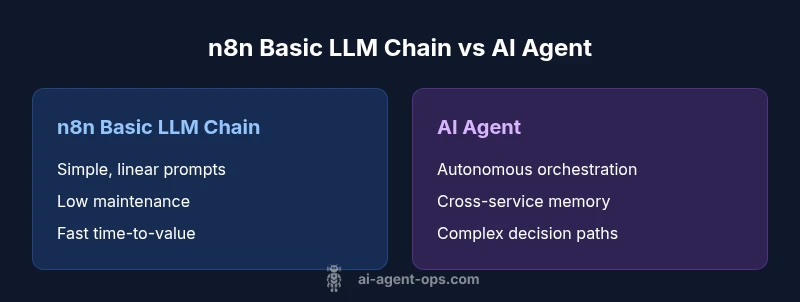

When you compare the n8n basic LLM chain with an AI agent, the choice usually comes down to simplicity versus autonomy. According to Ai Agent Ops, a basic n8n LLM chain is quick to set up for lightweight tasks, with predictable cost and clear prompts. An AI agent, by contrast, orchestrates multiple steps and systems, delivering scalable automation but at a higher setup and maintenance cost. Use case and risk tolerance drive the decision.

What n8n basic llm chain means in practice

In n8n, a basic LLM chain is a straightforward sequence: a single large language model is invoked, data passes through lightweight transformations, and a final result is returned to the user or a downstream system. This pattern benefits from no-code and low-code tooling, which lets developers and product teams quickly assemble prompts, route responses, and add simple decision logic. Because the chain is linear and stateless by design, it tends to be predictable, easy to audit, and quick to modify when requirements shift. The chain-versus-agent question is not just about tooling; it reflects a fundamental architectural choice between simplicity and locality on one side and autonomy and cross-system orchestration on the other. For teams starting new automation efforts, a chain minimizes risk, shortens time to value, and reduces the cognitive load on operators. It is especially appealing when the data domains are well understood, inputs are clean, and edge cases are rare. As you consider this option, also evaluate the governance overhead required to keep prompts stable, and make sure you have a clear rollback path if the LLM output drifts over time.
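The linear, stateless shape described above can be sketched in a few lines of Python. This is an illustration of the pattern only, not n8n's implementation: `build_prompt`, `run_chain`, and `fake_model` are hypothetical names, and `fake_model` is an offline stand-in for whatever model call your workflow actually makes.

```python
from typing import Callable


def build_prompt(ticket_text: str) -> str:
    """Render a fixed prompt template; nothing is remembered between calls."""
    return (
        "Classify the following support ticket as 'billing', 'technical', "
        f"or 'other'. Reply with one word.\n\nTicket: {ticket_text}"
    )


def run_chain(ticket_text: str, call_model: Callable[[str], str]) -> dict:
    """Linear chain: ingest -> prompt -> one model call -> light transform -> result."""
    cleaned = ticket_text.strip()          # ingest
    prompt = build_prompt(cleaned)         # apply the prompt template
    raw = call_model(prompt)               # single model invocation
    label = raw.strip().lower()            # lightweight transformation
    return {"ticket": cleaned, "label": label}


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for a real LLM call so the sketch runs offline."""
    return " Billing\n" if "invoice" in prompt.lower() else "other"


result = run_chain("  My invoice is wrong.  ", fake_model)
print(result)
```

Because every call depends only on its input, the same ticket and the same model response always produce the same result, which is what makes this shape easy to audit and test.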

What AI agents bring to the table and how they differ from chains

AI agents are designed to operate as autonomous orchestrators. Instead of a single prompt in a linear flow, an AI agent coordinates multiple tools, services, and data sources, often maintaining state across interactions. Compared with a chain, an agent adds capabilities such as plan generation, decision-making across domains, memory of past interactions, and adaptive strategies. The architecture typically includes a controller that issues subgoals, a memory layer that recalls prior context, and a set of tools or connectors the agent can use to accomplish tasks without human intervention. The trade-off is clear: agents offer higher potential for end-to-end automation, deeper integration across systems, and resilience to changing requirements, but they also demand more robust governance, error handling, and monitoring. As organizations scale, the agent approach tends to reduce manual rework and accelerate complex workflows, especially when variability and external dependencies increase. AI agents are not merely fancier prompts; they represent a distributed, adaptive automation paradigm that can evolve with your product roadmap.

Architectural differences: data flow, memory, state, and control

The core architectural distinction between a basic n8n LLM chain and an AI agent lies in data flow, state management, and control flow. A chain typically processes a request through a single or shallow sequence: ingest data, apply a prompt, obtain a model response, and trigger a downstream action. Data movement is linear, and memory is often limited to the current session. In contrast, an AI agent maintains an internal plan, tracks subgoals, and stores contextual information across interactions. The data flow becomes a graph of tasks: a controller synthesizes subprompts, calls multiple tools, and reasons about outcomes before deciding the next step. This enables robust handling of edge cases because the agent can reevaluate its plan and choose alternative paths. Memory layers may include short-term workspace buffers and longer-term state caches, with policies for data retention and privacy. Control mechanisms shift from deterministic sequencing to adaptive orchestration, where failure modes are handled by fallback tools, retries, and escalation rules. In this sense, the AI agent embodies a more resilient, scalable approach, but at the price of added operational complexity. The choice between the two often hinges on the required degree of autonomy and the acceptable level of operational complexity.
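To make the controller, memory, and fallback ideas concrete, here is a minimal sketch of an agent-style control loop. Everything in it is an assumption for illustration: the `Agent` class, the tools `crm`, `crm_cache`, and `email`, and the rule-based `planner` are hypothetical, and in a real agent the planner would be a model call rather than a few `if` statements.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple

Step = Tuple[str, str, str]             # (subgoal, tool name, argument)
Record = Tuple[str, str, bool, str]     # (subgoal, tool name, succeeded, outcome)


@dataclass
class Agent:
    tools: Dict[str, Callable[[str], str]]
    fallbacks: Dict[str, str] = field(default_factory=dict)  # tool -> fallback tool
    memory: List[Record] = field(default_factory=list)
    max_retries: int = 1

    def run_step(self, subgoal: str, tool_name: str, arg: str) -> Optional[str]:
        """Try the tool, retry on failure, then try its fallback; None means escalate."""
        candidates = [tool_name] * (1 + self.max_retries)
        if tool_name in self.fallbacks:
            candidates.append(self.fallbacks[tool_name])
        for name in candidates:
            try:
                outcome = self.tools[name](arg)
            except Exception as exc:
                self.memory.append((subgoal, name, False, str(exc)))
                continue
            self.memory.append((subgoal, name, True, outcome))
            return outcome
        return None

    def run(self, goal: str, planner, max_steps: int = 5) -> str:
        """Ask the planner for the next step until it reports completion."""
        for _ in range(max_steps):
            step = planner(goal, self.memory)
            if step is None:
                return "done"
            if self.run_step(*step) is None:
                return "escalate"
        return "escalate"  # step budget exhausted


def planner(goal: str, memory: List[Record]) -> Optional[Step]:
    """Hypothetical rule-based stand-in for an LLM planner."""
    done = {subgoal for subgoal, _, ok, _ in memory if ok}
    if "lookup" not in done:
        return ("lookup", "crm", goal)
    if "notify" not in done:
        return ("notify", "email", memory[-1][3])  # pass along the last outcome
    return None


def crm(query: str) -> str:
    raise ConnectionError("crm unavailable")  # simulate an outage


def crm_cache(query: str) -> str:
    return f"cached record for {query}"


def email(body: str) -> str:
    return f"sent: {body}"


agent = Agent(
    tools={"crm": crm, "crm_cache": crm_cache, "email": email},
    fallbacks={"crm": "crm_cache"},
)
status = agent.run("acme", planner)
print(status, len(agent.memory))
```

The memory list doubles as an audit trail: each attempt, including the failed ones, is recorded with the subgoal and tool that produced it, which is the kind of trace the governance discussion later in this guide relies on.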

Use-case patterns: when to choose each approach

Choosing between a basic n8n llm chain and an AI agent is rarely binary. Consider a lightweight, rule-driven workflow such as routing customer inquiries, generating templated responses, or performing data extraction with minimal variation. In these scenarios, a chain delivers fast value with low maintenance. On the other hand, complex, multi-system automation with dynamic decision-making, cross-service orchestration, and memory of past interactions benefits from an AI agent. Patterns include long-running processes that span human-in-the-loop checkpoints, tasks that require cross-domain reasoning, and environments where new tools and APIs are frequently added. For teams, a staged approach—start with a chain for core functionality, then introduce an agent to handle orchestration and memory—can balance risk and velocity. A hybrid model, using chains for straightforward steps and agents for critical decision points, often yields the most practical outcomes. Finally, assess nonfunctional requirements such as latency tolerance, observability, and governance constraints to guide the choice.

Cost, maintenance, and governance considerations

Cost and maintenance weigh on every chain-versus-agent decision. A chain typically incurs lower upfront costs and simpler maintenance: fewer moving parts, straightforward prompts, and minimal state management. AI agents, while powerful, introduce ongoing costs related to tool subscriptions, memory management, and complex monitoring. From a governance perspective, agents demand stronger controls: role-based access, data lineage, prompt safety reviews, and auditable decision logs. As you scale, consider the cost of retries, tool usage, and potential vendor lock-in. Ai Agent Ops analysis shows that organizations that adopt a hybrid approach often strike a balance between speed and governance, gradually increasing autonomy as confidence and tooling mature. It’s essential to quantify risk tolerance and establish a staged roadmap that documents trigger points for migrating from a chain to an agent. Avoid treating cost as a one-time calculation; factor in maintenance, compliance, and potential rework when requirements evolve.
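One way to keep cost from being a one-time guess is to write the estimate down as a small function and rerun it as usage changes. The sketch below is a deliberately simplified model, and every number in the example calls is a placeholder rather than real pricing: it assumes cost scales with model calls and tokens, that a fraction of calls (`retry_rate`) is retried once, and that tools add a flat per-run fee.

```python
def expected_cost_per_run(
    model_calls: int,
    tokens_per_call: int,
    price_per_1k_tokens: float,
    retry_rate: float = 0.0,
    tool_cost: float = 0.0,
) -> float:
    """Expected cost of one workflow run under a simple linear model.

    retry_rate is the fraction of model calls retried once (0.25 = 25%).
    tool_cost is a flat per-run fee for external tools or connectors.
    """
    effective_calls = model_calls * (1 + retry_rate)
    model_cost = effective_calls * tokens_per_call / 1000 * price_per_1k_tokens
    return model_cost + tool_cost


# Placeholder inputs only; substitute your own measured values.
chain_cost = expected_cost_per_run(
    model_calls=1, tokens_per_call=1000, price_per_1k_tokens=0.002
)
agent_cost = expected_cost_per_run(
    model_calls=6, tokens_per_call=1500, price_per_1k_tokens=0.002,
    retry_rate=0.25, tool_cost=0.01,
)
print(f"chain: {chain_cost:.4f}  agent: {agent_cost:.4f}")
```

The useful part is less the absolute figures than the structure: an agent multiplies model calls per run, and retries and tool fees sit on top of that, so those are the inputs worth measuring first.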

Integration, security, and reliability considerations

Both approaches depend on reliable integrations, but AI agents amplify the importance of robust integration design. When wiring up multiple services, ensure you have resilient connectors, idempotent operations, and clear error-handling policies. Security considerations include data minimization, prompt safety, access controls, and secure storage of memories or state data. Reliability rests on monitoring, tracing, and observability: track prompts, tool invocations, latencies, and failure rates to identify bottlenecks. In contrast, an n8n basic llm chain benefits from the simplicity of fewer moving parts and more predictable behavior, which makes it easier to test and validate in early deployments. Nevertheless, even simple chains should incorporate guardrails, validation, and a rollback path in case prompts drift or API limitations change. For teams building with Ai Agent Ops in mind, the goal is to implement a governance framework that gradually expands automation while maintaining auditable traces of decisions and tool usage.
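Idempotent operations and retries work together: a retry is only safe if repeating the write cannot apply it twice. The following sketch shows the idea with an in-memory stand-in for a downstream service. `IdempotentSink`, `idempotency_key`, and `call_with_retry` are hypothetical helpers written for this example, not part of n8n or any specific API.

```python
import hashlib
import json
import time


class IdempotentSink:
    """In-memory stand-in for a downstream service that deduplicates by key."""

    def __init__(self):
        self.applied = {}

    def apply(self, key: str, payload: dict) -> dict:
        # A repeated key returns the stored result instead of writing again.
        if key not in self.applied:
            self.applied[key] = payload
        return self.applied[key]


def idempotency_key(workflow_id: str, payload: dict) -> str:
    """Derive a stable key from the workflow id and a canonical payload encoding."""
    body = json.dumps(payload, sort_keys=True)
    return hashlib.sha256(f"{workflow_id}:{body}".encode()).hexdigest()


def call_with_retry(operation, attempts: int = 3, base_delay: float = 0.01):
    """Retry on ConnectionError with exponential backoff; re-raise on the last attempt."""
    for attempt in range(attempts):
        try:
            return operation()
        except ConnectionError:
            if attempt == attempts - 1:
                raise
            time.sleep(base_delay * 2 ** attempt)


sink = IdempotentSink()
payload = {"ticket": 17, "action": "close"}
key = idempotency_key("wf-1", payload)
calls = {"n": 0}


def flaky_write() -> str:
    """Simulate a write that succeeds but whose first response is lost."""
    calls["n"] += 1
    sink.apply(key, payload)
    if calls["n"] == 1:
        raise ConnectionError("timeout after write")
    return "ok"


status = call_with_retry(flaky_write)
print(status, calls["n"], len(sink.applied))
```

The write is attempted twice but applied once, because the second attempt carries the same key. Without the key, the retry in this scenario would have closed the ticket twice.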

Practical implementation patterns and steps

A practical path starts with a clear problem statement and success metrics.

For the chain:

1. Map inputs to outputs.
2. Design prompts with deterministic fallbacks.
3. Add lightweight validation.
4. Enable simple routing rules.
5. Monitor prompt drift.

For the agent:

1. Define subgoals and available tools.
2. Implement memory schemas and retrieval-driven prompts.
3. Establish safety rails and escalation policies.
4. Create robust observability dashboards.
5. Run gradual experiments with controlled rollouts.

In both cases, start with a minimal viable implementation, then progressively add capabilities such as memory, tool chaining, and error handling. Real-world patterns include customer support automation, data enrichment pipelines, and cross-app task orchestration. The overarching message is to couple your architecture with measurable milestones and a clear plan for evolving from a linear chain to a more autonomous agent as the organization’s needs grow.
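Two of the chain steps, deterministic fallbacks and lightweight validation, can be sketched together. The example assumes the prompt asks the model to reply with a JSON object containing a `label` field; the allowed labels, the `FALLBACK` value, and the function name `parse_and_validate` are all hypothetical choices for illustration.

```python
import json

ALLOWED_LABELS = {"billing", "technical", "other"}
FALLBACK = {"label": "other", "source": "fallback"}


def parse_and_validate(raw: str) -> dict:
    """Accept only a JSON object with an allowed 'label'; otherwise use the fallback."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return dict(FALLBACK)          # model returned non-JSON text
    if not isinstance(data, dict):
        return dict(FALLBACK)          # valid JSON, but not an object
    label = str(data.get("label", "")).strip().lower()
    if label not in ALLOWED_LABELS:
        return dict(FALLBACK)          # unexpected or missing label
    return {"label": label, "source": "model"}


print(parse_and_validate('{"label": "Billing"}'))
print(parse_and_validate("Sure! The label is billing."))
```

Recording the `source` alongside the label gives a cheap drift signal: if the share of `fallback` results rises over time, the prompt or the model's behavior has probably changed.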

Decision framework and evaluation checklist for teams

To decide effectively between an n8n basic LLM chain and an AI agent, apply a structured framework: define the problem scope, assess variability, estimate data and tool integration complexity, and set governance requirements. Create a simple scoring rubric for criteria like autonomy, latency, cost, risk, and maintainability. Build a short list of milestones and metrics to track progress, such as time-to-value, mean-time-to-recovery, and prompt drift. Consider a phased rollout: start with a chain to validate the core workflow, then add an agent to handle cross-system orchestration and decision-making. Finally, prepare a migration plan with clear trigger points for escalation and scaling.
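A scoring rubric like the one described can be as small as a weighted average. In the sketch below, the weights and the 1 to 5 scores are placeholders chosen for illustration, not recommendations; a higher score means a better fit for your workload on that criterion.

```python
# Hypothetical weights; adjust to your team's priorities.
CRITERIA_WEIGHTS = {
    "autonomy": 0.30,
    "latency": 0.15,
    "cost": 0.20,
    "risk": 0.20,
    "maintainability": 0.15,
}


def weighted_score(scores: dict, weights: dict = CRITERIA_WEIGHTS) -> float:
    """Weighted average of per-criterion scores; every weighted criterion must be scored."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(weights[c] * scores[c] for c in weights) / total_weight


# Placeholder scores on a 1-5 scale for one imagined workload.
chain_scores = {"autonomy": 2, "latency": 5, "cost": 5, "risk": 4, "maintainability": 5}
agent_scores = {"autonomy": 5, "latency": 3, "cost": 2, "risk": 3, "maintainability": 2}

chain_total = weighted_score(chain_scores)
agent_total = weighted_score(agent_scores)
print(f"chain: {chain_total:.2f}  agent: {agent_total:.2f}")
```

Rescoring the same rubric at each milestone gives a simple, documented trigger for the migration plan: when the agent's total overtakes the chain's for a given workflow, that workflow becomes a candidate for the next rollout phase.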

Comparison

| Feature | n8n basic LLM chain | AI agent |
|---|---|---|
| Control & debugging | Straightforward, highly observable prompts and logs | Autonomous, requires centralized tracing across tools |
| Reusability | Modest reuse via templated prompts | High reuse through modular tool calls and plan templates |
| Orchestration | Linear flow with simple branching | Graph-based orchestration across services |
| Memory & state | Limited session memory | Persistent memory and context over time |
| Learning & adaptation | Prompts guide behavior; updates are manual | Agent learns from interactions and adapts plans |
| Latency & throughput | Lower latency; predictable performance | Potentially higher latency due to tool coordination |
| Cost & maintenance | Lower ongoing cost; easier maintenance | Higher ongoing cost; requires cross-team governance |
| Best for | Low-risk, fast wins, well-defined inputs | Complex workflows, multi-system automation |

Positives

- Low upfront complexity and faster time-to-value

- Easier to audit prompts and behavior

- Lightweight maintenance with clear scope

- Good for straightforward, rule-driven tasks

What's Bad

- Limited autonomy and cross-system capabilities

- Less scalable for complex workflows

- Higher manual intervention may be needed for exceptions

AI agents outperform chains for scalable automation; chains suit simple, fast wins

The Ai Agent Ops team recommends starting with a simple chain for quick wins, then evolving toward an agent when cross-system orchestration and autonomy are needed. This phased approach balances risk and speed, aligning with governance and operational realities.

Questions & Answers

What is an n8n basic LLM chain?

A basic n8n LLM chain is a lightweight, linear workflow that calls a single language model, applies simple transformations, and performs one action or a small, fixed set of actions. It’s ideal for straightforward tasks with minimal cross-service dependencies.

A basic n8n LLM chain is a simple, linear workflow that uses one AI model to handle straightforward tasks.

What defines an AI agent in this context?

An AI agent is an autonomous orchestration entity that coordinates multiple tools and services, maintains memory across interactions, and adapts its plan to achieve a goal. It handles complex workflows beyond a single prompt.

An AI agent autonomously coordinates tools and services to achieve goals across complex tasks.

Which approach is cheaper upfront?

An n8n basic LLM chain generally incurs lower upfront costs due to its simplicity and fewer moving parts. AI agents typically require more investment in tooling, memory, monitoring, and governance.

The chain is usually cheaper to start, while agents cost more upfront but can save time later.

Can you combine both approaches?

Yes. A hybrid pattern uses a chain for simple steps and an agent for orchestration and decision-making, enabling a gradual increase in automation complexity while managing risk.

You can blend both: run simple steps with a chain and bring in an agent for the tougher parts.

What are common pitfalls to avoid?

Common pitfalls include underestimating governance for agents, neglecting observability, allowing prompt drift without safeguards, and failing to plan for data retention and tool compatibility.

Watch out for prompt drift and governance gaps when moving to agents.

How do you measure success for each approach?

Define clear success metrics such as time-to-value, error rates, latency, maintenance effort, and user satisfaction. Track changes over time to determine when a transition to a more autonomous solution is warranted.

Set specific metrics like latency and error rates to judge success and guide evolution.

Key Takeaways

- Choose simplicity first when requirements are stable

- Evaluate autonomy needs before migrating to agents

- Plan governance and observability early

- Adopt a hybrid approach to balance speed and scale

- Measure value with clear milestones and metrics