LLM vs AI Agent: A Comprehensive, Team-Focused Comparison

An in-depth, objective comparison of LLM-based approaches versus AI agent frameworks, with architecture patterns, use-case mapping, trade-offs, and deployment guidance for developers and business leaders.

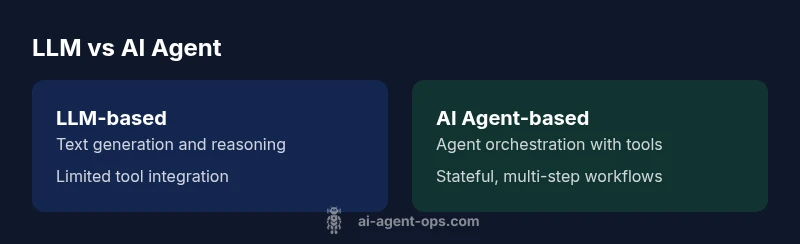

The LLM vs AI agent distinction matters because it shapes architecture, tooling, and governance. LLMs excel at understanding and generating text within a prompt, while AI agents orchestrate actions across tools, memory, and state. For teams, the right choice depends on task complexity, integration needs, and risk tolerance. This comparison maps typical use cases to architectures to help you choose the right pattern for your product roadmap.

Context: Why the LLM vs AI agent distinction matters

In real-world projects, teams that misclassify the approach risk over-engineering a simple task or missing automation opportunities. The distinction between an LLM and an AI agent is not just academic; it drives data flows, tooling choices, governance, and deployment patterns. According to Ai Agent Ops, many organizations start with a lightweight LLM prototype to validate business value, then decide whether to move to an agent-based workflow if the task requires multi-step processing, external tools, or stateful reasoning. This framing helps engineers and leaders align expectations, budget, and risk. The sections below show how choosing an LLM-based pattern or an AI agent pattern affects latency, reliability, and safety, with concrete decision criteria to guide your roadmap.

Key takeaway: the choice should be driven by task complexity, tool integration needs, and governance requirements, not by model capability alone. The LLM vs AI agent decision is about matching architecture to outcomes.

Key definitions: What is an LLM and what is an AI agent?

- LLM (large language model): A statistical model trained to predict the next token of text; at scale, this lets it follow instructions, reason within a prompt, and generate coherent, human-like responses. LLMs shine at language tasks such as text completion, classification, summarization, and creative writing. They work best when the prompt guides behavior and the knowledge they need is either stable enough to be captured in training or retrievable on demand.

- AI agent: An orchestrated system that couples an LLM with planning, memory, and external tool calls (APIs, databases, automation services) to perform multi-step tasks and affect the real world. AI agents manage state across steps, decide which tools to use, and monitor outcomes, enabling end-to-end automation and adaptive workflows.

In short, LLM vs AI agent describes a spectrum, from a language-focused model to an autonomous, tool-driven decision maker. Where a system sits on that spectrum shapes its design, reliability, and governance.
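To make the two ends of the spectrum concrete, here is a minimal Python sketch (Python 3.9+). `fake_llm` is a hypothetical, deterministic stand-in for a real model call, and the `CALL`/`FINAL` reply format and the `get_order_status` tool are invented for illustration. Plain LLM use is a single call: one prompt in, one completion out. The `Agent` class adds the pieces the definition lists: memory that persists across steps, a tool registry, and a loop in which the model chooses the next action while the surrounding code executes it.

```python
from dataclasses import dataclass, field
from typing import Callable


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a real model: deterministic replies so the sketch runs offline."""
    if prompt.startswith("Summarize:"):
        return "A one-line summary."
    if "Observation:" in prompt:
        return "FINAL Order 1234 has shipped."
    return "CALL get_order_status 1234"


@dataclass
class Agent:
    llm: Callable[[str], str]
    tools: dict[str, Callable[[str], str]]
    memory: list[str] = field(default_factory=list)  # state carried across steps
    max_steps: int = 5  # hard stop so a confused model cannot loop forever

    def run(self, task: str) -> str:
        self.memory.append(f"Task: {task}")
        for _ in range(self.max_steps):
            # The model sees the whole history and chooses the next action.
            decision = self.llm("\n".join(self.memory))
            if decision.startswith("FINAL "):
                return decision.removeprefix("FINAL ")
            _, tool_name, arg = decision.split(" ", 2)
            # The agent, not the model, executes the tool and records the result.
            observation = self.tools[tool_name](arg)
            self.memory.append(f"Action: {tool_name}({arg})")
            self.memory.append(f"Observation: {observation}")
        return "Stopped: step limit reached"


if __name__ == "__main__":
    # Plain LLM pattern: one prompt in, one completion out, nothing remembered.
    print(fake_llm("Summarize: quarterly report text"))  # A one-line summary.

    # Agent pattern: the loop calls a tool, stores the observation, then answers.
    agent = Agent(llm=fake_llm, tools={"get_order_status": lambda oid: f"order {oid}: shipped"})
    print(agent.run("Where is order 1234?"))  # Order 1234 has shipped.
```

The `max_steps` cap is one simple guard against runaway loops; a production agent would likely also need timeouts, cost budgets, and logging.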

Core differences in capability and scope

- Primary capability: LLMs excel at language understanding and generation within prompts; AI agents enable action and orchestration across tools.

- Decision latency: LLM prompts can be fast for single-turn tasks; AI agents incur planning and tool-calling overhead, which adds latency but enables multi-step outcomes.

- Context and memory: LLMs rely on the context in the current prompt, optionally supplemented by an external memory store (for example, retrieved documents); AI agents maintain explicit state across steps and sessions.

- Tool integration: A bare LLM cannot call external tools on its own; at most it emits a structured request (such as a function call) that application code must execute. AI agents integrate directly with APIs, databases, and automation platforms.

- Error handling: LLMs rely on prompt design and re-prompts; AI agents implement structured retries, monitoring, and external checks.

- Complexity and cost: LLM-only approaches are typically simpler to implement but more limited; AI agents require more infrastructure but unlock end-to-end automation. The LLM vs AI agent decision hinges on your risk tolerance and desired outcomes.
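The error-handling difference is easiest to see in code. The following sketch, assuming the model is asked to return a JSON object, shows a structured retry: each reply is checked by code outside the model (valid JSON, an object, required keys present), and on failure the error is fed back into the next attempt. `call_with_validation`, `ValidationFailed`, and the stubbed replies are hypothetical names used only for illustration.

```python
import json
from typing import Callable


class ValidationFailed(Exception):
    """Raised when no attempt produced output that passed the external check."""


def call_with_validation(llm: Callable[[str], str], prompt: str,
                         required_keys: set[str], max_attempts: int = 3) -> dict:
    errors: list[str] = []
    for attempt in range(1, max_attempts + 1):
        # On a retry, feed the previous error back so the model can correct itself.
        full_prompt = prompt if not errors else f"{prompt}\nPrevious error: {errors[-1]}"
        raw = llm(full_prompt)
        try:
            data = json.loads(raw)
        except json.JSONDecodeError as exc:
            errors.append(f"attempt {attempt}: invalid JSON ({exc.msg})")
            continue
        if not isinstance(data, dict):
            errors.append(f"attempt {attempt}: expected a JSON object")
            continue
        missing = required_keys - set(data)
        if missing:
            errors.append(f"attempt {attempt}: missing keys {sorted(missing)}")
            continue
        return data  # passed every external check
    raise ValidationFailed("; ".join(errors))


if __name__ == "__main__":
    # Stubbed model replies: malformed first, incomplete second, valid third.
    replies = iter(['not json', '{"label": "refund"}',
                    '{"label": "refund", "confidence": 0.9}'])
    result = call_with_validation(lambda p: next(replies),
                                  "Classify this ticket. Reply as JSON.",
                                  {"label", "confidence"})
    print(result)  # {'label': 'refund', 'confidence': 0.9}
```

Raising after the last attempt, rather than returning partial output, lets the caller escalate to a fallback or a human instead of acting on unchecked data.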

Architectural patterns: standalone LLMs vs agentic systems

- Pattern A: LLM-based with prompts (static reasoning). A single model handles interpretation, classification, and generation, guided by carefully crafted prompts. Maintenance is lighter, and behavior changes mainly through prompt revisions or model upgrades.

- Pattern B: Agent pattern (orchestrated workflows). An agent framework combines an LLM with a planner, memory module, and tool adapters. It executes multi-step tasks, calls external services, and stores state for continuity.

- Pattern C: Hybrid approach. LLMs handle language tasks while a lightweight orchestrator manages state and tool calls, balancing simplicity with automation.
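A minimal sketch of the hybrid pattern, assuming a customer-support chat where an order lookup is the only tool: plain code decides when a tool is needed (here, a regular expression that spots an order number) and keeps per-session history, while the model is only asked to write the reply. `HybridOrchestrator`, `lookup_order`, and the regex rule are hypothetical.

```python
import re
from typing import Callable

# Hypothetical rule: a message mentioning "order 1234" or "order #1234" needs a lookup.
ORDER_ID = re.compile(r"\border\s+#?(\d+)", re.IGNORECASE)


class HybridOrchestrator:
    """Deterministic code owns state and tool calls; the LLM only phrases the reply."""

    def __init__(self, llm: Callable[[str], str], lookup_order: Callable[[str], str]):
        self.llm = llm
        self.lookup_order = lookup_order
        self.sessions: dict[str, list[str]] = {}  # session id -> conversation history

    def handle(self, session_id: str, message: str) -> str:
        history = self.sessions.setdefault(session_id, [])
        history.append(f"User: {message}")
        context = ""
        match = ORDER_ID.search(message)
        if match:  # the rule, not the model, triggers the tool call
            context = f"Order data: {self.lookup_order(match.group(1))}\n"
        reply = self.llm(context + "\n".join(history))
        history.append(f"Assistant: {reply}")
        return reply


if __name__ == "__main__":
    # Stub model: mentions shipping only when order data was supplied.
    stub_llm = lambda prompt: ("Your order has shipped." if "Order data:" in prompt
                               else "How can I help?")
    bot = HybridOrchestrator(stub_llm, lookup_order=lambda oid: f"{oid}: shipped")
    print(bot.handle("s1", "Hi"))                     # How can I help?
    print(bot.handle("s1", "Where is order #1234?"))  # Your order has shipped.
```

Because tool use is triggered by a fixed rule rather than by the model, behavior stays predictable and easy to audit; the cost is that new tool triggers must be coded by hand, which is the point at which teams often move toward a full agent pattern.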

Choosing between these patterns depends on task breadth, external dependencies, latency budgets, and governance requirements. The LLM vs AI agent decision should reflect operational realities, not just model capability.

Use-case mapping: when to choose each approach

- LLM-based solutions: Content creation, chatbots focused on natural language, routing by text analysis, and quick prototype pilots where external tool usage is minimal.

- AI agent-based solutions: End-to-end workflows, complex decision making with external tools, data pipelines, automated customer journeys, and scenarios requiring memory and stateful reasoning.

- Hybrid scenarios: When you need fast language tasks with occasional tool calls, a hybrid offers low friction and a path to gradual automation. Base the LLM vs AI agent decision on how critical end-to-end automation is and how much tool integration the task requires.
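The mapping above can be written down as a small decision helper, which makes a team's criteria explicit and reviewable. This is an illustrative encoding of the heuristics in this section, not a standard rule; the `TaskProfile` fields and the thresholds (for example, treating any need for persistent state as a signal for an agent) are assumptions to adjust to your own context.

```python
from dataclasses import dataclass
from enum import Enum


class Pattern(Enum):
    LLM = "LLM-based"
    HYBRID = "Hybrid"
    AGENT = "AI agent"


@dataclass(frozen=True)
class TaskProfile:
    multi_step: bool   # several dependent steps to reach the outcome?
    tool_calls: str    # "none", "occasional", or "frequent"
    needs_state: bool  # must memory persist across steps or sessions?


def recommend(task: TaskProfile) -> Pattern:
    """Hypothetical heuristic mirroring the use-case mapping; tune it to your own criteria."""
    if task.tool_calls not in {"none", "occasional", "frequent"}:
        raise ValueError(f"unknown tool_calls value: {task.tool_calls!r}")
    if task.multi_step or task.needs_state or task.tool_calls == "frequent":
        return Pattern.AGENT
    if task.tool_calls == "occasional":
        return Pattern.HYBRID
    return Pattern.LLM


if __name__ == "__main__":
    summarizer = TaskProfile(multi_step=False, tool_calls="none", needs_state=False)
    faq_bot = TaskProfile(multi_step=False, tool_calls="occasional", needs_state=False)
    onboarding = TaskProfile(multi_step=True, tool_calls="frequent", needs_state=True)
    for name, profile in [("summarizer", summarizer), ("faq_bot", faq_bot), ("onboarding", onboarding)]:
        print(name, recommend(profile).value)
    # summarizer LLM-based
    # faq_bot Hybrid
    # onboarding AI agent
```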

Practical trade-offs: cost, latency, governance

- Cost considerations: LLM-based patterns typically incur lower compute per call, but costs can rise at scale as prompts grow longer and more complex. Agent-based architectures add tooling and orchestration costs plus potential tool usage fees, but can reduce manual handoffs and errors.

- Latency and throughput: Simple LLM prompts are often faster, while agent-based workflows may see variable latency due to API calls and multi-step planning. Reduce it by running independent steps in parallel where possible.

- Governance and safety: AI agents introduce more governance needs (tool access, audit trails, action monitoring). Plan for access control, runbooks, safety nets, and continuous validation. Every LLM vs AI agent evaluation should include a risk assessment and a check for compliance alignment.
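The latency advice to parallelize independent steps can look like the following `asyncio` sketch. The three lookups are hypothetical tools, with `asyncio.sleep` standing in for network latency. Because none depends on another's result, `asyncio.gather` runs them concurrently, so the wait is roughly that of the slowest call rather than the sum of all three.

```python
import asyncio
import time


# Hypothetical tool calls; asyncio.sleep stands in for network latency.
async def fetch_customer(customer_id: str) -> dict:
    await asyncio.sleep(0.1)
    return {"id": customer_id, "tier": "gold"}


async def fetch_orders(customer_id: str) -> list:
    await asyncio.sleep(0.1)
    return ["A-1", "A-2"]


async def fetch_tickets(customer_id: str) -> list:
    await asyncio.sleep(0.1)
    return ["T-9"]


async def gather_context(customer_id: str) -> dict:
    # These lookups do not depend on one another, so they run concurrently.
    customer, orders, tickets = await asyncio.gather(
        fetch_customer(customer_id),
        fetch_orders(customer_id),
        fetch_tickets(customer_id),
    )
    # A dependent step (for example, the LLM call that drafts a reply) would start here.
    return {"customer": customer, "orders": orders, "tickets": tickets}


if __name__ == "__main__":
    start = time.perf_counter()
    context = asyncio.run(gather_context("c-42"))
    elapsed = time.perf_counter() - start
    # Expect roughly 0.1 s here; awaiting the three calls one by one would take about 0.3 s.
    print(context["orders"], f"{elapsed:.2f}s")
```

Only truly independent steps belong in the same `gather`; anything that needs an earlier result must still wait for it.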

Implementation considerations: data, tooling, and integration

- Data sources: LLMs need reliable inputs and retrieval methods; AI agents require structured data flows, connectors, and reliable state stores. Consider retrieval-augmented generation for up-to-date information.

- Tooling landscape: LLMs benefit from prompt-tuning and adapters; AI agents rely on tool registries, adapters, and orchestration frameworks. Both require monitoring and observability.

- Memory and state: Decide what to store (short-term context vs long-term state) and how to persist it securely. Both LLM and AI agent implementations benefit from clear data governance and privacy controls.

- Observability: Instrument prompts, tool calls, and decision points for traceability and debugging. Build dashboards that reflect end-to-end task outcomes and safety checks.
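One lightweight starting point for observability is to wrap every tool call (and, in the same way, every model call) so that its name, arguments, duration, and outcome are recorded. The sketch below keeps records in an in-memory list; `traced`, `TRACE`, and `issue_refund` are hypothetical, and a real deployment would more likely export these records to a logging or tracing backend such as OpenTelemetry.

```python
import functools
import time
from typing import Any, Callable

# In-memory sink for illustration; a real system would export these records.
TRACE: list[dict[str, Any]] = []


def traced(step_name: str) -> Callable:
    """Record name, arguments, duration, and outcome of each call to the wrapped function."""
    def decorator(func: Callable) -> Callable:
        @functools.wraps(func)
        def wrapper(*args: Any, **kwargs: Any) -> Any:
            record: dict[str, Any] = {"step": step_name, "args": args, "kwargs": kwargs}
            start = time.perf_counter()
            try:
                result = func(*args, **kwargs)
                record["status"] = "ok"
                return result
            except Exception as exc:
                record["status"] = f"error: {exc}"
                raise  # tracing must not swallow the failure
            finally:
                record["duration_ms"] = (time.perf_counter() - start) * 1000
                TRACE.append(record)
        return wrapper
    return decorator


@traced("tool.refund")
def issue_refund(order_id: str, amount: float) -> str:
    """Hypothetical tool used only to demonstrate tracing."""
    if amount <= 0:
        raise ValueError("amount must be positive")
    return f"refunded {amount:.2f} on {order_id}"


if __name__ == "__main__":
    issue_refund("1234", 20.0)
    try:
        issue_refund("1234", -5)
    except ValueError:
        pass
    for rec in TRACE:
        print(rec["step"], rec["status"])
    # tool.refund ok
    # tool.refund error: amount must be positive
```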

Platform and ecosystem implications: safety, standards, and scalability

- Safety and ethics: Both patterns demand guardrails against hallucinations, biased outputs, and unsafe actions. Implement content filters, tool-level validation, and human-in-the-loop where appropriate.

- Standards and interoperability: Adopt modular architectures with clear boundaries between language models, planners, and tools. Favor open APIs and standardized tool schemas to ease migration and upgrades.

- Scalability: Design for horizontal scaling, load shedding during outages and traffic spikes, and versioning of tools and policies. The LLM vs AI agent decision should account for the platform's ability to evolve without breaking critical workflows.
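Tool-level validation, human-in-the-loop review, and tool versioning can all be enforced at a single choke point that every agent tool call passes through. In this sketch, assuming tools take keyword arguments, `ToolGateway` refuses tools that are not registered (an allow-list), checks arguments before execution, pins a version string on each tool, and asks a reviewer before high-impact actions. All names, including the `approver` callback that stands in for a real review workflow, are hypothetical.

```python
from dataclasses import dataclass
from typing import Any, Callable, Optional


@dataclass(frozen=True)
class ToolSpec:
    name: str
    version: str                               # pinned so upgrades are explicit and auditable
    func: Callable[..., Any]
    validate: Callable[[dict], Optional[str]]  # returns an error message, or None if args are fine
    requires_approval: bool = False            # route high-impact actions to a human


class ToolGateway:
    def __init__(self, approver: Callable[[str, dict], bool]):
        self.approver = approver  # hypothetical human-in-the-loop hook
        self.registry: dict[str, ToolSpec] = {}

    def register(self, spec: ToolSpec) -> None:
        self.registry[spec.name] = spec

    def call(self, name: str, args: dict) -> Any:
        spec = self.registry.get(name)
        if spec is None:  # allow-list: unknown tools are never executed
            raise PermissionError(f"tool {name!r} is not on the allow-list")
        error = spec.validate(args)
        if error:
            raise ValueError(f"{name}@{spec.version}: {error}")
        if spec.requires_approval and not self.approver(name, args):
            raise PermissionError(f"{name}@{spec.version}: reviewer rejected the call")
        return spec.func(**args)


if __name__ == "__main__":
    # Stand-in reviewer: approves refunds up to 100, rejects larger ones.
    gateway = ToolGateway(approver=lambda name, args: args["amount"] <= 100)
    gateway.register(ToolSpec(
        name="refund",
        version="1.2.0",
        func=lambda order_id, amount: f"refunded {amount} on {order_id}",
        validate=lambda a: None if a.get("amount", 0) > 0 else "amount must be positive",
        requires_approval=True,
    ))
    print(gateway.call("refund", {"order_id": "1234", "amount": 50}))  # refunded 50 on 1234
```

Validation runs before approval so that reviewers only see well-formed requests.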

Migration path and practical guidance for teams

- Phase 1: Pilot with LLMs. Start with a lightweight LLM-based prototype to establish value and gather data. Set clear success criteria and define what you expect to automate later.

- Phase 2: Introduce agent capabilities. Add a planner, memory, and tool adapters to support end-to-end workflows where needed. Monitor performance and governance rigorously.

- Phase 3: Optimize and govern. Implement standardized tool access, runbooks, and compliance checks. Refine prompts, memory usage, and tracing to reduce risk and improve reliability. Revisit the LLM vs AI agent decision as your product matures.

Comparison

| Feature | LLM-based approach | AI agent-based approach |
|---|---|---|
| Primary capability | Text understanding and generation within prompts | Orchestrated actions with tools, memory, and state management |
| Decision latency | Low for single-turn prompts | Higher due to planning and tool calls, in exchange for end-to-end outcomes |
| Context retention | Prompt-bound, optionally supplemented by an external memory store | Explicit, persistent state across steps |
| Tool integration | Limited; any tool request must be executed by surrounding code | Rich tool ecosystem integration (APIs and automation) |
| Error handling | Re-prompting and prompt-design fallbacks | Structured retries, monitoring, and external validation |
| Complexity | Simpler to implement for narrow tasks | Higher upfront complexity in service of broader automation goals |
| Cost | Lower per-call compute for simple tasks | Potentially higher total cost due to orchestration and tools |
| Best for | Content generation, NLP classification, quick prototyping | End-to-end automation, multi-step processes, tool-driven tasks |

Positives

- Clarifies decision criteria for teams

- Helps align architecture with business goals

- Highlights trade-offs clearly

- Supports faster, evidence-based decisions

- Encourages modular, reusable design

Negatives

- Requires careful scoping to avoid oversimplification

- Implementation complexity can be high for large workflows

- Tooling and governance overhead may increase time-to-value

- Risk of integration fragility if external services change

AI agent-based approaches are generally favored for end-to-end workflows; LLM-based patterns fit simpler, language-centric tasks.

For multi-step automation and tool integration, choose an agent pattern. For rapid prototyping and text-centric tasks, an LLM-based approach may be best. Ai Agent Ops supports teams in selecting the architecture that best aligns with their governance requirements and risk tolerance.

Questions & Answers

What is the fundamental difference between an LLM and an AI agent?

An LLM is a probabilistic language model that generates text and performs prompt-driven reasoning. An AI agent combines an LLM with a planner, memory, and tool calls to perform actions and manage state across steps.

LLMs handle language; AI agents act and automate using tools.

When should I choose an LLM-based solution over an AI agent?

Use LLM-based solutions for straightforward language tasks like drafting, summarization, or simple classification. If you require multi-step workflows, external tool calls, or stateful reasoning, an AI agent pattern is typically more appropriate.

Choose LLMs for simple language tasks; agents for complex automation.

What governance and safety considerations exist for AI agents?

AI agents interact with external tools and data sources, creating governance and risk considerations. Implement access controls, auditing, runbooks, safety checks, and human-in-the-loop where appropriate.

Plan safety, auditing, and control for agent-based systems.

Can an LLM serve as an AI agent with plugins?

Yes, an LLM can drive agent-like behavior when augmented with tooling and orchestration. This requires careful integration and risk management to ensure reliable, safe outcomes.

Yes, with the right tooling, but it isn’t automatic.

Do AI agents require more infrastructure?

AI agents typically require orchestration, state management, and tool adapters, which adds architectural and operational overhead but enables end-to-end automation.

Often, yes: agents involve more infrastructure than a standalone LLM.

How should I measure success for LLM and AI agent implementations?

Define end-to-end goals (task completion, latency, accuracy, safety compliance) and track outcomes across the full workflow, not just model quality. Use both quantitative and qualitative signals.

Measure end-to-end impact, not just model stats.
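As one way to put end-to-end measurement into practice, the sketch below summarizes per-run records into a task completion rate, a 95th-percentile latency (nearest-rank method), and a count of safety violations. The `RunRecord` fields and metric choices are assumptions drawn from the goals listed in the answer above; qualitative signals such as user feedback would sit alongside them.

```python
import math
from dataclasses import dataclass


@dataclass
class RunRecord:
    completed: bool         # did the whole workflow reach its goal?
    latency_s: float        # end-to-end wall-clock time, not just model time
    safety_violations: int  # e.g. blocked, rolled-back, or escalated actions


def summarize(runs: list[RunRecord]) -> dict:
    if not runs:
        raise ValueError("no runs to summarize")
    latencies = sorted(r.latency_s for r in runs)
    p95_index = math.ceil(0.95 * len(latencies)) - 1  # nearest-rank percentile
    return {
        "completion_rate": sum(r.completed for r in runs) / len(runs),
        "p95_latency_s": latencies[p95_index],
        "safety_violations": sum(r.safety_violations for r in runs),
    }


if __name__ == "__main__":
    runs = [
        RunRecord(completed=True, latency_s=1.2, safety_violations=0),
        RunRecord(completed=True, latency_s=0.8, safety_violations=0),
        RunRecord(completed=False, latency_s=3.5, safety_violations=1),
        RunRecord(completed=True, latency_s=1.0, safety_violations=0),
    ]
    print(summarize(runs))
    # {'completion_rate': 0.75, 'p95_latency_s': 3.5, 'safety_violations': 1}
```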

What are common pitfalls when deploying AI agents?

Over-automation without governance, fragile tool integrations, and insufficient monitoring can undermine reliability. Start with a narrow scope, enforce strong observability, and progressively expand automation with safety nets.

Be mindful of governance and monitoring as you scale.

Key Takeaways

- Map tasks to architecture before development

- Prefer AI agents for multi-step workflows requiring tooling

- Use LLMs for fast prototyping and language tasks

- Plan governance and safety early