LLM vs AI Agent vs Agentic AI: A Practical Comparison

A rigorous, practitioner-focused comparison of LLMs, AI agents, and agentic AI, with definitions, use cases, trade-offs, governance, and practical guidance for developers, product teams, and business leaders.

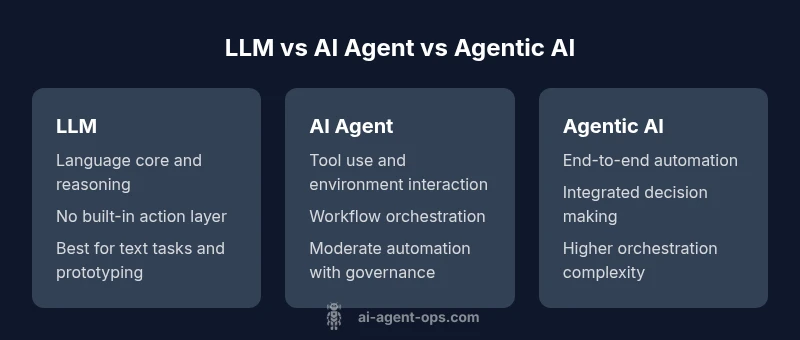

When you compare LLMs, AI agents, and agentic AI, you are evaluating three levels of capability. LLMs excel at language understanding and generation; AI agents extend those skills with tool use and workflow orchestration; agentic AI aims to automate end-to-end decisions. According to Ai Agent Ops, the best practice is a layered approach: start with an LLM, add an orchestrating agent, then introduce agentic AI for full automation.

LLM, AI Agent, and Agentic AI: Definitions and Boundaries

Across the spectrum from LLM to AI agent to agentic AI, three archetypes define how we approach language, action, and automation. A large language model (LLM) is primarily a language engine: it excels at understanding prompts, generating coherent text, and supporting reasoning, though its outputs are probabilistic rather than guaranteed. An AI agent goes a step further by using the LLM's capabilities to perform actions in the real or simulated world: calling APIs, querying databases, and making decisions within predefined rules. Agentic AI goes further still by embedding automated decision-making and orchestration across tasks, enabling end-to-end workflows that span data access, computation, and action. For developers and leaders, this taxonomy helps plan risk, governance, and integration strategy. The three terms are not interchangeable labels; they represent a maturity ladder from language-first tooling to automated operations.
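The three layers can be illustrated with a minimal Python sketch. Everything in it is hypothetical: `llm` is a stub standing in for a real model call, the two tools return canned strings, and the caller names the tool directly (in a real agent the model would usually choose it). It is meant only to show where the boundaries sit, not how any particular framework works.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical stand-in for a real model call; it only echoes a canned reply.
def llm(prompt: str) -> str:
    return f"[model reply to: {prompt}]"

# Layer 1: LLM only. Text in, text out; nothing outside the text domain changes.
def draft_summary(text: str) -> str:
    return llm(f"Summarize: {text}")

# Layer 2: AI agent. Calls one tool from a predefined registry, then uses the
# LLM to phrase the result. The registry is the "predefined rules" boundary.
TOOLS: Dict[str, Callable[[str], str]] = {
    "lookup_order": lambda order_id: f"order {order_id}: shipped",
    "lookup_stock": lambda sku: f"sku {sku}: 12 in stock",
}

def run_agent(tool_name: str, argument: str) -> str:
    if tool_name not in TOOLS:
        raise ValueError(f"tool not allowed: {tool_name}")
    observation = TOOLS[tool_name](argument)
    return llm(f"Explain to the user: {observation}")

# Layer 3: agentic workflow. Chains several agent steps end to end and
# returns the result of each step in order.
def run_workflow(steps: List[Tuple[str, str]]) -> List[str]:
    results = []
    for tool_name, argument in steps:
        results.append(run_agent(tool_name, argument))
    return results
```

Calling `run_workflow([("lookup_order", "A1"), ("lookup_stock", "S9")])` runs two agent steps in sequence, while `run_agent("delete_db", "x")` raises because the tool is not in the registry.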

The Ai Agent Ops Perspective on Maturity

From an architectural and governance standpoint, the Ai Agent Ops framework emphasizes layered capabilities, clear interfaces, and explicit handoffs between stages. In this view, the distinction between LLM, AI agent, and agentic AI is not just about capability but about control, observability, and reliability. Early adoption tends to emphasize robust language tasks with safe prompts; subsequent iterations introduce tool use and workflow integration; final stages pursue holistic automation with safety rails, monitoring, and auditing. Keeping this progression in mind helps teams scope experiments, allocate budgets, and design governance policies that scale with risk.

Why This Distinction Matters for Projects

Projects that conflate LLMs, AI agents, and agentic AI risk scope creep and governance gaps. Labeling a system simply as an 'AI agent' without specifying tool integration, decision boundaries, or end-to-end responsibility can lead to ambiguous ownership and fragile operations. Conversely, a well-structured plan that respects the hierarchy from LLM to AI agent to agentic AI enables phased investments, measurable milestones, and clearer success metrics. In practical terms, teams should map capabilities to requirements: language quality, tool integration, decision automation, and end-to-end process governance.
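One way to make the capability-to-requirement mapping concrete is a small helper that returns the lowest tier able to satisfy a set of requirements. The field names and the mapping rules below are assumptions made for illustration; a real project would refine them against its own risk and governance policies.

```python
from dataclasses import dataclass

@dataclass
class Requirements:
    # Hypothetical flags, one per requirement area named in the text.
    needs_tool_integration: bool = False    # must call APIs, databases, or other tools
    needs_decision_automation: bool = False # must decide within predefined rules
    needs_end_to_end_process: bool = False  # must own a multi-step process with governance

def minimum_tier(req: Requirements) -> str:
    """Return the lowest tier that covers the stated requirements."""
    if req.needs_end_to_end_process:
        return "agentic AI"
    if req.needs_tool_integration or req.needs_decision_automation:
        return "AI agent"
    # Language quality alone is served by the model itself.
    return "LLM"
```

For example, `minimum_tier(Requirements(needs_tool_integration=True))` returns `"AI agent"`, which signals that tool ownership and decision boundaries need to be specified before the project starts.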

Feature Comparison

| Feature | LLM | AI agent | Agentic AI |
|---|---|---|---|
| Definition | A probabilistic model trained to predict next tokens for natural language tasks. | A software agent that uses language models and tools to perform tasks via actions in an environment. | A system that integrates language models, agents, and automation to execute end-to-end tasks. |
| Primary Function | Generate and understand text | Coordinate tools and actions to complete workflows | Orchestrate language, actions, and decisions across tasks |
| Strengths | Strong language generation and comprehension | Direct task execution through tool use | End-to-end automation with governance and adaptability |
| Limitations | Limited action outside the text domain without tools | Requires reliable tooling and safety controls | Increased complexity and risk with integration |
| Typical Use Cases | Chatbots, content drafting, summarization | Workflow automation, API calls, decision making within defined schemas | Operationalize AI across domains with autonomous reasoning |
| Best For | Pure language tasks and prototyping | Automation of repeatable tasks with external tools | End-to-end automation with dynamic decision-making |

Positives

- Clarifies roles and responsibilities across AI layers

- Supports modular architecture and governance

- Enables safer, auditable automation when scaled

- Offers clear upgrade paths from language to action

What's Bad

- Adds integration and maintenance complexity

- Requires robust tooling and monitoring

- Risk of cascading failures if orchestration is weak

Agentic AI provides the most comprehensive automation path when properly designed; however, organizations should master LLMs and AI agents first.

Start with LLMs, validate with AI agents, then combine them into agentic AI for end-to-end workflows. The layered approach reduces risk and makes governance easier to apply at each stage.

Questions & Answers

What is the difference between an LLM and an AI agent?

An LLM focuses on language understanding and generation. An AI agent uses an LLM, plus tools and workflows, to perform tasks and make decisions within an environment.

An LLM handles language tasks. An AI agent uses that capacity to act through tools and workflows.

What exactly is agentic AI?

Agentic AI combines language models, automated decision-making, and action orchestration to complete end-to-end tasks with minimal human input under governance controls.

Agentic AI blends language, decisions, and actions into automated workflows.

When should I start with an LLM vs an AI agent?

Begin with an LLM for language tasks. Add an AI agent when you need reliable tool use and structured workflows, then consider agentic AI for end-to-end automation.

Start with language tasks, then add tools, and finally automate end-to-end.

What are the main risks?

Risks include safety and governance gaps, data handling concerns, integration complexity, and the potential for cascading failures if orchestration is not well designed.

Risks include safety, data handling, and system complexity.
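The cascading-failure risk can be illustrated with a small orchestration sketch. It assumes a workflow is a list of named step functions that each take and return a state dictionary (a hypothetical shape, not any framework's API). The runner stops at the first failure and records an audit trail, so later steps never act on bad state.

```python
from typing import Callable, Dict, List, Tuple

Step = Tuple[str, Callable[[Dict], Dict]]

def run_pipeline(steps: List[Step], state: Dict) -> Tuple[Dict, List[str]]:
    """Run steps in order; halt at the first failure and return an audit log."""
    audit: List[str] = []
    for name, step in steps:
        try:
            # Pass a shallow copy so a failing step cannot half-update
            # the top-level keys of the last good state.
            new_state = step(dict(state))
        except Exception as exc:
            audit.append(f"{name}: FAILED ({exc}); halting")
            break
        state = new_state
        audit.append(f"{name}: ok")
    return state, audit
```

If a validation step raises, the action step after it never runs, and the returned state is the last one that completed successfully.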

How do you measure success?

Define metrics for reliability, latency, accuracy, and end-to-end throughput; monitor governance controls and user impact to ensure ongoing value.

Track reliability, speed, accuracy, and overall impact.
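As a rough sketch of how these metrics might be computed from run logs, the snippet below assumes each run is recorded with a success flag, a correctness flag, and a latency. The `RunRecord` fields and the metric definitions are illustrative assumptions; teams will need to define reliability and accuracy in terms that fit their own workflows.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List

@dataclass
class RunRecord:
    succeeded: bool    # the run completed without error
    correct: bool      # a reviewer or automated check marked the output correct
    latency_s: float   # wall-clock seconds for the run

def summarize(runs: List[RunRecord], window_s: float) -> Dict[str, float]:
    """Summarize runs observed over a window of window_s seconds."""
    if not runs:
        raise ValueError("no runs to summarize")
    if window_s <= 0:
        raise ValueError("window_s must be positive")
    ok = [r for r in runs if r.succeeded]
    return {
        # Share of runs that completed.
        "reliability": len(ok) / len(runs),
        # Share of completed runs judged correct.
        "accuracy": (sum(r.correct for r in ok) / len(ok)) if ok else 0.0,
        # Average latency across all runs, including failed ones.
        "mean_latency_s": mean(r.latency_s for r in runs),
        # Completed runs per second over the observation window.
        "throughput_per_s": len(ok) / window_s,
    }
```

Tracking these numbers per stage (LLM only, agent, agentic workflow) makes it easier to see whether each added layer improves outcomes or only adds latency and failure modes.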

Can I evolve gradually from LLM to agentic AI?

Yes. Start with a solid LLM, validate outputs, add an AI agent for orchestration, and finally integrate agentic AI with strong governance and monitoring.

Yes—step by step, with checks at each stage.

Key Takeaways

- Define clear roles: LLMs for language, agents for action

- Use a staged rollout from language to orchestration to agentic AI

- Prioritize governance and safety at every layer

- Invest in tool libraries and standardized interfaces

- Measure outcomes with process and quality metrics