AI Agent and LLM: A Side-by-Side Comparison for 2026

This in-depth comparison examines AI agent and LLM architectures, outlining key differences, use cases, costs, and best practices for deploying agentic AI workflows across modern organizations.

In most modern AI workflows, pairing an AI agent with an LLM delivers stronger autonomy and reliability than either component alone. The LLM provides planning, reasoning, and natural-language understanding, while the AI agent handles tool orchestration, data access, and guarded action execution. The best setups are modular, auditable, and designed for governance, enabling scalable experimentation and measurable ROI.

Why AI agents and LLMs matter in modern automation

In enterprise automation, combining an AI agent with an LLM is more than a trend; it is a practical pattern that accelerates execution while preserving human oversight. The AI agent acts as the conductor, translating goals into concrete actions across tools, APIs, databases, and human-in-the-loop checkpoints. The LLM supplies reasoning and natural-language interaction: it interprets context, explains decisions, and adapts prompts as conditions change. For developers and product teams, this pairing reduces the friction between planning and doing, enabling faster cycles from concept to production. According to Ai Agent Ops, organizations that adopt an integrated agent-LLM stack report improved throughput, clearer ownership, and more predictable orchestration than those that rely on standalone components. The philosophy is simple: let the LLM craft plans and interpretations, and let the agent translate those plans into reliable actions with proper safeguards. When designed well, this architecture supports scalable automation, auditability, and resilience across evolving business needs. Governance matters from the start: without guardrails, even the most capable system can drift or take unsafe actions. The objective is to combine language understanding with disciplined action, delivering outcomes that are auditable, repeatable, and adaptable.

Architectural patterns: how the two components fit together

Architectural patterns for AI agent and LLM pairings fall into a few recognizable models. The most common is the planner-agent pattern: the LLM acts as a planner that produces a sequence of actions, and the AI agent executes those actions through tools and services. A second approach is plugin-driven orchestration, where the LLM issues requests that are dispatched through a tool-connector layer with strict contracts, rate limits, and failure handling. A third pattern uses a memory-capable agent that stores context across sessions, enabling continuity across tasks. In all three, a clear boundary between planning (LLM) and execution (agent) helps isolate failures and improve testability. The system should include a tool catalog with safe wrappers, access control, and observability hooks. Quality attributes to optimize include latency, throughput, data privacy, and explainability. The design should favor modular adapters, versioned prompts, and a policy layer that enforces guardrails. Retrieval-augmented generation (RAG) can supplement the LLM's context with domain data, while persistent state stores provide continuity. The goal is to balance flexibility with reliability, so teams can iterate without sacrificing safety or governance.
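The planner-agent split described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a reference implementation: `Action`, `stub_planner`, `TOOL_CATALOG`, and `run_agent` are hypothetical names, and the planner is a hard-coded stand-in for a real LLM call.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Action:
    tool: str  # name of a tool in the catalog
    arg: str   # single string argument, kept simple for the sketch


def stub_planner(goal: str) -> List[Action]:
    """Hypothetical stand-in for an LLM call: returns a fixed two-step plan."""
    return [Action("lookup", goal), Action("summarize", goal)]


# Tool catalog (assumed): the only functions the agent is allowed to call.
TOOL_CATALOG: Dict[str, Callable[[str], str]] = {
    "lookup": lambda arg: f"records for {arg}",
    "summarize": lambda arg: f"summary of {arg}",
}


def run_agent(goal: str, planner: Callable[[str], List[Action]]) -> List[str]:
    """Execute each planned action, rejecting tools outside the catalog."""
    results = []
    for action in planner(goal):
        tool = TOOL_CATALOG.get(action.tool)
        if tool is None:
            # Planning and execution are separate, so a bad plan fails here
            # instead of reaching an external system.
            raise ValueError(f"tool not in catalog: {action.tool}")
        results.append(tool(action.arg))
    return results
```

Calling `run_agent("open invoices", stub_planner)` runs both planned steps in order. Because the planner is passed in as a function, it can be swapped for a real model client or a test double without touching the executor.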

Use cases: when to deploy AI agents with an LLM

Organizations deploy AI agents with an LLM in scenarios that require automated decision making, cross-system coordination, and natural-language interaction. Example use cases include customer support assistants that autonomously pull data from CRM and messaging platforms, procurement workflows that negotiate terms by querying supplier APIs, and data pipelines that orchestrate data quality checks and alerting. In product development, this pairing accelerates experimentation by turning user stories into runnable tasks, tracking progress, and surfacing the rationale for decisions. In finance and operations, agents monitor dashboards, generate summaries, and trigger corrective actions when anomalies appear. Another compelling use case is research assistance: the LLM synthesizes literature while the agent fetches papers, runs analyses, and cites sources with an auditable log. The success of any use case hinges on domain-specific tool wrappers, robust error handling, and clear ownership. Start small with a well-scoped task, then expand to end-to-end workflows as confidence grows. The Ai Agent Ops team recommends mapping constraints, data sources, and decision boundaries before scaling, to ensure alignment with business goals.

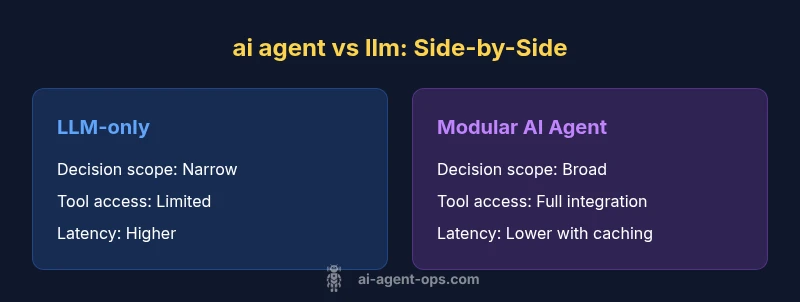

Key differentiators: what sets apart LLM-driven vs agent-driven approaches

The most important differentiator is where decisions are made and how actions are executed. In an LLM-driven approach, language understanding guides task selection, but the system relies on external scripts or human input to act. In an agent-driven setup, an orchestrator translates decisions into concrete actions across tools, services, and data stores, with explicit feedback loops. Observability and safety differ as well: agent-driven architectures typically produce richer action trails and auditable logs, while LLM-driven systems depend on prompt hygiene and external monitors. Latency and cost trade-offs also matter: LLM-driven pipelines can be leaner to bootstrap but may incur repeated model calls, whereas modular agents amortize cost through reuse and caching. Finally, governance maturity tends to be higher with agents because contracts, limits, and compliance checks can be enforced at the tool level. In practice, most teams move toward a hybrid model that uses LLM reasoning for planning and an agent for execution, combined with a dedicated governance layer.

Governance, safety, and risk considerations

Safety and governance dominate the early phases of any AI agent and LLM deployment. Key concerns include data privacy, access control, prompt leakage, and the risk of cascading failures when a single misstep propagates across tools. A robust approach combines policy-driven prompts, access-scoped tool catalogs, and continuous monitoring. Implement guardrails such as action limits, kill-switches, and anomaly detectors that flag unusual prompts or tool calls. Observability is essential: maintain end-to-end logs, versioned tool wrappers, and explainability hooks so stakeholders can audit decisions. Align the architecture with the organization's risk appetite and with relevant standards, such as NIST guidance on AI risk management and applicable privacy requirements. Training and testing should emphasize edge cases, adversarial prompts, and red-team exercises. Finally, ensure governance keeps pace with deployment: as capabilities evolve, so should policies, contracts, and review processes.
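As a rough illustration of the guardrails mentioned above, the sketch below combines an access-scoped tool list, an action limit, and a kill-switch in one check that runs before every tool call. The names `Guardrails` and `GuardrailViolation` are assumptions made for this example; a production policy layer would also need logging and anomaly detection.

```python
class GuardrailViolation(Exception):
    """Raised when a tool call is blocked by policy."""


class Guardrails:
    def __init__(self, max_actions: int, allowed_tools: set):
        self.max_actions = max_actions          # action limit per run
        self.allowed_tools = set(allowed_tools)  # access-scoped tool catalog
        self.actions_taken = 0
        self.killed = False

    def kill(self) -> None:
        """Kill-switch: every later check fails until a new instance is made."""
        self.killed = True

    def check(self, tool_name: str) -> None:
        """Call before each tool invocation; raises if the call is not allowed."""
        if self.killed:
            raise GuardrailViolation("kill switch engaged")
        if tool_name not in self.allowed_tools:
            raise GuardrailViolation(f"tool not permitted: {tool_name}")
        if self.actions_taken >= self.max_actions:
            raise GuardrailViolation("action limit reached")
        self.actions_taken += 1
```

An executor would call `guard.check(tool_name)` immediately before dispatching each action, so a blocked call never reaches the underlying tool.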

Performance, cost, and scalability considerations

Performance hinges on latency, throughput, and reliability. In practice, an LLM-first pattern may incur higher round-trip times if planning and execution occur in separate phases, while an agent-driven pattern can achieve higher throughput through parallelization and caching. Cost depends on API usage, tool subscriptions, and the maintenance overhead of connectors. To optimize, adopt persistent state stores, reusable tool wrappers, and staged rollout plans that test reliability under load. Scalability requires careful memory management, prompt versioning, and rate limiting to prevent overload. Consider hybrid configurations that keep critical decisions under strict policy while delegating routine actions to fast, cached agents. In some environments, governance investments pay back through reduced risk and faster time-to-value, even if upfront costs are higher. Ai Agent Ops analysis shows that mature agent architectures deliver stronger ROI over time when coupled with disciplined observability and automated testing.
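To make the caching point concrete, here is a small sketch that wraps a read-only tool with Python's standard `functools.lru_cache`, so repeated identical calls skip the backend. The tool name `lookup_customer` and the `backend_calls` counter are hypothetical. Caching like this is only safe for idempotent, read-only tools whose results may be reused.

```python
from functools import lru_cache

# Counts how often the (simulated) slow backend is actually hit.
backend_calls = {"count": 0}


@lru_cache(maxsize=256)
def lookup_customer(customer_id: str) -> str:
    """Hypothetical read-only tool; the body stands in for a slow API call."""
    backend_calls["count"] += 1
    return f"profile:{customer_id}"
```

After calling `lookup_customer("c1")` twice and `lookup_customer("c2")` once, `lookup_customer.cache_info()` reports one hit and two misses, meaning only two backend calls were made for three requests.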

Integration and tooling: building blocks you need

A successful integration stack includes a tool catalog, adapters for key services, and an orchestration layer that translates plans into calls. Start with a lightweight executor that can perform common tasks (read, write, fetch) and progress to richer agents that handle multi-step workflows. Use versioned prompts and pattern-based templates to reduce drift, and implement a policy layer to enforce guardrails. You will also want a memory layer or vector store to retain context across sessions, plus retrieval-augmented generation to keep knowledge up to date. Data governance tooling, such as access controls, data lineage, and audit trails, should be central. Finally, invest in testing frameworks that simulate real-world prompts and failure modes, including prompt injections and tool errors. A well-documented suite of tests helps maintain reliability as teams iterate toward production-ready agentic AI workflows.
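One way to picture versioned prompts is a small registry keyed by name and version, as sketched below with the standard-library `string.Template`. `PromptRegistry` and its methods are hypothetical names chosen for this example; integer versions are an assumption, and a real setup might store prompts in version control instead.

```python
from string import Template


class PromptRegistry:
    """Stores immutable prompt templates keyed by (name, version)."""

    def __init__(self):
        self._prompts = {}  # (name, version) -> Template

    def register(self, name: str, version: int, text: str) -> None:
        key = (name, version)
        if key in self._prompts:
            # Published versions are never overwritten; add a new version instead.
            raise ValueError(f"{name} v{version} already registered")
        self._prompts[key] = Template(text)

    def latest(self, name: str) -> int:
        versions = [v for (n, v) in self._prompts if n == name]
        if not versions:
            raise KeyError(name)
        return max(versions)

    def render(self, name: str, version: int, **fields: str) -> str:
        # substitute() raises KeyError if a placeholder is left unfilled.
        return self._prompts[(name, version)].substitute(**fields)
```

Pinning a workflow to an explicit version, rather than always using `latest`, keeps behavior stable while a newer prompt is still being evaluated.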

Deployment patterns: step-by-step to scale

Adopt a phased approach: start with a narrow, well-scoped problem and a small tool set, then gradually expand. Phase 1: build a minimal viable agent with a single tool and a simple LLM prompt, and monitor outcomes. Phase 2: add more tools, strengthen safety checks, and implement error handling and fallback strategies. Phase 3: broaden governance coverage with auditable logs, change management, and performance dashboards. Phase 4: scale horizontally with multiple agents and shared state management across tasks. Throughout, maintain strong observability: metrics for latency, success rate, and error types; dashboards for tool usage; and regular red-teaming. Remember to document decisions and capture their rationale for future audits. The end goal is repeatable, auditable automation that aligns with business goals while staying within risk tolerance.
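The observability metrics named above (latency, success rate, error types) can be collected with a thin wrapper around each tool call. The sketch below is illustrative only: `RunMetrics` is a hypothetical class, and a real deployment would export these values to a metrics backend instead of holding them in memory.

```python
import time
from collections import Counter


class RunMetrics:
    """Records latency, successes, and error types for wrapped calls."""

    def __init__(self):
        self.latencies = []      # seconds per call, successes and failures alike
        self.successes = 0
        self.errors = Counter()  # exception class name -> count

    def record(self, fn, *args):
        """Run fn(*args); return its result, or None if it raised."""
        start = time.perf_counter()
        try:
            result = fn(*args)
        except Exception as exc:
            self.errors[type(exc).__name__] += 1
            return None
        else:
            self.successes += 1
            return result
        finally:
            self.latencies.append(time.perf_counter() - start)

    def success_rate(self) -> float:
        total = self.successes + sum(self.errors.values())
        return self.successes / total if total else 0.0
```

Swallowing the exception and returning `None` is a simplification for the sketch; many teams would re-raise after recording so that fallback logic can react.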

Common pitfalls and how to avoid them

Common pitfalls include ambiguous ownership, brittle prompts, and over-reliance on a single tool or data source. Mitigations include establishing clear ownership, keeping prompts under version control, and diversifying data inputs. Another pitfall is insufficient observability: without end-to-end logs and explainability, debugging becomes guesswork. Invest in instrumentation, test coverage, and consistent tool wrappers. A third risk is prompt leakage or data exfiltration through external calls; isolate tool inputs and sanitize sensitive data before sharing it with an LLM. Finally, neglecting governance can lead to regulatory exposure and unsafe actions; implement a formal review process for new capabilities and keep a running risk register. If you plan for these challenges, you’ll reduce rework and accelerate safe, scalable adoption.
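Sanitizing sensitive data before it reaches an LLM can start with simple pattern-based redaction, as in the sketch below. The two regular expressions and the `redact` helper are illustrative assumptions and are far from exhaustive; they will miss many forms of personal data, so treat this as a starting point, not a complete control.

```python
import re

# Illustrative patterns only (assumed for this sketch).
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# 13 to 16 digits, optionally separated by single spaces or hyphens.
CARD = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")


def redact(text: str) -> str:
    """Replace email addresses and card-like numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    text = CARD.sub("[CARD]", text)
    return text
```

Running `redact` on tool inputs and retrieved documents before they are added to a prompt keeps raw identifiers out of model requests and out of any downstream logs.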

Authority sources and research for further reading

- NIST AI Risk Management Framework: https://www.nist.gov/topics/artificial-intelligence

- Stanford AI Lab: https://ai.stanford.edu

- Nature AI research: https://www.nature.com/articles

Comparison

| Feature | LLM-only pattern | Modular AI agent with orchestration |
|---|---|---|
| Decision scope | Narrow, text-based reasoning | Broad, tool-enabled planning and action |
| Tool access | Limited tool usage or plugins | Full tool integration across apps, data stores, and services |
| Latency & throughput | Higher latency due to separate planning and execution steps | Lower latency and higher throughput with parallel execution and caching |
| Observability & governance | Fewer end-to-end logs | Rich action trails with auditable logs |
| Cost dynamics | Lower upfront tooling, potentially higher API usage | Higher upfront and maintenance costs for connectors |
| Best for | Rapid prototypes and simple tasks | Complex, repeatable workflows with governance |

Positives

- Faster initial deployment for simple tasks

- Lower upfront tooling and integration costs

- Clear separation of planning and execution

- Quicker iterations for experiments

What's Bad

- Limited scalability and governance without guardrails

- Higher long-term maintenance for complex workflows

- Potentially higher latency due to repeated planning

- Less robust audit trails without a dedicated agent layer

Modular AI agents with orchestration generally offer the best balance of scalability and governance, though for quick prototyping an LLM-only approach can be faster to deploy.

Choose agent-based orchestration for scale and governance; prefer LLM-only for fast, simple trials.

Questions & Answers

What is an AI agent and LLM combination?

An AI agent coordinates actions across tools and data, while an LLM provides planning, reasoning, and natural-language interaction. Together, they enable autonomous, auditable workflows that scale with governance.

An AI agent handles the actions; an LLM plans and reasons. Together, they automate complex tasks with safety and logs.

When should I prefer an LLM-only approach versus an agent-based approach?

Choose LLM-only for quick prototyping and simple tasks, where orchestration needs are minimal. Opt for a modular AI agent with orchestration when workflows grow in complexity, require cross-system actions, and demand strong governance.

If you’re testing a simple idea, start with LLM-only. For scalable, auditable processes, go with a modular agent.

What governance and safety considerations are essential?

Prioritize data privacy, access control, and end-to-end observability. Implement guardrails, kill-switches, and auditing; align with standards like AI risk management frameworks and privacy laws.

Make safety and logs a built-in part of the system from day one.

What are typical costs and ROI drivers?

Costs come from API usage, tool subscriptions, and maintenance of connectors. ROI increases with scale, governance maturity, and reduced manual toil as workflows mature.

Costs scale with usage, but governance and automation grow ROI over time.

How do I start building AI agent and LLM workflows in practice?

Begin with a focused, small-scope task and a minimal tool set. Add guardrails and observability, then gradually introduce more tools and state across iterations.

Start small, add safety, then scale up thoughtfully.

Are there benchmarks or standards I should follow?

Refer to AI risk management frameworks and industry research from leading institutions. Use controlled pilots, red-teaming, and progressive deployment to establish benchmarks.

Use established AI risk standards and controlled pilots to benchmark your setup.

Key Takeaways

- Define your goal and test both patterns against real tasks.

- Prioritize governance, safety, and observability from day one.

- Start with an MVP using LLM plugins before adding orchestration.

- Monitor cost and performance continuously to scale responsibly.