Difference Between an AI Agent and an LLM: Core Distinctions for Builders

A rigorous comparison of AI agents vs large language models (LLMs), clarifying how each works, their use cases, strengths, and trade-offs for developers and leaders.

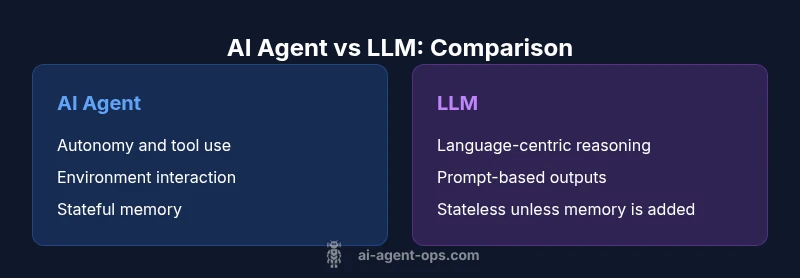

AI agents and LLMs serve distinct roles in modern AI systems. An AI agent is an autonomous system that acts in an environment to achieve goals, often using tools and memory; an LLM is a language model focused on generating and reasoning with text. The key differences involve autonomy, tooling, memory, and integration.

What makes the difference between an AI agent and an LLM?

The difference between an AI agent and an LLM is more than a lexical distinction; it determines how each technology fits into real systems. AI agents are designed to operate autonomously, execute actions in the world, and adapt based on outcomes. LLMs, by contrast, excel at understanding, generating, and translating language within prompts. For teams building end-to-end workflows, recognizing this distinction helps allocate work between the language core and the decision-and-action layer. In practice, many systems fuse both: an LLM serves as the reasoning backbone inside an agent that carries out actions across tools and environments. This section lays the groundwork for a shared mental model that aligns architecture, governance, and experimentation with real-world constraints.

Autonomy, goals, and environment interaction

Autonomy is the central axis that separates AI agents from raw LLMs. An agent is designed to pursue explicit goals in a dynamic environment, selecting actions, negotiating tool use, and adjusting plans when signals arrive. An LLM remains text-focused, producing outputs from prompts and using internal reasoning patterns limited by prompt context. Understanding this helps teams decide where to place the boundary between automated decision-making and human oversight. In many cases, a well-designed agent loops in feedback from tool results, logs, and user input to refine future behavior, something a pure LLM cannot do without external scaffolding.

- Autonomy: agents act; LLMs respond to prompts.

- Goal orientation: agents pursue outcomes; LLMs optimize language quality.

- Interaction: agents use tools and sensors; LLMs rely on prompts and retrieved context to inform output.
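One way to make the boundary between automated decision-making and human oversight concrete is an approval gate in front of the agent's actions. The sketch below is illustrative only: the action names, the two risk tiers, and the `approve` callback are hypothetical and stand in for whatever review process a team actually uses.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical risk tiers: which actions the agent may take on its own.
AUTO_ALLOWED = {"search_docs", "read_ticket"}
NEEDS_APPROVAL = {"send_email", "issue_refund"}


@dataclass
class Action:
    name: str
    args: dict


def gate(action: Action, approve: Callable[[Action], bool]) -> str:
    """Return 'run', 'denied', or 'blocked' for a proposed action."""
    if action.name in AUTO_ALLOWED:
        return "run"
    if action.name in NEEDS_APPROVAL:
        # Human-in-the-loop: the callback stands in for a review step.
        return "run" if approve(action) else "denied"
    # Actions outside both tiers are rejected by default.
    return "blocked"
```

Rejecting unlisted actions by default keeps the agent's autonomy limited to what has been explicitly reviewed.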

Tooling, memory, and state management

Agents rely on tool use—APIs, databases, file systems, and even robotic or simulated interfaces. They maintain state across long-lived sessions, store context externally, and reuse results to inform subsequent decisions. LLMs typically operate without persistent state unless augmented with memory modules or external databases. Tooling integration is a deciding factor in system design: agents require robust orchestration, error handling, and safety checks, whereas LLM-centric flows emphasize prompt design, retrieval of relevant context, and response formatting. The upshot is that memory and tooling architecture often determine latency, reliability, and governance requirements in production.
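As a rough illustration of the orchestration and state handling described above, the following sketch pairs a small tool registry with file-backed memory. The class names `ToolRegistry` and `FileMemory` are assumptions made for this example, not the API of any particular agent framework.

```python
import json
from pathlib import Path
from typing import Any, Callable


class ToolRegistry:
    """Maps tool names to callables and wraps calls with error handling."""

    def __init__(self) -> None:
        self._tools: dict[str, Callable[..., Any]] = {}

    def register(self, name: str, fn: Callable[..., Any]) -> None:
        self._tools[name] = fn

    def call(self, name: str, **kwargs: Any) -> dict:
        if name not in self._tools:
            return {"ok": False, "error": f"unknown tool: {name}"}
        try:
            return {"ok": True, "result": self._tools[name](**kwargs)}
        except Exception as exc:
            # Report the failure to the caller instead of crashing the agent.
            return {"ok": False, "error": str(exc)}


class FileMemory:
    """Persists state as JSON so it survives across sessions."""

    def __init__(self, path: Path) -> None:
        self.path = path

    def load(self) -> dict:
        return json.loads(self.path.read_text()) if self.path.exists() else {}

    def save(self, state: dict) -> None:
        self.path.write_text(json.dumps(state))
```

Returning a structured result for every call, including failures, gives the decision layer something to react to and gives auditors a uniform record to log.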

Input, output, and reasoning patterns

LLMs shine at natural language understanding, summarization, and creative generation. They reason in text, often within a single prompt or a chain-of-thought style. AI agents, while they also rely on language models for understanding, operate through action loops: observe state, decide, act, observe results, and iterate. This loop-based approach enables multi-step tasks and complex workflows that extend beyond text generation alone. When organizations require automation with external effects, the combination of an LLM-based reasoning layer inside an agent loop can deliver both fluent language and tangible outcomes.
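The observe, decide, act, iterate loop can be sketched in a few lines. This is a toy under stated assumptions: the environment is a single counter, the two tools are invented for the example, and `decide` is a hard-coded stand-in for what would be an LLM call in a real agent.

```python
from typing import Callable

# Toy environment: a counter the agent must move to a target value.
TOOLS: dict[str, Callable[[int], int]] = {
    "increment": lambda x: x + 1,
    "decrement": lambda x: x - 1,
}


def decide(state: int, target: int) -> str:
    """Stand-in for an LLM call that picks the next action (hypothetical)."""
    if state == target:
        return "stop"
    return "increment" if state < target else "decrement"


def run_agent(state: int, target: int, max_steps: int = 20) -> tuple[int, list[str]]:
    trace: list[str] = []
    for _ in range(max_steps):          # bound the loop so it cannot run forever
        action = decide(state, target)  # decide, based on the observed state
        if action == "stop":
            break
        state = TOOLS[action](state)    # act, then observe the new state
        trace.append(action)
    return state, trace
```

The `max_steps` bound matters in practice: a loop driven by model output needs an explicit stopping condition that does not depend on the model choosing to stop.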

Use cases: when to choose an AI agent vs an LLM

Choosing between an AI agent and an LLM depends on the task and the environment. LLMs are ideal for content generation, drafting, coding assistance, and language-centric analysis where human-like text quality matters. AI agents excel in operational domains: automating business processes, data collection and synthesis, decision support with external tools, monitoring, and orchestration across services. For complex workflows, teams often deploy agents that orchestrate LLM-driven decisions, enabling scalable automation with language intelligence embedded in the decision loop.

Architectural patterns and data flow

A practical architecture often layers an LLM as the language core within a broader agent framework. The agent handles perception, goal formulation, planning, and tool execution, while the LLM provides natural language understanding and reasoning. Data flows typically include input ingestion, retrieval-augmented generation for context, action execution via tool adapters, and feedback loops that update memory stores. Designing with modular interfaces (prompts, plans, tools, and memory) helps teams test incrementally and manage risk through sandboxed environments and clear rollback policies.
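The data flow above can be expressed as a set of narrow interfaces plus one function that wires them together. The sketch below uses `typing.Protocol` for the interfaces; the names `Retriever`, `Planner`, `Tool`, and `step` are assumptions chosen for illustration, and the planner would be LLM-backed in a real system.

```python
from typing import Optional, Protocol


class Retriever(Protocol):
    def fetch(self, query: str) -> list[str]: ...


class Planner(Protocol):
    # Returns (tool_name, argument), or None when no further action is needed.
    def plan(self, goal: str, context: list[str]) -> Optional[tuple[str, str]]: ...


class Tool(Protocol):
    def run(self, arg: str) -> str: ...


def step(
    goal: str,
    retriever: Retriever,
    planner: Planner,
    tools: dict[str, Tool],
    memory: list[str],
) -> Optional[str]:
    """Run one pass of the data flow: retrieve, plan, act, record."""
    context = retriever.fetch(goal) + memory          # retrieved context plus prior results
    decision = planner.plan(goal, context)            # reasoning layer picks an action
    if decision is None:
        return None
    tool_name, arg = decision
    result = tools[tool_name].run(arg)                # tool adapter executes the action
    memory.append(f"{tool_name}({arg}) -> {result}")  # feedback loop updates memory
    return result
```

Because each component is only an interface, any one of them can be swapped for a stub or a sandboxed version during testing without touching the others.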

Risks, governance, and evaluation

Autonomy brings governance challenges: tool misuse, data leakage, and unintended consequences. Effective risk management includes guardrails, auditing tool actions, constraint policies, and transparent evaluation criteria. Evaluation should measure not just output quality but system behavior: reliability, safety, response latency, and the quality of decisions made by the agent. Establishing KPIs that cover operational goals, user impact, and governance outcomes helps align development with organizational risk tolerance and regulatory requirements.
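Measuring system behavior rather than output quality alone could look like the following sketch, which aggregates per-run records into a few KPIs. The `RunRecord` fields and metric names are hypothetical; a real deployment would derive them from its own logs and risk policy.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class RunRecord:
    succeeded: bool         # did the agent complete the task?
    latency_s: float        # wall-clock time for the run
    tool_calls: int         # number of tool invocations
    tool_errors: int        # invocations that failed
    policy_violations: int  # guardrail hits recorded during the run


def summarize(runs: list[RunRecord]) -> dict[str, float]:
    """Aggregate run records into KPIs; expects at least one run."""
    total_calls = sum(r.tool_calls for r in runs)
    total_errors = sum(r.tool_errors for r in runs)
    return {
        "task_success_rate": mean(1.0 if r.succeeded else 0.0 for r in runs),
        "mean_latency_s": mean(r.latency_s for r in runs),
        "tool_error_rate": total_errors / total_calls if total_calls else 0.0,
        "violations_per_run": sum(r.policy_violations for r in runs) / len(runs),
    }
```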

Practical steps to get started

1) Define clear use cases and success criteria for both language tasks and autonomous actions.

2) Start with a small, auditable agent prototype that can perform a simple task using a single tool.

3) Add memory layers and a structured tool registry to enable multi-step workflows.

4) Implement monitoring, logging, and safety checks before expanding capabilities.

5) Run iterative experiments comparing LLM-only prompts vs. agent-enabled flows to quantify value.
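For the final step, a comparison can start as a very small harness that runs two strategies over the same task set. This is a minimal sketch: the exact-match scoring and the `baseline` and `candidate` callables are assumptions, and real experiments would likely need larger task sets and softer scoring.

```python
from typing import Callable

Task = tuple[str, str]  # (input, expected answer)


def success_rate(solve: Callable[[str], str], tasks: list[Task]) -> float:
    """Fraction of tasks where the strategy's answer matches exactly."""
    hits = sum(1 for question, expected in tasks if solve(question) == expected)
    return hits / len(tasks)


def compare(
    baseline: Callable[[str], str],   # e.g. an LLM-only prompt
    candidate: Callable[[str], str],  # e.g. an agent-enabled flow
    tasks: list[Task],
) -> dict[str, float]:
    b = success_rate(baseline, tasks)
    c = success_rate(candidate, tasks)
    return {"baseline": b, "candidate": c, "delta": c - b}
```

Running both strategies on identical inputs keeps the comparison about the architecture rather than about differences in the tasks.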

Adopting a hybrid: integrating agents with LLMs

Hybrid architectures—where LLMs power language tasks inside agents that act in the world—often deliver the strongest value. In such setups, you retain language fluency while leveraging the agent’s autonomy to automate real tasks, integrate with external systems, and maintain state. Start with simple integrations, ensure robust observability, and expand gradually to complex toolchains. The result is a system that combines the best of both worlds: articulate language with reliable action.

Comparison

| Feature | AI Agent | LLM |
|---|---|---|
| Autonomy and action in the world | High autonomy with tool use | Low autonomy; acts within prompts and requires human directions |
| Interaction with tools & environments | Orchestrates APIs, databases, sensors | Primarily text input/output; can be integrated via adapters |
| Context length & memory management | External memory/state through dedicated memory tools | Relies on prompt context; memory via retrieval/adapters |
| Best use case | Automation, decision loops, multi-step tasks | Language-centric tasks: drafting, analysis, coding help |
| Iteration & latency | Longer-running, event-driven workflows | Faster, interactive text generation |
| Governance needs | Requires robust monitoring, safety, and auditing | Easier governance via prompt controls and usage policies |
| Cost model | Depends on compute, tooling, and orchestration | Typically per-token or per-call with API pricing |
| Evaluation metrics | Task success, reliability, safety, and tool correctness | Fluency, factual accuracy, and prompt effectiveness |

Positives

- Automates complex workflows and reduces manual effort

- Enhances reliability through repeatable decision loops

- Integrates toolchains and external data sources at scale

- Offers domain-specific automation when properly scoped

Negatives

- Increases architectural and governance complexity

- Requires robust safety, monitoring, and rollback mechanisms

- Potential data privacy and security risks with tool access

- Can incur multi-source costs and coordination overhead

Hybrid AI architectures that combine LLMs with autonomous agents provide the most practical value.

Use LLMs for language tasks and agents for autonomous actions with tool access. A hybrid approach often yields robust, scalable workflows while managing risk through governance and observability.

Questions & Answers

What is an AI agent and how does it differ from an LLM?

An AI agent is an autonomous system that acts in the world to achieve goals, often using tools and memory. An LLM is a language model focused on generating and reasoning with text. The difference between an AI agent and an LLM centers on autonomy and world-facing actions versus language-only reasoning.

An AI agent acts in the world to accomplish goals, while an LLM focuses on language tasks inside prompts.

Can an LLM function as an agent on its own?

An LLM by itself lacks persistent autonomy and environment interaction. It can simulate planning within a prompt but needs external tooling, memory, or control logic to act as an agent. This is why hybrids are common.

LLMs alone don’t autonomously act in the world; they need extra systems to become real agents.

What are the main risks of AI agents?

Key risks include safety violations, data leakage, tool abuse, and governance gaps. Address these with guardrails, monitoring, and clear ownership of tool access.

Risks include safety and data issues; guardrails and monitoring help keep agents in check.

How do I start building an AI agent within my product?

Begin with a narrow task, integrate a single tool, and add a memory layer. Iterate with observability dashboards and gradual rollout to production.

Start small: pick one task, add a tool, then expand with memory and monitoring.

Can I replace an LLM entirely with an agent?

Not usually. Agents complement LLMs by handling actions and tool use. For language-heavy tasks, LLMs remain essential unless the agent includes a strong language core.

Agents don’t replace LLMs for language tasks; they work best when combined.

Key Takeaways

- Define goals before tool selection to avoid scope creep

- Separate language tasks from action loops for clarity

- Invest in memory and tool governance early

- Pilot with small, auditable agents before scaling

- Hybrid designs often outperform single-technology solutions