AI Agents Like Replit: A Practical Comparison for Developers

This comparative guide analyzes AI agents like Replit, focusing on integration depth, governance, cost, and extensibility to help developers, product teams, and leaders choose the right platform.

AI agents like Replit blend an in-browser IDE with autonomous task orchestration to accelerate coding and automation. This comparison highlights integration depth, governance controls, pricing models, and extensibility, so developers, product teams, and leaders can choose a platform that fits their workflows and risk tolerances. Whether you are building internal tools or customer-facing products, clarity on tradeoffs matters.

What is an AI agent like Replit?

In modern software teams, an AI agent like Replit is more than a code assistant. It combines a user-friendly coding workspace with autonomous task orchestration, so the agent can read files, run commands, query APIs, and carry out multi-step workflows without constant human input. The result is a loop in which a code change triggers tests, builds, deployments, and data collection, all guided by stated intent and constraints. This pattern is especially valuable for rapid prototyping, educational environments, and cross-functional teams experimenting with automation. Ai Agent Ops's analysis shows that these platforms reduce context-switching and accelerate iteration cycles when they incorporate clear guardrails and auditable actions.

Core architectural patterns: environment, agent, and orchestrator

A typical architecture for an AI agent like Replit consists of three core layers: (1) an executable environment that provides the runtime, tooling, and file system; (2) an autonomous agent that reasons, plans, and selects actions based on goals; and (3) an orchestrator or controller that sequences tasks, handles failures, and maintains state. Interfaces between these layers rely on APIs, webhooks, and event streams. The result is a resilient loop: plan → execute → verify → adjust. For teams, this separation supports testing, auditing, and governance, while still preserving the flexibility that developers expect from modern IDEs.
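The plan → execute → verify → adjust loop described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not how any particular platform implements it: `Step`, `Orchestrator`, and `make_plan` are hypothetical names, the environment is a plain dictionary standing in for a real runtime, and the "adjust" phase is reduced to a simple retry.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Step:
    """One planned action plus the check that verifies it (hypothetical shape)."""
    name: str
    action: Callable[[dict], None]  # executes against the environment
    check: Callable[[dict], bool]   # returns True when the step succeeded


@dataclass
class Orchestrator:
    """Sequences steps, retries failures, and keeps an auditable history."""
    max_retries: int = 2
    history: list = field(default_factory=list)

    def run(self, plan: list, env: dict) -> bool:
        for step in plan:
            for attempt in range(1, self.max_retries + 2):
                step.action(env)                              # execute
                ok = step.check(env)                          # verify
                self.history.append((step.name, attempt, ok))
                if ok:
                    break
                # adjust: this sketch simply retries the same action
            else:
                return False                                  # retries exhausted
        return True


def make_plan() -> list:
    """Stand-in for the agent's planner: returns a fixed two-step plan."""
    def write_file(env: dict) -> None:
        env["files"]["app.py"] = "print('hi')"

    def run_tests(env: dict) -> None:
        env["tests_passed"] = "app.py" in env["files"]

    return [
        Step("write_file", write_file, lambda env: "app.py" in env["files"]),
        Step("run_tests", run_tests, lambda env: env.get("tests_passed", False)),
    ]


env = {"files": {}}
orchestrator = Orchestrator()
succeeded = orchestrator.run(make_plan(), env)
```

Keeping the history inside the orchestrator, rather than inside the agent, mirrors the layer separation in the text: the component that sequences work is also the one that can be audited.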

Deployment models: in-browser IDE, standalone agent, or hybrid

Deployment choices shape how teams adopt an AI agent like Replit. In-browser IDE models provide an immediate, low-friction entry point with cloud compute and shared workspaces. Standalone agents operate as independent platforms or services, accessible via APIs or CLIs, which suits ecosystems with existing tooling. Hybrid setups blend both worlds, enabling local development workflows with cloud-backed orchestration. Each model carries tradeoffs in latency, control, security, and collaboration. When evaluating options, map your current tooling, data residency needs, and team skills to the deployment model that minimizes friction while maximizing governance and extensibility.

Evaluation criteria: integration depth, governance, and UX

Choosing between AI agents requires a clear, repeatable evaluation framework. Prioritize integration depth: how deeply the agent interacts with your IDE, repositories, CI/CD, and test suites. Governance capabilities matter too: role-based access, secrets management, audit trails, and policy enforcement reduce risk. User experience (UX) matters for adoption: intuitive prompts, task templates, and visual workflow editors speed onboarding. Compatibility with your tech stack (languages, frameworks, cloud providers) also influences ROI. Ai Agent Ops recommends scoring options on a weighted rubric that reflects your organization's risk tolerance and speed-to-value.
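A weighted rubric of this kind is easy to make concrete. The sketch below is only an illustration: the criteria names, the weights, the 0 to 5 scale, and the three candidate score sets are all hypothetical placeholders to be replaced with your own.

```python
# Hypothetical weights; they should sum to 1.0 and reflect your own priorities.
WEIGHTS = {"integration": 0.35, "governance": 0.30, "ux": 0.20, "stack_fit": 0.15}


def weighted_score(scores: dict, weights: dict = WEIGHTS) -> float:
    """Return the weighted sum of 0-5 scores over the rubric criteria."""
    if set(scores) != set(weights):
        raise ValueError("scores must cover exactly the rubric criteria")
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    if any(not 0 <= value <= 5 for value in scores.values()):
        raise ValueError("each score must be between 0 and 5")
    return sum(weights[name] * scores[name] for name in weights)


# Illustrative scores only; they are not measurements of any real product.
candidates = {
    "option_a": {"integration": 5, "governance": 3, "ux": 4, "stack_fit": 4},
    "option_b": {"integration": 3, "governance": 4, "ux": 3, "stack_fit": 5},
    "option_c": {"integration": 4, "governance": 5, "ux": 2, "stack_fit": 3},
}

ranking = sorted(
    candidates,
    key=lambda name: weighted_score(candidates[name]),
    reverse=True,
)
```

Agreeing on the weights before scoring any candidate helps keep the rubric from being tuned toward a preferred answer.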

Security and governance foundations: risk, controls, and auditing

Security is foundational for AI agents that can execute commands and access sensitive data. Key considerations include sandboxed execution environments, restricted network access, and isolation of workloads. Secrets management, rotation policies, and least-privilege access controls reduce exposure. Auditing and replayable logs provide accountability for agent actions, while policy engines enforce compliance with organizational rules. Privacy implications, such as data retention and telemetry minimization, should be baked into the product strategy. A robust security model enables teams to trust automation as a scalable capability rather than a risk.
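As one small piece of such a model, the sketch below gates agent commands through an allowlist and writes every decision to an audit log. It is a policy check only, under stated assumptions: `ALLOWED_COMMANDS`, `authorize`, and `CommandDenied` are hypothetical names, matching on the first token is deliberately coarse, and real isolation still requires OS-level or container sandboxing that this code does not provide.

```python
import json
import shlex
import time

# Hypothetical allowlist: only these executables may be requested by the agent.
ALLOWED_COMMANDS = frozenset({"pytest", "git", "ls"})


class CommandDenied(Exception):
    """Raised when the agent requests a command outside the allowlist."""


def authorize(command: str, actor: str, audit_log: list) -> list:
    """Log the request, then return its argv if allowed or raise CommandDenied."""
    try:
        argv = shlex.split(command)
    except ValueError:  # e.g. unbalanced quotes; treat as not allowed
        argv = []
    allowed = bool(argv) and argv[0] in ALLOWED_COMMANDS
    # Every decision is recorded, including denials, so actions can be reviewed.
    audit_log.append(json.dumps({
        "ts": time.time(),
        "actor": actor,
        "command": command,
        "allowed": allowed,
    }))
    if not allowed:
        raise CommandDenied(f"{actor} may not run: {command!r}")
    return argv


audit_log: list = []
argv = authorize("pytest -q tests/", "agent-1", audit_log)
try:
    authorize("rm -rf /", "agent-1", audit_log)
except CommandDenied:
    pass  # the denial is already in the audit log
```

Logging before raising means a denied request leaves the same trail as an approved one, which is what makes the log useful for accountability.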

Developer productivity and ROI implications: measuring impact

Productivity effects vary by deployment model and governance maturity. Metrics to track include time-to-first-task, mean time to repair, and the rate of automated test executions. Early-stage pilots often show improvements in iteration speed and fewer context-switches, while larger deployments require governance to prevent runaway automation. ROI calculations should consider license costs, cloud compute usage, and the cost of building and maintaining guardrails. Ai Agent Ops's observations indicate that teams realize the best ROI when pilots target high-value, repeatable workflows with clear auditability and rollback paths.
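To make the ROI calculation concrete, here is a minimal sketch that assumes the common definition ROI = (benefit - cost) / cost, with benefit estimated as hours saved times a loaded hourly rate. The `PilotCosts` class, the `roi` function, and every figure below are hypothetical; substitute your own measurements.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PilotCosts:
    """Cost categories named in the text, for one pilot period."""
    license: float
    compute: float
    guardrails: float  # building and maintaining guardrails

    @property
    def total(self) -> float:
        return self.license + self.compute + self.guardrails


def roi(hours_saved: float, hourly_rate: float, costs: PilotCosts) -> float:
    """Return (benefit - cost) / cost; 1.0 means benefit was twice the cost."""
    if costs.total <= 0:
        raise ValueError("total cost must be positive")
    benefit = hours_saved * hourly_rate
    return (benefit - costs.total) / costs.total


# Made-up pilot figures for illustration only.
pilot = PilotCosts(license=1000.0, compute=500.0, guardrails=1500.0)
pilot_roi = roi(hours_saved=60.0, hourly_rate=100.0, costs=pilot)
```

With these made-up inputs the benefit is 6000 against a cost of 3000, so `pilot_roi` is 1.0. Including guardrail work in the cost side keeps the estimate honest for larger deployments.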

Collaboration features and team workflows: shared workspaces and governance

Team collaboration hinges on shared workspaces, versioned workflows, and role-based permissions. Features like simultaneous editing, task templates, and centralized dashboards help keep teams aligned. Governance features—such as policy enforcement, approvals, and ring-fenced environments—prevent cross-team interference and protect critical data. When teams coordinate, the agent’s decisions should be explainable and traceable. This transparency improves trust and accelerates learning across developers, designers, and operations staff.

Extensibility and connectors: plugins, APIs, and market ecosystems

Extensibility is the lifeblood of long-term value in ecosystems built around AI agents like Replit. A healthy platform offers a plugin/extension model, connectors to cloud services, and a marketplace of templates. This openness accelerates adoption by letting teams tailor the agent to domain-specific workflows. However, it also raises governance considerations: third-party extensions can introduce risk if not curated. A balanced approach combines a robust vetting process with a lightweight governance layer to enable innovation without sacrificing security.

Pricing models and total cost of ownership (TCO)

Pricing for AI agents ranges from freemium tiers to per-user licenses or consumption-based models. TCO should account for license fees, cloud compute, storage, data transfer, and the cost of building guardrails. Cost predictability matters for budgeting, especially in teams scaling automation across multiple projects. When evaluating pricing, request transparent usage metrics, audit trails, and cost-control features such as quotas and alerts. Ai Agent Ops advises benchmarking against a baseline of manual development effort to quantify benefits.
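Quotas and alerts are simple to reason about once written down. The sketch below shows one possible shape for a monthly usage budget: it raises a single alert when usage crosses a threshold and blocks any request that would exceed the quota. `UsageBudget`, its fields, and the 80% default are assumptions for illustration, not a feature of any specific platform.

```python
from dataclasses import dataclass, field


@dataclass
class UsageBudget:
    """Tracks usage against a monthly quota (units are up to you)."""
    monthly_quota: float
    alert_threshold: float = 0.8  # fraction of quota that triggers an alert
    used: float = 0.0
    alerts: list = field(default_factory=list)

    def record(self, amount: float) -> bool:
        """Record usage; return False and record nothing if it would exceed quota."""
        if amount < 0:
            raise ValueError("amount must be non-negative")
        if self.used + amount > self.monthly_quota:
            self.alerts.append("quota exceeded: request blocked")
            return False
        before = self.used
        self.used += amount
        threshold = self.alert_threshold * self.monthly_quota
        if before < threshold <= self.used:
            # Fires once, on the request that crosses the threshold.
            self.alerts.append("usage crossed alert threshold")
        return True


budget = UsageBudget(monthly_quota=100.0)
first = budget.record(50.0)   # under the threshold, no alert
second = budget.record(35.0)  # crosses 80% of quota, one alert
third = budget.record(20.0)   # would exceed quota, blocked
```

Blocking before recording keeps `used` equal to what was actually consumed, which matters if the same figures feed cost reports.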

Migration paths: from traditional IDEs to AI-driven workflows

Migrating to an AI agent like Replit often starts with a narrow pilot: a single repository, a limited set of tasks, and explicit success criteria. Gradually expand to more workflows, documenting changes and collecting feedback. Migration requires ensuring compatibility with existing CI/CD pipelines, test suites, and security controls. Equally important is training for developers and operators, plus updating playbooks and runbooks to reflect new automation behavior. A phased, well-communicated approach reduces risk and speeds value realization.

Best practices and anti-patterns: what to repeat and what to avoid

Best practices include starting with well-scoped tasks, enforcing policy-driven automation, and maintaining observable state and logs. Use explicit prompts, task templates, and guardrails to minimize drift. Anti-patterns to avoid include over-automation without governance, relying on opaque decision-making, and neglecting security reviews. Regular audits, post-mortems, and feedback loops help refine automation quality. The goal is to achieve reliable, auditable automation that grows with your team’s capabilities.

Real-world scenarios: three case studies of AI agent adoption

Case Study A describes a developer team embedding an AI agent like Replit to automate test execution and deployment in a mid-sized project. Case Study B covers a product-ops group using the agent to orchestrate data pipelines and monitoring. Case Study C explores a small startup experimenting with AI-assisted code generation within a hybrid workflow. Across these scenarios, benefits include faster iteration, standardized task execution, and better collaboration, but challenges such as governance, cost management, and learning curves also emerge. These vignettes illustrate how strategic adoption yields tangible outcomes.

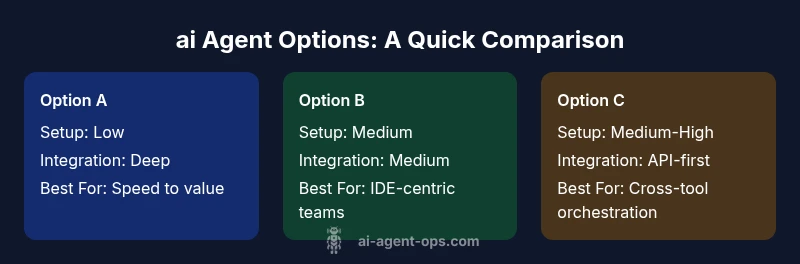

Feature Comparison

| Feature | Option A: Replit-like AI agent | Option B: IDE-integrated AI agent | Option C: Standalone automation agent |
|---|---|---|---|
| Setup difficulty | Low (cloud IDE ready) | Medium (requires existing IDE) | Medium-High (separate platform) |
| Integration depth | Deep: files, tests, and runtime in IDE | Medium: IDE plugin with targeted hooks | High: API-first with external systems |
| Collaboration features | Shared workspaces and live edits | Limited multi-user features | Strong governance and role-based access |
| Security controls | Sandboxing, basic secrets management | Enhanced DevSec controls | Advanced policy and secret management |
| Extensibility | Plugins and templates marketplace | Plugins limited but solid | Marketplace connectors and APIs |
| Pricing model | Freemium with usage-based tiers | Per-user license | Per-task or usage-based bundles |
| Best for | Speed to value in teams starting automation | Teams fully embedded in a single IDE | Organizations needing cross-tool orchestration |

Positives

- Speeds up coding and automation workflows

- Low barrier to entry with cloud-based environments

- Strong collaboration for teams

- Extensible with connectors and templates

- Promotes repeatable, auditable workflows

What's Bad

- Security and governance complexity increases with scale

- Costs can grow with usage and team size

- Vendor lock-in risk in some ecosystems

- Learning curve for advanced features and prompts

A strategic, guardrails-first AI agent is worth adopting for teams that value speed and repeatability

If your goals include faster iteration and scalable automation, prioritize options with strong governance and extensibility. Choose a deployment model that aligns with your existing tooling and data policy, and run a phased pilot to validate ROI and risk controls.

Questions & Answers

What defines an AI agent like Replit?

An AI agent like Replit is an AI-driven coding assistant that combines an online IDE, task orchestration, and automated execution of workflows. It goes beyond code completion by planning actions, running commands, and coordinating multiple steps in a project. This enables faster iteration while maintaining governance and traceability.

An AI agent like Replit is an AI coding helper that runs tasks inside an IDE, planning steps and coordinating actions across your project.

How do these agents differ from traditional IDEs?

Traditional IDEs mainly provide code editing and debugging features. AI agents add planning, decision-making, and automated execution of workflows that extend across tests, builds, deployments, and data gathering. The result is a more autonomous development experience with guardrails and audit trails.

They go beyond editing: they plan and execute steps such as tests and deployments, with governance built in.

What are common security concerns?

Key concerns include access to secrets, execution of commands, and data leakage. Mitigate with sandboxing, least-privilege access, secrets management, and thorough auditing of agent actions.

Be mindful of secrets and execution risks; implement sandboxing and strong auditing.

What factors influence cost?

Cost factors include usage volume, number of developers, and the level of integration with external services. Consider licensing, cloud compute, storage, and the cost of building governance guardrails.

Costs depend on usage, seats, and integrations; plan for ongoing compute and licenses.

How do I start with an AI agent like Replit?

Begin with a focused pilot: choose a high-impact, repeatable workflow, set success criteria, and gradually expand. Ensure security controls and governance are in place from day one.

Start small with a concrete workflow, then expand as you learn.

Is it suitable for all teams?

AI agents are most beneficial for teams needing automation, rapid iteration, and cross-functional collaboration. Very small teams or projects with minimal automation needs may not justify the complexity or cost.

Great for teams chasing automation, less so for tiny projects.

What is the typical learning curve?

Expect an initial learning curve around prompts, task templates, and governance setup. With practice, teams realize faster iteration and clearer ownership of automated tasks.

There’s an initial learning curve, then faster work cycles with practice.

How can I measure ROI effectively?

Track time savings, defect rates, and deployment speed before and after adoption. Use guardrails to prevent runaway automation and quantify the cost of governance against benefits.

Measure time saved and deployment speed to gauge ROI.

Key Takeaways

- Evaluate integration depth before selecting a model

- Prioritize governance, auditability, and security features

- Choose an option that fits your team's collaboration needs

- Prototype with a focused workflow to measure ROI

- Plan for cost visibility and management from day one