AI agent like Manus: Manus-style vs traditional AI agents

Explore how an AI agent like Manus compares to traditional AI agents. This analytical comparison covers capabilities, safety, cost, and best-use scenarios for developers and leaders.

If your goal involves dynamic problem-solving and agentic workflows, an AI agent like Manus is often the better choice. For stable, rule-driven tasks with tight governance, a traditional AI agent can offer more predictable performance and lower upfront risk. The right pick hinges on goals, data quality, and risk tolerance.

What qualifies as a Manus-like AI agent?

A Manus-like AI agent is an approach that emphasizes agentic capabilities: planning, multi-step reasoning, and autonomous action across tools and data sources. Unlike fixed-rule systems, it learns from feedback and adapts its behavior to changing tasks. In practice, such agents run a cognitive loop: observe, infer, decide, act, and reflect. This cycle enables dynamic orchestration across APIs, databases, and human-in-the-loop interventions. According to Ai Agent Ops, the most successful Manus-like deployments combine robust guardrails with lightweight experimentation, allowing teams to scale agentic workflows while maintaining governance. A key feature is stateful persistence: the agent maintains context across interactions and uses memory to reason about past decisions. When you consider an AI agent like Manus, you weigh adaptability against control, data needs against latency, and orchestration complexity against governance. This section sets the stage for a structured comparison with traditional AI agents and clarifies terms for non-expert stakeholders.
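
The observe, infer, decide, act, reflect cycle described above can be sketched in a few lines. This is a toy illustration under stated assumptions, not how Manus or any specific product is implemented: the `LoopAgent` class, its two numeric "tools", and the memory record format are all hypothetical names chosen for the example.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class LoopAgent:
    """Hypothetical agent: observe -> infer -> decide -> act -> reflect."""

    tools: dict[str, Callable[[int], int]]
    # Stateful persistence: one record per step, kept across the whole run.
    memory: list[dict] = field(default_factory=list)

    def step(self, goal: int, state: int) -> int:
        observation = state                                   # observe
        gap = goal - observation                              # infer distance to goal
        tool_name = "increment" if gap > 0 else "decrement"   # decide which tool to use
        new_state = self.tools[tool_name](observation)        # act through the tool
        improved = abs(goal - new_state) < abs(gap)           # reflect on the outcome
        self.memory.append(
            {"tool": tool_name, "before": observation,
             "after": new_state, "improved": improved}
        )
        return new_state

    def run(self, goal: int, state: int, max_steps: int = 10) -> int:
        # max_steps is a simple guardrail against running forever.
        for _ in range(max_steps):
            if state == goal:
                break
            state = self.step(goal, state)
        return state


agent = LoopAgent(tools={"increment": lambda x: x + 1,
                         "decrement": lambda x: x - 1})
final_state = agent.run(goal=3, state=0)
```

In a real agent the "infer" and "decide" steps would typically be delegated to a model, and the tools would be API or database calls. The loop shape and the step budget are the parts that carry over.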

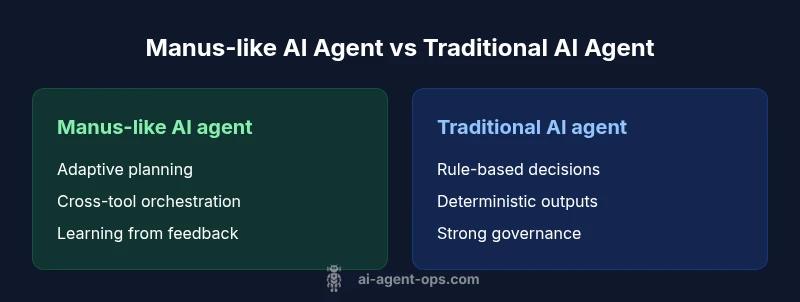

Key design differences between Manus-like agents and traditional agents

Manus-like AI agents differentiate themselves through learning-driven behavior, tool orchestration, and memory-based reasoning. Traditional agents, by contrast, rely on static rules or scripted decision trees. The Manus-like approach emphasizes planning over reaction, enabling multi-modal interaction with databases, APIs, and human operators. Expect components like a planner, a task executor, and a feedback loop that tunes behavior over time. This yields greater adaptability to shifting inputs and goals, but also increases the need for monitoring, governance, and guardrails. From a resource perspective, Manus-like systems typically consume more data infrastructure and compute, while traditional agents may require less data engineering but more manual tuning. Ai Agent Ops notes that the best outcomes come from a hybrid posture: preserve guardrails while allowing the agent to learn and adapt within defined boundaries. This balance is crucial for organizations aiming to scale agentic workflows responsibly.
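
To make the contrast concrete, the sketch below places a static rule table next to a planner, executor, and feedback step. It is a simplified illustration: the intent names, the hard-coded plan, and the `failing` parameter used to simulate tool failures are assumptions made for the example.

```python
from typing import AbstractSet

# Traditional agent: a static rule table with a fixed fallback.
RULES = {
    "refund_request": "route_to_billing",
    "password_reset": "send_reset_link",
}


def rule_based_agent(intent: str) -> str:
    return RULES.get(intent, "escalate_to_human")


# Manus-like agent: plan first, execute step by step, adapt on feedback.
def plan(goal: str) -> list[str]:
    """Hypothetical planner; a real one would usually call a model."""
    plans = {"resolve_refund": ["lookup_order", "check_policy", "issue_refund"]}
    return plans.get(goal, ["escalate_to_human"])


def execute(step: str, failing: AbstractSet[str]) -> bool:
    """Stand-in for a tool call; 'failing' simulates steps that error out."""
    return step not in failing


def run_plan(goal: str, failing: AbstractSet[str] = frozenset()) -> list[tuple[str, bool]]:
    log = []
    for step in plan(goal):
        ok = execute(step, failing)
        log.append((step, ok))
        if not ok:
            # Feedback loop: abandon the remaining plan and fall back.
            log.append(("escalate_to_human", True))
            break
    return log
```

The rule table always gives the same answer for the same input, which is what makes it easy to audit. The planner's log depends on what happens at run time, which is why it needs more monitoring.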

Use-case alignment: when to choose Manus-like vs traditional

Choosing between Manus-like and traditional AI agents depends on task entropy, user expectations, and risk tolerance. Manus-like agents shine in dynamic customer journeys, complex decision pipelines, and cross-tool orchestration that spans humans and systems. They excel where tasks evolve over time and where autonomy can reduce manual handoffs. Traditional agents fit stable, repeatable processes with clear, auditable rules—think structured data entry, validation, or routine approvals. In regulated environments, a traditional agent offers predictable governance, easier audits, and shorter time-to-value for rule-defined workflows. Ai Agent Ops emphasizes mapping business goals to agent capabilities: if the objective is flexibility and learning from outcomes, start with Manus-like designs; if accuracy, traceability, and safety are paramount, begin with rule-based solutions and layer agentic features later.
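
One way to apply these criteria is a small routing heuristic that a team fills in per task. The sketch below is only an assumption about how the guidance might be encoded: the `TaskProfile` fields and the decision rule are illustrative, not a published method.

```python
from dataclasses import dataclass


@dataclass
class TaskProfile:
    """Hypothetical description of a task, filled in by the team."""

    name: str
    regulated: bool      # strict audit or compliance requirements
    stable_rules: bool   # process is repeatable and rule-defined
    cross_tool: bool     # needs orchestration across several tools


def suggest_agent(task: TaskProfile) -> str:
    # Regulated or stable single-system work: start rule-based,
    # and layer agentic features later if needed.
    if task.regulated or (task.stable_rules and not task.cross_tool):
        return "traditional"
    # Evolving or cross-tool work: start with a Manus-like design.
    return "manus-like"


invoice = TaskProfile("invoice_validation", regulated=True,
                      stable_rules=True, cross_tool=False)
journey = TaskProfile("customer_journey", regulated=False,
                      stable_rules=False, cross_tool=True)
suggestions = {t.name: suggest_agent(t) for t in (invoice, journey)}
```

A heuristic like this is a starting point for discussion rather than a verdict; risk tolerance and data quality still need human judgment.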

Architecture and data considerations

Manus-like AI agents typically rely on larger, diverse data sources to fuel learning and decision-making. They use memory modules to retain context across tasks, allowing the agent to reason about prior actions when choosing next steps. Data pipelines must accommodate streaming inputs, privacy constraints, and real-time feedback signals. In contrast, traditional agents depend on structured data, explicit schemas, and deterministic logic. Their architecture favors clear data lineage, fixed decision paths, and deterministic outputs. Both approaches require robust interfaces to tools, APIs, and human-in-the-loop workflows, but Manus-like systems demand thoughtful data governance to prevent drift and ensure accountability. From a performance perspective, Manus-like agents can improve over time, but initial ramp-up requires careful experimentation, monitoring dashboards, and guardrails to prevent unintended behaviors. Ai Agent Ops highlights the need for clear success criteria and continuous validation when building these architectures.
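
A memory module can be as simple as a bounded log of past actions that the agent consults before choosing its next step. The following is a minimal sketch; the `ContextMemory` class and its record fields are hypothetical, and production systems often use a database or vector store instead.

```python
from collections import deque


class ContextMemory:
    """Hypothetical bounded memory: keeps only the most recent events."""

    def __init__(self, max_events: int = 3):
        # deque(maxlen=...) silently evicts the oldest entry when full,
        # which bounds storage at the cost of forgetting old context.
        self._events = deque(maxlen=max_events)

    def remember(self, task: str, action: str, outcome: str) -> None:
        self._events.append({"task": task, "action": action, "outcome": outcome})

    def recall(self, task: str) -> list[dict]:
        return [e for e in self._events if e["task"] == task]

    def failed_actions(self, task: str) -> set[str]:
        """Actions that already failed for this task, so the agent can avoid them."""
        return {e["action"] for e in self.recall(task) if e["outcome"] == "failed"}


memory = ContextMemory(max_events=3)
memory.remember("ticket-1", "call_api_a", "failed")
memory.remember("ticket-1", "call_api_b", "ok")
memory.remember("ticket-2", "call_api_a", "ok")
avoid = memory.failed_actions("ticket-1")
```

The eviction behavior is a design choice worth making explicit: forgetting too early can cause the agent to repeat a failed action, which is one way drift and accountability issues show up.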

Safety, governance, and interpretability

Safety is a core concern for Manus-like AI agents because decisions may emerge from probabilistic reasoning rather than fixed rules. Governance frameworks should include guardrails, approval gates, and anomaly detection to catch undesirable outcomes early. Interpretability remains a challenge with autonomous planning and multi-step reasoning, making explainability a critical research area and practical requirement. Traditional agents offer easier audit trails: rule citations, decision logs, and auditable feature pipelines. The trade-off is reduced adaptability. A prudent path often blends approaches: deploy Manus-like components for complex decision making, but anchor them with traditional governance around sensitive operations. This hybrid model supports accountability while preserving the benefits of agentic automation. Ai Agent Ops recommends documenting decision boundaries, failure modes, and escalation procedures to maintain trust in both architectures.
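
An approval gate of the kind mentioned above can sit between the agent's decision and its execution. The sketch below assumes a hypothetical set of sensitive actions and an arbitrary amount threshold; both would come from an organization's own policy.

```python
from dataclasses import dataclass

# Assumed policy values, for illustration only.
SENSITIVE_ACTIONS = {"issue_refund", "delete_record"}
MAX_AUTO_AMOUNT = 100.0


@dataclass
class Decision:
    action: str
    allowed: bool
    reason: str


audit_log: list[Decision] = []


def approval_gate(action: str, amount: float, human_approved: bool = False) -> Decision:
    """Block sensitive, high-value actions unless a human has approved them."""
    if action in SENSITIVE_ACTIONS and amount > MAX_AUTO_AMOUNT and not human_approved:
        decision = Decision(action, False, "needs human approval")
    else:
        decision = Decision(action, True, "within guardrails")
    audit_log.append(decision)  # every decision is logged, allowed or not
    return decision


blocked = approval_gate("issue_refund", 250.0)
approved = approval_gate("issue_refund", 250.0, human_approved=True)
```

Because the gate is deterministic and logs every decision, it provides the rule citations and audit trail of a traditional agent around whatever the agentic component proposes.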

Performance, cost, and scaling considerations

Manus-like AI agents can deliver high value where tasks require autonomy, cross-domain reasoning, and continuous learning. However, scaling these systems often involves expanding data infrastructure, model serving, and monitoring capabilities. Costs may grow with data volume and compute, though efficiency gains can offset this through reduced manual intervention. Traditional agents typically offer more predictable costs, bounded by fixed rules and simpler orchestration. The balance between performance and cost hinges on data quality, the complexity of tasks, and the organization’s ability to invest in observability. Ai Agent Ops advocates a staged approach: start with a pilot in a controlled domain, measure impact, and incrementally broaden scope while maintaining governance and performance budgets.

Integration and tooling ecosystem

Integrating Manus-like agents with existing stacks requires robust tool orchestration layers, SDKs, and middleware to manage tool invocation, retries, and error handling. You will likely need an orchestration layer capable of handling asynchronous calls, memory updates, and cross-service coordination. Traditional agents integrate more simply with legacy systems due to fixed signals and clear interfaces. The ecosystem choice matters: open-source tooling can accelerate experiments, while enterprise platforms may provide stronger governance, security, and compliance. A practical approach is to position Manus-like components as the decision layer, with traditional adapters enforcing safety constraints and deterministic outputs when facing critical systems. This separation helps teams reuse existing investments while enabling advanced automation.
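
Tool invocation with retries and error handling might look like the sketch below. The helper name `call_with_retries` and its parameters are assumptions for the example, and the flaky tool simulates a transient failure.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def call_with_retries(tool: Callable[[], T], max_attempts: int = 3,
                      base_delay: float = 0.0) -> T:
    """Call a tool, retrying with exponential backoff on failure.

    Catching Exception is deliberately broad for the sketch; real code
    should retry only errors known to be transient.
    """
    last_error = None
    for attempt in range(1, max_attempts + 1):
        try:
            return tool()
        except Exception as exc:
            last_error = exc
            if attempt < max_attempts:
                # Delay doubles each attempt: base, 2*base, 4*base, ...
                time.sleep(base_delay * 2 ** (attempt - 1))
    raise RuntimeError(f"tool failed after {max_attempts} attempts") from last_error


attempts = {"count": 0}


def flaky_tool() -> str:
    """Simulated tool that fails twice, then succeeds."""
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise ConnectionError("transient failure")
    return "ok"


result = call_with_retries(flaky_tool)
```

The default `base_delay` of zero keeps the example fast; a non-zero delay is usually appropriate against real services.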

Real-world patterns and best practices

Successful deployments combine experimentation with structured guardrails. Start by defining non-negotiable safety constraints, data handling principles, and escalation paths. Use sandboxed environments for prototyping Manus-like agents before connecting to live systems. Establish observability dashboards that expose decision quality, latency, error rates, and policy violations. Incrementally add capabilities like tool chaining, memory updates, and reflective loops to improve planning quality. In parallel, maintain a strong artifact library: design documents, decision logs, and test cases to support governance. Across teams, cultivate a culture of responsible experimentation, clear ownership, and cross-functional reviews to ensure that Manus-like and traditional components align with business outcomes.

Common pitfalls and how to mitigate

Pitfalls include drift in decision behavior, poor data quality, and inadequate monitoring. Drift can arise when training data diverges from production contexts; mitigate it with continuous evaluation, automated tests, and retraining schedules. Insufficient monitoring leaves serious issues unseen for too long; implement anomaly detection, alerting, and kill-switch mechanisms. Another risk is brittleness when tool integrations fail; guard against this with retry policies, circuit breakers, and graceful degradation. Finally, governance gaps can erode trust; address them with clear documentation, auditable logs, and explicit escalation rules. A disciplined rollout plan, combined with frequent audits, reduces these risks and supports scalable, responsible automation.
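
A circuit breaker with graceful degradation can be sketched as follows. This is a simplified, count-based version with a manual reset, assumed for illustration; it omits the timed half-open state that fuller implementations usually include.

```python
from typing import Callable, TypeVar

T = TypeVar("T")


class CircuitBreaker:
    """Hypothetical breaker: stops calling a tool after repeated failures."""

    def __init__(self, failure_threshold: int = 3):
        self.failure_threshold = failure_threshold
        self.consecutive_failures = 0

    @property
    def is_open(self) -> bool:
        return self.consecutive_failures >= self.failure_threshold

    def call(self, tool: Callable[[], T], fallback: T) -> T:
        if self.is_open:
            # Graceful degradation: skip the failing tool entirely.
            return fallback
        try:
            result = tool()
        except Exception:
            self.consecutive_failures += 1
            return fallback
        self.consecutive_failures = 0  # a success closes the failure streak
        return result

    def reset(self) -> None:
        """Manual reset, e.g. after an operator confirms the tool recovered."""
        self.consecutive_failures = 0


tool_calls = {"count": 0}


def broken_tool() -> str:
    tool_calls["count"] += 1
    raise TimeoutError("tool unavailable")


breaker = CircuitBreaker(failure_threshold=2)
responses = [breaker.call(broken_tool, fallback="cached answer") for _ in range(4)]
```

After the threshold is reached, the breaker returns the fallback without invoking the tool again, which protects both the agent's latency and the struggling downstream service.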

Roadmap implications for teams

For teams planning long-term adoption, align architecture with strategic goals: prioritize modular components, well-defined interfaces, and replaceable parts to minimize vendor lock-in. Build a governance layer that can adapt as the organization grows, ensuring compliance with data privacy and security requirements. Invest in talent capable of both data engineering and software governance to support Manus-like agent deployments. Consider hybrid flows that use Manus-like reasoning for decision making while enforcing traditional controls for high-stakes tasks. This approach provides the benefits of agentic AI while preserving predictability and accountability as you scale.

Benchmarking and evaluation metrics

Evaluate Manus-like vs traditional AI agents using a consistent, multi-faceted metrics set. Operational metrics include task completion rate, latency, and failure rate. Quality metrics cover goal alignment, decision clarity, and escalation effectiveness. Governance metrics examine auditability, policy adherence, and safety incident frequency. Economic metrics track total cost of ownership, implementation time, and ROI over defined periods. The key is to monitor both quantitative outcomes and qualitative signals, such as user satisfaction and trust in automation, to determine which approach best matches organizational objectives.
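
The operational metrics above can be computed from a simple log of task records. The sketch below assumes a hypothetical `TaskRecord` schema; a real deployment would pull these fields from its own telemetry.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class TaskRecord:
    """Hypothetical per-task log entry."""

    completed: bool
    failed: bool
    latency_s: float
    escalated: bool


def summarize(records: list[TaskRecord]) -> dict[str, float]:
    if not records:
        raise ValueError("need at least one record")
    n = len(records)
    return {
        "completion_rate": sum(r.completed for r in records) / n,
        "failure_rate": sum(r.failed for r in records) / n,
        "escalation_rate": sum(r.escalated for r in records) / n,
        "mean_latency_s": mean(r.latency_s for r in records),
    }


records = [
    TaskRecord(completed=True, failed=False, latency_s=1.0, escalated=False),
    TaskRecord(completed=True, failed=False, latency_s=2.0, escalated=True),
    TaskRecord(completed=False, failed=True, latency_s=3.0, escalated=False),
    TaskRecord(completed=True, failed=False, latency_s=2.0, escalated=False),
]
summary = summarize(records)
```

Running the same summary over logs from a Manus-like pilot and a rule-based baseline gives a like-for-like comparison. Qualitative signals such as user trust still need to be gathered separately.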

Implementation checklist for teams deciding between Manus-like vs traditional AI agents

1) Define the primary objective and expected outcomes.

2) Map tasks to either Manus-like capabilities or rule-based approaches.

3) Establish governance, safety, and escalation rules.

4) Design a data strategy that supports learning and monitoring.

5) Build a phased rollout with pilot domains and measurable success criteria.

6) Set up observability dashboards for decision quality, latency, and safety events.

7) Plan for integration with existing tooling and cross-functional teams.

8) Prepare a governance playbook and documentation to support audits.

9) Iterate with feedback loops and guardrail refinements.

10) Reassess periodically as goals evolve and data quality changes.

Comparison

| Feature | Manus-like AI agent | Traditional AI agent |
|---|---|---|
| Learning approach | Self-driven, data-informed planning and adaptation | Rule-based or static modeling with explicit constraints |
| Data requirements | High-volume, diverse datasets; continuous learning | Structured inputs; limited drift; slower learning |
| Explainability | Emergent explanations; tracing decisions is complex | Explicit rules make tracing straightforward |
| Decision latency | Potentially higher due to planning and tool orchestration | Typically lower with fixed routines |
| Customization ease | Flexible, multi-domain customization; high upkeep | Predictable customization; easier governance |
| Safety & governance | Guardrails needed; monitoring essential | Clear governance via rules; easier audits |
| Best for | Dynamic, evolving tasks; agentic workflows | Stability-focused, high-control environments |
| Cost trajectory | Potentially variable; scaling can be efficient with data | Higher predictability; potentially lower long-term cost in simple tasks |

Positives

- Greater adaptability to unstructured inputs

- Strong support for agentic workflows and orchestration

- Potential for end-to-end automation with less manual coding

- Continuous improvement from feedback loops

- Better collaboration between humans and AI agents

Negatives

- Increased complexity and setup effort

- Dependence on data quality and monitoring

- Risk of unpredictable behavior without guardrails

- Higher costs if data scale grows rapidly

Manus-like AI agents are the better default for dynamic, complex tasks; use traditional agents when predictability and strict governance are paramount.

For flexible workflows, choose Manus-like; for safety and control, opt for traditional. Ai Agent Ops's verdict is to blend approaches with guardrails to scale automation responsibly.

Questions & Answers

What is a Manus-like AI agent?

A Manus-like AI agent is an architecture that emphasizes agentic capabilities: planning, reasoning, and autonomously acting across tools to achieve goals. It relies on data-driven learning and dynamic decision-making, rather than fixed rules.

A Manus-like AI agent plans and acts across tools to reach goals, learning as it goes. It’s flexible, but needs safeguards.

When should I choose a Manus-like agent over a traditional agent?

Choose Manus-like agents when tasks are dynamic, ambiguous, or require cross-tool orchestration. If your priorities include adaptability and automation at scale, Manus-like is often appropriate. For highly regulated, stable tasks, a traditional agent may be safer and easier to govern.

If your work is dynamic and needs cross-tool decisions, go Manus-like. For stable, well-defined tasks, a traditional agent might be better.

What are the main risks with Manus-like agents?

Risks include drift in behavior, increased data requirements, and the need for robust monitoring. Without guardrails, unpredictable outputs can arise. Plan for governance, auditing, and rapid failure handling.

Risks include drift and unpredictability; guardrails and monitoring are essential.

How do costs compare over time between Manus-like and traditional agents?

Manus-like agents can have variable costs tied to data, compute, and maintenance. Traditional agents offer more predictable costs due to fixed rules and simpler orchestration. Budget for data infrastructure if choosing Manus-like.

Manus-like can scale cost with data and compute; traditional tends to be more predictable.

Can Manus-like agents be integrated with existing tools?

Yes, but it requires a well-designed orchestration layer and adapters to connect to existing tools. Integration complexity is higher than with traditional agents, but benefits include deeper automation and adaptability.

They can, with proper integration layers and adapters.

What metrics matter when evaluating Manus-like agents?

Key metrics include task completion rate, latency, decision quality, safety incidents, and governance compliance. Economic metrics like total cost of ownership and ROI are also critical.

Look at completion, latency, quality, safety, and cost to decide impact.

Key Takeaways

- Assess task complexity before choosing an architecture.

- Prioritize data strategy for Manus-like agents.

- Invest in monitoring and guardrails.

- Consider governance and compliance early.

- Plan for integration with existing tooling.