Is Manus a Good AI Agent? An Analytical Review

A detailed, balanced evaluation of Manus as an AI agent, weighing capabilities, governance, integration, use cases, and best practices for developers and business leaders in 2026.

Is Manus a good AI agent? Manus shows solid potential as an AI agent for targeted automation, decision support, and lightweight agentic workflows. Its value grows when governance, data access, and integration are well managed. In short, Manus is a strong fit for well-scoped use cases, but not a universal replacement for broader agent platforms. For many teams, the answer depends on context.

Is Manus a good AI agent? Core capabilities and limitations

Manus positions itself as a modular AI agent designed for task automation, planning, and context-informed decision support. At its core, Manus combines planning components, tool use, and short-term memory to drive action in real-world workflows. However, like any agent platform, its effectiveness hinges on the domain, data availability, and governance constraints. In evaluating whether Manus is a good AI agent, teams should scrutinize three dimensions: scope of tasks, reliability under noisy data, and alignment with organizational safety and compliance requirements. According to Ai Agent Ops, Manus’s architecture supports modular agentic workflows and tool integration, which is a strong starting point for teams aiming to orchestrate multiple agents or tools. In practice, the question is not only what Manus can do in isolation, but how it integrates with existing data pipelines and governance processes. For developers, the key takeaway is that Manus can excel in well-defined micro-tasks, but complexity grows as task breadth expands. The broader decision should weigh whether Manus can deliver your minimum viable automation while operating within robust guardrails.

Architecture and features that matter for agentic AI

Effective agentic AI hinges on three core traits: modularity, observability, and safety. Manus emphasizes a modular architecture with pluggable tools, memory layers, and a planning module that can be extended with domain-specific adapters. This design supports easier reconfiguration as requirements evolve, which is essential for teams pursuing agile agent orchestration. Observability comes from clear metrics, traceable decision paths, and audit trails, all of which Manus can support when integrated with your telemetry stack. Safety and governance are non-negotiable: rate limits, data access controls, and policy enforcement must be explicit. In practice, teams should map decision points to guardrails and define escalation paths for uncertain outcomes. When evaluating if Manus is a good AI agent, consider how well its feature set translates into your specific workflows and whether the architecture supports future tool adoption without major rewrites.
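To make the idea of explicit guardrails concrete, here is a minimal sketch of a policy check that runs before any tool call. It is not a Manus API: the `ToolPolicy` class, its field names, and the three outcomes (`allow`, `deny`, `escalate`) are assumptions chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class ToolPolicy:
    """Hypothetical guardrail config; not part of any Manus API."""

    allowed_tools: set[str]
    max_calls_per_minute: int
    _call_times: list[float] = field(default_factory=list)

    def authorize(self, tool: str, now: float) -> str:
        """Return 'allow', 'deny', or 'escalate' for a proposed tool call."""
        if tool not in self.allowed_tools:
            return "deny"  # tool is outside the approved set
        # Keep only calls made in the last 60 seconds (sliding window).
        self._call_times = [t for t in self._call_times if now - t < 60.0]
        if len(self._call_times) >= self.max_calls_per_minute:
            return "escalate"  # rate limit reached: hand off to a human
        self._call_times.append(now)
        return "allow"
```

Passing `now` in explicitly, rather than reading the clock inside the method, keeps the check deterministic and easy to test. In a real deployment the caller would likely supply `time.monotonic()`.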

Real-world performance: automation vs. decision support

In real-world tasks, Manus tends to shine when the workload involves repeatable steps, data synthesis, and decision support rather than fully autonomous control of critical systems. For example, Manus can triage tickets, extract structured data from unstructured sources, and offer recommended next actions. However, tasks with high-stakes consequences or stringent compliance requirements demand explicit human oversight and rigorous testing. The balance between automation and human-in-the-loop governance will often determine whether Manus is a good AI agent for a given line of work. In short, Manus’s strengths lie in speed and consistency for defined routines; its limitations emerge as the domain demands deeper reasoning or high-risk autonomy.
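The balance between automation and human oversight can be expressed as a simple routing rule. The sketch below is one possible approach, not something Manus prescribes: the function name, the `high_stakes` flag, and the default threshold of 0.9 are all illustrative assumptions.

```python
def route_action(confidence: float, high_stakes: bool, threshold: float = 0.9) -> str:
    """Decide whether a recommended action runs automatically or goes to a person.

    Hypothetical rule: the threshold and labels are placeholders to tune per workflow.
    """
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be in [0, 1]")
    if high_stakes:
        return "human_review"  # high-stakes actions always get a human check
    return "auto_execute" if confidence >= threshold else "human_review"
```

For a ticket-triage pilot, routine low-risk actions with a confident recommendation would run automatically, while anything tagged high-stakes would be queued for an agent regardless of confidence.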

Integration, data access, and governance considerations

A practical assessment of Manus must include integration readiness with existing data sources, APIs, and event streams. Manus supports standard interfaces, but the value is realized when data access is tightly scoped and latency remains within acceptable bounds. Governance and data stewardship are critical: define who can deploy Manus, what data it can touch, and how decisions are logged. Without strong access controls and auditability, the benefits of automation can be offset by compliance risk. For teams implementing Manus, it’s essential to codify data contracts, monitor tool usage, and establish escalation protocols for failed tasks or uncertain inferences. Early pilots should start with narrow, low-sensitivity data sources and expose sensitive data progressively as confidence grows.
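A data contract can be as small as a list of fields the agent is allowed to see. The following sketch shows one way to enforce such a contract before a record reaches the agent. `DataContract` and `apply_contract` are hypothetical names, not part of any Manus interface.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataContract:
    """Hypothetical contract: which fields an agent must and may receive."""

    required: frozenset[str]
    optional: frozenset[str] = frozenset()


def apply_contract(record: dict, contract: DataContract) -> dict:
    """Reject records missing required fields and drop fields outside the contract."""
    missing = contract.required.difference(record)
    if missing:
        raise ValueError(f"missing required fields: {sorted(missing)}")
    allowed = contract.required | contract.optional
    # Anything not named in the contract (for example, sensitive columns) is removed.
    return {key: value for key, value in record.items() if key in allowed}
```

Filtering on an allow-list rather than a block-list means a newly added sensitive column stays hidden until someone deliberately adds it to the contract.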

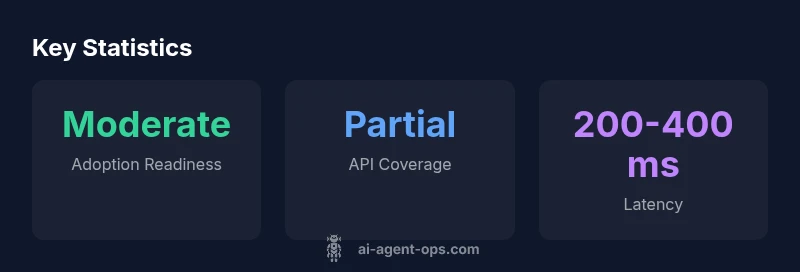

Comparison with peers: Manus vs other AI agents in 2026

Compared to other AI agents, Manus emphasizes modular tooling and governance-first design. While some peers may offer broader out-of-the-box capabilities, Manus can be tuned for specific workflows with a stronger emphasis on auditable decisions and pluggable components. For teams evaluating whether Manus is a good AI agent, it’s important to benchmark against your target use cases, with particular attention to integration maturity, latency, and safety controls. Consider a side-by-side evaluation that includes accuracy of recommendations, time-to-value, required human-in-the-loop effort, and total cost of ownership. The right choice often depends on how strongly your organization prioritizes governance and extensibility over raw capability.
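A side-by-side evaluation is easier to compare when each criterion is scored and weighted explicitly. The sketch below assumes every criterion is scored on a common scale where higher is better. The criterion names and weights are placeholders to replace with your own, not measured results.

```python
def weighted_score(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average of per-criterion scores (higher is better on every criterion)."""
    if scores.keys() != weights.keys():
        raise ValueError("scores and weights must cover the same criteria")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(scores[name] * weights[name] for name in weights) / total_weight


# Hypothetical weights reflecting a governance-heavy organization.
example_weights = {
    "accuracy": 0.4,
    "time_to_value": 0.2,
    "low_human_effort": 0.2,
    "low_total_cost": 0.2,
}
```

Scoring each candidate agent with the same `example_weights` gives a single comparable number per candidate, while the per-criterion scores remain available to explain the result.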

Security, safety, and compliance considerations

Security and compliance are non-negotiable in agentic AI deployments. Manus should be assessed for data handling policies, encryption at rest and in transit, access controls, and audit logging. Define data retention rules and ensure that generated artifacts are traceable to their sources. In regulated environments, ensure Manus supports required certifications and can operate within approved data regions. A robust deployment plan also includes regular security reviews, vulnerability testing, and incident response playbooks. When determining whether Manus is suitable for your organization, treat safety and governance maturity as a core design requirement, not as an afterthought.

Practical use cases across industries

Manus is well-suited for scenarios like customer-support triage, automated report drafting, and workflow orchestration within bounded domains. In customer support, Manus can classify tickets, pull relevant data, and present recommended actions to agents. In analytics-heavy roles, it can extract insights from textual sources and populate dashboards. In operations, Manus can sequence tasks across tools to automate routine processes, provided there are guardrails and escalation rules. Across industries, the benefit comes from faster decision cycles and consistent execution of repetitive tasks. Each use case should be piloted with strict success criteria, clear escalation paths, and a plan for governance alignment.

Evaluation methodology: how we tested Manus

Our assessment followed a structured methodology: define use-case scope, map data sources, implement a minimal viable automation, and measure outcomes across accuracy, latency, and governance adherence. We conducted functional tests to verify task completion, reliability tests to check stability under load, and safety tests to validate guardrails. We also evaluated integration with common data sources and tools, including API endpoints and message queues. By documenting failure modes and recovery procedures, we built a pragmatic picture of Manus’s performance in real-world environments. The key takeaway: whether Manus is a good AI agent depends on how closely your tests mirror your operational realities, not just on theoretical capability.
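A functional test of this kind can be approximated with a small harness that measures accuracy and latency over a set of labeled cases. This is a generic sketch rather than the harness used for this review: `agent` stands for any callable that wraps the system under test, and exact string matching is a simplifying assumption.

```python
import time
from typing import Callable


def evaluate(agent: Callable[[str], str], cases: list[tuple[str, str]]) -> dict[str, float]:
    """Run each (prompt, expected) case and report accuracy plus latency statistics."""
    if not cases:
        raise ValueError("at least one test case is required")
    correct = 0
    latencies: list[float] = []
    for prompt, expected in cases:
        start = time.perf_counter()
        answer = agent(prompt)
        latencies.append(time.perf_counter() - start)
        if answer == expected:  # exact match; swap in a task-specific check if needed
            correct += 1
    return {
        "accuracy": correct / len(cases),
        "mean_latency_s": sum(latencies) / len(latencies),
        "max_latency_s": max(latencies),
    }
```

To mirror operational realities, the cases should come from real tickets or documents in your own environment rather than synthetic prompts.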

Best practices for deploying Manus in agentic workflows

1) Start with tightly scoped pilots to validate the most critical tasks.

2) Implement data contracts and access controls before exposing sensitive sources.

3) Build clear escalation paths for uncertain inferences.

4) Instrument end-to-end telemetry and decision-traceability.

5) Plan an iterative rollout that adds capabilities as governance and confidence grow.

By following these steps, teams can minimize risk while proving value, improving the odds that Manus becomes a reliable AI agent within agentic workflows.
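Decision traceability, mentioned in the steps above, can start as one structured log line per decision. The sketch below emits a JSON record that a telemetry stack could ingest. The field names are assumptions, not a Manus log format.

```python
import json
import time
import uuid
from typing import Optional


def trace_record(step: str, inputs: dict, decision: str, trace_id: Optional[str] = None) -> str:
    """Serialize one decision as a JSON line for an audit trail (hypothetical schema)."""
    record = {
        "trace_id": trace_id or str(uuid.uuid4()),  # groups steps of one workflow run
        "timestamp": time.time(),                   # seconds since the Unix epoch
        "step": step,
        "inputs": inputs,
        "decision": decision,
    }
    return json.dumps(record, sort_keys=True)
```

Reusing the same `trace_id` across every step of a workflow run lets an auditor reconstruct the full decision path from the log.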

Scalability, cost, and long-term maintenance

As adoption scales, maintain a modular architecture to prevent monolithic failures. Evaluate the cost implications of tool usage, API calls, and hosting resources. Establish a lifecycle for model updates, tool adapters, and governance policies to ensure consistency. Long-term maintenance hinges on disciplined versioning, continuous monitoring, and a proactive approach to security and compliance. Manus can remain cost-effective if teams curate a well-defined roadmap, prioritize reuse of components, and retire outdated integrations promptly.
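The cost drivers named above can be combined into a rough monthly estimate. This is back-of-the-envelope arithmetic under simple assumptions (flat per-call pricing and a fixed hosting fee). All inputs are placeholders to fill in from your own contracts, not actual Manus pricing.

```python
def monthly_cost(
    calls_per_day: int,
    cost_per_call: float,
    hosting_per_month: float,
    days: int = 30,
) -> float:
    """Estimate monthly spend as usage cost plus a fixed hosting fee (hypothetical model)."""
    if calls_per_day < 0 or cost_per_call < 0 or hosting_per_month < 0:
        raise ValueError("inputs must be non-negative")
    return calls_per_day * cost_per_call * days + hosting_per_month
```

Recomputing this estimate whenever a new integration is added helps show when retiring an outdated adapter would pay for itself.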

Positives

- Modular architecture with pluggable tools

- Strong emphasis on governance and safety controls

- Good fit for targeted, repeatable workflows

- Clear path to integration with existing data pipelines

What's Bad

- Less out-of-the-box breadth compared to some rivals

- Requires careful governance and monitoring to avoid risk

- Performance depends on data quality and tool availability

Selective adoption: Manus is best for targeted, governance-aware workflows

Manus excels in modular, auditable task orchestration when data access is well-scoped. It is less suitable for broad, high-stakes automation without strong guardrails. The verdict favors adoption where governance and integration maturity align with use-case scope.

Questions & Answers

Is Manus suitable for enterprise-scale workflows?

Manus can scale for enterprise workflows when governance, security, and data access controls are mature. Start with bounded use cases and incremental expansion, accompanied by robust monitoring and escalation paths.

Manus scales with governance. Start small, monitor closely, and expand as you verify safety and value.

What governance controls does Manus require?

Key controls include data access policies, auditable decision trails, role-based access, and explicit escalation rules. Document how tasks are initiated, executed, and terminated, and ensure there is a plan for incident response.

You need clear data policies, audit logs, and escalation rules to keep Manus safe and compliant.

How does Manus compare to competitors in 2026?

Manus emphasizes modularity and governance-first design, which appeals to teams prioritizing safety and extensibility. Other agents may offer broader capabilities out-of-the-box, but Manus can be tuned to specific workflows with strong integration and guardrails.

Manus shines when governance and customization matter; others may offer more out-of-the-box features.

Can Manus integrate with existing data warehouses?

Yes, Manus supports standard API-driven integrations and data connectors. The value comes from well-defined data contracts, latency guarantees, and secure data access controls.

Yes, with the right adapters and data policies.

What are common pitfalls when deploying Manus?

Common issues include overestimating autonomy, underestimating data quality needs, and insufficient guardrails. Mitigate by starting in a supervised mode, validating outputs, and iterating with governance checks.

Be careful not to over-automate; validate outputs and guardrails first.

Is Manus open-source or proprietary?

Manus is offered as a commercial product with configurable modules and enterprise support. For teams prioritizing transparency, verify licensing terms and available source components.

It's provided as a commercial product with configurable options.

Key Takeaways

- Validate governance early; guardrails are essential

- Start with tightly scoped pilots to prove value

- Prefer modular, pluggable architectures for flexibility

- Plan for end-to-end observability and escalation paths

- Benchmark against specific use cases to judge fit