Ai Agent Manus: A Practical How-To for Building AI Agents

Step-by-step guide to building AI agents with ai agent manus. Learn design, governance, testing, and deployment for scalable, agentic AI workflows in modern teams.

You will learn to design, prototype, and deploy AI agents using the ai agent manus approach. This method emphasizes modular agent components, task catalogs, and governance. Before you start, ensure you have a defined workflow, access to data sources, and a testing environment. Follow this step-by-step guide to build reliable, scalable agentic AI.

What is ai agent manus?

ai agent manus is a modular framework for engineering AI agents that collaborate with humans and other systems to complete complex tasks. By organizing capabilities into discrete modules—perception, planning, action, and governance—the approach supports reusable components and safer deployments. According to Ai Agent Ops, the ai agent manus model emphasizes clear task definitions, configurable policies, and auditable decision trails. Practically, teams use manus to assemble agents from well-scoped building blocks, then extend them as requirements evolve. For developers, this means faster iteration, better maintainability, and a documented path from idea to production.

As you work with ai agent manus, you’ll notice an emphasis on agent orchestration, memory, and tool usage. The framework helps you map business goals to automated tasks, define success criteria, and govern how agents interact with data. This foundational clarity makes it easier to scale agentic workflows across projects and teams while preserving safety and compliance. The concept is not about a single product—it’s a blueprint for composable AI systems that can grow with your business needs.

Core concepts and components

ai agent manus rests on a handful of core concepts: modular agents, task catalogs, orchestrators, and governance rails. Each module encapsulates a capability (e.g., web data extraction, natural language reasoning, or API integration) and can be recombined without rebuilding from scratch. An orchestrator coordinates task sequences and tracks data provenance, while a memory layer stores context. Governance rails (policies, role-based access, and monitoring) provide guardrails to prevent unsafe actions.

In practice, manus encourages teams to design with interfaces and contracts. Modules expose predictable inputs and outputs, enabling plug-and-play replacements. This reduces coupling and accelerates testing. Practically, you should start with a minimal viable set of modules, then add capabilities as your use cases demand. The outcome is an AI agent ecosystem that’s easier to audit, extend, and maintain.
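The idea of modules with predictable inputs and outputs can be illustrated with a small Python sketch. It is not an official manus API: the `Module` protocol, the two toy modules, and `run_pipeline` are hypothetical names chosen for illustration, and the dictionary-in, dictionary-out contract is an assumption.

```python
from dataclasses import dataclass
from typing import Any, Protocol


class Module(Protocol):
    """Hypothetical contract: every module has a name and a run() method."""

    name: str

    def run(self, payload: dict[str, Any]) -> dict[str, Any]: ...


@dataclass
class KeywordPerception:
    """Toy perception module: turns raw text into lowercase tokens."""

    name: str = "keyword_perception"

    def run(self, payload: dict[str, Any]) -> dict[str, Any]:
        return {"tokens": payload["text"].lower().split()}


@dataclass
class LengthReasoning:
    """Toy reasoning module: flags inputs with more than three tokens."""

    name: str = "length_reasoning"

    def run(self, payload: dict[str, Any]) -> dict[str, Any]:
        return {"is_long": len(payload["tokens"]) > 3}


def run_pipeline(modules: list[Module], payload: dict[str, Any]) -> dict[str, Any]:
    """Feed each module's output into the next one."""
    for module in modules:
        payload = module.run(payload)
    return payload
```

Because both modules satisfy the same contract, either one could be swapped for a different implementation without touching `run_pipeline`, which is the plug-and-play property described above.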

Defining the task catalog and agent roles

A well-defined task catalog is the backbone of ai agent manus. List all tasks your agents will perform, with explicit intents, input data, expected outputs, and acceptance criteria. For each task, assign a role (e.g., data collector, decision-maker, actuator) and specify success metrics. This catalog becomes the single source of truth for new team members and external collaborators. As you flesh out the catalog, you’ll also define how agents collaborate—who takes ownership, how tasks are handed off, and when human oversight is required. Documenting these decisions early saves time during integration and testing.

To keep things practical, organize tasks by domain (e.g., customer support, data enrichment, workflow automation) and by capability (perception, reasoning, action). Use concise templates so engineers can rapidly add new tasks without reinventing the wheel. The result is a scalable map of capabilities that supports iterative growth of the ai agent manus system.
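One way to make such a template concrete is a small typed record per task. The sketch below is an assumption about how a catalog could be represented, not a prescribed schema: `TaskSpec`, `Role`, `TaskCatalog`, and every field name are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Role(Enum):
    DATA_COLLECTOR = "data collector"
    DECISION_MAKER = "decision-maker"
    ACTUATOR = "actuator"


@dataclass(frozen=True)
class TaskSpec:
    """Hypothetical template for one catalog entry."""

    task_id: str
    domain: str          # e.g. "customer support"
    capability: str      # "perception", "reasoning", or "action"
    intent: str
    inputs: tuple[str, ...]
    outputs: tuple[str, ...]
    acceptance_criteria: tuple[str, ...]
    role: Role
    requires_human_review: bool = False


class TaskCatalog:
    """In-memory catalog that rejects duplicate or under-specified tasks."""

    def __init__(self) -> None:
        self._tasks: dict[str, TaskSpec] = {}

    def register(self, spec: TaskSpec) -> None:
        if spec.task_id in self._tasks:
            raise ValueError(f"duplicate task id: {spec.task_id}")
        if not spec.acceptance_criteria:
            raise ValueError(f"task {spec.task_id} has no acceptance criteria")
        self._tasks[spec.task_id] = spec

    def by_domain(self, domain: str) -> list[TaskSpec]:
        return [t for t in self._tasks.values() if t.domain == domain]
```

Rejecting entries without acceptance criteria at registration time is a design choice made here so that an incomplete task cannot enter the catalog unnoticed.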

Data, governance, and safety considerations

Data handling is central to ai agent manus. Define what data each module can access, how data is stored, and how long it’s retained. Implement access controls, encryption, and data minimization to reduce risk. Governance should cover model usage policies, logging requirements, and audit trails that verify decisions and actions. Safety checks—such as confirmation prompts for irreversible actions and rate limits on API calls—protect users and systems from accidental or malicious misuse.
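The two safety checks named above, confirmation for irreversible actions and rate limits on calls, can be sketched as follows. This is a minimal illustration under stated assumptions: `RateLimiter`, `guarded_action`, and the returned status strings are hypothetical, and the sliding-window limiter is one of several reasonable limiting strategies.

```python
import time
from collections import deque
from typing import Callable


class RateLimiter:
    """Allow at most max_calls within any window_seconds span (sliding window)."""

    def __init__(
        self,
        max_calls: int,
        window_seconds: float,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.max_calls = max_calls
        self.window_seconds = window_seconds
        self._clock = clock
        self._calls: deque[float] = deque()

    def allow(self) -> bool:
        now = self._clock()
        # Drop timestamps that have fallen out of the window.
        while self._calls and now - self._calls[0] >= self.window_seconds:
            self._calls.popleft()
        if len(self._calls) >= self.max_calls:
            return False
        self._calls.append(now)
        return True


def guarded_action(
    action: Callable[[], None],
    *,
    irreversible: bool,
    confirm: Callable[[], bool],
    limiter: RateLimiter,
) -> str:
    """Run action only if it is confirmed (when irreversible) and within quota."""
    if irreversible and not confirm():
        return "declined"
    if not limiter.allow():
        return "rate_limited"
    action()
    return "executed"
```

Confirmation is checked before the limiter so that a declined action does not consume quota. In a real deployment `confirm` would typically prompt a human reviewer; here it is just a callable.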

Ethical and legal considerations matter, too. Align your manus implementation with your organization’s data governance policies and industry regulations. Regularly review models and prompts for bias or drift, and maintain a changelog of updates so stakeholders can track evolving behaviors. Building a culture of transparency around agent decisions helps stakeholders trust the system and supports safer automation.

Architecture patterns for agent orchestration

Effective orchestration is a core pillar of ai agent manus. Two common patterns are centralized orchestration and decentralized, modular orchestration. Centralized orchestration provides a single coordinator that sequences tasks, while decentralized patterns distribute control across modules so agents can self-manage subtasks. A hybrid approach often works best: a central orchestrator defines the macro workflow and module-level agents handle specialized subtasks with their own local logic.

Key considerations include latency, fault tolerance, and observability. Implement asynchronous messaging (e.g., event-driven queues) to keep modules decoupled, and log rich context for traceability. Use memory layers to persist context across interactions, which improves consistency in multi-step tasks. Finally, design clear interfaces and contracts so modules can be replaced as needs change without breaking the entire system.
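As a rough illustration of asynchronous, queue-based decoupling, the sketch below uses Python's standard `asyncio.Queue`. The event shape (`type`, `trace_id`), the function names, and the `None` sentinel are assumptions made for the example; a production system would more likely use an external message broker.

```python
import asyncio
from typing import Optional


async def producer(queue: asyncio.Queue, events: list[dict]) -> None:
    """Orchestrator side: publish events, then a sentinel to signal completion."""
    for event in events:
        await queue.put(event)
    await queue.put(None)


async def consumer(queue: asyncio.Queue, handled: list[dict]) -> None:
    """Module side: process events as they arrive, keeping the trace id for logs."""
    while True:
        event: Optional[dict] = await queue.get()
        if event is None:
            break
        handled.append(
            {"type": event["type"], "trace_id": event["trace_id"], "status": "done"}
        )


async def run_workflow(events: list[dict]) -> list[dict]:
    queue: asyncio.Queue = asyncio.Queue(maxsize=10)  # bounded for backpressure
    handled: list[dict] = []
    await asyncio.gather(producer(queue, events), consumer(queue, handled))
    return handled
```

The producer and consumer share nothing but the queue, so either side can be replaced independently. Carrying a `trace_id` on every event is one simple way to keep the rich context needed for traceability.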

A practical run-through: example scenario

Imagine a manus-powered assistant that helps a product team prioritize feature requests. The task catalog includes data gathering (pull user feedback from tickets and surveys), prioritization (apply business rules and impact assessments), and reporting (generate a summary for stakeholders). The orchestrator sequences these tasks, each module contributing inputs and outputs with explicit contracts. The perception module collects data, the reasoning module evaluates options, and the action module creates the product backlog item or a summarized report. Governance rails ensure sensitive data is masked when generating reports and all steps are auditable. Over several iterations, the team refines the catalog, tunes prompts, and expands capabilities to include A/B test interpretations and release readiness checks.

This real-world scenario showcases how ai agent manus translates a vague idea into a concrete, repeatable workflow that teams can own and improve over time.
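The feature-prioritization scenario can be sketched end to end in a few functions. Everything here is illustrative: the ticket fields, the "sort by votes" business rule, and the email-masking pattern are assumptions standing in for whatever data and rules a real team would use.

```python
import re

# Deliberately simple pattern; real masking rules would be broader.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def mask_sensitive(text: str) -> str:
    """Governance rail: redact email addresses before text reaches a report."""
    return EMAIL.sub("[redacted email]", text)


def gather(tickets: list[dict]) -> list[dict]:
    """Perception: keep only the fields later stages need."""
    return [
        {"feature": t["feature"], "votes": t["votes"], "note": t["note"]}
        for t in tickets
    ]


def prioritize(items: list[dict]) -> list[dict]:
    """Reasoning: hypothetical business rule, most-requested feature first."""
    return sorted(items, key=lambda item: item["votes"], reverse=True)


def report(ranked: list[dict]) -> str:
    """Action: produce a masked, ranked summary for stakeholders."""
    lines = [
        f"{rank}. {item['feature']} ({item['votes']} votes): "
        f"{mask_sensitive(item['note'])}"
        for rank, item in enumerate(ranked, start=1)
    ]
    return "\n".join(lines)
```

Calling `report(prioritize(gather(tickets)))` chains the three stages in the order the orchestrator would sequence them.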

Testing, monitoring, and iteration

Testing is built into every manus workflow. Start with unit tests for individual modules and end-to-end tests for complete task sequences. Use sandbox data to simulate real-world inputs and verify outputs against acceptance criteria. Monitoring should cover latency, error rates, and success metrics for each task. Dashboards and alerts help teams detect drift or failures early. Iteration is facilitated by maintaining a changelog and a versioned task catalog so you can roll back if needed. Regularly update your governance rules to reflect new capabilities and risks. Continuous improvement is the goal, not a one-off deployment.
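A minimal sketch of module-level and end-to-end tests against sandbox data, using Python's built-in `unittest`, might look like this. The `normalize_ticket` module and the sandbox records are hypothetical; the point is the shape of the tests, not the module itself.

```python
import unittest


def normalize_ticket(raw: dict) -> dict:
    """Toy perception module: trims text and deduplicates lowercase tags."""
    return {
        "id": raw["id"],
        "text": raw["text"].strip(),
        "tags": sorted({tag.strip().lower() for tag in raw.get("tags", [])}),
    }


# Sandbox data standing in for real tickets.
SANDBOX_TICKETS = [
    {"id": 1, "text": "  Export to CSV please ", "tags": ["Export", "export "]},
    {"id": 2, "text": "Dark mode", "tags": []},
]


class NormalizeTicketTests(unittest.TestCase):
    def test_trims_text(self):
        result = normalize_ticket(SANDBOX_TICKETS[0])
        self.assertEqual(result["text"], "Export to CSV please")

    def test_deduplicates_tags(self):
        result = normalize_ticket(SANDBOX_TICKETS[0])
        self.assertEqual(result["tags"], ["export"])

    def test_end_to_end_keeps_every_ticket(self):
        result = [normalize_ticket(t) for t in SANDBOX_TICKETS]
        self.assertEqual([t["id"] for t in result], [1, 2])


def run_suite() -> unittest.TestResult:
    """Run the tests programmatically, e.g. from a CI hook."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(NormalizeTicketTests)
    return unittest.TextTestRunner(verbosity=0).run(suite)
```

Each assertion maps to one acceptance criterion, which keeps the tests aligned with the task catalog as it evolves.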

Scaling and maintenance

As your AI agent manus deployment grows, scale by adding modular capabilities and partitioning workloads. Use load-balanced orchestrators and parallel task execution to handle increasing demand. Maintain clear API contracts to prevent cascading failures when modules evolve. Documentation should stay current with edits to the task catalog, prompts, and governance policies. Finally, invest in ongoing education for teams and establish runbooks for common issues. The result is a robust, scalable manus ecosystem that supports sustained automation without sacrificing safety.
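Parallel task execution with failure isolation can be sketched with the standard library's `concurrent.futures`. The `run_parallel` helper and its result shape are assumptions for illustration; thread pools suit I/O-bound tasks such as API calls, while CPU-bound work would need a different executor.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable


def run_parallel(
    tasks: dict[str, Callable[[], object]],
    max_workers: int = 4,
) -> dict[str, dict]:
    """Run independent tasks concurrently; one failure does not stop the rest."""
    results: dict[str, dict] = {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {name: pool.submit(fn) for name, fn in tasks.items()}
        for name, future in futures.items():
            try:
                results[name] = {"ok": True, "value": future.result()}
            except Exception as exc:  # isolate the failure to this task
                results[name] = {"ok": False, "error": repr(exc)}
    return results
```

Capturing each exception per task, rather than letting it propagate, is one way to keep a failing module from cascading into the rest of the workflow.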

Tools & Materials

- Computing environment (local or cloud): include Python 3.11+ and Node.js 18+

- Code editor and terminal: VS Code or JetBrains with relevant extensions

- API access or sandbox data: mock data for testing; ensure data governance

- AI framework/tooling: install the latest stable version of the OpenAI SDK, LangChain, or equivalents

- Task catalog blueprint: document tasks, intents, and success criteria

- Monitoring/logging stack: Prometheus, Grafana, or built-in logs

- Security and governance checklist: access controls and data handling policies

- Testing dataset and harness: unit tests for tasks; end-to-end scenarios

Steps

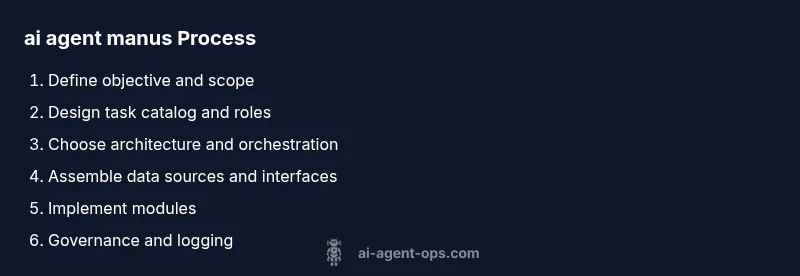

Estimated time: 2-4 hours

1. Define objective and scope

   Clarify the business goal and measurable success criteria for the AI agents. Align stakeholders on expected outcomes and boundaries.

   Tip: Document success metrics before implementation.

2. Design task catalog and roles

   List tasks with intents, inputs, outputs, and acceptance criteria. Assign agent roles (data collector, decision-maker, actuator).

   Tip: Use templates to keep definitions consistent.

3. Choose architecture and orchestration

   Decide between centralized and modular orchestration. Define interfaces and data contracts between modules.

   Tip: Prefer loosely coupled components with clear boundaries.

4. Assemble data sources and interfaces

   Identify data sources, APIs, and message formats. Implement access controls and data masking where needed.

   Tip: Mock data for safe early testing.

5. Implement agent modules

   Develop perception, reasoning, and action modules with defined inputs/outputs and error handling.

   Tip: Keep modules small and replaceable.

6. Establish governance and logging

   Put policies, prompts, and audit trails in place. Enable verbose logging for traceability.

   Tip: Log decisions and data lineage where possible.

7. Run tests with sandbox data

   Execute unit and end-to-end tests to validate module interactions and outputs.

   Tip: Automate tests to run on each change.

8. Prototype deployment in staging

   Deploy to a staging environment with real-world workloads and guardrails.

   Tip: Monitor for drift and alert on anomalies.

9. Monitor, iterate, and scale

   Analyze performance, iterate on catalog and prompts, and plan gradual scaling.

   Tip: Keep a living backlog of improvements.
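For the governance and logging step, an audit trail can start as simply as one JSON line per decision. The sketch below is a hypothetical shape, not a required format: `AuditTrail`, its field names, and the injectable `now` parameter (used to make timestamps testable) are all assumptions.

```python
import io
import json
from datetime import datetime, timezone
from typing import Optional, TextIO


class AuditTrail:
    """Append-only JSON-lines log of agent decisions and their data source."""

    def __init__(self, stream: TextIO) -> None:
        self._stream = stream

    def record(
        self,
        task_id: str,
        module: str,
        decision: str,
        source: str,
        now: Optional[datetime] = None,
    ) -> dict:
        entry = {
            "timestamp": (now or datetime.now(timezone.utc)).isoformat(),
            "task_id": task_id,
            "module": module,
            "decision": decision,
            "source": source,  # data lineage: where the input came from
        }
        self._stream.write(json.dumps(entry, sort_keys=True) + "\n")
        return entry
```

Writing to any text stream means the same class works with a file opened in append mode in staging and an `io.StringIO` buffer in tests.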

Questions & Answers

What is ai agent manus and why use it?

ai agent manus is a modular framework for constructing AI agents from reusable components. It supports scalable orchestration, governance, and auditable decision-making to deliver reliable automation.

ai agent manus is a modular framework for building AI agents from reusable parts, enabling scalable and auditable automation.

What are the essential components of manus?

Core components include perception, reasoning, action modules, an orchestrator, memory, and governance rails. Together they enable modular, testable, and safe agent workflows.

The essential pieces are perception, reasoning, action modules, an orchestrator, memory, and governance rails.

How do I start a manus project in a team?

Begin with a shared task catalog and governance policy. Establish interfaces between modules, assign roles, and begin with a pilot workflow before expanding.

Start with a shared task catalog and clear interfaces, then pilot a simple workflow to learn and adapt.

What governance considerations are needed?

Implement data access controls, auditing, prompt policies, and safety checks. Maintain a changelog and ensure compliance with relevant regulations.

Set up data controls, auditing, prompt policies, and safety checks with a clear changelog.

How should I test and monitor manus workflows?

Use unit tests for modules and end-to-end tests for workflows. Monitor latency, success rates, and data quality with dashboards and alerts.

Test modules individually and end-to-end, and monitor performance with dashboards.

Key Takeaways

- Define clear objectives and success criteria.

- Build a modular, reusable manus component set.

- Governance and safety are essential from day one.

- Test early with sandbox data and iterate.

- Scale by expanding modules and maintaining contracts.