Manus AI Agent vs ChatGPT: A Practical Side-by-Side Comparison

Compare Manus AI agent and ChatGPT across autonomy, integration, and real-world use cases. Learn which excels in automated workflows or human-like dialogue, with practical guidance for developers and leaders.

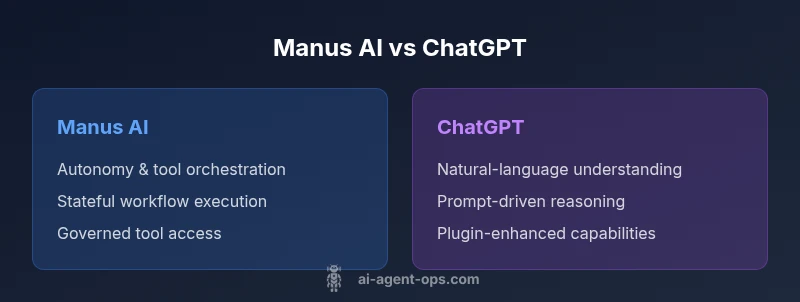

Manus AI focuses on agent-based orchestration and automated tool use, executing multi-step tasks autonomously. ChatGPT excels at natural language understanding and generation and supports flexible dialogue. For automation-heavy workflows, Manus AI is generally the stronger fit for end-to-end execution, while ChatGPT shines in conversational tasks, knowledge retrieval, and rapid content ideation. The best choice depends on your goals and governance needs.

Overview: Manus AI agent vs ChatGPT in practice

The comparison between Manus AI agent and ChatGPT is not about which model is universally better; it’s about which paradigm suits a given goal. The two represent dominant approaches in modern AI-enabled workflows: autonomous agents that can plan, select tools, and execute actions, versus conversational LLMs that generate text and reason about prompts. According to Ai Agent Ops, experimentation with both shows that Manus AI’s strength lies in orchestrating a chain of operations across systems, while ChatGPT provides rich, human-like dialogue and flexible prompt-based reasoning. For developers and leaders, the practical takeaway is to match the architecture to the task: automate with Manus AI where precision and repeatability matter, and rely on ChatGPT for dialogue, ideation, and knowledge retrieval. In this article, we examine the core differences, the tradeoffs, and a structured framework for deciding when to deploy each approach within an agentic AI workflow.

Throughout this discussion, you’ll see how the two capabilities interact, where they converge, and where governance, data privacy, and latency shape the decision. The goal is to give you a transparent view of both approaches, with concrete criteria you can apply in your own projects.

Architecture and core capabilities

Manus AI agent and ChatGPT stem from different design philosophies. Manus AI is typically built as an agent framework that can be equipped with task planners, tool adapters, and stateful memory. It emphasizes autonomy: deciding what to do next, which tools to call, and how to sequence actions to reach an objective. This makes it well-suited for automation pipelines, operational workflows, and decision-oriented tasks that require chaining multiple systems. ChatGPT, by contrast, is a large language model architected for natural language understanding and generation. It excels at conversation, content drafting, brainstorming, and knowledge synthesis, and it can be augmented with plugins or tools through controlled prompts or API integrations. In short, the first tends to be execution-focused and the second narrative-focused. In practice, the result is often a hybrid: a ChatGPT-based interface that triggers Manus-style agents to perform concrete tasks.

From a memory and context perspective, Manus AI tends to manage persistent state and tool schemas, enabling cross-session continuity in a controlled way. ChatGPT handles context within a session or through platform memory features, but longer-term memory is typically realized through external persistence and prompt engineering. For governance, Manus AI offers structured tool access and audit trails, while ChatGPT emphasizes prompt-level controls and data handling policies. On performance, Manus AI’s latency includes planning, tool calls, and execution; ChatGPT’s latency centers on generation speed and API throughput. These differences indicate which component should own which part of the workload, so that tasks are distributed to optimize for reliability and speed.
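To make the planner, tool adapter, and state pieces concrete, here is a minimal sketch of an agent loop. It is an illustration under stated assumptions, not the Manus AI API: `AgentState`, `run_agent`, `fetch_order`, and `check_inventory` are hypothetical names, and the plan is a fixed list where a real planner would choose steps dynamically.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

# All names here are hypothetical; this is not the Manus AI API.
Tool = Callable[[dict], dict]  # a tool adapter reads shared data and returns new fields


@dataclass
class AgentState:
    """State carried across the steps of one workflow."""
    objective: str
    data: dict = field(default_factory=dict)
    history: List[Tuple[str, dict]] = field(default_factory=list)


def run_agent(state: AgentState, plan: List[str], tools: Dict[str, Tool]) -> AgentState:
    """Execute each planned step in order, recording every tool call."""
    for tool_name in plan:
        if tool_name not in tools:
            raise KeyError(f"no adapter registered for tool '{tool_name}'")
        result = tools[tool_name](state.data)
        state.data.update(result)                  # later steps can see earlier results
        state.history.append((tool_name, result))  # simple record of what ran
    return state


def fetch_order(data: dict) -> dict:
    return {"sku": "A1", "qty": 2}                 # stand-in for a real API call


def check_inventory(data: dict) -> dict:
    return {"in_stock": data["qty"] <= 5}          # stand-in for a stock lookup


tools = {"fetch_order": fetch_order, "check_inventory": check_inventory}
final = run_agent(AgentState("verify order"), ["fetch_order", "check_inventory"], tools)
print(final.data)
```

Keeping the state object separate from the tools is one way to get the cross-step continuity described above while leaving each adapter easy to test in isolation.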

For organizations evaluating these paths, a key conclusion from Ai Agent Ops is that the most effective architectures blend both capabilities: an orchestration layer or agent that handles automation and tool integration, plus a conversational interface that manages human-facing interactions. This hybrid approach supports automated workstreams alongside natural language engagement, so teams can move faster while keeping clarity and control over outcomes. The following sections cover use cases, integration considerations, and practical decision criteria to help you choose between the two.

Use cases and best-fit scenarios

When choosing between Manus AI agent and ChatGPT for real-world work, match the scenario to each one’s strengths. Manus AI shines in automation-heavy contexts: ingesting data from multiple sources, authenticating with services, orchestrating multi-step workflows, and making execution decisions based on predefined rules and tool availability. In enterprise settings, it can coordinate inventory checks, order processing, customer onboarding, or compliance workflows where traceability and repeatability are paramount. ChatGPT, on the other hand, excels in tasks that require nuanced language, reasoning with uncertain data, and producing user-facing content. It’s ideal for customer support chat, knowledge base summarization, product descriptions, brainstorming, and prompt-driven experimentation. The right approach often combines both: use ChatGPT to interface with users and translate intent into actionable tasks for a Manus-controlled pipeline.

A practical pattern is to deploy ChatGPT as the front end for intent detection, then hand off to a Manus AI agent for execution. In regulated domains, the agent can enforce governance, tool access, and audit trails while preserving the natural language experience for end users. For developer teams, a hybrid design reduces risk: the high-velocity language generation remains modular, while the robust, auditable automation executes critical business processes. Across use cases, you’ll see a common thread: the most effective setups emphasize clear ownership of decisions, robust error handling, and observable metrics to measure both linguistic quality and execution reliability.
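The front-end hand-off pattern can be sketched as a small router. This is a sketch under assumptions: `detect_intent` uses keyword matching as a stand-in for a real language model call, and `Task`, `route`, and the handler names are hypothetical rather than part of either product.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional, Tuple


@dataclass
class Task:
    intent: str
    params: dict


def detect_intent(message: str) -> Optional[Task]:
    """Stand-in for an LLM call: keyword matching instead of a model."""
    text = message.lower()
    if "reset" in text and "password" in text:
        return Task("reset_password", {"raw": message})
    if "order" in text and "status" in text:
        return Task("order_status", {"raw": message})
    return None  # nothing actionable; keep the conversation going


def route(message: str, handlers: Dict[str, Callable[[Task], dict]]) -> Tuple[str, Optional[dict]]:
    """Send actionable requests to the agent layer, everything else to chat."""
    task = detect_intent(message)
    if task is None or task.intent not in handlers:
        return ("chat", None)
    return ("agent", handlers[task.intent](task))


# Hypothetical handler standing in for an automation pipeline.
handlers = {"order_status": lambda task: {"status": "shipped"}}

print(route("What is my order status?", handlers))
print(route("Tell me about your return policy", handlers))
```

Returning the layer name alongside the result keeps ownership of each decision explicit, which makes it easier to log and measure the two paths separately.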

Integration and deployment considerations

Deploying either Manus AI agent or ChatGPT requires thoughtful integration planning. Manus AI typically requires a defined toolset (APIs, plugins, databases) and a controller that determines task sequencing and error recovery. The deployment footprint includes the agent runtime, tool adapters, and a state store that preserves context across steps. Integration complexity rises with the number of tools, data sources, and security requirements. ChatGPT deployments focus on the interface, prompt design, and optional plugins or tools that expand capabilities. The primary concerns here are data privacy, access control, and plugin governance. If you mix both in an agentic AI workflow, you’ll want a layered architecture: an interface layer with strong authentication, an orchestration layer for decision logic, and a data layer with traceability. For teams, a staged rollout (prototype, pilot, scale) helps surface integration issues early. In terms of governance, ensure policy compliance, data residency, and logging standards. The decision should also account for regulatory constraints, because multi-system automation may expose cross-domain data flows that require auditing and risk assessment.

In practice, success depends on defining clear interface contracts for tool calls, establishing retries and timeouts, and enabling observability dashboards that show both task progress and natural language quality metrics. You’ll need to balance developer time against business value and put guardrails in place so agents don’t operate outside intended boundaries. A well-structured integration plan is essential to get the most value from Manus AI and ChatGPT in real-world deployments.
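One way to express an interface contract with retries and timeouts is shown below. It is a minimal sketch, assuming each tool function accepts a payload and a timeout and honors that timeout itself; `ToolContract`, `call_tool`, and the `crm.lookup` tool name are hypothetical.

```python
import time
from dataclasses import dataclass
from typing import Callable, Tuple, Type


@dataclass(frozen=True)
class ToolContract:
    """Hypothetical contract: what the caller promises about one tool."""
    name: str
    timeout_s: float
    max_attempts: int = 3
    backoff_s: float = 0.5
    retry_on: Tuple[Type[BaseException], ...] = (TimeoutError, ConnectionError)


def call_tool(contract: ToolContract, fn: Callable[[dict, float], dict],
              payload: dict, sleep: Callable[[float], None] = time.sleep) -> dict:
    """Call fn, retrying transient errors with exponential backoff."""
    last_error = None
    for attempt in range(1, contract.max_attempts + 1):
        try:
            # The tool is expected to enforce the timeout it is given.
            return fn(payload, contract.timeout_s)
        except contract.retry_on as exc:
            last_error = exc
            if attempt < contract.max_attempts:
                sleep(contract.backoff_s * 2 ** (attempt - 1))
    raise RuntimeError(
        f"{contract.name} failed after {contract.max_attempts} attempts"
    ) from last_error


# Demo with a tool that times out twice before succeeding.
attempts = {"count": 0}


def flaky_lookup(payload: dict, timeout_s: float) -> dict:
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise TimeoutError("simulated slow backend")
    return {"ok": True}


contract = ToolContract(name="crm.lookup", timeout_s=2.0)
print(call_tool(contract, flaky_lookup, {"id": 42}, sleep=lambda s: None))
```

Errors outside `retry_on` propagate immediately, so a validation failure is not retried as if it were a transient outage.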

Performance, reliability, and risk

Reliability in either approach hinges on how failures are detected, contained, and recovered. Manus AI-driven automation can suffer from cascading failures if a single tool call underperforms or a data inconsistency goes undetected. Robust design patterns such as circuit breakers, idempotent actions, and comprehensive logging help mitigate these risks. For ChatGPT-driven parts of the workflow, reliability depends on prompt design, model limits, and external tool integration. Latency matters: agent planning and tool orchestration introduce additional hops, while a pure chat interface prioritizes response speed. Risk assessment should address data leakage, prompt injection, and sandboxing gaps when chat agents trigger tool calls. Governance controls must enforce data access boundaries, retention policies, and role-based permissions. It’s crucial to separate risk domains: automated execution risk, language risk, and data governance risk. Implement test harnesses for both language and automation paths, including synthetic workloads to verify that interactions remain within defined boundaries.
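Two of the patterns named above, circuit breakers and idempotent actions, can be sketched in a few lines. This is an illustrative sketch rather than a production implementation; `CircuitBreaker`, `CircuitOpenError`, and `IdempotentExecutor` are hypothetical names, and results are cached in memory only.

```python
import time


class CircuitOpenError(RuntimeError):
    """Raised when calls are being skipped because the circuit is open."""


class CircuitBreaker:
    def __init__(self, failure_threshold=3, reset_after_s=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_after_s = reset_after_s
        self.clock = clock
        self.failures = 0
        self.opened_at = None

    def call(self, fn, *args, **kwargs):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after_s:
                raise CircuitOpenError("circuit open; skipping call")
            self.opened_at = None          # half-open: allow one trial call
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            raise
        self.failures = 0                  # a success closes the circuit
        return result


class IdempotentExecutor:
    """Run an action at most once per idempotency key."""

    def __init__(self):
        self._results = {}

    def run(self, key, fn, *args, **kwargs):
        if key in self._results:
            return self._results[key]      # replay the stored result
        result = fn(*args, **kwargs)
        self._results[key] = result
        return result


executor = IdempotentExecutor()
created = []
executor.run("order-123", created.append, "account")
executor.run("order-123", created.append, "account")  # retried, not repeated
print(created)
```

The breaker stops a failing tool from being hammered by every downstream step, while the idempotency key makes a retried step safe to repeat.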

Monitoring should be continuous. Track task completion rates, tool success rates, and user satisfaction with conversational interactions. Use blue/green or canary deployments for agent upgrades, and maintain rollback capabilities for critical automations. Finally, consider incident response readiness: define who can intervene, how to halt agents, and how to preserve evidence for audits. When designed with strong safeguards, a combined Manus AI and ChatGPT architecture becomes a reliable backbone for modern AI-enabled workflows.
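As a rough sketch of the first two metrics, the function below computes task completion rate and tool success rate from per-task records. The `TaskRecord` shape and the sample values are assumptions made for illustration; they are not measurements from any real deployment.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class TaskRecord:
    """Hypothetical per-task record emitted by the orchestration layer."""
    completed: bool
    tool_calls: int
    tool_failures: int


def summarize(records: List[TaskRecord]) -> Dict[str, float]:
    total = len(records)
    calls = sum(r.tool_calls for r in records)
    failures = sum(r.tool_failures for r in records)
    return {
        "task_completion_rate": sum(r.completed for r in records) / total if total else 0.0,
        "tool_success_rate": (calls - failures) / calls if calls else 0.0,
    }


# Made-up sample data, for illustration only.
sample = [
    TaskRecord(completed=True, tool_calls=4, tool_failures=0),
    TaskRecord(completed=True, tool_calls=3, tool_failures=1),
    TaskRecord(completed=False, tool_calls=3, tool_failures=2),
    TaskRecord(completed=True, tool_calls=2, tool_failures=0),
]
print(summarize(sample))
```

Tracking the two rates separately helps distinguish tasks that fail outright from tasks that complete only after tool-level retries.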

Pricing and value considerations

Pricing for both Manus AI agent and ChatGPT typically combines compute, API usage, and tooling costs. Manus AI deployments often incur costs related to the agent runtime, the number of tool integrations, and the volume of automated executions. ChatGPT pricing generally scales with API usage for text generation and any plugin or tool usage, with predictable per-token or per-call charges. In realistic planning, you’ll want to consider total cost of ownership: development time, maintenance overhead, monitoring, and governance tooling. For automation-first teams, the value comes from reduced manual effort, faster cycle times, and improved consistency across processes. For chat-centric initiatives, value comes from faster content creation, improved user experience, and scalable knowledge access. Depending on workload, some organizations find a hybrid approach cost-effective: use ChatGPT for interface and ideation, and Manus AI to drive execution with auditable workflows. When evaluating, estimate the cost of tool calls, data egress, and the overhead of orchestrating multi-step tasks. Emphasize value indicators such as time saved, error rate reductions, and improvements in throughput rather than chasing absolute numbers.
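A back-of-the-envelope estimate of these cost components might look like the sketch below. Every price and volume in the example is a placeholder chosen for easy arithmetic, not a real rate from any vendor; substitute figures from your own contracts and usage logs.

```python
def monthly_cost(tokens_in: int, tokens_out: int,
                 price_in_per_1k: float, price_out_per_1k: float,
                 tool_calls: int, price_per_tool_call: float,
                 fixed_costs: float = 0.0) -> float:
    """Sum usage-based language model costs, tool-call costs, and fixed overhead."""
    llm = tokens_in / 1000 * price_in_per_1k + tokens_out / 1000 * price_out_per_1k
    tools = tool_calls * price_per_tool_call
    return llm + tools + fixed_costs


def net_monthly_value(hours_saved: float, hourly_rate: float, cost: float) -> float:
    """Value of time saved minus what the system costs to run."""
    return hours_saved * hourly_rate - cost


# Placeholder inputs, for illustration only.
cost = monthly_cost(
    tokens_in=2_000_000, tokens_out=500_000,
    price_in_per_1k=0.001, price_out_per_1k=0.002,
    tool_calls=10_000, price_per_tool_call=0.01,
    fixed_costs=200.0,
)
print(round(cost, 2), round(net_monthly_value(20, 50.0, cost), 2))
```

Separating the usage-based terms from fixed overhead makes it easier to see which component grows as automated executions scale.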

Ai Agent Ops’s perspective is that pricing strategies should align with governance needs and ROI expectations. Seek scenarios where automation reduces high-friction manual steps and where conversations lead to measurable improvements in customer outcomes. In practice, a pilot project can help quantify both the linguistic and operational value of each approach, enabling a disciplined decision about scaling.

Practical decision framework for teams

A structured decision framework helps teams choose between Manus AI agent and ChatGPT for a given project. Start with problem framing: is the core objective automation, dialogue, or a blend? Map tasks to capabilities: identify which steps require tool orchestration and which require natural language reasoning. Create evaluation criteria: reliability, latency, governance, and user satisfaction. Assess risk tolerance and compliance constraints. Develop a pilot plan with success metrics: completion rate of automated tasks, average time saved per workflow, and user satisfaction with conversational interfaces. Decide on an architecture that keeps responsibilities clear: an automation layer (Manus AI) handles execution, a conversational layer (ChatGPT) handles user interaction, and an integration layer ensures data governance and security. Finally, plan for change management: provide training for developers, establish coding and testing standards for agents, and define escalation paths when failures occur. By following this framework, teams can accelerate adoption while maintaining control over outcomes.
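The evaluation criteria can be turned into a simple weighted score, as in the sketch below. The weights and the 1-to-5 scores are invented for illustration and describe a hypothetical project; they are not benchmark results for either product.

```python
from typing import Dict, Tuple

# Hypothetical weights over the four criteria; they sum to 1.0.
WEIGHTS = {"reliability": 0.3, "latency": 0.2, "governance": 0.3, "user_satisfaction": 0.2}


def weighted_score(scores: Dict[str, float], weights: Dict[str, float]) -> float:
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(weights[c] * scores[c] for c in weights)


def recommend(options: Dict[str, Dict[str, float]],
              weights: Dict[str, float]) -> Tuple[str, Dict[str, float]]:
    """Return the highest-scoring option along with all totals."""
    totals = {name: weighted_score(s, weights) for name, s in options.items()}
    return max(totals, key=totals.get), totals


# Illustrative team scores (1 = poor, 5 = strong) for one hypothetical workflow.
options = {
    "agent_first": {"reliability": 4, "latency": 2, "governance": 5, "user_satisfaction": 3},
    "chat_first": {"reliability": 3, "latency": 4, "governance": 2, "user_satisfaction": 5},
}
best, totals = recommend(options, WEIGHTS)
print(best, totals)
```

The value of the exercise is less the final number than the discussion it forces about which criteria matter most for the project at hand.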

Getting started: pilot projects and governance

To begin a pilot, start with a focused workflow that clearly benefits from automation and conversational capability. Example pilot: streamline a customer onboarding flow that uses ChatGPT to gather needs and confirm details, then hands off to a Manus AI agent to perform account creation, data validation, and provisioning. Governance should define who can modify tool schemas, approve new integrations, and approve agent autonomy levels. Establish guardrails, such as limiting tool access to approved endpoints, ensuring data minimization, and enforcing audit trails for all automated actions. Measure outcomes with a simple dashboard: time-to-completion, error rates, and user satisfaction. As teams iterate, gradually increase autonomy in safe, controlled, and auditable steps. The overall approach is to deploy the right mix of agents and conversations, validate against real business goals, and scale only after achieving reliable, measurable gains.
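The guardrails described here (approved endpoints, data minimization, audit trails) can be sketched as a thin wrapper around outbound calls. This is a minimal sketch under assumptions: `guarded_call`, `GuardrailViolation`, the `crm.example` host, and the `send` callback are hypothetical, and a real deployment would write the audit log to durable storage.

```python
import time
from typing import Callable, List, Set
from urllib.parse import urlparse


class GuardrailViolation(PermissionError):
    """Raised when an agent tries to reach an endpoint that is not approved."""


def guarded_call(url: str, action: str, payload: dict,
                 send: Callable[[str, dict], dict],
                 approved_hosts: Set[str], audit_log: List[dict],
                 now: Callable[[], float] = time.time) -> dict:
    host = urlparse(url).hostname
    allowed = host in approved_hosts
    # Log every attempt, recording field names only (not values) to limit data exposure.
    audit_log.append({
        "ts": now(),
        "action": action,
        "host": host,
        "fields": sorted(payload),
        "allowed": allowed,
    })
    if not allowed:
        raise GuardrailViolation(f"{host} is not an approved endpoint")
    return send(url, payload)


approved = {"crm.example"}
log: List[dict] = []
fake_send = lambda url, payload: {"status": "created"}  # stand-in for an HTTP client

result = guarded_call("https://crm.example/accounts", "create_account",
                      {"email": "a@example.com", "plan": "basic"},
                      fake_send, approved, log)
print(result, log[0]["fields"])
```

Because blocked attempts are logged before the exception is raised, the audit trail also captures what the agent tried to do, not only what it succeeded in doing.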

Comparison

| Feature | Manus AI agent | ChatGPT |
|---|---|---|
| Autonomy & tool use | High autonomy with structured tool calls and state management | Low autonomy; mainly prompt-driven with optional plugins |
| Context window / memory | Stateful across steps with defined memory for workflows | Session-limited context; memory typically via external storage |
| Integration footprint | Requires tool adapters, orchestration logic, and data interfaces | Primarily API/prompt integration with plugins |
| Customization & control | High: customizable workflows, governance, and tool schemas | Moderate: prompt design and plugin integration with some control |
| Data privacy & governance | Explicit access controls, auditing, and policy enforcement | Depends on provider; governance features vary by platform |
| Pricing & scaling | Costs scale with tool calls, executions, and orchestration overhead | Pricing scales with text-generation usage and plugin calls |
| Best for | Automation-first workflows, multi-step tasks, and enterprise-scale operations | Conversational interfaces, knowledge work, and rapid ideation |

Positives of the Manus AI agent approach

- Sharper automation and workflow orchestration

- Better traceability and auditability for automated tasks

- Flexible governance over tool usage and data access

- Strong separation between language and execution layers

- Scales well for enterprise automation with repeatable outcomes

Drawbacks of the Manus AI agent approach

- Steeper learning curve and more upfront design

- Higher initial setup and maintenance effort

- Latency can grow with multi-step executions

- Requires robust monitoring to prevent drift

Manus AI leads in automation; ChatGPT leads in conversation

Choose Manus AI for automation- and integration-heavy workflows. Choose ChatGPT for natural-language tasks and flexible dialogue. For many teams, a hybrid approach delivers the strongest overall ROI.

Questions & Answers

What is Manus AI and how does it differ from ChatGPT?

Manus AI refers to an agent framework designed to orchestrate tools and automate multi-step workflows. ChatGPT is a general-purpose language model optimized for natural language tasks. The key difference is that Manus AI emphasizes execution, while ChatGPT emphasizes language and reasoning within prompts.

Manus AI is an automation engine; ChatGPT is a language model. Manus AI handles tool-based tasks, whereas ChatGPT handles talking and writing.

Can Manus AI replace ChatGPT for all tasks?

No. Manus AI excels at automation and tool orchestration, while ChatGPT shines at conversational tasks, ideation, and content generation. In practice, many teams blend both to leverage automation with natural-language interfaces.

No—use Manus for automation and ChatGPT for conversation; many projects combine both.

Which is easier to adopt for a development team?

ChatGPT is typically easier to adopt initially because it requires less upfront architecture. Manus AI requires designing tool schemas, state management, and integration points, but pays off with stronger automation and governance.

ChatGPT is easier to start with; Manus AI takes more upfront design but pays off in automation.

How should one approach data privacy in Manus AI agent and ChatGPT projects?

Both require careful data governance. For Manus AI, enforce strict access controls, auditable tool usage, and data minimization in automation. For ChatGPT, ensure compliance through prompt design, data handling policies, and opting for on-prem or restricted deployments when needed.

Govern automation with strict access controls and audits; control prompts and data flow for conversations.

What metrics matter for ROI when choosing between the two?

Key metrics include time saved, error rate reductions, task completion rate, user satisfaction with interactions, and total cost of ownership. A hybrid approach can amplify ROI by combining fast conversational interfaces with reliable automation.

Track time savings, accuracy, and costs to measure ROI; pilot first.

Key Takeaways

- Match task type to capability: automation vs conversation

- Use a hybrid architecture for best results

- Prioritize governance and observability in automation

- Pilot before scaling to quantify ROI

- Design for auditable, controllable execution