AI Agent Like Augment: A Comparative Guide

An analytical side-by-side look at ai agent like augment vs traditional automation, covering capabilities, governance, costs, and deployment best practices for developers, product teams, and business leaders.

An ai agent like augment is best suited for knowledge-work tasks that require context, learning, and adaptivity. In a two-option comparison with traditional scripted automation, it delivers faster decision-making and greater flexibility, but requires governance, data quality, and ongoing monitoring. The right choice depends on balancing speed, risk, and integration effort.

What is an ai agent like augment?

An ai agent like augment is an autonomous software agent that combines perception, reasoning, and action to augment human work. It uses models to understand context, plan steps, and execute tasks with limited human intervention. Unlike traditional automation, which follows fixed rules, an ai agent like augment can interpret signals, learn from feedback, and adjust its behavior over time. For developers, this shifts system design from linear pipelines to agent-centric architectures where decision making and action occur in a loop. This concept sits at the heart of agentic AI and enables smarter automation across product, engineering, and business operations. In practice, organizations combine tools, data services, and policies to build a responsive agent that can triage requests, assemble workflows, and monitor outcomes while staying aligned with governance standards. Ai Agent Ops emphasizes that such agent like augment capabilities unlock faster experimentation and closer alignment with strategic goals.

Why it matters in modern automation

In modern automation, teams increasingly rely on ai agent like augment to handle uncertain tasks and evolving requirements. Traditional scripted automation excels at repeatable, rule-based processes, but it struggles with ambiguity and changing data. Agent like augment introduces context-aware decision making, learns from outcomes, and adjusts flows without recoding. This shift reduces handoffs and speeds up delivery cycles while enabling teams to codify tacit knowledge into reusable agents. For product leaders, the promise is faster time to value and more resilient operations. For developers, it creates new design patterns such as agent orchestration and stateful plans. For business leaders, the key question is value versus risk, because agent like augment systems can drift if not governed properly. Ai Agent Ops notes that the best outcomes come from a clear governance model combined with robust data practices and human-in-the-loop oversight.

Core capabilities and limits

An ai agent like augment relies on a loop of perception, reasoning, and action. Perception collects signals from apps, data stores, and human inputs. Reasoning uses models to formulate plans or parallel tasks and select next actions. Action executes tasks through APIs, automation flows, or direct integrations. This trio enables complex workflows such as triaging incidents, drafting responses, or assembling multi-step processes. However, limits exist. Performance depends on data quality, model accuracy, and infrastructure reliability. Without good data, agents make poor decisions and governance becomes difficult. There is also a risk of over-reliance, where the agent stops surfacing edge cases to humans. Teams should design guardrails, maintain transparent logs, and implement safety nets. When done well, agent like augment can reduce cognitive load and improve consistency across teams, while preserving human oversight and accountability. Ai Agent Ops stresses the importance of incremental adoption and clear success criteria.
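The perception, reasoning, and action loop described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a reference implementation: the `Agent` class, the `rule_based_reasoner` function, and the action names are all hypothetical, and the reasoner is a plain keyword check standing in for a model call.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Agent:
    """Toy perceive-reason-act loop; every name here is illustrative."""
    perceive: Callable[[], List[str]]           # gathers signals (apps, data, people)
    reason: Callable[[List[str]], List[str]]    # turns signals into planned actions
    act: Callable[[str], str]                   # executes one action, returns a result
    log: List[Dict] = field(default_factory=list)

    def step(self) -> List[str]:
        signals = self.perceive()
        plan = self.reason(signals)
        results = [self.act(action) for action in plan]
        # Keep a transparent record of what the agent saw, planned, and did.
        self.log.append({"signals": signals, "plan": plan, "results": results})
        return results


def rule_based_reasoner(signals: List[str]) -> List[str]:
    # Stand-in for a model call: anything unrecognized is escalated to a human.
    plan = []
    for signal in signals:
        if "outage" in signal:
            plan.append(f"page_oncall:{signal}")
        elif "question" in signal:
            plan.append(f"draft_reply:{signal}")
        else:
            plan.append(f"escalate_to_human:{signal}")
    return plan


agent = Agent(
    perceive=lambda: ["outage in checkout", "billing question", "odd request"],
    reason=rule_based_reasoner,
    act=lambda action: f"done({action})",
)
results = agent.step()
```

The default branch that escalates unknown signals is one simple way to keep edge cases visible to humans, and the `log` list is the kind of transparent record the paragraph above recommends.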

Use cases across industries

Product and engineering teams often deploy ai agent like augment to accelerate roadmaps. In software development, agents can prioritize work items, auto-generate test cases, and orchestrate builds. Customer support agents can triage inquiries, collect context, and route to humans when needed. Marketing and data science teams use agents to synthesize insights from disparate data sources, propose experiments, and monitor outcomes. Operations teams apply agents to monitor supply chains, detect anomalies, and adjust schedules. Financial services use cases include risk assessment and policy enforcement based on streaming data. Across all sectors, the pattern is agent like augment acting as a collaborative partner that augments human decision making rather than replacing it. Ai Agent Ops highlights that successful adoption relies on outcome-oriented use cases and measurable milestones.

Architectural patterns for integration

There are several patterns for integrating ai agent like augment into existing ecosystems. The embedded pattern places the agent inside a core application to handle tasks locally with low latency and strong data privacy. The orchestrated pattern uses a central agent manager to coordinate multiple agents across services, enabling complex workflows and cross-domain reasoning. The cloud-hosted pattern provides scalable compute and access to large models but requires attention to data governance and network reliability. Each pattern has trade-offs around latency, control, and observability. Teams should map the desired outcomes to an architectural pattern that aligns with security policies and regulatory constraints. Ai Agent Ops recommends starting with a small orchestrator setup to test end-to-end flows before scaling to multi-agent orchestration.
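To make the orchestrated pattern concrete, here is a small sketch of a central manager that routes tasks to registered agents and records a trace for observability. The `Orchestrator` class, the task types, and the lambda "agents" are assumptions made for illustration. A real deployment would call services or models instead.

```python
from typing import Callable, Dict, List, Tuple

Handler = Callable[[str], str]


class Orchestrator:
    """Central manager that routes tasks to registered agents (illustrative)."""

    def __init__(self) -> None:
        self._agents: Dict[str, Handler] = {}
        # Observability: one (task_type, payload, result) entry per dispatch.
        self.trace: List[Tuple[str, str, str]] = []

    def register(self, task_type: str, handler: Handler) -> None:
        self._agents[task_type] = handler

    def dispatch(self, task_type: str, payload: str) -> str:
        handler = self._agents.get(task_type)
        if handler is None:
            # No agent owns this task type, so surface it instead of guessing.
            result = f"unrouted: {payload}"
        else:
            result = handler(payload)
        self.trace.append((task_type, payload, result))
        return result

    def run_workflow(self, steps: List[Tuple[str, str]]) -> List[str]:
        return [self.dispatch(task_type, payload) for task_type, payload in steps]


orch = Orchestrator()
orch.register("triage", lambda p: f"triaged[{p}]")
orch.register("summarize", lambda p: f"summary[{p}]")
outputs = orch.run_workflow(
    [("triage", "ticket 1"), ("summarize", "ticket 1"), ("refund", "ticket 1")]
)
```

Starting with a single orchestrator like this keeps the end-to-end flow easy to inspect before more agents are added.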

Data governance and ethics

Agent like augment depends on high quality data and clear governance. Data provenance helps trace how inputs influence decisions and who is responsible for outcomes. Privacy controls, access policies and encryption protect sensitive information. Transparency about model behavior and limitations builds trust with users and stakeholders. Ethical considerations include bias detection, safety nets for harmful outputs and robust testing across scenarios. Organizations should implement guardrails that enforce policy constraints, enable human in the loop when necessary and document decision rationales. Ai Agent Ops emphasizes that governance is not a bolt on but a foundational capability in agent like augment implementations.
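A guardrail that enforces policy constraints, hands off to a human when needed, and records a rationale might look like the sketch below. The blocked action, the confidence threshold of 0.8, and the `Decision` structure are hypothetical values chosen for the example, not recommendations.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    action: str
    allowed: bool
    needs_human: bool
    rationale: str   # documented reason, kept for audits


BLOCKED_ACTIONS = {"delete_customer_data"}   # hypothetical policy constraint
REVIEW_THRESHOLD = 0.8                       # hypothetical confidence cutoff


def apply_guardrails(action: str, confidence: float) -> Decision:
    # Hard policy constraints are checked first and never reach a human queue.
    if action in BLOCKED_ACTIONS:
        return Decision(action, False, False, "blocked by policy")
    # Low-confidence actions are held for human review rather than executed.
    if confidence < REVIEW_THRESHOLD:
        reason = f"confidence {confidence:.2f} below {REVIEW_THRESHOLD}"
        return Decision(action, False, True, reason)
    return Decision(action, True, False, "within policy and confidence threshold")


blocked = apply_guardrails("delete_customer_data", 0.99)
held = apply_guardrails("send_reply", 0.50)
approved = apply_guardrails("send_reply", 0.90)
```

Returning the rationale with every decision, including approvals, is what makes the record useful for tracing outcomes later.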

How learning works in ai agents

Learning in ai agents like augment can be offline, online or hybrid. Offline learning uses historical data to refine models and update decision policies. Online learning adapts models based on fresh feedback signals, which accelerates improvement but requires monitoring for drift. Fine tuning enables tailoring generic models to domain specifics, increasing relevance and accuracy. It is critical to maintain versioned models, track performance, and revert changes if drift undermines reliability. In practice, teams pair learning with evaluation dashboards and human oversight to ensure responsible behavior. Ai Agent Ops notes that iterative improvement is essential to avoid stagnation and drift.
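The advice to keep versioned models and revert when drift undermines reliability can be illustrated with a tiny in-memory registry. The class names, the accuracy figures, and the 0.05 tolerance are assumptions for the sketch. Production systems would typically rely on a dedicated model registry and richer drift metrics.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class ModelVersion:
    name: str
    baseline_accuracy: float   # accuracy measured when the version was released


@dataclass
class ModelRegistry:
    versions: List[ModelVersion] = field(default_factory=list)
    max_drop: float = 0.05     # hypothetical tolerated drop before reverting

    def promote(self, version: ModelVersion) -> None:
        self.versions.append(version)

    @property
    def active(self) -> Optional[ModelVersion]:
        return self.versions[-1] if self.versions else None

    def check_and_rollback(self, live_accuracy: float) -> bool:
        """Revert to the previous version if live accuracy drifts too far
        below the active version's baseline. Returns True on rollback."""
        current = self.active
        if current is None or len(self.versions) < 2:
            return False   # nothing to roll back to
        if current.baseline_accuracy - live_accuracy > self.max_drop:
            self.versions.pop()
            return True
        return False


registry = ModelRegistry()
registry.promote(ModelVersion("v1", 0.90))
registry.promote(ModelVersion("v2", 0.92))
rolled_back = registry.check_and_rollback(live_accuracy=0.80)
```

In this run the live accuracy falls well below the baseline for `v2`, so the registry reverts to `v1`.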

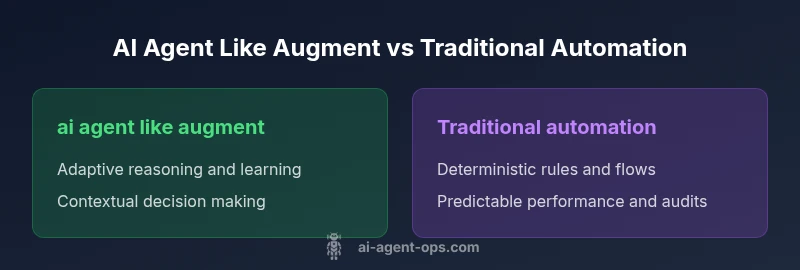

Comparing with traditional automation

Traditional automation follows explicit rules and deterministic flows that are easy to test and audit. It excels at repeatable tasks with clear boundaries and low variability. An ai agent like augment brings flexibility, context awareness, and the ability to adapt to new patterns without rewriting code. The trade-offs include greater complexity, higher data dependencies, and more governance overhead. In practice, the best results often come from a hybrid approach that assigns routine, deterministic work to scripted automation while delegating uncertain, high-value decisions to agent like augment systems. Ai Agent Ops recommends validating outcomes with clear success metrics and staged rollouts.
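One way to picture the hybrid approach is a router that tries deterministic rules first and only falls back to an agent when no rule matches. The rule table, the request strings, and the `agent_fallback` placeholder are hypothetical. The fallback stands in for a model-backed agent call.

```python
from typing import Callable, Dict, Tuple

# Deterministic rules: exact-match lookups that are easy to test and audit.
SCRIPTED_RULES: Dict[str, str] = {
    "reset_password": "send_reset_link",
    "invoice_copy": "email_latest_invoice",
}


def agent_fallback(request: str) -> str:
    # Placeholder for a model-backed agent call (hypothetical).
    return f"agent_plan_for:{request}"


def route(request: str, agent: Callable[[str], str] = agent_fallback) -> Tuple[str, str]:
    """Return (path, action), where path records which system handled the request."""
    action = SCRIPTED_RULES.get(request)
    if action is not None:
        return ("scripted", action)
    return ("agent", agent(request))


routine = route("reset_password")
ambiguous = route("customer is upset about renewal")
```

Recording which path handled each request makes it straightforward to measure how much work each side of the hybrid actually carries during a staged rollout.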

Security privacy and compliance

Agent like augment introduces new surface areas for security concerns including model exposure, data leakage and access control across distributed services. Organizations should enforce strict authentication, authorization and audit logging across all integration points. Data minimization and encryption reduce risk when data traverses multiple systems. Regular security assessments, penetration testing and safe deployment practices help prevent misconfigurations. Compliance requirements depend on industry and geography but generally include privacy laws, data retention rules and governance reporting. Transparent incident response plans and clear ownership reduce confusion during incidents. Ai Agent Ops stresses that security by design is essential for agent like augment programs.

Operational impact and team workflows

Adopting ai agent like augment shifts collaboration between humans and machines. Teams reallocate cognitive load from routine decision making to monitoring, evaluation and optimization. New roles emerge such as agent product owners, governance leads and data stewards. Cross functional teams must agree on interfaces, SLAs and escalation paths. Observability tooling becomes essential to understand agent behavior and track outcomes. Training and change management are critical to reduce resistance and ensure adoption. When properly integrated, agents free up human experts to tackle strategic problems while preserving essential oversight. Ai Agent Ops observes that organizational readiness is as important as technical readiness.

Roadmap and maturity

Maturity models typically include pilot experiments, scaled pilots, and full production deployments. Early pilots focus on a narrow use case with measurable value and controlled risk. Scaling involves multi domain use cases, governance improvements and stronger integration with data pipelines. Full production requires robust monitoring, drift management and clear ownership. A staged approach helps avoid misalignment and negative ROI. Organizations should define success criteria and exit criteria for each stage. Ai Agent Ops notes that a pragmatic roadmap reduces uncertainty and speeds up learning while maintaining safety.

Best practices for deployment and maintenance

Start small with a well defined use case and end to end success criteria. Establish governance policies, data handling norms and security controls from day one. Build reusable agent templates, with structured prompts, decision boundaries and logging. Invest in monitoring dashboards that reveal performance, drift and user impact. Create feedback loops that incorporate human review and policy updates. Plan for ongoing maintenance including model retraining, data quality checks and dependency management. By design, agent like augment deployments should be incremental, auditable and resilient. Ai Agent Ops recommends documenting assumptions and outcomes to support future iterations.
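A reusable agent template with a structured prompt, a decision boundary, and logging could be sketched as follows. The `AgentTemplate` class, the prompt wording, and the action names are illustrative assumptions. Only the standard library `string.Template`, `dataclasses`, and `logging` modules are used.

```python
import logging
from dataclasses import dataclass
from string import Template

logging.basicConfig(level=logging.INFO)
logger = logging.getLogger("agent_template")


@dataclass(frozen=True)
class AgentTemplate:
    name: str
    prompt: Template              # structured prompt with named slots
    allowed_actions: frozenset    # decision boundary: actions this agent may take

    def render(self, **slots: str) -> str:
        # substitute() raises KeyError if a slot is missing, so gaps fail loudly.
        text = self.prompt.substitute(**slots)
        logger.info("template=%s rendered prompt", self.name)
        return text

    def permits(self, action: str) -> bool:
        ok = action in self.allowed_actions
        logger.info("template=%s action=%s permitted=%s", self.name, action, ok)
        return ok


support = AgentTemplate(
    name="support_triage",
    prompt=Template("Classify the ticket from $customer: $ticket"),
    allowed_actions=frozenset({"route_to_billing", "route_to_human"}),
)
prompt_text = support.render(customer="Acme", ticket="double charge")
can_refund = support.permits("issue_refund")
```

Freezing the dataclass keeps a template from being modified after review, which supports the goal of auditable deployments.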

Vendor selection and cost models

When comparing vendors for ai agent like augment, evaluate model access, data integration capabilities and governance features. Look for transparent licensing, clear service level expectations and robust security controls. Cost models vary from usage based pricing to tiered subscriptions and enterprise agreements; avoid hidden fees by demanding itemized pricing. Consider total cost of ownership across data pipelines, compute resources, and governance tooling. Plan tests and phase gate criteria to verify value before scale. Ai Agent Ops advises stakeholders to assess vendor roadmaps and support quality to ensure long term viability.

Future trends and the 2026 horizon

The next waves of agent like augment emphasize multi agent orchestration, memory, and chain of thought style planning. Agents will collaborate with human teams through shared dashboards and explainable reasoning. Regulatory frameworks will mature around data lineage and drift management, reinforcing responsible use. We expect greater integration with enterprise data fabrics and broader support across industries. The 2026 horizon favors architectures that balance flexibility with strong governance and safety nets. Ai Agent Ops anticipates continued experimentation, open standardization and practical guidance for teams implementing agent like augment.

Comparison

| Feature | ai agent like augment | Traditional scripted automation |
|---|---|---|
| Decision making | Adaptive, context aware | Deterministic, rule based |
| Learning capability | Self improving, feedback driven | Static and fixed |
| Integration effort | Moderate to high | Low to moderate |
| Data requirements | Medium to high data needs | Minimal data needs |
| Governance and auditability | Requires governance and logs | Easier to audit due to explicit rules |
| Costs | Potentially higher upfront, scalable | Lower upfront, ongoing maintenance |
| Best for | Complex, dynamic tasks with evolving context | Deterministic, repeatable processes |

Positives

- Faster handling of uncertain tasks

- Scales cognitive workloads across teams

- Improved consistency and knowledge capture

- Reduces time to value

- Enables cross functional collaboration

What's Bad

- Higher integration and governance overhead

- Requires good data quality and monitoring

- Potential drift and model risk

- Vendor lock-in and complexity

ai agent like augment is the better fit for complex, adaptive workflows, while traditional automation remains strong for deterministic, repeatable processes.

For teams that prioritize adaptability and learning, choose agent like augment; for those needing strict determinism and low variability, scripted automation may be preferable.

Questions & Answers

What is an ai agent like augment?

An ai agent like augment is an autonomous software entity that combines perception, reasoning and action to augment human work. It can learn from feedback, adapt to new contexts and operate across multiple systems. This agentic approach enables smarter automation beyond fixed rules.

An ai agent like augment is an autonomous assistant that learns and adapts while coordinating tasks across systems.

How does it differ from traditional automation?

Traditional automation relies on explicit rules and deterministic flows. An ai agent like augment adds context aware decision making, learning from outcomes, and the ability to adjust strategies without code changes, which increases flexibility but also governance needs.

It adds learning and context awareness, which makes it more flexible but also requires stronger governance.

What are common use cases?

Common use cases include incident triage, intelligent routing, automated research and synthesis, dynamic workflow orchestration, and feedback driven optimization across product, engineering and operations. Use cases typically involve uncertain or evolving requirements.

Use cases span triage, routing, research, and dynamic workflows where context matters.

What governance and compliance should I plan for?

Plan for data provenance, access controls, logging, drift monitoring and human in the loop where safety is critical. Define ownership, escalation paths and policy enforcement to ensure accountability and regulatory compliance.

Establish data provenance, logs and clear ownership with human oversight when needed.

What challenges should I expect during deployment?

Expect data quality issues, integration complexity, drift risk and the need for ongoing monitoring. Start with a small pilot, align on success metrics, and incrementally scale while maintaining governance and observability.

Data quality and governance are key risks; pilot first, then scale with oversight.

How do I start an implementation?

Begin with a bounded, high-value use case, assemble cross-functional stakeholders, define interfaces and data contracts, and set up observability. Iterate in sprints with measurable milestones and clear exit criteria.

Pick a focused use case, align teams, and iterate with clear milestones.

Key Takeaways

- Pilot with a bounded use case to learn quickly

- Invest in data governance before scale

- Balance automation types based on complexity

- Define clear success metrics and exit criteria

- Plan for ongoing monitoring and drift management