What is an AI Agent vs LLM? A Practical Comparison

Learn the differences between AI agents and large language models (LLMs), including definitions, autonomy, data handling, costs, use cases, and governance considerations to choose the right approach for automation projects.

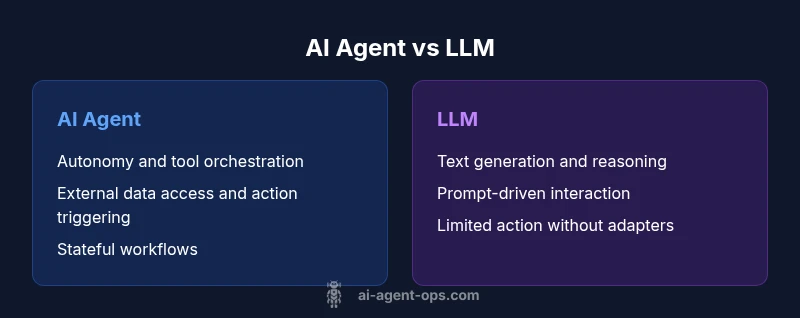

What is an AI agent vs LLM? In short, an AI agent is a system that can autonomously plan, decide, and act by invoking tools and data sources to meet goals, while an LLM is a powerful language model that excels at understanding and generating text from prompts. For many teams, the best architecture blends both: an LLM provides reasoning and natural language capabilities, and an agent orchestrates actions and integrations to achieve concrete outcomes. As of 2026, this agentic AI pattern is a common blueprint for smarter automation and safer operation.

What is an AI Agent vs LLM? Framing the question

In practical AI engineering, the question "what is an AI agent vs LLM?" anchors a fundamental design choice: do you want a system that can act autonomously in the real world, or a language-focused assistant that shines at text-based tasks? An AI agent typically combines a reasoning module (often powered by an LLM) with executors (tools, APIs, and data stores) that allow it to perform actions, fetch information, and update systems. An LLM, by contrast, is a statistical model trained to predict text; it excels at generation, interpretation, and structured reasoning within a prompt. In many enterprise contexts, teams build agentic AI workflows where the LLM provides interpretation and decision logic, while the agent carries out actions in the real world. This blended model is especially relevant for automation projects, where the goal is to reduce human-in-the-loop time while preserving governance and safety.
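The split between a reasoning module and executors can be sketched as a small loop. This is a minimal illustration, not a reference implementation: `fake_llm`, `get_order_status`, and the `TOOLS` registry are hypothetical stand-ins, and a real system would replace `fake_llm` with a call to an actual model.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical executor: a plain function standing in for a real API or database.
def get_order_status(order_id: str) -> str:
    return f"order {order_id}: shipped"

TOOLS: Dict[str, Callable[[str], str]] = {"get_order_status": get_order_status}

def fake_llm(goal: str, observations: List[str]) -> Tuple[str, str]:
    """Stand-in for the reasoning module: returns (action, argument)."""
    if not observations:
        return ("get_order_status", "A123")
    return ("finish", f"Answer based on: {observations[-1]}")

def run_agent(goal: str, max_steps: int = 5) -> str:
    """Ask the reasoning module for the next action, execute it, and repeat."""
    observations: List[str] = []
    for _ in range(max_steps):
        action, arg = fake_llm(goal, observations)
        if action == "finish":
            return arg
        observations.append(TOOLS[action](arg))
    return "stopped: step limit reached"

print(run_agent("Where is order A123?"))
# prints: Answer based on: order A123: shipped
```

The model only decides what to do next; the loop around it is what actually calls tools, and the step limit keeps a confused model from running indefinitely.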


Comparison

| Feature | AI Agent | LLM |
|---|---|---|
| Definition | A software entity that can perceive, reason, and act by orchestrating tools and data sources to achieve goals. | A probabilistic language model that generates and analyzes text based on prompts. |
| Autonomy | High autonomy in structured contexts; can initiate actions and adapt to changing inputs. | Primarily prompt-driven; needs external orchestration to take real-world actions. |
| Decision Scope | Handles actionable workflows, multi-step tasks, and tool integration across systems. | Focuses on language tasks, knowledge reasoning, and content generation. |
| Tooling & Integrations | Designed to call APIs, access databases, trigger processes, and update state. | Typically relies on prompts and internal reasoning unless extended with adapters. |
| Memory & State | Can maintain long-running state and external memory through tools and databases. | By default stateless; may rely on external memory modules or prompts for context. |
| Price & Resource Use | Costs scale with orchestration, tool usage, and infrastructure; potential efficiency gains across processes. | Costs tied to prompt usage, model size, and API calls; usually simpler to deploy. |
| Best For | End-to-end automation, process orchestration, and operations that involve external systems. | Language-heavy tasks: writing, summarizing, explaining, and chat-based assistance. |
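The "Memory & State" row can be made concrete with a small sketch. Because a model call is stateless by default, any context has to be carried outside the model and fed back in through the prompt. The `ConversationMemory` class below is a hypothetical helper written for illustration; production systems often use a database or vector store instead of an in-memory list.

```python
from typing import List

class ConversationMemory:
    """Hypothetical external memory: keeps the most recent turns outside the model."""

    def __init__(self, max_turns: int = 3):
        self.max_turns = max_turns
        self.turns: List[str] = []

    def remember(self, turn: str) -> None:
        # Append the new turn and keep only the newest max_turns entries.
        self.turns.append(turn)
        self.turns = self.turns[-self.max_turns:]

    def build_prompt(self, question: str) -> str:
        # Prepend stored context so a stateless model can see it.
        context = "\n".join(self.turns)
        return f"{context}\n{question}" if context else question

memory = ConversationMemory(max_turns=2)
memory.remember("User: my order is A123")
memory.remember("Assistant: noted")
print(memory.build_prompt("User: where is it?"))
```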

Positives

- Enables autonomous task execution and end-to-end workflow orchestration

- Improves consistency and auditability through tool usage and action logs

- Supports multi-application integration and scalable automation

- Offers measurable governance by recording actions and outcomes

What's Bad

- Higher upfront complexity and operational overhead

- Risk of unintended actions if constraints are weak or poorly tested

- Requires robust security, data governance, and monitoring

- LLMs without orchestration may be insufficient for real-world workflows

AI agents generally outperform plain LLMs for automation-heavy tasks; use LLMs for language-centric work and as a reasoning core within an agent.

Choose an AI agent when you need autonomous actions and tool integration. Prefer an LLM when the primary need is text-based tasks and conversational abilities; pair them to maximize impact.

Questions & Answers

What is the practical difference between an AI agent and an LLM?

An AI agent can perceive its environment, decide, and act by calling tools and updating systems, enabling autonomous workflows. An LLM excels at understanding and generating text in response to prompts but does not automatically execute external actions without an orchestrator.

AI agents act and automate, while LLMs generate text based on prompts; use an orchestrator to connect the two when automation is needed.

Can an LLM operate as an agent without extra components?

Not by itself. An LLM requires tooling, prompts, and an orchestration layer to perform actions in the real world. It can act as the reasoning core within an agent, but needs components to execute tasks.

An LLM alone can’t act autonomously; it needs tooling and orchestration to act in the real world.
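To illustrate why an orchestration layer is needed, the sketch below treats the model's output as what it is: text. A separate `dispatch` function parses that text and decides whether anything gets executed. The JSON request shape, the `create_ticket` tool, and the `TOOLS` registry are assumptions made for this example, not a standard protocol.

```python
import json

# Hypothetical tool; a real one would call a ticketing system's API.
def create_ticket(title: str) -> str:
    return f"ticket created: {title}"

TOOLS = {"create_ticket": create_ticket}

def dispatch(model_output: str) -> str:
    """Parse the model's text and run the matching tool, if there is one."""
    try:
        request = json.loads(model_output)
    except json.JSONDecodeError:
        return model_output  # plain text: nothing to execute
    if not isinstance(request, dict):
        return model_output
    tool = TOOLS.get(request.get("tool"))
    if tool is None:
        return f"rejected: unknown tool {request.get('tool')!r}"
    return tool(**request.get("args", {}))

print(dispatch('{"tool": "create_ticket", "args": {"title": "Reset password"}}'))
# prints: ticket created: Reset password
```

Nothing happens in the outside world until `dispatch` runs a registered tool, which is why the orchestrator, not the model, is the natural place to enforce what may be executed.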

When should I choose an AI agent over a pure LLM solution?

Choose an AI agent when your goals include automation of multi-step workflows, external data access, and interactions with other software. If your core need is text-heavy tasks, an LLM alone may be the simpler route.

Pick an AI agent for automation; use an LLM for language tasks unless you need action and integration capabilities.

What are the main risk considerations with AI agents?

Key risks include unintended actions, data privacy concerns, and governance gaps. Mitigate with explicit constraints, robust logging, access controls, and regular audits of actions.

Watch for unintended agent actions and data access; use strong controls and audits.
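As one way to picture the mitigations above, the sketch below combines an explicit allowlist (constraints and access control) with an audit log that records every attempt, allowed or not. `GuardedExecutor`, the role names, and the action names are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set

@dataclass
class GuardedExecutor:
    """Hypothetical guard: checks each action against a per-role allowlist."""
    permissions: Dict[str, Set[str]]
    audit_log: List[dict] = field(default_factory=list)

    def execute(self, role: str, action: str, target: str) -> bool:
        # Unknown roles get an empty allowlist, so they are denied by default.
        allowed = action in self.permissions.get(role, set())
        # Log every attempt, including denied ones, so audits see the full picture.
        self.audit_log.append(
            {"role": role, "action": action, "target": target, "allowed": allowed}
        )
        return allowed

executor = GuardedExecutor({"support_agent": {"read_ticket", "reply_ticket"}})
print(executor.execute("support_agent", "read_ticket", "T-1"))
print(executor.execute("support_agent", "delete_account", "U-9"))
```

Denying by default and logging denials as well as successes makes it easier to spot an agent that keeps attempting actions outside its intended scope.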

How do costs typically compare between AI agents and LLMs?

Costs depend on usage patterns: agents incur infrastructure and tool costs, while LLMs incur per-call or per-token charges. In many cases, the agent approach yields lower human-in-the-loop costs over time when automating routine tasks.

Agents may have higher upfront costs but can reduce ongoing human labor; LLM costs scale with usage.
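The trade-off can be put into rough arithmetic. The functions below are a simplified cost model written for illustration; every number in the example call is a placeholder, not real vendor pricing or measured data, and actual costs depend on your provider and workload.

```python
def monthly_llm_cost(calls: int, tokens_per_call: int, price_per_1k_tokens: float) -> float:
    """Per-token charges: the cost grows linearly with usage."""
    return calls * tokens_per_call / 1000 * price_per_1k_tokens

def monthly_agent_cost(tasks: int, llm_calls_per_task: int, tokens_per_call: int,
                       price_per_1k_tokens: float, infra_cost: float) -> float:
    """An agent pays fixed infrastructure plus several model calls per task."""
    llm_part = monthly_llm_cost(tasks * llm_calls_per_task, tokens_per_call, price_per_1k_tokens)
    return infra_cost + llm_part

def human_handling_cost(tasks: int, minutes_per_task: float, hourly_rate: float) -> float:
    """Labor cost of handling the same tasks manually."""
    return tasks * minutes_per_task / 60 * hourly_rate

# Placeholder inputs only: substitute your own measurements.
agent = monthly_agent_cost(tasks=1000, llm_calls_per_task=4, tokens_per_call=1500,
                           price_per_1k_tokens=0.01, infra_cost=200.0)
human = human_handling_cost(tasks=1000, minutes_per_task=3, hourly_rate=40.0)
print(f"agent: {agent:.2f}, human handling: {human:.2f}")
```

Whether the agent comes out ahead depends entirely on the inputs, so the useful part of the exercise is measuring calls per task, tokens per call, and manual handling time for your own workflow.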

What governance practices are important for agentic AI workflows?

Establish clear ownership, access controls, action logging, and fail-safes. Regularly review outcomes, calibrate tool usage, and update constraints as systems evolve.

Set ownership, logs, and safety checks; review outcomes regularly to keep automation aligned.
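A fail-safe can be as simple as a circuit breaker that stops the agent after repeated failures and hands control back to a person. The `CircuitBreaker` class and its threshold below are hypothetical and meant as a starting sketch, assuming that tool failures surface as Python exceptions.

```python
class CircuitBreaker:
    """Hypothetical fail-safe: blocks further actions after repeated failures."""

    def __init__(self, max_failures: int = 3):
        self.max_failures = max_failures
        self.failures = 0

    @property
    def is_open(self) -> bool:
        # "Open" means the breaker has tripped and no more actions may run.
        return self.failures >= self.max_failures

    def call(self, action, *args):
        if self.is_open:
            raise RuntimeError("circuit open: human review required")
        try:
            result = action(*args)
        except Exception:
            self.failures += 1  # count the failure, then let the caller see it
            raise
        self.failures = 0  # a success resets the consecutive-failure count
        return result
```

Wrapping every tool call in `breaker.call(...)` means a misbehaving integration halts the workflow instead of being retried without limit; resetting the breaker would then be a deliberate, owned decision.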

Are there common patterns to assess AI agents in production?

Look for reliable tool integrations, clear decision boundaries, and monitoring dashboards. Start with a narrow scope, gradually expand capabilities while maintaining safety gates.

Start small, validate decisions, monitor results, and expand carefully.

Key Takeaways

- Understand the core distinction: autonomy vs language generation

- Pair LLMs with agents to unlock practical automation

- Plan governance, safety, and monitoring from the start

- Choose based on use-case: end-to-end workflows vs text tasks

- Evaluate data access, memory, and tool integration early