Cursor AI Agent vs Normal: A Comprehensive Comparison

A comparison of cursor AI agents and normal automation, covering capabilities, use cases, governance, costs, and deployment patterns for developers and leaders evaluating AI-driven agents.

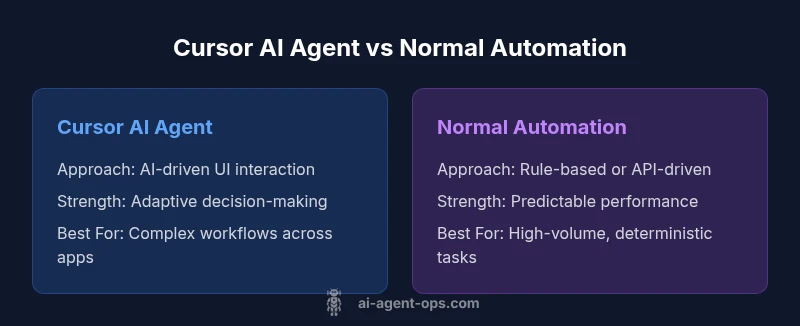

Cursor AI agents blend perception, reasoning, and action at the UI level, delivering adaptive automation across apps. This comparison clarifies how cursor AI agents and normal automation differ in capabilities, governance, cost, and real-world applicability, helping you decide where AI-driven cursor agents add value and where traditional automation remains preferable.

What is a cursor AI agent vs normal automation?

Cursor AI agents fuse perception, decision-making, and action, enabling interactions with software through user interfaces and data streams. The core difference from normal automation is that cursor agents operate in dynamic, multi-application environments, learning from context and adapting to new tasks without explicit reprogramming. According to Ai Agent Ops, this paradigm shifts automation from static scripts to agent-driven workflows capable of navigating screens, interpreting unstructured inputs, and coordinating across tools. Governance, risk, and cost considerations remain essential, however. The term covers a spectrum of capabilities, from OCR and form filling to decision prompting and cross-modal actions. While traditional automation excels at repeatable, well-defined tasks, cursor AI agents unlock cross-system automation where APIs are incomplete or legacy software dominates. The result is a more flexible automation surface that complements, rather than replaces, API-first pipelines. For developers and leaders, the distinction signals a move toward adaptive automation that learns from interaction history.

Core differences: capabilities, control, and cost

When comparing cursor AI agents with normal automation, several criteria stand out. First, capability scope: cursor AI agents combine perception (reading what is on screen or in a data stream), reasoning (interpreting context), and action (manipulating UI or APIs), enabling end-to-end workflows across disjoint tools. Traditional automation relies on fixed rules and API calls. The control plane also diverges: cursor agents operate under policy-based governance with guardrails and, often, human-in-the-loop approvals; traditional automation emphasizes deterministic control with audit trails. Latency and throughput differ too: AI-driven decisions introduce model latency and occasional variability, while scripted workflows aim for a consistent cadence. Cost models differ as well: cursor agents may require ongoing model usage and monitoring, whereas conventional automation typically entails upfront development costs and predictable maintenance. In practice, the choice comes down to trade-offs between flexibility and reliability, breadth of automation versus depth in a single domain, and the readiness of your data and governance processes.
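To make the contrast concrete, here is a minimal sketch of the two control styles. It is illustrative only: `Action`, `scripted_workflow`, `agent_workflow`, and the stub `observe_screen` and `decide_next` functions are hypothetical names, and the lookup table in `decide_next` merely stands in for a model call.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Action:
    verb: str
    target: str


def scripted_workflow() -> list[Action]:
    """Traditional automation: the same fixed steps on every run."""
    return [Action("api_call", "crm.update"), Action("api_call", "ticketing.close")]


def agent_workflow(
    observe: Callable[[list[Action]], str],
    decide: Callable[[str], Optional[Action]],
    max_steps: int = 5,
) -> list[Action]:
    """Agent-style automation: observe, decide, act, and repeat until done."""
    taken: list[Action] = []
    for _ in range(max_steps):  # hard step cap acts as a simple guardrail
        observation = observe(taken)   # perception
        action = decide(observation)   # reasoning (a model call in practice)
        if action is None:             # nothing left to do
            break
        taken.append(action)           # action (recorded here, not executed)
    return taken


def observe_screen(taken: list[Action]) -> str:
    """Hypothetical perception stub: which screen are we on?"""
    screens = ["login_screen", "dashboard"]
    return screens[len(taken)] if len(taken) < len(screens) else "done"


def decide_next(observation: str) -> Optional[Action]:
    """Hypothetical reasoning stub: a lookup table in place of a model."""
    playbook = {
        "login_screen": Action("type_credentials", "login_form"),
        "dashboard": Action("click", "export_button"),
    }
    return playbook.get(observation)


steps = agent_workflow(observe_screen, decide_next)
```

The scripted path never looks at its environment, which is what makes it predictable. The agent path chooses each step from what it observes, which is where both the flexibility and the variability come from.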

Use cases by domain

Cursor AI agents excel in scenarios where tasks span multiple apps, require interpretation of unstructured data, or demand rapid adaptation. In customer support, they can interpret inquiries, fetch context from CRM, and route cases across systems. In product and operations, they can assemble data from spreadsheets, dashboards, and issue trackers to produce summaries and trigger follow-ups. In procurement, they can compile vendor information and complete forms when criteria align. In software testing, they can simulate user journeys, gather logs, and generate test artifacts. These examples show that a cursor AI agent is most valuable when work crosses several apps and involves unstructured data or human judgment. The strengths lie in reducing handoffs, accelerating decision cycles, and enabling automation patterns that pure API-based pipelines cannot easily cover.

Performance and economic considerations

Adopting cursor AI agents often changes the economics of automation. You may see faster onboarding of new workflows because less time is spent building API adapters; you pay for model usage rather than bespoke integration work. However, performance is not risk-free: model latency, prompt quality, and drift can affect throughput and outcomes. Ai Agent Ops Analysis, 2026 notes that organizations experimenting with cursor-based agents should plan for governance, monitoring, and rollback paths to handle unexpected results. In practice, the true cost of cursor AI agents compared with normal automation includes not only direct expenses but also the operational effort needed to tune prompts, maintain guardrails, and audit decisions. The decision hinges on the value of flexibility and cross-app reach versus the cost of extra governance.

Security, governance, and compliance

Cursor AI agents introduce new risk vectors: data exposure through UI interactions, leakage across prompts, and drift if policies are not updated. Organizations should implement least-privilege access, robust activity logging, and end-to-end audit trails for actions performed by agents. Governance should cover model versioning, prompt hygiene, and containment strategies to prevent escalation in sensitive environments. Compliance considerations vary by domain but commonly include data residency, PII handling, and vendor risk management. In short, the security posture for cursor-based automation should be as strong as the data hygiene in your core apps.
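As a rough illustration of least-privilege access combined with activity logging, the sketch below checks each requested action against an allow-list and records every decision, allowed or not. The names `ALLOWED_ACTIONS`, `authorize`, and `audit_log`, along with the agent and resource identifiers, are assumptions for the example; a production system would write to durable, tamper-evident storage rather than an in-memory list.

```python
import json
from datetime import datetime, timezone

# Hypothetical allow-list: each agent gets only the (verb, resource) pairs it needs.
ALLOWED_ACTIONS: dict[str, set[tuple[str, str]]] = {
    "support-agent": {("read", "crm"), ("write", "ticketing")},
}

# In-memory stand-in for an append-only audit store.
audit_log: list[str] = []


def authorize(agent_id: str, verb: str, resource: str) -> bool:
    """Return True only for allow-listed actions; log every attempt."""
    allowed = (verb, resource) in ALLOWED_ACTIONS.get(agent_id, set())
    audit_log.append(
        json.dumps(
            {
                "ts": datetime.now(timezone.utc).isoformat(),
                "agent": agent_id,
                "verb": verb,
                "resource": resource,
                "allowed": allowed,
            }
        )
    )
    return allowed


read_ok = authorize("support-agent", "read", "crm")     # on the allow-list
write_ok = authorize("support-agent", "write", "crm")   # not on the allow-list
unknown_ok = authorize("unknown-agent", "read", "crm")  # unknown agents get nothing
```

Denied attempts are logged as well as permitted ones, since a pattern of denials is often the earliest sign that an agent is drifting outside its intended scope.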

Integration and deployment patterns

To realize the benefits of cursor AI agents alongside normal automation, teams typically adopt a hybrid pattern: orchestrate AI agents with existing workflow engines, expose defined guardrails, and integrate with both API-backed services and UI-level automation tools. Common patterns include building an agent-runner layer that consumes prompts, maintains context across sessions, and logs decisions for auditability; employing a centralized policy engine to enforce constraints; and creating sandbox environments to validate prompts and actions before production. Deployment considerations include version control for prompts and policies, feature flags to enable or disable capabilities, and continuous monitoring for drift. In organizations with mature governance, cursor AI agents can be deployed incrementally, beginning with non-critical tasks and gradually expanding to broader workflows as confidence grows.
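One way to picture the agent-runner layer described above is the sketch below. It assumes a hypothetical `AgentRunner` class whose `propose` callable stands in for the model, whose `policy` callable stands in for a centralized policy engine, and whose `flags` dictionary plays the role of feature flags. Proposed actions use a made-up `capability:detail` string format, and nothing is actually executed.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AgentRunner:
    propose: Callable[[str, list[str]], str]  # stand-in for the model call
    policy: Callable[[str], bool]             # stand-in for a policy engine
    flags: dict[str, bool]                    # feature flags per capability
    context: list[str] = field(default_factory=list)
    decisions: list[dict] = field(default_factory=list)

    def run(self, task: str) -> str:
        """Propose an action, gate it, and record the decision."""
        action = self.propose(task, self.context)
        capability = action.split(":", 1)[0]
        if not self.flags.get(capability, False):
            outcome = "skipped_flag_off"      # capability not enabled
        elif not self.policy(action):
            outcome = "blocked_by_policy"     # guardrail refused the action
        else:
            outcome = "executed"              # a real runner would act here
        self.decisions.append({"task": task, "action": action, "outcome": outcome})
        self.context.append(f"{task} -> {outcome}")  # context carried forward
        return outcome


runner = AgentRunner(
    propose=lambda task, ctx: f"fill_form:{task}",
    policy=lambda action: "payroll" not in action,
    flags={"fill_form": True},
)
first = runner.run("vendor onboarding")
second = runner.run("payroll change")

runner.flags["fill_form"] = False  # turn the capability off without redeploying
third = runner.run("vendor onboarding")
```

Keeping the flag check, the policy check, and the decision log in one place means every action passes through the same gate, which makes incremental rollout and later audits simpler.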

Decision framework: when to choose which path

Begin by mapping the tasks you intend to automate and categorizing them by API coverage, data structure, and required judgment. If most steps exist as reliable API calls with clear success criteria, traditional automation is often the better first choice. If you encounter tasks that require interpretation of unstructured inputs, cross-application navigation, or rapid adaptation to new tools, consider a cursor AI agent as the primary strategy. Then assess governance readiness: do you have logging, access control, and rollback plans? Finally, run a small pilot to measure quantitative gains (speed of task completion, reduction in handoffs) and qualitative risks (drift, misrouting). The decision framework should emphasize risk-aware experimentation: start with non-critical processes, measure impact, and scale responsibly.
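The framework above can be sketched as a small triage function. Everything here is an assumption made for illustration: the `Task` fields, the `recommend` function, the wording of the returned labels, and especially the 0.8 API-coverage threshold, which is an arbitrary placeholder rather than a recommended value.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    api_coverage: float     # fraction of steps with a reliable API, 0.0 to 1.0
    structured_input: bool  # inputs arrive in a well-defined format
    needs_judgment: bool    # steps require interpretation or adaptation
    critical: bool          # a failure would be costly


def recommend(task: Task, governance_ready: bool, api_threshold: float = 0.8) -> str:
    """Map a task to a suggested path; the threshold is a placeholder assumption."""
    well_defined = task.structured_input and not task.needs_judgment
    if task.api_coverage >= api_threshold and well_defined:
        return "traditional automation"
    if not governance_ready:
        return "establish logging, access control, and rollback first"
    if task.critical:
        return "pilot agent on non-critical tasks first"
    return "agent pilot"


invoice_sync = Task("invoice sync", 0.95, True, False, False)
email_triage = Task("email triage", 0.30, False, True, False)
refund_approval = Task("refund approval", 0.40, False, True, True)

print(recommend(invoice_sync, governance_ready=False))
print(recommend(email_triage, governance_ready=True))
print(recommend(refund_approval, governance_ready=True))
```

The ordering of the checks mirrors the text: API coverage and task definition come first, governance readiness second, and criticality decides how cautiously a pilot begins.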

Best practices and road map

Start with guardrails and scope: define where agents can act, what data they can access, and how decisions are logged. Invest in prompt design and testing, including edge-case prompts to reduce surprises in production. Add human-in-the-loop oversight for critical decisions and build a clear rollback path. Track metrics such as task completion rate, error rate, and cross-tool latency to quantify progress. Regularly review prompts, policies, and data flows to mitigate drift. As you refine your mix of cursor AI agents and normal automation, keep your architecture modular so you can swap components as the ecosystem evolves. The Ai Agent Ops team encourages teams to document decisions and share lessons learned, both in code and in governance artifacts.
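The metrics mentioned above could be computed from run logs along these lines. This is a minimal sketch: the record shape (`status`, `latency_s`) and the `summarize` function are hypothetical, and the sample runs are made-up values used only to exercise the function.

```python
from statistics import mean


def summarize(runs: list[dict]) -> dict[str, float]:
    """Compute completion rate, error rate, and mean latency from run records."""
    if not runs:
        raise ValueError("no runs to summarize")
    total = len(runs)
    completed = sum(1 for r in runs if r["status"] == "completed")
    errors = sum(1 for r in runs if r["status"] == "error")
    return {
        "completion_rate": completed / total,
        "error_rate": errors / total,
        "mean_latency_s": mean(r["latency_s"] for r in runs),
    }


# Made-up sample records, for illustration only.
sample_runs = [
    {"status": "completed", "latency_s": 2.0},
    {"status": "completed", "latency_s": 4.0},
    {"status": "error", "latency_s": 3.0},
    {"status": "completed", "latency_s": 3.0},
]
summary = summarize(sample_runs)
```

Running the same summary over a baseline of scripted workflows gives a like-for-like comparison before widening an agent's scope.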

Comparison

| Feature | Cursor AI Agent | Normal Automation |
|---|---|---|
| Approach | LLM-driven UI automation with context-aware decisions | Rule-based or API-driven automation |
| Interaction with apps | Cross-app navigation and data interpretation | Direct API calls or scripted UI actions only |
| Control & governance | Policy-based governance with guardrails | Deterministic controls and audit trails |
| Development effort | Moderate to high (prompt engineering and risk controls) | Low to moderate (scripts and adapters) |
| Latency | Variable due to model latency | Deterministic, SLA-friendly |
| Reliability | Improves with guardrails; requires monitoring | High reliability for stable, defined tasks |
| Data handling | Handles unstructured inputs; learns from interaction | Works best with structured inputs and APIs |
| Cost considerations | Ongoing model usage; monitoring and governance | Upfront development; predictable maintenance |
| Best for | Complex, cross-app, knowledge-driven workflows | High-volume, repeatable, API-based tasks |

Positives

- Expands automation surface by operating inside UIs across apps

- Reduces handoffs and speeds up cross-tool workflows

- Handles unstructured data and adapts to new tools without full reprogramming

- Enables end-to-end processes when APIs are incomplete or unavailable

What's Bad

- Requires governance, monitoring, and drift mitigation

- Model latency can affect throughput and predictability

- Higher ongoing costs for prompts, fine-tuning, and guardrails

- Security and regulatory considerations are more complex

Cursor AI agents outperform traditional automation in flexibility but demand governance and monitoring.

Choose cursor AI agents when cross-app automation and adaptive decision-making are critical. Prefer normal automation for deterministic, high-volume tasks with stable APIs and strict reliability needs.

Questions & Answers

What is a cursor AI agent, and how does it differ from normal automation?

A cursor AI agent combines perception, reasoning, and action to operate across user interfaces and apps. It differs from normal automation by handling unstructured data, adapting to new tools, and coordinating tasks end-to-end, rather than following fixed scripts alone.

Cursor AI agents blend perception and action to automate across apps, adapting as tools change. They’re different from fixed scripts because they handle unstructured data and new tools more flexibly.

When should I adopt a cursor AI agent over traditional automation?

Choose a cursor AI agent when your workflows span multiple apps, involve unstructured data, or require rapid adaptation to new tools. If tasks are well-defined, API-based, and require high-volume reliability, traditional automation often suffices.

If your work crosses apps and adapts to new tools, a cursor AI agent is worth considering; for fixed API-based tasks, traditional automation may be better.

What are common risks with cursor AI agents and how can I mitigate them?

Key risks include drift in policy enforcement, data leakage through prompts, and unpredictable actions. Mitigate with strong access control, audit logging, prompt hygiene, and a clear rollback path for agent actions.

Watch for drift and data exposure. Mitigate with strict access, logs, prompt hygiene, and rollback plans.

How do you measure ROI and success for cursor AI agents?

Measure by task completion rate, reduction in manual handoffs, cross-tool latency, and governance overhead. Compare against a baseline of API-driven automation to quantify improvements and costs.

Track completion, fewer handoffs, and latency, then compare to your API-based baseline to gauge ROI.

What tooling or platforms support cursor AI agents?

Many platforms offer AI agent capabilities, including orchestration layers and UI automation tools. Look for features like guardrails, context retention, prompt testing, and audit logging to support cursor AI workflows.

Many toolchains support AI agents; prioritize guardrails, context, testing, and logs for reliable use.

Key Takeaways

- Assess task complexity across apps before choosing an approach

- Prioritize guardrails and governance when using AI-driven agents

- Leverage cursor AI for flexible, end-to-end workflows

- Invest in monitoring and prompt design to manage drift

- Start with non-critical processes and scale thoughtfully