AI agent specification template: a practical guide

Learn how to create an AI agent specification template to standardize goals, inputs, outputs, safety, and governance for scalable, responsible agentic AI workflows.

With an AI agent specification template, you standardize how teams describe an AI agent's purpose, inputs/outputs, evaluation criteria, safety constraints, and governance. Used across projects, it helps accelerate development, ensure interoperability, and enable consistent review and testing. This blueprint also clarifies ownership, versioning, and success metrics for scalable agent programs.

What is an AI agent specification template?

According to Ai Agent Ops, an AI agent specification template is a structured blueprint that codifies the essential aspects of an AI agent: its purpose, capabilities, inputs, outputs, data contracts, safety constraints, and governance rules. It serves as a single source of truth that teams can reference when designing, building, and evaluating agents. By codifying requirements in a consistent format, you reduce ambiguity, accelerate onboarding for new team members, and improve cross-team collaboration. The template also functions as a living artifact: it should evolve as your agentic workflows mature, boundaries shift, and new regulatory expectations arise. For developers, product managers, and leaders, this template aligns technical implementation with policy goals and user needs, ensuring a repeatable path from concept to deployment.

Why teams need a formal template

A formal template provides a repeatable framework for describing what an AI agent should do, how it should behave, and how its success will be judged. It prevents scope creep by forcing a concrete declaration of goals and constraints at the outset. With a standardized template, stakeholders ranging from data engineers to compliance officers can review agent designs against the same criteria, enabling faster governance approvals and smoother audits. The Ai Agent Ops team recommends anchoring the template to real-world use cases so teams can see practical value quickly, while preserving flexibility for future adaptations. In short, a template turns tacit knowledge into explicit, shareable knowledge that scales.

Core components of an AI agent specification

An effective AI agent specification template typically includes several core components. First, a clear purpose statement and scope define what the agent is intended to address. Second, inputs and outputs specify data contracts, schemas, and interfaces. Third, goals, success metrics, and constraints establish how performance will be measured and what limits apply. Fourth, safety, governance, and compliance considerations outline policies for privacy, bias, and risk mitigation. Fifth, data provenance and access controls describe how data is sourced and who can access it. Finally, versioning and ownership assign responsibility for updates and approvals. Each component should be described with precise language, so engineers can translate requirements into concrete features and tests.
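One way to make these components checkable is to mirror them in a small data structure. The sketch below is illustrative only: the `AgentSpec` class, its field names, and the `missing_components` helper are assumptions made for this example, not part of any standard or of the template itself.

```python
from dataclasses import dataclass, field, fields


@dataclass
class AgentSpec:
    """Hypothetical container mirroring the core components listed above."""
    purpose: str = ""
    scope: str = ""
    inputs: dict = field(default_factory=dict)    # field name -> format description
    outputs: dict = field(default_factory=dict)   # field name -> format description
    goals: list = field(default_factory=list)
    constraints: list = field(default_factory=list)
    safety_rules: list = field(default_factory=list)
    data_provenance: str = ""
    access_roles: list = field(default_factory=list)
    owner: str = ""
    version: str = ""


def missing_components(spec: AgentSpec) -> list:
    """Return the names of components that are still empty."""
    return [f.name for f in fields(spec) if not getattr(spec, f.name)]


# An early draft that only covers purpose, scope, ownership, and version.
draft = AgentSpec(
    purpose="Answer billing questions for support staff",
    scope="Read-only access to invoice records",
    owner="support-platform-team",
    version="0.1.0",
)
print(missing_components(draft))
# ['inputs', 'outputs', 'goals', 'constraints', 'safety_rules',
#  'data_provenance', 'access_roles']
```

A check like this could run in review tooling so that incomplete specifications are flagged before a human reviewer spends time on them.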

Defining goals, constraints, and success criteria

Goals should be outcome-focused and measurable, not abstract. Frame success with objective criteria like accuracy thresholds, latency targets, or user satisfaction benchmarks. Constraints can include safety rules, ethical guidelines, and regulatory boundaries. It helps to tie each goal to a concrete test or scenario that will prove it has been met. For example, a goal might be: 'The agent shall respond with non-biased language within 2 seconds for 95% of simple queries.' Detailing failures and fallback behaviors upfront reduces ambiguity during operation and debugging. Ai Agent Ops emphasizes clarity in constraint definitions to prevent uncontrolled agent behavior in edge cases.
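The latency half of the example goal above can be turned into an automated check. The following is a minimal sketch, assuming response times have already been collected in seconds; the function name `meets_latency_goal` and its parameters are hypothetical, and the bias half of the goal would need a separate evaluation.

```python
def meets_latency_goal(latencies_s, limit_s=2.0, required_fraction=0.95):
    """Return True if enough responses finished within the latency limit.

    latencies_s: measured response times in seconds, one per query.
    """
    if not latencies_s:
        return False  # no measurements, so the goal has not been demonstrated
    within_limit = sum(1 for t in latencies_s if t <= limit_s)
    return within_limit / len(latencies_s) >= required_fraction


passing_run = [0.5] * 96 + [2.5] * 4    # 96% of queries within 2 seconds
failing_run = [0.5] * 90 + [2.5] * 10   # only 90% within 2 seconds

print(meets_latency_goal(passing_run))  # True
print(meets_latency_goal(failing_run))  # False
```

Treating an empty measurement set as a failure is a design choice: it keeps a goal from passing by default when no test was actually run.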

Data interfaces and inputs/outputs

Define the data contracts for every interaction the agent will have. Specify input types, required fields, data formats, and any sanitization or normalization rules. Outline outputs in the same level of detail: structure, allowed values, and error handling. Include data lineage notes to trace how each input was produced and transformed. This section should also cover privacy considerations, such as PII handling rules and retention windows. Providing explicit examples of valid and invalid data helps engineers implement robust parsing and validation checks from day one.
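A data contract of this kind can be expressed directly in code and exercised with valid and invalid payloads. The sketch below uses only the standard library; the `INPUT_CONTRACT` fields (`query`, `user_id`, `max_results`) and the `validate_payload` helper are invented for illustration and would be replaced by your own schema or a dedicated validation library.

```python
# Hypothetical contract: field name -> (expected type, required?)
INPUT_CONTRACT = {
    "query": (str, True),
    "user_id": (str, True),
    "max_results": (int, False),
}


def validate_payload(payload, contract):
    """Return a list of contract violations; an empty list means valid."""
    errors = []
    for name, (expected_type, required) in contract.items():
        if name not in payload:
            if required:
                errors.append(f"missing required field: {name}")
            continue
        value = payload[name]
        if not isinstance(value, expected_type):
            errors.append(
                f"{name}: expected {expected_type.__name__}, "
                f"got {type(value).__name__}"
            )
    # Reject fields the contract does not know about.
    for name in payload:
        if name not in contract:
            errors.append(f"unexpected field: {name}")
    return errors


valid = {"query": "refund status", "user_id": "u-123"}
invalid = {"query": 42, "email": "a@example.com"}

print(validate_payload(valid, INPUT_CONTRACT))    # []
print(validate_payload(invalid, INPUT_CONTRACT))
# ['query: expected str, got int', 'missing required field: user_id',
#  'unexpected field: email']
```

Rejecting unexpected fields is one possible policy; it is stricter than many APIs, but it makes accidental leakage of extra data (for example PII) easier to spot.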

Safety, governance, and compliance considerations

Safety is built into the template by design. Include rules for guardrails, escalation paths, and decision boundaries. Governance should cover who can deploy updates, how approvals are obtained, and how changes are logged. Compliance considerations include data protection standards, auditing capabilities, and documentation for regulators. This block is where you translate high-level policies into concrete controls the agent can apply during operation. When aligned with organizational risk tolerance, a rigorous governance model reduces the likelihood of unintended or harmful agent behavior.
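To show how guardrails, escalation paths, and decision boundaries might become concrete controls, here is a minimal sketch. The `Guardrails` class, the action names, and the 0.8 confidence threshold are all assumptions chosen for the example; real policies would come from your own risk and compliance teams.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Guardrails:
    """Hypothetical guardrail settings taken from a filled-in specification."""
    blocked_actions: frozenset = frozenset()
    approval_required: frozenset = frozenset()
    min_confidence: float = 0.8  # below this, a human reviews the decision


def decide(action, confidence, rules):
    """Return 'block', 'escalate', or 'allow' for a proposed agent action."""
    if action in rules.blocked_actions:
        return "block"
    if action in rules.approval_required or confidence < rules.min_confidence:
        return "escalate"
    return "allow"


RULES = Guardrails(
    blocked_actions=frozenset({"delete_account"}),
    approval_required=frozenset({"issue_refund"}),
)

print(decide("delete_account", 0.99, RULES))  # block
print(decide("issue_refund", 0.95, RULES))    # escalate
print(decide("lookup_invoice", 0.50, RULES))  # escalate
print(decide("lookup_invoice", 0.90, RULES))  # allow
```

Checking the block list first means a blocked action can never be escalated into approval, which keeps the hardest boundary independent of confidence scores.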

Example template structure in Markdown

Below is a practical skeleton you can copy-paste and customize. The goal is to give teams a concrete starting point that preserves flexibility for different agent types while maintaining consistency across projects.

# ai agent specification template

## 1. Overview

- Name:

- Purpose:

- Scope:

- Stakeholders:

## 2. Inputs

- Data sources:

- Data formats:

- Validation rules:

## 3. Outputs

- Output schema:

- Expected behavior:

- Error handling:

## 4. Goals & Metrics

- Primary goal:

- KPIs:

- Acceptable thresholds:

## 5. Constraints & Guardrails

- Safety rules:

- Ethical guidelines:

- Boundary conditions:

## 6. Data & Privacy

- Data provenance:

- Access control:

- Retention:

## 7. Governance & Versioning

- Owner:

- Review cycle:

- Change log:

## 8. Validation Tests

- Test cases:

- Exit criteria:

## 9. Compliance & Audit

- Regulations:

- Documentation:

- Tip: Use a repository to version the template and track changes so teams can compare iterations easily.

How to tailor the template for your team and tech stack

Every organization has unique tooling, data sources, and risk tolerance. Start by mapping the template sections to your internal artifacts: data schemas, API contracts, data lineage diagrams, and deployment pipelines. Create lightweight starter templates for different agent classes (e.g., information retrieval agents, decision agents, and action agents) to accelerate adoption. Establish a review checklist that aligns with your engineering standards and regulatory requirements. Finally, train your teams on how to fill the template consistently and encourage feedback to improve clarity and usefulness.

Validation, testing, and version control

Validation should combine automated checks with human review. Implement unit tests for inputs/outputs, integration tests for end-to-end scenarios, and safety checks for policy violations. Use version control to manage template evolution, tagging major revisions and recording rationale. Maintain a changelog and periodic reviews to ensure the template stays aligned with evolving business needs and regulatory expectations. The end goal is a reliable, auditable artifact that supports governance as you scale agentic AI programs.
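One automated check that fits this workflow is verifying that a filled-in specification still contains every section of the skeleton. The sketch below assumes specifications are stored as Markdown files using the numbered `## N. Title` headings from the example structure; the `missing_sections` helper is hypothetical and could run as a pre-merge check in your repository.

```python
import re

# Section titles taken from the example Markdown skeleton.
REQUIRED_SECTIONS = [
    "Overview",
    "Inputs",
    "Outputs",
    "Goals & Metrics",
    "Constraints & Guardrails",
    "Data & Privacy",
    "Governance & Versioning",
    "Validation Tests",
    "Compliance & Audit",
]

# Matches headings such as "## 4. Goals & Metrics" and captures the title.
HEADING = re.compile(r"^##[ \t]+\d+\.[ \t]+(.+?)[ \t]*$", re.MULTILINE)


def missing_sections(markdown_text):
    """Return required section titles that do not appear in the text."""
    found = set(HEADING.findall(markdown_text))
    return [title for title in REQUIRED_SECTIONS if title not in found]


sample_spec = """# billing assistant specification

## 1. Overview

- Name: billing-assistant

## 2. Inputs

- Data sources: invoice database
"""

print(missing_sections(sample_spec))
# ['Outputs', 'Goals & Metrics', 'Constraints & Guardrails', 'Data & Privacy',
#  'Governance & Versioning', 'Validation Tests', 'Compliance & Audit']
```

This only confirms that sections exist, not that their content is adequate, so it complements human review rather than replacing it.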

Common pitfalls and best practices

- Pitfall: Vague language leads to misinterpretation. Practice precise, testable statements.

- Best practice: Tie every goal to a verifiable test or scenario.

- Pitfall: Overloading the template with too many optional fields.

- Best practice: Start lean, add sections as needed.

- Pitfall: Neglecting data privacy implications.

- Best practice: Include explicit privacy and access controls from day one.

Tools & Materials

- Documentation template (Markdown or rich text): ensure it's editable and version-controlled

- Stakeholder interview checklist: capture requirements from product, security, and data teams

- Example datasets or data schema spec: provide representative inputs/outputs

- Version control system (e.g., Git): track changes to the template and instances

- Diagramming tool (optional): visualize data contracts and interfaces

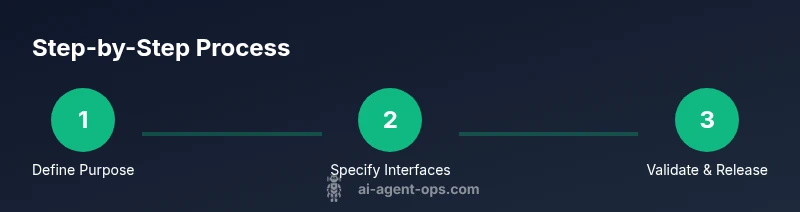

Steps

Estimated time: 60-120 minutes for a first pass; 2-3 hours including stakeholder review

1. Define the agent's purpose and scope

State the agent's core objective and the domain it will operate in. Clarify boundaries to prevent scope creep and to guide validation tests. Document who uses the template and who approves changes.

Tip: Link the purpose to a concrete user story or business outcome to ensure relevance.

2. Identify inputs, outputs, and data contracts

List every input the agent will consume and every output it will produce. Specify formats, validation rules, and error handling. Include data provenance to support traceability.

Tip: Use example payloads to illustrate valid and invalid data early.

3. Specify goals, success criteria, and constraints

Translate business goals into measurable KPIs and acceptance criteria. Define guardrails and constraints that limit undesired behavior and ensure compliance.

Tip: Attach a concrete test case to each goal for automated validation.

4. Define safety, governance, and privacy controls

Capture policy requirements, escalation paths, and privacy safeguards. Outline who can modify the template and how approvals are obtained for changes.

Tip: Document data access roles and retention policies to support audits.

5. Draft template sections and formatting

Create a clean, modular template with sections that map to your agent classes. Use consistent terminology to reduce ambiguity across teams.

Tip: Provide a ready-to-use starter version for different agent types.

6. Review with stakeholders

Share the draft with product, security, data, and compliance teams. Collect feedback and resolve ambiguities before finalizing.

Tip: Record decisions and rationale in a change log.

7. Validate with real tasks and version control

Run representative tests to verify that the template captures required details. Commit the final version to your repository and publish a governance plan.

Tip: Tag major revisions and maintain a backward-compatibility plan.

Questions & Answers

What is the purpose of an AI agent specification template?

It standardizes how teams describe an AI agent, covering purpose, inputs/outputs, goals, constraints, safety, and governance to enable consistent development and governance.

It's a standard blueprint that helps teams describe what an AI agent should do and how it should be governed.

Who should own and maintain the template?

Ownership typically rests with a cross-functional governance team including product, engineering, and security. Regular reviews keep the template aligned with policy and technical changes.

A cross-functional team should own and update the template over time.

What sections are essential in the template?

Core sections include purpose, inputs/outputs, goals and metrics, constraints, data contracts, governance, privacy, and validation tests.

At minimum, include purpose, inputs/outputs, goals, constraints, data contracts, governance, and tests.

How does the template support compliance?

By documenting privacy controls, data lineage, access restrictions, and escalation policies, the template provides auditable evidence for regulators and internal reviews.

It records privacy and governance details so regulators and teams can review them.

How often should the template be updated?

Update cadence depends on regulatory changes, new use cases, and product evolution; at minimum, schedule annual reviews and as-needed revisions after major incidents.

Review the template at least once a year and after any major incident or policy change.

Key Takeaways

- Define clear, measurable goals linked to tests.

- Capture inputs/outputs with explicit data contracts.

- Embed governance and privacy controls from day one.

- Use versioning to manage template evolution.

- Align the template with real-world use cases to drive adoption.