AI agents and their types: a comprehensive comparison

Explore the main AI agent types, architectures, and trade-offs. This analytical guide helps developers and leaders choose among reactive, deliberative, learning, and hybrid agents to optimize automation and governance.

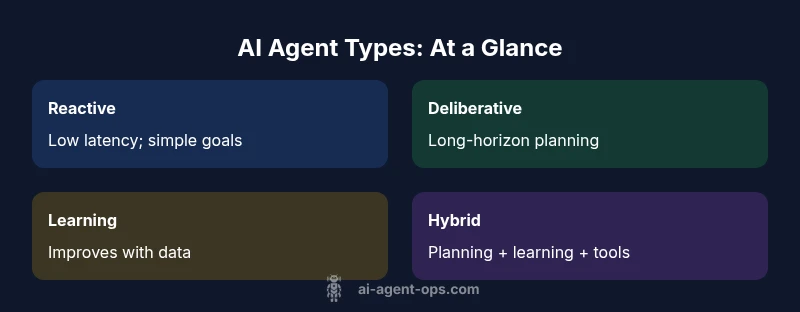

This comparison groups AI agents into four core families (reactive, deliberative, learning, and hybrid) and shows how each handles perception, planning, memory, and tool use. By mapping your task to these types, you can choose the right architecture, set realistic expectations, and design governance around autonomy. The agent type you pick will shape latency, explainability, and adaptability across your workflows. See the sections below for practical deployment guidance.

What is an AI agent, and what are its types?

AI agents are software programs that perceive their environment, reason about goals, and take actions to achieve those goals. In the broad landscape of AI, agent types span reactive agents, deliberative (goal-driven) agents, learning-based agents, and hybrid architectures that combine planning, learning, and tool use. According to Ai Agent Ops, understanding these types helps teams design more reliable automation and guard against over-promising capabilities. The term 'AI agent' often implies autonomy: systems that can operate with minimal human intervention, adapt to new tasks, and integrate with other software via APIs and plugins.

The central distinction among agent types is how they represent knowledge, how they plan, and how they learn. Reactive agents respond to stimuli with minimal internal state; deliberative agents construct plans to pursue long-horizon objectives; learning agents adjust behavior through experience; and hybrid agents fuse planning with learning and external tool use. For developers and business leaders, the taxonomy matters because it maps to latency constraints, explainability demands, and governance considerations. When you map your problem to one or more agent types, you can select architectures that balance speed and accuracy, provide auditable reasoning, and scale across departments. Each type supports a different level of autonomy and reliability, and each has associated design patterns, evaluation metrics, and integration challenges.

In practice, teams often start with a simple reactive agent to handle routine events and then layer deliberative or learning components as needs mature. The landscape also includes agentic AI, where agents coordinate with other agents and external tools to achieve system-wide goals. In this article, we examine the main categories and offer practical guidance on when to use which type, how to combine them, and what trade-offs to expect across industries.
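To make the simplest of these types concrete, here is a minimal sketch of a reactive agent in Python. It assumes a hypothetical monitoring scenario: the `cpu` percept field, the thresholds, and the action names are illustrative and do not come from any particular product. The agent maps each percept straight to an action through condition-action rules and keeps no state between calls.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical condition-action rules: each pairs a predicate on the
# current percept with the action to take when the predicate matches.
Rule = Tuple[Callable[[Dict[str, float]], bool], str]

RULES: List[Rule] = [
    (lambda p: p["cpu"] > 0.9, "page_on_call"),
    (lambda p: p["cpu"] > 0.7, "scale_up"),
]


def reactive_agent(percept: Dict[str, float], rules: List[Rule] = RULES) -> str:
    """Return the action of the first matching rule; no state is kept."""
    for condition, action in rules:
        if condition(percept):
            return action
    return "no_op"


print(reactive_agent({"cpu": 0.80}))  # scale_up
print(reactive_agent({"cpu": 0.95}))  # page_on_call
```

Because the rules are checked in order, the stricter threshold comes first. The lack of memory is what keeps such an agent fast and predictable, and also what limits it to simple goals.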

Core categories of AI agents

A comprehensive view of AI agent types identifies four core families: reactive agents, deliberative (goal-driven) agents, learning (adaptive) agents, and hybrid agents that combine elements of planning, reasoning, and tool use. Each category makes different trade-offs around latency, reliability, and explainability, and each fits different business needs. Reactive agents are fast, inexpensive, and suitable for real-time stimulus-response tasks; they excel where the environment is stable and the goals are simple. Deliberative agents carry internal models and plan sequences of actions to achieve longer-horizon objectives, making them well suited to multi-step workflows and policy enforcement. Learning agents improve through experience, user feedback, or data streams, enabling personalization and continuous improvement. Hybrid agents fuse planning with learning and external tools to handle complex, dynamic environments. Real-world examples include chat assistants that combine retrieval (RAG) with planning, automation orchestrators that schedule tasks across services, and autonomous testing agents that adapt based on outcomes. When evaluating agent types for a project, weigh speed against flexibility, and consider how much you value explainability and governance. The choice often hinges on whether your priority is immediate responsiveness, long-term strategy, or continuous improvement through data-driven updates.
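As a contrast to a stimulus-response design, the following sketch shows what "planning a sequence of actions" can look like in a deliberative agent. It assumes a hypothetical support-ticket workflow modeled as a small state graph (the state and action names are invented for illustration) and uses breadth-first search to find the shortest action sequence from a start state to a goal state. Real planners are far richer; this only illustrates the idea of an internal model plus search.

```python
from collections import deque
from typing import Dict, List, Optional

# Hypothetical world model: each state maps action names to the next state.
TRANSITIONS: Dict[str, Dict[str, str]] = {
    "ticket_open": {"classify": "ticket_classified"},
    "ticket_classified": {"draft_reply": "reply_drafted", "escalate": "escalated"},
    "reply_drafted": {"send": "resolved"},
    "escalated": {"human_review": "resolved"},
}


def plan(start: str, goal: str,
         transitions: Dict[str, Dict[str, str]] = TRANSITIONS) -> Optional[List[str]]:
    """Breadth-first search for the shortest action sequence from start to goal."""
    queue = deque([(start, [])])
    visited = {start}
    while queue:
        state, actions = queue.popleft()
        if state == goal:
            return actions
        for action, next_state in transitions.get(state, {}).items():
            if next_state not in visited:
                visited.add(next_state)
                queue.append((next_state, actions + [action]))
    return None  # the goal cannot be reached from the start state


print(plan("ticket_open", "resolved"))  # ['classify', 'draft_reply', 'send']
```

The returned plan is an explicit, inspectable artifact, which is one reason deliberative agents tend to be easier to audit than purely reactive or learned policies.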

Architectures powering AI agents: planning, memory, and reasoning

AI agents rely on a spectrum of architectures that balance symbolic reasoning and statistical learning. At one end are reactive, stimulus–response models; at the other are deliberative systems based on the Belief-Desire-Intention (BDI) model or on planners driven by a domain description language such as PDDL. A modern agent often uses a hybrid cognitive architecture that combines: (1) perception modules and world models; (2) a planner or decision module to generate sequences of actions; (3) memory components that store past observations and outcomes; and (4) a tool-use layer that calls external APIs or plugins. Adopting such an architecture helps an agent maintain traceable reasoning for audits and governance. For instance, BDI-inspired agents can log beliefs, goals, and intentions to support explainability, while planning-based agents publish plans that can be inspected by humans. Memory architectures, including episodic memory and long-term memory, enable agents to adapt across sessions and domains. Tool-use capabilities, such as browsing, a calculator, or domain-specific libraries, extend reach without hard-coding every response. As teams design agent systems, they should select an architecture that matches their target latency, scaling expectations, and regulatory requirements. This alignment is essential for enterprise deployments, particularly in regulated industries or safety-critical contexts.
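The four components listed above can be sketched as one small class. This is an illustrative skeleton under stated assumptions, not a reference architecture: the `HybridAgent` class, the toy `calculator` and `respond` tools, and the digit-based perception rule are all hypothetical. The point is the shape of the loop (perceive, plan, act through tools, record a trace) and how the recorded trace supports later inspection.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class HybridAgent:
    tools: Dict[str, Callable[[str], str]]            # tool-use layer
    memory: List[dict] = field(default_factory=list)  # episodic memory and audit trace

    def perceive(self, raw: str) -> dict:
        # Perception: turn raw input into a tiny belief about the world.
        return {"text": raw, "needs_math": any(ch.isdigit() for ch in raw)}

    def plan(self, belief: dict) -> List[str]:
        # Planner: choose an ordered list of tool names for this belief.
        return ["calculator", "respond"] if belief["needs_math"] else ["respond"]

    def act(self, raw: str) -> str:
        belief = self.perceive(raw)
        steps = self.plan(belief)
        result = raw
        for name in steps:
            result = self.tools[name](result)
        # Record belief, plan, and outcome so a reviewer can inspect them later.
        self.memory.append({"belief": belief, "plan": steps, "result": result})
        return result


# Toy tools (assumptions): sum the integer tokens in the text, then format a reply.
agent = HybridAgent(tools={
    "calculator": lambda s: str(sum(int(t) for t in s.split() if t.isdigit())),
    "respond": lambda s: f"Answer: {s}",
})

print(agent.act("add 2 and 3"))  # Answer: 5
print(agent.memory[0]["plan"])   # ['calculator', 'respond']
```

Keeping the plan and belief in the trace, rather than only the final result, is the design choice that makes the agent's reasoning auditable.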

Tools, memory, and learning in agent workflows

A key distinction among agent types is how tools are integrated and how memory is managed. Many agents rely on external tools or plugins to extend capabilities: search engines, databases, code execution environments, calendars, or CRM systems. A practical pattern is to treat tools as modular interfaces, enabling safe substitution and clear access control. Short-term memory keeps track of the current conversation or task state, while long-term or external memory stores domain knowledge and past outcomes. Retrieval-augmented generation (RAG) and memory-augmented adapters are common techniques to improve accuracy: the agent retrieves relevant context and supplies it to the model instead of depending only on what the model already encodes. Learning, whether through supervised fine-tuning, reinforcement learning, or rule-based adaptation, lets agents improve over time, particularly in user-facing domains where preferences shift. However, learning comes with risk: distribution shift, bias, and safety concerns. Therefore, governance and testing must accompany learning-enabled agents. In practice, many teams start with a reliable reactive or deliberative agent, then add learning components to personalize interactions or optimize workflows. This staged approach reduces risk while unlocking the benefits of agentic AI.
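One way to read "tools as modular interfaces with clear access control" is a registry that hides every tool behind the same call signature and checks a per-role allow-list before dispatching. The sketch below is a minimal illustration of that pattern; the `ToolRegistry` class, the role names, and the two stub tools are assumptions made for the example, not part of any specific framework.

```python
from typing import Callable, Dict, Set


class ToolRegistry:
    """Tools behind one interface, each with a per-role allow-list."""

    def __init__(self) -> None:
        self._tools: Dict[str, Callable[[str], str]] = {}
        self._allowed: Dict[str, Set[str]] = {}

    def register(self, name: str, fn: Callable[[str], str], roles: Set[str]) -> None:
        # Registering an existing name again swaps the implementation in place.
        self._tools[name] = fn
        self._allowed[name] = set(roles)

    def call(self, role: str, name: str, arg: str) -> str:
        if name not in self._tools:
            raise KeyError(f"unknown tool: {name}")
        if role not in self._allowed[name]:
            raise PermissionError(f"role {role!r} may not call {name!r}")
        return self._tools[name](arg)


registry = ToolRegistry()
# Stub tools standing in for a real search backend and CRM client (hypothetical).
registry.register("search", lambda q: f"results for {q}", roles={"support", "admin"})
registry.register("crm_update", lambda rec: f"updated {rec}", roles={"admin"})

print(registry.call("support", "search", "refund policy"))  # results for refund policy
```

Because callers only know the tool name, a stub can later be replaced by a production client without touching agent code, and the allow-list gives governance a single place to review what each agent role may do.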

Real-world patterns and best practices for deploying AI agents

In real-world deployments, agents of every type must operate within organizational constraints, security policies, and performance targets. Establish a governance model early: define guardrails, data provenance, and audit trails so decisions are explainable and traceable. Start with a narrow scope (one clear task or one customer journey), validate it, then scale. Use modular architectures so you can swap in a more capable agent type as requirements evolve. Measure success with a balanced scorecard of metrics: task completion rate, latency, error rate, user satisfaction, and system reliability. Make sure to assess risk across data handling, privacy, and adversarial manipulation. For many teams, hybrid approaches deliver the best of both worlds: a fast reactive core that handles routine work, augmented by a deliberative or learning layer for exceptions and personalization. Across industries, from software as a service to healthcare, organizations are experimenting with AI agents to automate decision workflows, orchestrate cross-system tasks, and improve responsiveness. Ai Agent Ops analysis shows a rising trend toward orchestrators that combine planning with external tools, signaling the industry's move toward agentic AI patterns.
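The balanced scorecard mentioned above can be computed from a simple interaction log. The following sketch assumes a hypothetical log record (`Interaction`) with a completion flag, a latency, an error flag, and a 1 to 5 satisfaction score; the field names and the scale are illustrative. System reliability usually comes from infrastructure monitoring rather than per-interaction logs, so it is left out here.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List


@dataclass
class Interaction:
    completed: bool     # did the agent finish the task?
    latency_ms: float   # end-to-end response time
    error: bool         # did the interaction raise or return an error?
    satisfaction: int   # hypothetical 1-5 survey score


def scorecard(log: List[Interaction]) -> Dict[str, float]:
    """Aggregate per-interaction records into the scorecard metrics."""
    if not log:
        raise ValueError("log is empty")
    n = len(log)
    return {
        "task_completion_rate": sum(i.completed for i in log) / n,
        "mean_latency_ms": mean(i.latency_ms for i in log),
        "error_rate": sum(i.error for i in log) / n,
        "mean_satisfaction": mean(i.satisfaction for i in log),
    }


log = [
    Interaction(True, 100.0, False, 5),
    Interaction(True, 200.0, False, 4),
    Interaction(True, 300.0, False, 4),
    Interaction(False, 400.0, True, 3),
]
print(scorecard(log)["task_completion_rate"])  # 0.75
```

Reporting the metrics together, rather than optimizing one in isolation, makes it easier to notice when a latency gain was bought with a higher error rate.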

Decision framework: choosing the right AI agent type for your project

To select the best agent type for a project, start by clarifying the business objective and success metrics. Is speed paramount, or is long-horizon planning more valuable? What level of explainability is required for stakeholders, regulators, or customers? Create a decision checklist: 1) latency constraints; 2) required level of autonomy; 3) need for learning or personalization; 4) governance and safety requirements; 5) integration with existing tools and data sources. Map these criteria to the four main categories: reactive, deliberative, learning, and hybrid. Use a staged evaluation plan: prototype a minimal viable agent, collect feedback, and incrementally incorporate more capable types as needed. When deciding, consider a hybrid strategy, that is, a core reactive agent handling real-time tasks with an overlaid deliberative or learning component for edge cases or optimization. This approach helps manage risk while delivering measurable value across departments. Also, ensure you have a plan for monitoring, updating, and decommissioning agents if performance degrades or governance requirements shift. By following this framework, organizations can realize reliable automation with clear accountability.
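The checklist can be turned into a first-pass triage function. The sketch below is deliberately simplified and rests on assumptions: it encodes only latency, multi-step planning, personalization, and audit needs (autonomy level and integration constraints are left out), and the 200 ms cutoff is an arbitrary illustrative threshold. Treat its output as a starting point for discussion, not a verdict.

```python
from dataclasses import dataclass


@dataclass
class Requirements:
    max_latency_ms: int          # latency budget per decision
    multi_step: bool             # does the task need multi-step planning?
    needs_personalization: bool  # must behavior adapt to users over time?
    needs_audit_trail: bool      # must decisions be inspectable after the fact?


def recommend(req: Requirements) -> str:
    """Map a few checklist answers to one of the four agent families."""
    wants_planning = req.multi_step or req.needs_audit_trail
    if wants_planning and req.needs_personalization:
        return "hybrid"
    if req.needs_personalization:
        return "learning"
    if wants_planning:
        # Hypothetical cutoff: planning under a tight latency budget suggests
        # a reactive core with a deliberative layer for exceptions.
        return "hybrid" if req.max_latency_ms < 200 else "deliberative"
    return "reactive"


print(recommend(Requirements(50, False, False, False)))   # reactive
print(recommend(Requirements(2000, True, False, True)))   # deliberative
```

Writing the mapping down as code forces the team to state its thresholds explicitly, which makes the choice easier to revisit when requirements shift.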

Common myths and pitfalls around AI agents

A frequent myth is that an AI agent, whatever its type, can function perfectly without human oversight. In reality, governance, monitoring, and safety controls are essential. Another misconception is that more complex agents automatically yield better results; in fact, complexity can raise risk and maintenance costs. Many teams overestimate the transferability of a given agent across tasks or domains; tailoring the architecture to the specific environment is crucial. Finally, it's easy to confuse automation with intelligence: an agent's success depends on good data, robust interfaces, and well-defined objectives. Clear, actionable prompts and templates help maintain consistency and reduce drift across sessions. By dispelling these myths, organizations set realistic expectations for agent performance and governance.

The role of AI agent types in agentic AI and business impact

Agent types play a central role in agentic AI strategies that coordinate multiple agents to achieve common goals across platforms. Deliberative and hybrid types are well suited to orchestrating workflows that span tools, APIs, and teams, while reactive agents excel at handling high-volume, low-latency tasks. Personalization emerges from learning agents that adapt to user preferences over time, feeding into marketing, customer support, and product optimization. The business impact is not just in automation cost savings but in the ability to experiment with new operating models, reduce cycle times, and improve decision quality through transparent reasoning and auditable traces. Organizations should design agent ecosystems with governance as a first-class concern, ensuring safety, privacy, and accountability across all agent types.

Measurable outcomes and success metrics for AI agents

Evaluating AI agents requires multiple dimensions that reflect both performance and governance. Core metrics include task success rate, average latency, user satisfaction scores, and error rates. For learning-based agents, monitor convergence time, stability during updates, and potential biases introduced by training data. It's essential to track the end-to-end impact on business processes: did the agent reduce cycle times, improve accuracy, or boost net promoter scores? A robust evaluation plan also includes scenario testing, red team exercises, and ongoing validation of data privacy and security controls. In sum, AI agents offer a path to scalable automation, provided organizations invest in careful selection, governance, and continuous improvement.
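For learning components, "convergence time" can be given a simple operational meaning. The sketch below assumes a toy learner that keeps a running-mean estimate of a reward signal (for example, a user-feedback score) and reports how many updates it took before the estimate stopped moving by more than a tolerance for several updates in a row. The function name, the tolerance, and the window size are illustrative assumptions; real systems would apply the same idea to whatever quantity their model estimates.

```python
from typing import Iterable, Optional


def updates_until_stable(rewards: Iterable[float],
                         tol: float = 0.05,
                         window: int = 3) -> Optional[int]:
    """Feed rewards into a running-mean estimate and return the 1-based update
    count at which the estimate has moved by less than `tol` for `window`
    consecutive updates, or None if that never happens."""
    estimate = 0.0
    calm = 0  # consecutive updates with a change below the tolerance
    for n, reward in enumerate(rewards, start=1):
        new_estimate = estimate + (reward - estimate) / n  # incremental mean
        calm = calm + 1 if abs(new_estimate - estimate) < tol else 0
        estimate = new_estimate
        if calm >= window:
            return n
    return None


print(updates_until_stable([1.0] * 10))                      # 4
print(updates_until_stable([1.0, 0.0, 1.0, 0.0, 1.0, 0.0]))  # None
```

A steady signal settles after a few updates, while an oscillating one never does within the sample, which is the kind of instability worth flagging before a learning agent's updates reach production.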

Feature Comparison

| Feature | Reactive Agent | Deliberative/Goal-Driven Agent | Learning/Adaptive Agent | Hybrid/Agentic Agent |
|---|---|---|---|---|
| Decision scope | Short-sighted, reflexive responses | Long-horizon planning to achieve defined goals | Adaptation through data and feedback | Planning + learning + tool orchestration |
| Memory/State | Minimal internal state | Explicit state and goal models | Experience data and feedback loops | External memory with planning context |
| Latency | Low latency, fast cycles | Moderate to high latency due to planning | Variable latency depending on learning loop | Hybrid latency shaped by components |
| Explainability | Low explainability | Medium explainability via plans/goals | Variable; depends on data and model | Higher explainability through auditable plans |
| Best for | Real-time monitoring and alerting | Complex workflows and policy enforcement | Personalization and improvement over time | Coordinated multi-task orchestration |
| Complexity | Low | Medium-High | High | High |

Positives

- Clear taxonomy helps teams choose the right type

- Supports governance and auditable decision trails

- Enables modular, scalable automation

- Facilitates gradual sophistication from simple to complex agents

What's Bad

- Hybrid models increase system complexity and maintenance

- Evaluation requires multi-metric analysis

- Overreliance on learning can risk bias and drift

- Governance and safety controls add upfront work

Select the agent type based on task needs rather than chasing sophistication

Reactive agents for fast tasks, deliberative for planning, learning for personalization, and hybrids for orchestration. Use a staged approach to minimize risk and maximize governance.

Questions & Answers

What is an AI agent, and what are its types, in simple terms?

An AI agent is a software system that perceives its environment, reasons about goals, and takes actions to achieve them. The main types are reactive, deliberative, learning, and hybrid agents, each with different planning and memory characteristics.

An AI agent is smart software that senses, decides, and acts. The main types are reactive, deliberative, learning, and hybrid, each with different planning and memory styles.

When should I choose a reactive vs a deliberative agent?

Choose reactive agents for fast, low-latency tasks with simple goals. Deliberative agents suit long-horizon planning and complex workflows where explainability matters.

Pick reactive for speed, deliberative for planning and clarity in decisions.

Can learning agents be trusted in production?

Learning agents can improve over time but require governance, testing, and monitoring to manage drift and bias. Start with a controlled scope and gradually expand.

Learning agents can help a lot, but you need governance and careful testing.

What is agentic AI and how does it relate to AI agents?

Agentic AI refers to systems that coordinate multiple AI agents to achieve shared goals, often using hybrid architectures to orchestrate tools and workflows across platforms.

Agentic AI is about coordinating several agents to work together.

What metrics matter when evaluating AI agents?

Key metrics include task success rate, latency, reliability, user satisfaction, and governance compliance. For learning components, track convergence and bias.

Look at success, speed, reliability, user feedback, and governance.

What are common pitfalls in deploying AI agents?

Avoid overcomplicating architecture, underestimating data quality needs, and skipping governance. Start with a narrow scope and iterate with feedback.

Don't overcomplicate things; test early and govern strictly.

Key Takeaways

- Map your task to an agent type before building

- Prioritize governance early in design

- Use hybrid approaches for complex workflows

- Prototype, measure, and iterate

- Plan for monitoring and updates from day one