Different Types of AI Agents: A Practical Builder's Guide

Explore the different types of AI agents—from reactive to multi-agent systems—and learn how to choose the right agent type for your project with practical guidance for developers and leaders.

According to Ai Agent Ops, the AI-agent landscape spans from simple reactive primitives to advanced agentic systems, and understanding these types is crucial for effective automation. The most impactful distinctions lie in how agents perceive, reason, and decide—shaping how you architect automation. For practical purposes, start with a simple reactive agent and plan a path toward goal-based, learning-enabled, and multi-agent configurations as needs evolve.

What are AI agents? Core concept and taxonomy

AI agents are autonomous or semi-autonomous software entities that act on behalf of a user or system within an environment. They perceive inputs, reason about them, and take actions to achieve goals or optimize utility. The different types of AI agents vary in their perception, decision-making, and learning capabilities, and in how they coordinate with other systems. The Ai Agent Ops framework emphasizes that effective agent design starts with mapping your problem to a suitable agent class, recognizing that many real-world problems benefit from a hybrid approach that blends reactive, deliberative, and learning components. In practice, teams should describe the agent’s boundary, its expected behaviors, and the data it can leverage. This helps avoid scope creep and aligns stakeholders around measurable outcomes.
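
To make the perceive, reason, act cycle concrete, here is a minimal sketch in Python. The `Agent` protocol, the `ThermostatAgent` class, and the `temperature_c` percept key are all hypothetical names chosen for illustration; they do not come from any particular framework.

```python
from dataclasses import dataclass
from typing import Protocol


class Agent(Protocol):
    """Hypothetical minimal interface: one percept in, one action out."""

    def act(self, percept: dict) -> str: ...


@dataclass
class ThermostatAgent:
    """Illustrative agent whose boundary is a single temperature reading."""

    target_c: float = 21.0
    tolerance_c: float = 0.5

    def act(self, percept: dict) -> str:
        temp = percept["temperature_c"]           # perceive
        if temp < self.target_c - self.tolerance_c:  # reason
            return "heat_on"                      # act
        if temp > self.target_c + self.tolerance_c:
            return "heat_off"
        return "hold"


def run(agent: Agent, percepts: list[dict]) -> list[str]:
    """Feed a sequence of percepts through the agent and collect its actions."""
    return [agent.act(p) for p in percepts]


readings = [{"temperature_c": 19.0}, {"temperature_c": 21.2}, {"temperature_c": 23.0}]
actions = run(ThermostatAgent(), readings)
print(actions)
```

Writing down the percept shape and the allowed actions this explicitly is one way to pin the agent's boundary before adding planning or learning.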

A well-structured taxonomy includes reactive agents, model-based or symbolic agents, goal-based and utility-based agents, learning agents, planners, and multi-agent systems. Each category has distinct strengths, weaknesses, and data requirements. For developers and product leaders, the critical question is not only which type to deploy, but how to compose multiple types into an architecture that remains maintainable, observable, and safe. As you explore the different types of AI agents, keep a running map of capabilities, interfaces, and expected latency. According to Ai Agent Ops, practical AI agent design often starts with a simple baseline and grows into layered agentic workflows that combine perception, reasoning, and action.

Feature Comparison

| Feature | Reactive Agent | Deliberative/Model-based Agent | Goal-based Agent | Learning Agent |
|---|---|---|---|---|
| Definition | Perception→action based on immediate stimuli | Uses an internal model to reason about world state before acting | Chooses actions to achieve explicit goals; may revise plans | Learns from data or interaction to improve performance over time |
| Reasoning depth and cost | Low computational overhead; fast responses | High planning capability; can simulate outcomes | Future-oriented with explicit goals; adaptable to new plans | Data-driven; performance improves with experience |
| Perception-action loop | Direct mapping from inputs to actions | Internal world model guides decisions | Goal-directed search over actions | Policy/learning-based action selection |
| Data needs | Minimal data; rule-based | Structured models; domain knowledge | Goals and constraints; task-level data | Experience data; feedback signals |
| Best use case | Real-time control; simple automation | Complex problem solving; environments with rich state | Tasks with clear end-state goals or constraints | Adaptive systems with feedback loops and continuous improvement |

Positives

- Clear separation of concerns facilitates testing and maintenance

- Low runtime overhead for simple tasks enables real-time decisioning

- Easier to reason about behavior and debug in isolation

- Modular upgrades: swap in new components without rewriting the whole system

- Supports predictable governance and safety constraints

What's Bad

- Limited long-horizon reasoning without added planning layers

- Rigid behavior if not integrated with learning or planning

- Upfront design effort to specify goals, models, and interfaces

- Can incur higher development and compute costs for complex setups

Layered, mixed-type agent architectures deliver the best balance of reliability and adaptability.

A layered approach that blends reactive, goal-based, planning, and learning components typically outperforms single-type designs. Start simple, validate quickly, then incrementally add agent types to handle complexity and evolving requirements.
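
One way to picture a layered design is a priority-ordered list of decision functions, where a fast reactive rule gets the first say and slower goal-directed logic runs only if the rule stays silent. The sketch below assumes that arrangement; `safety_reflex`, `goal_layer`, `layered_agent`, and the percept keys are hypothetical names, not part of any library.

```python
from typing import Callable, Optional

Percept = dict
Layer = Callable[[Percept], Optional[str]]


def safety_reflex(percept: Percept) -> Optional[str]:
    """Reactive layer: fires immediately when a hard constraint is violated."""
    if percept.get("obstacle_distance_m", float("inf")) < 0.5:
        return "stop"
    return None  # no opinion; defer to the next layer


def goal_layer(percept: Percept) -> Optional[str]:
    """Goal-based layer: move along a 1-D track until the goal is reached."""
    position, goal = percept["position"], percept["goal"]
    if position == goal:
        return None
    return "forward" if goal > position else "backward"


def layered_agent(layers: list[Layer], default: str = "idle") -> Callable[[Percept], str]:
    """Return an agent that uses the first layer producing an action."""

    def act(percept: Percept) -> str:
        for layer in layers:
            action = layer(percept)
            if action is not None:
                return action
        return default

    return act


agent = layered_agent([safety_reflex, goal_layer])
print(agent({"position": 0, "goal": 3, "obstacle_distance_m": 0.2}))
print(agent({"position": 0, "goal": 3}))
```

Because each layer is an ordinary function, a planning or learning layer can be appended later without touching the reactive rule, which matches the start-simple, add-incrementally advice above.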

Questions & Answers

What are AI agents and why do they matter in software design?

AI agents are autonomous or semi-autonomous software entities that perceive their environment, reason about it, and take actions to achieve defined goals. They matter because they can operate continuously, adapt to changing data, and offload routine decision-making from humans. Understanding the different types of AI agents helps teams design systems that are more scalable, observable, and capable of handling complex tasks.

AI agents act and decide on behalf of humans, adapting to data and goals over time.

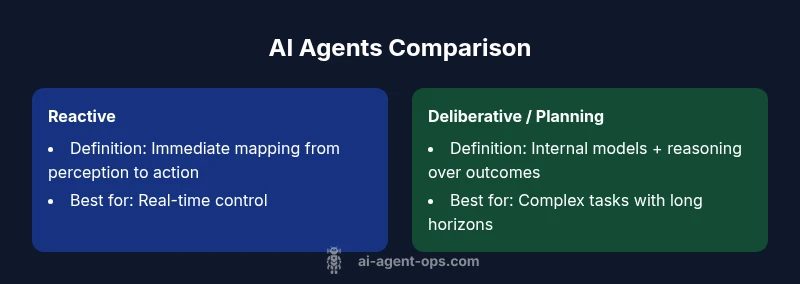

How do reactive and deliberative agents differ in practice?

Reactive agents respond immediately to inputs with simple mappings from perception to action, offering speed and reliability for straightforward tasks. Deliberative agents use internal models and planning to simulate outcomes before acting, enabling more complex problem solving at the cost of increased computation and design complexity.

Reactive is fast; deliberative planners are smarter but heavier to run.
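
The contrast can be sketched on a tiny grid world. This is an illustrative toy under stated assumptions: the grid layout, `reactive_step`, and `deliberative_plan` are hypothetical, and breadth-first search stands in for whatever planner a real deliberative agent would use.

```python
from collections import deque

# "S" start, "G" goal, "#" wall, "." free cell (hypothetical layout).
GRID = ["S#G",
        "..."]
START, GOAL = (0, 0), (0, 2)
MOVES = {"right": (0, 1), "left": (0, -1), "down": (1, 0), "up": (-1, 0)}


def is_free(cell):
    r, c = cell
    return 0 <= r < len(GRID) and 0 <= c < len(GRID[0]) and GRID[r][c] != "#"


def reactive_step(pos):
    """Fixed rule with no memory or lookahead: close the column gap, then the row gap.

    Returns a move name, or None when at the goal or when the chosen move is blocked.
    """
    (r, c), (gr, gc) = pos, GOAL
    if c != gc:
        name = "right" if gc > c else "left"
    elif r != gr:
        name = "down" if gr > r else "up"
    else:
        return None
    dr, dc = MOVES[name]
    return name if is_free((r + dr, c + dc)) else None


def deliberative_plan(start):
    """Breadth-first search over the grid model; returns a list of moves or None."""
    frontier = deque([(start, [])])
    seen = {start}
    while frontier:
        pos, path = frontier.popleft()
        if pos == GOAL:
            return path
        for name, (dr, dc) in MOVES.items():
            nxt = (pos[0] + dr, pos[1] + dc)
            if is_free(nxt) and nxt not in seen:
                seen.add(nxt)
                frontier.append((nxt, path + [name]))
    return None


print(reactive_step(START))      # the wall blocks the only move the rule considers
print(deliberative_plan(START))  # the planner routes around the wall
```

The reactive rule answers in constant time but has no alternative when its one move is blocked, while the planner explores the model of the grid first and pays for that with extra computation and memory.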

When should I consider learning agents?

Learning agents improve performance as they gain experience from data and feedback. They are valuable when the environment is noisy or evolving, and when you have access to representative data streams. They complement reactive or planning components by optimizing policies over time.

Use learning agents when you have data and want the system to get better with experience.
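
As a rough illustration of learning from feedback, the sketch below uses an epsilon-greedy bandit, one of the simplest learning strategies. It is a swapped-in example technique, not a method the article prescribes; `EpsilonGreedyAgent`, `simulate`, and the success rates are hypothetical.

```python
import random


class EpsilonGreedyAgent:
    """Keeps a running average reward per action and mostly picks the best one."""

    def __init__(self, n_actions, epsilon=0.1, seed=0):
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.counts = [0] * n_actions
        self.values = [0.0] * n_actions  # estimated reward per action

    def act(self):
        if self.rng.random() < self.epsilon:
            return self.rng.randrange(len(self.values))  # explore
        return max(range(len(self.values)), key=self.values.__getitem__)  # exploit

    def learn(self, action, reward):
        """Incremental mean update from one feedback signal."""
        self.counts[action] += 1
        self.values[action] += (reward - self.values[action]) / self.counts[action]


def simulate(steps=2000, success_rates=(0.2, 0.5, 0.8), seed=1):
    """Noisy environment: action i succeeds with probability success_rates[i]."""
    env_rng = random.Random(seed)
    agent = EpsilonGreedyAgent(len(success_rates))
    for _ in range(steps):
        action = agent.act()
        reward = 1.0 if env_rng.random() < success_rates[action] else 0.0
        agent.learn(action, reward)
    return agent


agent = simulate()
best_action = max(range(len(agent.values)), key=agent.values.__getitem__)
print(best_action, [round(v, 2) for v in agent.values])
```

The agent starts with no knowledge of which action pays off and converges on the most rewarding one only because it receives a steady feedback stream, which is the data requirement the answer above describes.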

What risks come with multi-agent systems?

Multi-agent systems add coordination complexity, potential contention, and emergent behaviors that are hard to predict. They require careful interface design, clear protocols, and robust monitoring to prevent unsafe or inefficient outcomes.

Multi-agent systems can become complex; plan for governance and safety checks.
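
Contention over a shared resource is one of the simpler coordination problems to sketch. The example below assumes a central arbiter with a priority-based protocol and a log for monitoring; the `Coordinator` class and the agent and resource names are hypothetical, and real systems may use very different protocols.

```python
from dataclasses import dataclass, field


@dataclass
class Coordinator:
    """Hypothetical arbiter: grants each resource to at most one agent per round."""

    log: list = field(default_factory=list)  # denied requests, kept for monitoring

    def resolve(self, requests: dict[str, tuple[str, int]]) -> dict[str, str]:
        """requests maps agent name -> (resource, priority); returns resource -> winner.

        The higher priority wins; ties go to the alphabetically first agent.
        """
        winners: dict[str, str] = {}
        best: dict[str, int] = {}
        for agent, (resource, priority) in sorted(requests.items()):
            if resource not in winners or priority > best[resource]:
                if resource in winners:
                    self.log.append(f"{winners[resource]} denied {resource}")
                winners[resource], best[resource] = agent, priority
            else:
                self.log.append(f"{agent} denied {resource}")
        return winners


coordinator = Coordinator()
grants = coordinator.resolve({
    "picker": ("aisle_3", 1),
    "restocker": ("aisle_3", 2),
    "cleaner": ("aisle_7", 1),
})
print(grants)
print(coordinator.log)
```

An explicit protocol like this makes conflicts visible in the log instead of leaving them to emerge as unpredictable behavior between agents.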

How can I evaluate agent performance effectively?

Evaluation should align with defined goals and operational constraints. Use measurable metrics such as response latency, success rate, learning progress, and safety incidents. Regular A/B testing, simulations, and real-world pilots help validate decisions across agent types.

Measure latency, success, and safety to judge how well agents perform.
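
The metrics named above can be computed from a plain list of run records. This is a minimal sketch; the `Episode` fields and the `summarize` function are hypothetical, and the 95th percentile uses the nearest-rank method as one reasonable choice among several.

```python
import math
from dataclasses import dataclass


@dataclass
class Episode:
    """One recorded agent run (hypothetical schema)."""

    latency_ms: float
    succeeded: bool
    safety_incident: bool = False


def summarize(episodes):
    """Aggregate latency, success, and safety metrics over a batch of runs."""
    n = len(episodes)
    if n == 0:
        raise ValueError("need at least one episode")
    latencies = sorted(e.latency_ms for e in episodes)
    p95_index = math.ceil(0.95 * n) - 1  # nearest-rank percentile
    return {
        "success_rate": sum(e.succeeded for e in episodes) / n,
        "mean_latency_ms": sum(latencies) / n,
        "p95_latency_ms": latencies[p95_index],
        "safety_incidents": sum(e.safety_incident for e in episodes),
    }


runs = [
    Episode(100.0, True),
    Episode(200.0, True),
    Episode(300.0, False, safety_incident=True),
    Episode(400.0, True),
]
report = summarize(runs)
print(report)
```

Running the same `summarize` over two agent variants on the same tasks gives a simple basis for the A/B comparisons mentioned above.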

Key Takeaways

- Map your problem to agent types

- Start with a reactive baseline for reliability

- Incorporate planning and/or learning for adaptability

- Use multi-agent coordination when scaling across domains

- Measure performance with real-world tasks and guardrails