Which AI Agent Is Best for Image Generation? A Comparative Guide

Learn how to pick the best AI agent for image generation. This analytical guide compares architectures, costs, latency, and governance to help developers and business leaders choose the right agent strategy in 2026.

There is no one-size-fits-all answer to the question of which AI agent is best for image generation. The optimal choice depends on your priorities, such as image quality, prompt control, latency, and governance. In practice, teams often pair a strong single-model agent as the primary generator with orchestrators or multi-model ensembles that cover a wider range of prompts and outputs. According to Ai Agent Ops, the best path is a decision framework that weighs quality, speed, cost, and compliance rather than chasing a single, universal winner. This article provides a structured comparison to help you decide.

Why this question matters for image generation

In the rapidly evolving field of image generation, choosing the right AI agent shapes not just image quality but also your team's velocity and governance. The question of which AI agent is best for image generation sits at the intersection of model capability, integration ease, data handling, and cost. For developers, product managers, and leaders, the decision affects how quickly you can prototype, how reliably you can reproduce results, and how you manage access to sensitive data. The Ai Agent Ops team emphasizes that understanding your own workflow (prompt complexity, iteration cadence, and deployment context) is the first step toward a sound choice. When you frame the problem as a balance between creativity and control, you unlock a scalable pattern for image pipelines that stay robust as requirements evolve.

Key factors shaping the effectiveness of image-generation agents

The landscape includes diffusion-based models, transformer-based image editors, and rule-based constraint layers. Core capabilities to evaluate include prompt sensitivity, fidelity to style prompts, color and detail control, and the agent's ability to handle multi-step or iterative prompts. Latency matters when you need quick turnarounds, while cost matters in long-running campaigns or large catalogs. Governance considerations—data residency, model provenance, and audit trails—are essential for organizational compliance. While a single, well-tuned agent can perform many tasks, most teams benefit from an orchestration layer that routes prompts to the most suitable model, combines results, and applies post-processing rules. Ai Agent Ops highlights that a balanced approach reduces risk and increases throughput in professional workflows.
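As a rough illustration of the routing idea, the sketch below picks a backend for each prompt using simple keyword rules. The route names (`photo-model`, `illustration-model`, `general-model`) and the keyword rules are hypothetical placeholders, not real products or a recommended policy; a production router would likely use richer signals.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Route:
    name: str                        # hypothetical backend label
    matches: Callable[[str], bool]   # rule deciding whether this route fits


def pick_route(prompt: str, routes: list[Route], default: str) -> str:
    """Return the name of the first route whose rule matches the prompt."""
    for route in routes:
        if route.matches(prompt):
            return route.name
    return default


# Illustrative rules only: keyword matching stands in for real routing logic.
ROUTES = [
    Route("photo-model", lambda p: "photo" in p.lower()),
    Route("illustration-model",
          lambda p: "illustration" in p.lower() or "sketch" in p.lower()),
]

print(pick_route("A photo of a red bicycle", ROUTES, default="general-model"))
```

Keeping the rules in a plain list makes the routing order explicit and easy to review, which helps when routing decisions need to be audited later.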

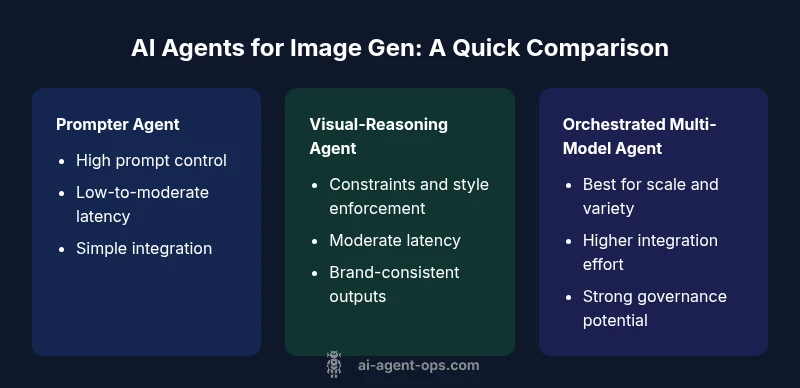

Common agent archetypes for image-generation tasks

There are several archetypes you can consider, each with trade-offs. The Prompter Agent relies on a strong prompt engineering layer to maximize quality from a single model. The Visual-Reasoning Agent adds constraint checks, style enforcement, and contextual reasoning to keep outputs aligned with brand guidelines. The Orchestrated Multi-Model Agent coordinates several models and tools, applying governance rules across generation, modification, and evaluation steps. When choosing, map your workload to the most relevant architecture: simple prompts to a single model, complex prompts to an agent with multi-step reasoning, and outputs that must be easy to audit to an orchestrator. The Ai Agent Ops framework suggests starting with one solid base model and layering from there as requirements become clearer.
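One way to picture the layering is a wrapper that adds constraint checks on top of a base generator and retries until the checks pass. This is only a sketch under stated assumptions: `fake_generate`, the `palette` field, and the `on_brand` rule are invented stand-ins for a real model call and real brand checks.

```python
from itertools import cycle
from typing import Callable

Generator = Callable[[str], dict]   # returns metadata describing one output
Check = Callable[[dict], bool]      # returns True when the output is acceptable


def with_constraints(generate: Generator, checks: list[Check],
                     max_attempts: int = 3) -> Generator:
    """Wrap a base generator so it regenerates until every check passes."""
    def wrapped(prompt: str) -> dict:
        for attempt in range(1, max_attempts + 1):
            result = generate(prompt)
            if all(check(result) for check in checks):
                return {**result, "attempts": attempt}
        raise RuntimeError(f"no compliant output after {max_attempts} attempts")
    return wrapped


# Stand-in base model: alternates between an off-brand and an on-brand palette.
_palettes = cycle(["neon", "brand-blue"])


def fake_generate(prompt: str) -> dict:
    return {"prompt": prompt, "palette": next(_palettes)}


def on_brand(result: dict) -> bool:
    # Hypothetical brand rule: only the brand palette is allowed.
    return result["palette"] == "brand-blue"


brand_agent = with_constraints(fake_generate, [on_brand])
result = brand_agent("hero banner for spring sale")
print(result)
```

Because the wrapper has the same call signature as the base generator, the constraint layer can be added or removed without changing the calling code.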

Evaluation criteria and benchmarking basics

Benchmarking should cover three dimensions: quality (fidelity to the prompt, coherence, and style accuracy), performance (latency per render and throughput under load), and governance (traceability, data handling, and access controls). Use standardized prompts to measure repeatability and create a small catalog of prompts that represent typical tasks. Document variability across runs to understand stability. Remember to separate subjective judgments (aesthetics) from objective metrics. PSNR and SSIM compare an output against a reference image, so they apply mainly to editing or reconstruction tasks where a target exists; for open-ended generation, rely on perceptual quality estimates. Calibrate scores with a review panel if possible. The goal is to create a defensible, data-driven basis for selecting an agent architecture rather than relying on anecdotal success stories.
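To make the performance and repeatability measurements concrete, here is a minimal timing harness. It assumes a `render` callable that takes a prompt and blocks until the image is ready; `fake_render` is a stand-in for a real model call, and the prompt text is illustrative.

```python
import statistics
import time
from typing import Callable


def benchmark(render: Callable[[str], object], prompt: str, runs: int = 5) -> dict:
    """Time repeated renders of one prompt and summarize latency in seconds."""
    latencies = []
    for _ in range(runs):
        start = time.perf_counter()
        render(prompt)
        latencies.append(time.perf_counter() - start)
    return {
        "prompt": prompt,
        "runs": runs,
        "mean_s": statistics.mean(latencies),
        # Sample standard deviation needs at least two measurements.
        "stdev_s": statistics.stdev(latencies) if runs > 1 else 0.0,
        "max_s": max(latencies),
    }


def fake_render(prompt: str) -> bytes:
    # Stand-in for a real model call.
    time.sleep(0.01)
    return prompt.encode("utf-8")


report = benchmark(fake_render, "product shot, white background", runs=3)
print(report)
```

Running the same harness over every prompt in the catalog gives a per-prompt latency spread, which is the variability the paragraph above suggests documenting.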

Decision framework: match goals to agent type

Start by listing top priorities: fastest turnaround, highest fidelity, or strict governance. If speed is king and prompts are straightforward, a robust single-model Prompter Agent may suffice. If outputs must adhere to brand guidelines or multiple styles, add a Visual-Reasoning layer to enforce constraints. For diverse catalogs or high-stakes outputs, an Orchestrated Multi-Model Agent often yields the best balance, provided governance and cost controls are in place. Use a decision matrix that includes: quality, latency, cost, ease of integration, data governance, and scalability. The Ai Agent Ops model recommends piloting 2–3 architectures on representative prompts to observe real-world trade-offs before full adoption.
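The decision matrix can be sketched as a weighted score per architecture. The weights and the 1 to 5 scores below are made-up placeholders chosen only to show the mechanics; they are not measurements, and a real matrix should use numbers from your own pilot.

```python
# Relative importance of each criterion (assumed values; they sum to 1.0).
WEIGHTS = {
    "quality": 0.3, "latency": 0.2, "cost": 0.2,
    "integration": 0.1, "governance": 0.1, "scalability": 0.1,
}

# Placeholder scores on a 1-5 scale, higher is better for every criterion.
SCORES = {
    "prompter": {"quality": 3, "latency": 5, "cost": 5,
                 "integration": 5, "governance": 2, "scalability": 2},
    "visual_reasoning": {"quality": 4, "latency": 3, "cost": 3,
                         "integration": 3, "governance": 4, "scalability": 3},
    "orchestrated": {"quality": 5, "latency": 2, "cost": 2,
                     "integration": 2, "governance": 5, "scalability": 5},
}


def weighted_score(scores: dict, weights: dict) -> float:
    """Sum each criterion score multiplied by its weight."""
    return sum(weights[name] * scores[name] for name in weights)


ranked = sorted(SCORES, key=lambda name: weighted_score(SCORES[name], WEIGHTS),
                reverse=True)
for name in ranked:
    print(f"{name}: {weighted_score(SCORES[name], WEIGHTS):.2f}")
```

The ranking is driven entirely by the weights, so shifting weight toward governance or scalability can reorder the result. That sensitivity is the point of the exercise: agree on the weights before looking at the scores.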

Practical implementation patterns for teams

Begin with a lightweight pilot: pick a base model and define 3–5 prompts that cover your typical use cases. Add a prompting guide, style templates, and post-processing rules. Introduce an orchestration layer only after you’ve established stable prompts and a baseline quality. Build a simple evaluation dashboard that records render times, quality judgments, and variations across styles. As you scale, implement governance policies: data handling, access controls, and versioning. Finally, plan for continuous improvement by collecting user feedback and updating prompts, styles, and constraints. The most successful teams curate an evolving toolkit rather than a single “best” agent.
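A style template can be as simple as a parameterized string. The sketch below uses Python's standard `string.Template`; the template wording, the style names, and the pilot subjects are illustrative assumptions rather than recommended prompts.

```python
from string import Template

# Hypothetical style templates; real ones would come from your brand guide.
STYLE_TEMPLATES = {
    "catalog": Template(
        "$subject, studio lighting, plain white background, $aspect aspect ratio"),
    "editorial": Template(
        "$subject, natural light, shallow depth of field, $aspect aspect ratio"),
}


def build_prompt(style: str, subject: str, aspect: str = "1:1") -> str:
    """Fill the chosen style template with a subject and aspect ratio."""
    return STYLE_TEMPLATES[style].substitute(subject=subject, aspect=aspect)


# A small pilot set covering typical use cases (placeholder subjects).
PILOT_SUBJECTS = ["ceramic mug", "running shoe", "desk lamp"]
pilot_prompts = [build_prompt("catalog", subject) for subject in PILOT_SUBJECTS]

for prompt in pilot_prompts:
    print(prompt)
```

Keeping templates in one place means a style change is a single edit that applies to every prompt in the pilot, which makes before-and-after comparisons cleaner.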

Practical guidance on data privacy, licensing, and model provenance

Data privacy and licensing directly affect the decision on which AI agent is best for image generation. Prefer agents that provide clear data-use policies, model provenance, and auditable rendering histories. For organizations, keeping source prompts under control and ensuring that generated assets inherit clear licensing terms avoids downstream disputes. When you combine this with explicit governance for model updates and access auditing, you create a robust operating model. Ai Agent Ops stresses the importance of documenting decisions, maintainable prompts, and a clear upgrade path so teams can adapt as models evolve.
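An auditable rendering history can start as one structured record per generated asset. The field names and example values below are assumptions for illustration; the essential idea is to tie each output to its prompt, model version, and licensing label, and to hash the image so later tampering or mix-ups are detectable.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(prompt: str, model_id: str, model_version: str,
                 license_id: str, image_bytes: bytes) -> dict:
    """Build a log entry that ties a generated asset to its inputs."""
    return {
        "created_at": datetime.now(timezone.utc).isoformat(),
        "model_id": model_id,
        "model_version": model_version,
        "license": license_id,
        "prompt": prompt,
        # The hash identifies the exact bytes without storing the image here.
        "image_sha256": hashlib.sha256(image_bytes).hexdigest(),
    }


record = audit_record(
    prompt="flat illustration of a city skyline",
    model_id="base-model",             # placeholder identifier
    model_version="2026-01",           # placeholder version tag
    license_id="internal-use-only",    # placeholder licensing label
    image_bytes=b"fake image bytes",   # stand-in for real image data
)
print(json.dumps(record, indent=2))
```

Appending these records to a write-once log gives reviewers a way to answer which model and prompt produced a given asset.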

How to measure success in production

Success in production hinges on stable pipelines, reproducible results, and alignment with business goals. Define metrics for image quality (qualitative reviews and objective metrics), speed (average render time and pipeline latency), and governance (auditability and compliance). Establish a baseline using your chosen base model, then test additional agents or orchestrators against the baseline. Track changes over time as prompts, styles, and use cases evolve. A disciplined, data-informed approach helps you avoid overcommitment to a single technology while remaining responsive to new capabilities.
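Comparing a candidate against the baseline can be reduced to a per-metric difference. The run data below is invented for illustration, and the two metrics (`quality` as a reviewer score, `render_s` as seconds per render) are assumed names.

```python
from statistics import mean


def summarize(runs: list[dict]) -> dict:
    """Average each metric across a list of runs."""
    return {
        "quality": mean(run["quality"] for run in runs),
        "render_s": mean(run["render_s"] for run in runs),
    }


def delta_vs_baseline(candidate: dict, baseline: dict) -> dict:
    """Positive quality delta is better; positive render_s delta is slower."""
    return {metric: candidate[metric] - baseline[metric] for metric in baseline}


# Placeholder measurements, not real benchmark results.
baseline_runs = [{"quality": 3.5, "render_s": 4.0}, {"quality": 3.7, "render_s": 4.2}]
candidate_runs = [{"quality": 4.1, "render_s": 6.0}, {"quality": 4.3, "render_s": 6.4}]

delta = delta_vs_baseline(summarize(candidate_runs), summarize(baseline_runs))
print(delta)
```

In this made-up case the candidate scores higher on quality but renders more slowly, which is the kind of trade-off the baseline comparison is meant to surface.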

Real-world usage scenarios and trade-offs

Consider cases such as a design studio that needs brand-consistent imagery, a game developer generating assets at scale, or a marketing team producing diverse visuals for campaigns. In each scenario, you trade off control, speed, and cost in different proportions. A studio may favor an orchestrated approach to maintain brand consistency across assets, while a small team might prioritize a lightweight prompter agent for rapid iterations. Understanding these trade-offs helps you tailor the agent strategy to your organization’s unique needs.

Pitfalls to avoid and optimization tips

Common errors include underestimating the governance overhead, choosing an agent solely on cost, and failing to document prompts and their variants. Mitigate these by creating a prompt library, establishing review checkpoints, and integrating a simple rollback mechanism for outputs that miss quality gates. Optimization often comes from refining prompts, adding style constraints, and layering validation checks within an orchestration step to ensure outputs stay on-brand and within policy.
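A simple rollback mechanism might look like the following sketch: run the candidate through every gate and, if any gate fails, keep the last approved asset. The gate thresholds and asset fields (`width`, `height`, `review_score`) are hypothetical.

```python
from typing import Callable, Optional

Gate = Callable[[dict], bool]


def min_resolution(asset: dict) -> bool:
    # Hypothetical gate: require at least 1024 pixels on each side.
    return asset["width"] >= 1024 and asset["height"] >= 1024


def reviewer_approved(asset: dict) -> bool:
    # Hypothetical gate: reviewer score of 4 or more on a 1-5 scale.
    return asset["review_score"] >= 4


def select_asset(candidate: dict, last_good: Optional[dict],
                 gates: list[Gate]) -> tuple[Optional[dict], list[str]]:
    """Return the asset to publish plus the names of any failed gates."""
    failed = [gate.__name__ for gate in gates if not gate(candidate)]
    if failed:
        return last_good, failed   # roll back to the previous approved asset
    return candidate, failed


last_good = {"id": "banner-v1", "width": 2048, "height": 2048, "review_score": 5}
candidate = {"id": "banner-v2", "width": 2048, "height": 2048, "review_score": 2}

published, failed = select_asset(candidate, last_good,
                                 [min_resolution, reviewer_approved])
print(published["id"], failed)
```

Returning the failed gate names alongside the chosen asset gives reviewers a record of why a rollback happened.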

Feature Comparison

| Feature | Prompter Agent | Visual-Reasoning Agent | Orchestrated Multi-Model Agent |
|---|---|---|---|
| Core Approach | Single-model, prompt-driven | Prompt constraints + style checks | Coordinates multiple models and prompts |
| Quality of Outputs | High with strong prompts | Consistent with constraints and style | Potentially highest with ensemble |
| Latency | Low to moderate | Moderate due to checks | Moderate to high due to orchestration |
| Cost | Low to moderate | Moderate to high (post-processing) | Moderate to high (model calls and orchestration) |
| Best For | Straightforward prompts and fast cycles | Brand-compliant, style-bound outputs | Diverse outputs at scale with governance |

Positives

- Enables high flexibility with modular design

- Can optimize quality while controlling costs

- Improves reproducibility through orchestration

- Supports governance and traceability at scale

What's Bad

- Adds architectural complexity and maintenance burden

- May incur higher upfront integration effort

- Requires governance discipline and monitoring

- Latency can increase with orchestration layers

Orchestrated multi-model agents offer the strongest long-term flexibility and governance, but require investment in integration and monitoring.

If your use case spans multiple styles or brands, orchestration provides scale and control. For tightly scoped tasks, a single robust prompter may be more cost-efficient.

Questions & Answers

What makes an AI agent the best for image generation?

The best AI agent balances output quality, prompt control, latency, and governance with your organization's constraints. It should deliver consistent results, integrate cleanly with existing tools, and support auditable workflows.

The best agent balances quality, speed, and governance, matching your organization’s needs while staying auditable and easy to integrate.

Should I use a single agent or orchestration?

If prompts are simple and speed is crucial, a strong single-model agent can be sufficient. For complex prompts, brand constraints, and scale, orchestration across models is typically more robust and future-proof.

Start with one solid model, then add orchestration if your prompts get complex or you need scale.

Are open-source agents competitive with commercial ones?

Open-source options can be competitive on cost and customization, but may require more in-house expertise for maintenance and governance. Commercial agents often provide stronger support and richer governance tooling.

Open-source options can work well with your team, but expect to invest in governance and maintenance.

How do I measure image quality versus speed?

Establish standardized prompts, collect human and perceptual quality scores, and record render times across runs. Use a simple delta metric to track improvements over time and against baselines.

Use standardized prompts and track both quality scores and render times to compare options.

What governance considerations matter most?

Prioritize data residency, licensing, model provenance, prompt versioning, and audit trails. Governance reduces risk as you scale image-generation activities.

Data residency, licensing, and audit trails are key as you scale.

What’s a practical first step for teams?

Run a small pilot of 3–5 representative prompts with a base model, define success metrics, and implement a minimal governance framework. Use the results to decide whether to layer in orchestration.

Pilot with a base model, measure results, then decide on orchestration.

Key Takeaways

- Define clear goals: quality, speed, governance

- Start with a base model, then layer orchestration

- Prioritize data policy and licensing early

- Pilot 2–3 architectures on representative prompts

- Document prompts and results for auditability