Tips for Building AI Agents Anthropically: A Practical Guide

A practical, governance-minded guide from Ai Agent Ops on building AI agents with agentic design principles, covering goals, architecture, safety, data handling, and evaluation for scalable, ethical AI agent systems.

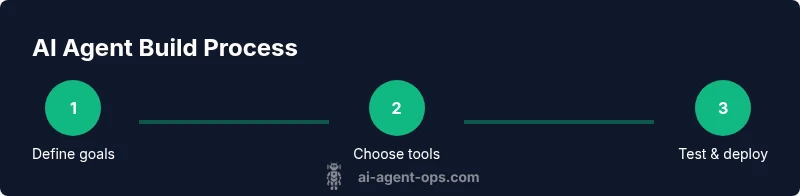

This quick guide walks through the practical steps of building AI agents with anthropic-style design: you'll define goals, assemble capabilities, implement agentic workflows, and apply safety and governance controls from day one. Following these steps helps you create scalable, auditable agents that align with business outcomes. Throughout, the emphasis is on governance, ethics, and measurable performance so teams can ship responsibly.

Why these tips matter for building AI agents in anthropic frameworks

When building AI agents in anthropic-style frameworks, clarity of purpose and guardrails are essential. According to Ai Agent Ops, governance and risk considerations must drive architecture from day one, not as an afterthought. This section explains why a governance-minded, modular approach matters when you design agentic workflows: you need to balance autonomy with controllability so agents can act effectively while staying aligned with organizational policies. In practice, that means decomposing complex goals into explicit, testable tasks and documenting assumptions so teams can audit decisions later. Anchoring development to auditable processes reduces the likelihood of cascading failures when agents encounter novel situations. Data provenance, tool selection, and logging should likewise be treated as first-class design choices, not catch-up tasks. Together, these practices support responsible, scalable agent design that respects human oversight and regulatory constraints.

Tools & Materials

- LLM API access: Choose a provider with strong policy controls and fine-grained tool access.

- Agent orchestration framework: Select a framework that supports pluggable tools, memory, and policy hooks.

- Version control system: Use Git with clear branching for experiments and governance.

- Data privacy and governance policy docs: Have templates for data handling, retention, and consent.

- Logging and monitoring stack: Centralized logging, anomaly detection, and alerting.

- Testing environment (sandbox): Isolate experiments from production; enable safe rollback.

- Prompts library and templates: Versioned prompts, templates, and safety constraints.

- Security & compliance checklist: Regular reviews for access, secrets, and data use.

- Compute resources: Reserved capacity for experimentation and deployment.

Steps

Estimated time: 2-3 hours for a pilot, plus ongoing iterations

- 1

Define goals and constraints

Articulate the agent's primary objective, its intended users, and its operational constraints (latency, data access, safety). Translate the goals into measurable success criteria and guardrails that prevent common failure modes. This grounding makes later steps auditable and repeatable.
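Writing the spec as plain data makes the success criteria and guardrails checkable rather than aspirational. The sketch below is a minimal illustration under stated assumptions: `AgentSpec`, its fields, the helper functions, and the example values (an IT-support agent, a 5-second latency budget, a 0.9 success target) are all hypothetical, not taken from this guide.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass(frozen=True)
class AgentSpec:
    """Hypothetical goal/constraint spec; field names are illustrative only."""
    objective: str
    users: str
    max_latency_s: float                 # operational constraint: latency budget per run
    allowed_data_sources: frozenset      # operational constraint: data access
    min_success_rate: float              # success criterion, checked across many runs
    blocked_actions: frozenset = field(default_factory=frozenset)  # guardrail


def check_run(spec: AgentSpec, latency_s: float, sources_used: Set[str], actions: List[str]) -> List[str]:
    """Return the per-run constraints a single agent run violated (empty list = compliant)."""
    violations = []
    if latency_s > spec.max_latency_s:
        violations.append(f"latency {latency_s:.1f}s exceeds {spec.max_latency_s:.1f}s")
    unexpected = sources_used - spec.allowed_data_sources
    if unexpected:
        violations.append(f"unapproved data sources: {sorted(unexpected)}")
    forbidden = [a for a in actions if a in spec.blocked_actions]
    if forbidden:
        violations.append(f"blocked actions attempted: {forbidden}")
    return violations


def meets_success_target(spec: AgentSpec, outcomes: List[bool]) -> bool:
    """Aggregate criterion: the share of successful runs must reach the target."""
    return sum(outcomes) / len(outcomes) >= spec.min_success_rate


spec = AgentSpec(
    objective="Resolve internal IT support tickets",
    users="employees",
    max_latency_s=5.0,
    allowed_data_sources=frozenset({"kb", "ticket_history"}),
    min_success_rate=0.9,
    blocked_actions=frozenset({"delete_account"}),
)

print(check_run(spec, 3.2, {"kb"}, ["search_kb", "reply"]))  # → []
print(check_run(spec, 7.5, {"kb", "hr_db"}, ["delete_account"]))
```

Because the spec is ordinary data, it can sit in version control next to the agent and be reviewed by stakeholders like any other change.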

Tip: Document goals in a single, shared spec that all stakeholders can review.

- 2

Assemble capabilities and tools

Identify the core capabilities the agent needs (planning, retrieval, tool usage, memory). Map each capability to a tool or API and define how the agent should choose among them. Create a lightweight prototype that the team can test within a sandbox.
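One way to make the capability-to-tool mapping explicit is a small registry. This is a minimal sketch under stated assumptions: the `Tool` class, the tool names, and the sandbox order data are hypothetical, and the rule-based `handles` predicates stand in for the model's own tool choice so the example runs without an LLM. When no tool matches, the request is flagged for escalation rather than guessed at.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Tool:
    name: str
    capability: str                   # e.g. "lookup", "retrieval"
    handles: Callable[[str], bool]    # selection rule: can this tool serve the request?
    run: Callable[[str], str]


ORDERS = {"A100": "shipped", "A200": "processing"}  # hypothetical sandbox data


def lookup_order(request: str) -> str:
    order_id = request.split()[-1]
    return f"order {order_id}: {ORDERS.get(order_id, 'not found')}"


def search_docs(request: str) -> str:
    return f"top doc for '{request}'"


# Order matters: the first matching rule wins.
TOOLS: List[Tool] = [
    Tool("order_lookup", "lookup", lambda r: r.lower().startswith("order status"), lookup_order),
    Tool("doc_search", "retrieval", lambda r: r.endswith("?"), search_docs),
]


def select_tool(request: str) -> Optional[Tool]:
    """Return the first tool whose rule matches, or None to signal escalation."""
    for tool in TOOLS:
        if tool.handles(request):
            return tool
    return None


for req in ["order status A100", "How do I reset my password?", "Cancel everything"]:
    tool = select_tool(req)
    print(req, "->", tool.run(req) if tool else "no tool matched; escalate")
```

Keeping the registry this small mirrors the tip below: each added tool widens what the agent can do and what you have to test.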

Tip: Start with a minimal, well-scoped toolset to reduce complexity early.

- 3

Design agent workflow orchestration

Define the end-to-end loop: goal understanding → plan generation → tool execution → result evaluation → loop or escalation. Include human-in-the-loop checkpoints for high-stakes tasks and establish clear exit criteria for autonomous decisions.
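The loop can be sketched in a few lines. This is a minimal sketch, assuming goal understanding is folded into the planner and that the planner, executor, evaluator, and human approver are passed in as callables; `run_agent`, `Step`, the stub functions, and the three-iteration budget are hypothetical. High-stakes steps stop at a human checkpoint, and exhausting the iteration budget is the exit criterion that forces escalation.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Step:
    action: str
    high_stakes: bool = False  # high-stakes steps require human approval


def run_agent(
    goal: str,
    plan: Callable[[str, List[str]], List[Step]],
    execute: Callable[[Step], str],
    evaluate: Callable[[str, List[str]], bool],
    approve: Callable[[Step], bool],
    max_iterations: int = 3,
) -> dict:
    """Plan -> execute -> evaluate, looping until the goal is met or the agent escalates."""
    history: List[str] = []
    for iteration in range(1, max_iterations + 1):
        for step in plan(goal, history):
            # Human-in-the-loop checkpoint before any high-stakes action.
            if step.high_stakes and not approve(step):
                return {"status": "escalated", "reason": f"approval denied: {step.action}", "history": history}
            history.append(execute(step))
        if evaluate(goal, history):
            return {"status": "done", "iterations": iteration, "history": history}
    # Exit criterion for autonomy: stop and hand off once the iteration budget is spent.
    return {"status": "escalated", "reason": "max iterations reached", "history": history}


# Stubs standing in for model calls and real tools.
def plan(goal: str, history: List[str]) -> List[Step]:
    return [Step("look up order A100"), Step("issue refund", high_stakes=True)]


def execute(step: Step) -> str:
    return f"done: {step.action}"


def goal_met(goal: str, history: List[str]) -> bool:
    return "done: issue refund" in history


approved = run_agent("refund order A100", plan, execute, goal_met, approve=lambda s: True)
rejected = run_agent("refund order A100", plan, execute, goal_met, approve=lambda s: False)
print(approved["status"], "|", rejected["status"], "|", rejected["reason"])
```

Returning a structured status instead of raising makes every exit path (done, denied, budget exhausted) easy to log and review.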

Tip: Explicitly specify when to escalate and who must approve outcomes.

- 4

Implement safety and governance

Embed guardrails and dynamic risk checks, and log every decision the agent makes. Apply access controls, secret management, and prompt-safety policies to limit misuse of the agent's capabilities. Build data privacy and compliance into the design rather than adding them later.
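A permission gate with an audit trail is one concrete form these controls can take. This is a minimal sketch, assuming role-based permissions and a single illustrative risk check; `PERMISSIONS`, `TOOLS`, `guarded_call`, and the digit-run heuristic are hypothetical, and a production system would rely on its own policy engine, secret manager, and centralized log store. Every outcome (executed, denied, blocked) is logged, so refusals are as auditable as successes.

```python
import json
import re
import time
from typing import Callable, Dict, List, Set

# Hypothetical role-based permissions and tools; a real system would load these from policy config.
PERMISSIONS: Dict[str, Set[str]] = {"support_agent": {"search_kb"}}
TOOLS: Dict[str, Callable[[str], str]] = {
    "search_kb": lambda q: f"results for {q}",
    "delete_user": lambda user: f"deleted {user}",
}
AUDIT_LOG: List[str] = []  # stand-in for a centralized, append-only log store


def audit(event: str, **details: str) -> None:
    """Record every decision as one JSON line so it can be reviewed later."""
    AUDIT_LOG.append(json.dumps({"ts": time.time(), "event": event, **details}, sort_keys=True))


def risk_check(arg: str) -> bool:
    """Illustrative dynamic check: reject arguments with long digit runs (possible card/account numbers)."""
    return re.search(r"\d{12,}", arg) is None


def guarded_call(role: str, tool: str, arg: str) -> str:
    if tool not in PERMISSIONS.get(role, set()):
        audit("denied", role=role, tool=tool, reason="not permitted")
        raise PermissionError(f"{role} may not call {tool}")
    if not risk_check(arg):
        audit("blocked", role=role, tool=tool, reason="risk check failed")
        raise PermissionError(f"risk check blocked call to {tool}")
    result = TOOLS[tool](arg)
    # The argument itself is not logged, to keep potentially sensitive input out of the audit trail.
    audit("executed", role=role, tool=tool)
    return result


print(guarded_call("support_agent", "search_kb", "vpn setup"))
for tool, arg in [("delete_user", "bob"), ("search_kb", "card 4111111111111111")]:
    try:
        guarded_call("support_agent", tool, arg)
    except PermissionError as err:
        print("refused:", err)
print([json.loads(line)["event"] for line in AUDIT_LOG])
```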

Tip: Keep a living safety policy and log every agent action for audits.

- 5

Test, measure, and iterate

Run controlled experiments to measure performance against the success criteria defined in step 1. Use ablation studies, which disable one component at a time, to understand which components drive results. Iterate rapidly, but validate each change with data and stakeholder review before adopting it.
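A toy ablation harness illustrates the idea: the same labeled cases run against the full agent and against variants with one component disabled, recording both the success rate and each individual failure. Everything here is hypothetical (`make_agent`, the "retrieval" and "normalizer" components, the four test cases); the stub agent replaces a real one so the comparison is reproducible, and the `no_retrieval` variant doubles as a simple baseline.

```python
from typing import Callable, Dict, List, Tuple

KNOWLEDGE = {"capital of france": "paris", "largest planet": "jupiter"}  # hypothetical knowledge base

# Hypothetical labeled cases: (input, expected answer).
CASES: List[Tuple[str, str]] = [
    ("Capital of France?", "paris"),
    ("largest planet", "jupiter"),
    ("Largest planet?", "jupiter"),
    ("tallest mountain", "everest"),  # deliberately unanswerable: exposes a failure mode
]


def make_agent(use_retrieval: bool, use_normalizer: bool) -> Callable[[str], str]:
    """Build a stub agent with individual components switched on or off."""
    def agent(question: str) -> str:
        q = question.lower().strip(" ?") if use_normalizer else question
        if use_retrieval and q in KNOWLEDGE:
            return KNOWLEDGE[q]
        return "unknown"
    return agent


def evaluate(agent: Callable[[str], str], cases: List[Tuple[str, str]]) -> Tuple[float, List[Tuple[str, str, str]]]:
    """Return (success rate, list of failures as (input, expected, got))."""
    failures = []
    for question, expected in cases:
        got = agent(question)
        if got != expected:
            failures.append((question, expected, got))
    return 1 - len(failures) / len(cases), failures


VARIANTS: Dict[str, Callable[[str], str]] = {
    "full": make_agent(use_retrieval=True, use_normalizer=True),
    "no_normalizer": make_agent(use_retrieval=True, use_normalizer=False),
    "no_retrieval": make_agent(use_retrieval=False, use_normalizer=True),
}

for name, agent in VARIANTS.items():
    rate, failures = evaluate(agent, CASES)
    print(f"{name}: success={rate:.2f}, failures={[f[0] for f in failures]}")
```

On these toy cases the full agent scores 0.75, removing the normalizer drops it to 0.25, and removing retrieval drops it to 0.00, which is the kind of attribution an ablation is meant to surface. Keeping the failure list, not just the rate, is what lets you track failure modes as the tip below suggests.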

Tip: Track both success metrics and failure modes to improve robustness.

- 6

Prepare for production and monitoring

Package the agent with versioned prompts, tools, and policies. Deploy to a staged environment first, then monitor performance, latency, and safety signals continuously, with rollback capabilities ready.
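The monitoring half of this step can be sketched as a check over each window of staged traffic. This is a minimal sketch, assuming per-request records of latency, errors, and safety flags; the `Request` and `Thresholds` types, the threshold values, and the rule that a safety alert (or alerts of two or more kinds) triggers rollback while a single other alert pages on-call are illustrative assumptions, not values prescribed by this guide.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Request:
    latency_s: float
    error: bool
    safety_flag: bool


@dataclass(frozen=True)
class Thresholds:
    max_p95_latency_s: float
    max_error_rate: float
    max_safety_flags: int


def check_window(requests: List[Request], t: Thresholds) -> List[Tuple[str, str]]:
    """Return (kind, message) alerts for one monitoring window."""
    alerts = []
    latencies = sorted(r.latency_s for r in requests)
    rank = -(-95 * len(latencies) // 100)  # nearest-rank 95th percentile (integer ceiling)
    p95 = latencies[rank - 1]
    if p95 > t.max_p95_latency_s:
        alerts.append(("latency", f"p95 latency {p95:.2f}s > {t.max_p95_latency_s:.2f}s"))
    error_rate = sum(r.error for r in requests) / len(requests)
    if error_rate > t.max_error_rate:
        alerts.append(("errors", f"error rate {error_rate:.1%} > {t.max_error_rate:.1%}"))
    flags = sum(r.safety_flag for r in requests)
    if flags > t.max_safety_flags:
        alerts.append(("safety", f"{flags} safety flags > {t.max_safety_flags}"))
    return alerts


def decide(alerts: List[Tuple[str, str]]) -> str:
    """Illustrative policy: safety issues or multiple alert kinds roll back; one other alert pages on-call."""
    kinds = {kind for kind, _ in alerts}
    if "safety" in kinds or len(kinds) >= 2:
        return "rollback"
    if kinds:
        return "page_on_call"
    return "continue"


T = Thresholds(max_p95_latency_s=3.0, max_error_rate=0.02, max_safety_flags=0)
healthy = [Request(1.0, False, False)] * 20
slow = [Request(1.0, False, False)] * 18 + [Request(9.0, False, False)] * 2
bad = [Request(1.0, False, False)] * 18 + [Request(9.0, True, True)] * 2

for name, window in [("healthy", healthy), ("slow", slow), ("bad", bad)]:
    print(name, decide(check_window(window, T)))
```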

Tip: Set up alert thresholds and a clear incident-response plan.

Questions & Answers

What is an AI agent in this guide?

An AI agent is a software entity that autonomously acts to achieve a goal by using tools, data, and reasoning. It can plan, decide, and execute tasks within defined constraints.

An AI agent is software that acts autonomously to achieve goals, using tools and data together with reasoning and planning.

What is agentic AI and how does it differ from simple bots?

Agentic AI refers to systems capable of goal-directed planning and adaptive action with a degree of autonomy. Unlike static bots, they reason about options, select tools, and adapt to new information within safety constraints.

Agentic AI involves goal-driven planning and tool use, unlike simple bots that follow fixed scripts.

How should I start building an AI agent responsibly?

Begin with a governance-first design: define goals, establish guardrails, require human-in-the-loop for critical decisions, and set up auditing and logging from day one.

Start with governance, guardrails, and clear auditing to build responsibly.

What safety measures are essential before production?

Implement access controls, data privacy policies, prompt safety constraints, and a robust incident-response process with monitoring and alerts.

Ensure access control, data privacy, and prompt safety before production.

How do you evaluate AI agent performance?

Use predefined metrics for goals, track failure modes, run controlled experiments, and compare against baselines to gauge robustness and reliability.

Evaluate with defined metrics and controlled experiments against baselines.

Where can I learn more about AI agents and agentic design?

Consult authoritative sources on AI risk management and agent design, including Ai Agent Ops’s guidance and related academic resources.

Check Ai Agent Ops for guidance and academic resources on agent design.

Key Takeaways

- Define clear goals before building.

- Architect with guardrails and governance in mind.

- Iterate with measurable metrics and controlled experiments.

- Document decisions and versions for auditability.

- Deploy in staged environments and monitor continuously.