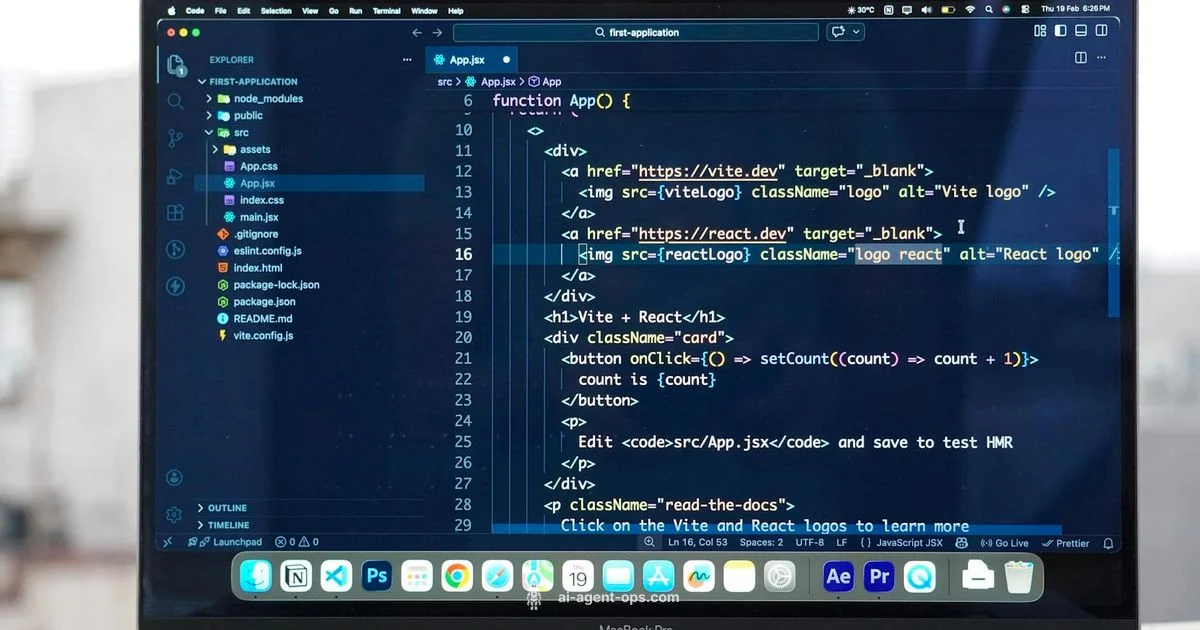

Local AI Agent for VS Code: A Practical Developer Guide

Learn how to design, implement, and use a local AI agent for VS Code that runs on-device, protects privacy, and accelerates coding workflows with practical code examples and best practices.

A local AI agent for VS Code is a self-contained AI assistant that runs on your machine inside VS Code and performs tasks without sending sensitive data to external servers. It uses on-device models and local tooling to help with coding, testing, and automation, giving fast feedback while keeping data private. This guide shows how to design, implement, and integrate a lightweight on-device agent that lowers latency, reduces data exposure, and fits naturally into the VS Code workflow.

What is a local AI agent for VS Code and why it matters

A local AI agent for VS Code runs on your machine, using on-device models or locally hosted components to assist with coding tasks. This design keeps data inside your environment, avoids network round trips, and gives sensitive repositories a privacy-first workflow. Unlike cloud-based assistants, a local agent relies on the VS Code extension host and lightweight inference engines to provide fast feedback, code generation, refactoring hints, and task automation without mandatory cloud calls.

According to Ai Agent Ops, building an on-device agent emphasizes privacy, offline capability, and deep VS Code integration. The goals are predictable behavior, reproducible results, and a safe, optional fallback to the cloud when a feature requires more compute. Whether you’re a solo developer or part of a team, a well-designed local agent can boost throughput by handling repetitive edits, running quick checks, and orchestrating local tools while keeping critical data on-device. The rest of this section walks through a minimal blueprint you can adapt to your stack.

    // Minimal local agent interface
    export interface LocalAgent {
      id: string;
      name: string;
      planTask(input: string, context: object): Promise<string>;
      train?(): Promise<void>;
    }

    // Lightweight executor that runs a code task locally
    async function executeTask(task: string, context: Record<string, unknown>): Promise<string> {
      // Naive local handler: return a canned two-line snippet
      if (task.startsWith("generate-snippet")) {
        const snippet = `// Generated snippet\nconsole.log('Hello from local agent');`;
        return snippet;
      }
      // Fallback: echo the task and its context
      return `Task "${task}" executed locally with context ${JSON.stringify(context)}`;
    }

    // Simple prompt interpreter stub: maps free text to an intent by keyword
    function parseIntent(input: string): string {
      if (/snippet|code/i.test(input)) return "generate-snippet";
      if (/refactor|change|rename/i.test(input)) return "refactor";
      return "assist";
    }

Why this matters: these stubs show how to keep sensitive code on-device while still providing value through intent handling and safe fallbacks.
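
One way to tie the interface and the intent stub together is a small class that implements `planTask`. The sketch below is illustrative only: the class name `SimpleLocalAgent`, the `Intent` type, and the `routeIntent` helper are hypothetical, and the handlers are reduced to canned strings so the block runs on its own.

```typescript
// Hypothetical wiring of the agent contract; all names here are illustrative.
interface LocalAgent {
  id: string;
  name: string;
  planTask(input: string, context: object): Promise<string>;
}

type Intent = "generate-snippet" | "refactor" | "assist";

// Same keyword rules as the parseIntent stub, with a narrower return type.
function routeIntent(input: string): Intent {
  if (/snippet|code/i.test(input)) return "generate-snippet";
  if (/refactor|change|rename/i.test(input)) return "refactor";
  return "assist";
}

class SimpleLocalAgent implements LocalAgent {
  readonly id = "simple-local-agent";
  readonly name = "Simple Local Agent";

  // Resolve the intent first, then dispatch to a local handler.
  async planTask(input: string, context: object): Promise<string> {
    const intent = routeIntent(input);
    switch (intent) {
      case "generate-snippet":
        return "// Generated snippet\nconsole.log('Hello from local agent');";
      case "refactor":
        return `Refactor requested: "${input}"`;
      default:
        return `Assist requested with context ${JSON.stringify(context)}`;
    }
  }
}
```

Narrowing the intent to a union type lets the compiler flag a handler that forgets a case, which is harder to guarantee when intents are plain strings.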

Steps

Estimated time: 3-6 hours

1. Define goals and scope

   List the core features you want from a local AI agent in VS Code, such as snippet generation, refactoring hints, and task automation. Define privacy and latency goals early to guide architecture decisions.

   Tip: Document constraints and success criteria to align engineers and product goals.

2. Set up the extension scaffold

   Create a minimal VS Code extension structure, including package.json, tsconfig.json, and a simple activation event. Ensure the dev environment runs in a dedicated workspace.

   Tip: Use the VS Code sample templates as a baseline to speed up setup.

3. Implement a local agent core

   Create a lightweight LocalAgent class with a planTask method and a safe fallback. Focus on a deterministic, testable interface that supports pluggable backends.

   Tip: Write unit tests around intent parsing and task execution.

4. Wire up on-device inference

   Integrate a tiny on-device model or a mock inference function. Ensure the agent can run without network access and gracefully degrade when needed.

   Tip: Mock heavy models in development to keep iteration fast.

5. Create safe execution boundaries

   Implement input validation, a permission gate for file edits, and a sandboxed execution environment to prevent unsafe operations.

   Tip: Audit all external interactions and never execute arbitrary user input without validation.

6. Test, iterate, and monitor

   Run end-to-end tests, observe latency and memory usage, and collect user feedback to refine prompts and safety rules.

   Tip: Start with a small, local user group to get early feedback.
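
The pluggable backends and graceful degradation described in the steps above could be sketched as follows. This is a minimal illustration, not a reference design: `InferenceBackend`, `MockBackend`, `FailingBackend`, and `completeWithFallback` are hypothetical names, and no real model runtime is involved.

```typescript
// Hypothetical backend abstraction; none of these names come from a real library.
interface InferenceBackend {
  readonly name: string;
  complete(prompt: string): Promise<string>;
}

// Deterministic stand-in for a real on-device model, useful in development and tests.
class MockBackend implements InferenceBackend {
  readonly name = "mock";
  async complete(prompt: string): Promise<string> {
    return `[mock completion for: ${prompt}]`;
  }
}

// Simulates a model that failed to load or ran out of memory.
class FailingBackend implements InferenceBackend {
  readonly name = "failing";
  async complete(_prompt: string): Promise<string> {
    throw new Error("model unavailable");
  }
}

// Try each backend in order; degrade to a fixed message if all of them fail.
async function completeWithFallback(
  backends: InferenceBackend[],
  prompt: string
): Promise<{ backend: string; text: string }> {
  for (const backend of backends) {
    try {
      return { backend: backend.name, text: await backend.complete(prompt) };
    } catch {
      // Ignore the failure and move on to the next backend.
    }
  }
  return { backend: "none", text: "No local model is available right now." };
}
```

Because the mock is deterministic, unit tests for the agent core can assert on exact output without loading a model.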

Prerequisites

Required

- VS Code extension development knowledge

- Familiarity with the CLI and npm/yarn

Optional

- On-device ML basics, such as ONNX Runtime

Commands

| Action | Description | Command |
|---|---|---|
| Install dependencies | Run in project root | npm install |
| Build the extension | Compiles TypeScript to JavaScript | npm run build |
| Launch in Development Host | Opens the VS Code extension host | code --extensionDevelopmentPath . --disable-extensions |
| Publish extension | Requires a VS Code publisher token | vsce publish |
| Run tests | Unit tests for the extension | npm test |

Questions & Answers

What is a local AI agent for VS Code?

A local AI agent for VS Code runs on your development machine, handling tasks and suggestions without sending data to external servers. It emphasizes privacy and low latency while integrating with the VS Code extension API. Cloud-based options can be used for heavier tasks if needed.

A local AI agent runs on your computer inside VS Code, offering fast, private automation with optional cloud support for heavy tasks.

How does it differ from cloud AI assistants?

Cloud AI assistants rely on remote servers for inference, which can introduce latency and data exposure. A local agent processes input on-device, often with a small model, and only uses the cloud when explicitly requested or needed for complex tasks.

Local agents process on your device for privacy and speed, using cloud resources only when necessary.

What skills do I need to build one?

You need familiarity with TypeScript or JavaScript, VS Code extension development, and a basic understanding of on-device inference. Knowing how to structure safe, sandboxed code execution also helps.

Know TypeScript, VS Code extensions, and on-device inference basics for a solid start.

Can I test locally without internet access?

Yes. A well-designed local agent should operate offline for core features and gracefully fall back to cloud services only when connectivity is available and safe.

Yes, you can test offline; cloud fallback is optional.
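
An offline-first policy like this can be expressed as a small pure function, which also makes it easy to test without a network. The sketch below is an assumption about how such a policy might look; `RoutingInput`, `Route`, and `chooseRoute` are hypothetical names, and how connectivity and consent are detected is left to the host extension.

```typescript
// Hypothetical routing policy; the field names are assumptions for illustration.
interface RoutingInput {
  online: boolean;          // current connectivity, as reported by the host
  cloudAllowed: boolean;    // explicit user opt-in to cloud calls
  needsLargeModel: boolean; // task exceeds what the local model can handle
}

type Route = "local" | "cloud" | "unavailable";

function chooseRoute(input: RoutingInput): Route {
  // Core features always stay on-device.
  if (!input.needsLargeModel) return "local";
  // Heavy tasks may use the cloud only with both connectivity and consent.
  if (input.online && input.cloudAllowed) return "cloud";
  // Otherwise report the limitation instead of silently sending data out.
  return "unavailable";
}
```

Returning an explicit "unavailable" route keeps the failure visible to the user rather than hiding it behind a degraded answer.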

What are common safety concerns?

Code execution safety, data isolation, and explicit user consent for writes are common concerns. Implement strict input validation, a permission model, and thorough auditing.

Apply safety controls, validate input, and require user consent for edits.
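
As a rough illustration of input validation combined with a consent gate, the sketch below rejects edit paths that escape the workspace and edits the user has not approved. It is deliberately simplified: it assumes POSIX-style relative paths, ignores symlinks, and uses hypothetical names (`EditRequest`, `isInsideWorkspace`, `authorizeEdit`).

```typescript
// Hypothetical edit gate; POSIX-style relative paths only, to keep the sketch short.
interface EditRequest {
  path: string;          // path relative to the workspace root
  userApproved: boolean; // explicit consent collected by the UI
}

// Walk "." and ".." segments and report whether the path stays inside the root.
function isInsideWorkspace(relativePath: string): boolean {
  if (relativePath.startsWith("/")) return false; // absolute paths are rejected
  let depth = 0;
  for (const segment of relativePath.split("/")) {
    if (segment === "" || segment === ".") continue;
    if (segment === "..") {
      depth -= 1;
      if (depth < 0) return false; // escaped above the workspace root
    } else {
      depth += 1;
    }
  }
  return depth > 0; // an empty path or the root itself does not name a file
}

type EditDecision = { allowed: true } | { allowed: false; reason: string };

function authorizeEdit(request: EditRequest): EditDecision {
  if (!isInsideWorkspace(request.path)) {
    return { allowed: false, reason: "path is outside the workspace" };
  }
  if (!request.userApproved) {
    return { allowed: false, reason: "user has not approved this edit" };
  }
  return { allowed: true };
}
```

A production gate would also need to handle Windows separators and resolve symlinks before comparing against the workspace root; logging each decision with its reason gives the audit trail mentioned above.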

How do I evaluate performance?

Measure latency, CPU usage, and memory, then compare against cloud-based baselines. Use profiling tools available in VS Code and your runtime.

Profile latency and resource use to guide optimizations.
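
For a first-pass latency measurement, a small timing harness is often enough before reaching for a profiler. The helpers below are a sketch under stated assumptions: `percentile` and `measureLatency` are hypothetical names, percentiles use the nearest-rank method, and `Date.now()` limits resolution to about a millisecond.

```typescript
// Hypothetical timing helpers; Date.now() keeps the sketch runtime-agnostic.
function percentile(samples: number[], p: number): number {
  if (samples.length === 0) throw new Error("no samples");
  const sorted = [...samples].sort((a, b) => a - b);
  // Nearest-rank method: smallest value with at least p% of samples at or below it.
  const rank = Math.min(
    sorted.length,
    Math.max(1, Math.ceil((p / 100) * sorted.length))
  );
  return sorted[rank - 1];
}

// Run the task sequentially and summarize wall-clock latency in milliseconds.
async function measureLatency(
  task: () => Promise<unknown>,
  runs: number
): Promise<{ p50: number; p95: number }> {
  const samples: number[] = [];
  for (let i = 0; i < runs; i++) {
    const start = Date.now();
    await task();
    samples.push(Date.now() - start);
  }
  return { p50: percentile(samples, 50), p95: percentile(samples, 95) };
}
```

Running the same harness against a local backend and a cloud baseline gives comparable p50 and p95 figures; CPU and memory still need the runtime's own profiling tools.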

Key Takeaways

- Design for on-device execution first

- Define a clean API surface for intents

- Sandbox and validate all edits

- Test in real VS Code workflows

- Plan for safe cloud fallbacks when needed