LLM vs AI Workflow vs AI Agent: A Comprehensive Comparison

Explore how LLMs, AI workflows, and AI agents differ, where each excels, and how to choose the right approach for scalable automation in modern AI projects.

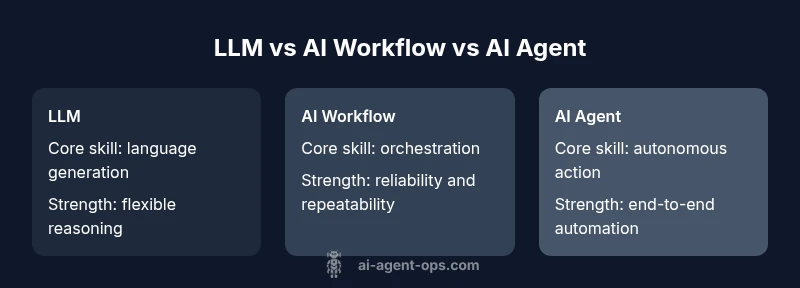

TL;DR: LLMs power flexible language tasks, AI workflows coordinate steps across tools, and AI agents provide autonomous action in complex processes. For most teams, start with LLMs to handle text and reasoning, implement simple AI workflows to stitch tasks together, and introduce agents only when you need end-to-end automation and already have governance, monitoring, and safety controls in place.

What the terms mean: LLM vs AI workflow vs AI agent

To compare LLMs, AI workflows, and AI agents, we first need precise definitions and realistic expectations. An LLM (large language model) is a statistical model designed to generate, summarize, translate, and reason over natural language text. An AI workflow is a pattern for orchestrating multiple steps, tools, and data transformations to complete a task, often with explicit handoffs and state management. An AI agent combines perception, reasoning, and action, potentially operating autonomously within a defined environment. According to Ai Agent Ops, these three concepts sit on a spectrum from language-first reasoning (LLMs) to orchestration (AI workflows) to autonomous execution (AI agents). When teams align on this spectrum, they can design architectures that balance flexibility, reliability, and governance. The comparison is not about replacing one with another; it’s about layering capabilities to match the problem at hand and progressively increasing automation as you build trust and control.

In practice, most organizations begin with LLM-powered tasks—such as drafting, summarizing, or answering questions. From there, they introduce AI workflows to enforce repeatable process logic, data routing, and tool integration. Only after establishing solid boundaries and observability do teams deploy AI agents for end-to-end automation, where agents can select tools, execute actions, and adapt to changing inputs without continuous human input. Throughout, the emphasis should be on safety, auditability, and clear ownership, especially when agents operate in real-time or with sensitive data.

Core capabilities and limitations

Understanding the core strengths and limits of each paradigm helps teams avoid overengineering. LLMs excel at language-based tasks: understanding prompts, generating coherent text, and performing reasoning in natural language. They shine in domains requiring nuance, creativity, or fast prototyping, but they rely on probabilistic outputs and can hallucinate or reflect biases if prompts aren’t carefully crafted. AI workflows offer reliable orchestration: they provide deterministic paths, state management, error handling, and clear data lineage across multiple tools. This makes them ideal for repeatable, auditable processes like data extraction, transformation, or multi-step decision pipelines. AI agents push toward autonomy: they can perceive their environment, decide on courses of action, and execute tasks without constant human intervention. Agents are powerful for complex objectives, but they demand robust governance, safety rails, and strong observability to prevent drift or unsafe actions.

A practical reality is that each layer compounds capabilities. An LLM can power decision hints within a workflow; a workflow can constrain an agent’s choices; an agent can select tools in a rule-based or model-driven fashion. The challenge is balancing expressiveness with control. From a cost perspective, language generation tends to dominate budgets, while orchestration incurs tooling and integration costs, and agents add compute for perception and action. Ai Agent Ops analyses highlight that cost models favor incremental adoption: start small, measure impact, and scale thoughtfully.
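The layering described above can be sketched in a few lines. This is a minimal illustration, not a reference design: `stub_llm`, `route_ticket`, `run_agent`, and the `lookup` tool are hypothetical names invented for this example, and the stub stands in for a real model call.

```python
from typing import Callable, Dict, List, Set, Tuple


def stub_llm(prompt: str) -> str:
    """Hypothetical stand-in for a model call; returns a canned routing hint."""
    return "refund" if "refund" in prompt.lower() else "general"


def route_ticket(ticket: str, llm: Callable[[str], str] = stub_llm) -> Dict[str, str]:
    """Workflow: a fixed sequence of steps where the LLM only supplies a hint."""
    hint = llm(f"Classify this ticket: {ticket}")
    # The mapping from hint to queue is deterministic and owned by the workflow.
    queue = {"refund": "billing", "general": "support"}.get(hint, "support")
    return {"ticket": ticket, "hint": hint, "queue": queue}


def run_agent(
    proposed: List[Tuple[str, str]],
    tools: Dict[str, Callable[[str], str]],
    allowed: Set[str],
) -> List[str]:
    """Agent step: run each proposed (tool, argument) pair only if allowed."""
    log: List[str] = []
    for name, arg in proposed:
        if name not in allowed or name not in tools:
            log.append(f"blocked:{name}")  # the constraint wins over the proposal
            continue
        log.append(tools[name](arg))
    return log


tools = {"lookup": lambda order_id: f"found {order_id}"}
print(route_ticket("I want a refund for order 42")["queue"])
print(run_agent([("lookup", "42"), ("delete", "42")], tools, allowed={"lookup"}))
```

The point of the sketch is the direction of control: the model suggests, the workflow decides the route, and the allow-list bounds what the agent can execute.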

Decision framework: choosing the right abstraction

Choosing between an LLM, an AI workflow, and an AI agent depends on goals, risk tolerance, and organizational maturity. If your objective is rapid experimentation with text-heavy tasks, start with LLMs and simple prompts. If you need reliability across repeated processes with strict data governance, design AI workflows that standardize inputs, outputs, and tool interfaces. If your goal is autonomous execution in a dynamic environment, such as live customer support routing, autonomous data collection, or proactive anomaly remediation, an AI agent can deliver significant value, provided you implement strict guardrails, monitoring, and rollback capabilities.

A practical decision rubric can help: (1) define the task type (language, orchestration, action), (2) assess required control (human-in-the-loop vs fully autonomous), (3) evaluate data sensitivity and privacy, (4) estimate latency and throughput needs, (5) plan for governance and auditing, and (6) consider the organizational capability to support tooling and instrumentation. By mapping each decision criterion to the appropriate abstraction, teams avoid overengineered solutions and keep momentum.
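One way to make the rubric concrete is to encode a few of its criteria as a small function. The sketch below is an assumption-laden simplification: `TaskProfile` and `recommend` are hypothetical names, only criteria (1), (2), (3), and (5) are modeled, and the rule that sensitive language tasks go through a workflow is one possible reading of the guidance above, not a fixed rule.

```python
from dataclasses import dataclass


@dataclass
class TaskProfile:
    task_type: str          # "language", "orchestration", or "action"
    fully_autonomous: bool  # False means a human stays in the loop
    sensitive_data: bool    # privacy or compliance constraints apply
    governance_ready: bool  # auditing, monitoring, and rollback are in place


def recommend(profile: TaskProfile) -> str:
    """Map a task profile to "llm", "workflow", or "agent" (illustrative rules)."""
    if profile.task_type == "language" and not profile.sensitive_data:
        return "llm"
    if (
        profile.task_type == "action"
        and profile.fully_autonomous
        and profile.governance_ready
    ):
        return "agent"
    # Everything else, including autonomy without governance, stays in a workflow.
    return "workflow"


print(recommend(TaskProfile("language", False, False, False)))
print(recommend(TaskProfile("action", True, False, False)))
print(recommend(TaskProfile("action", True, False, True)))
```

Latency, throughput, and team capability are left out here; in practice they would probably act as additional gates before the agent branch.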

For teams transitioning from LLMs to AI workflows, the question often shifts from “Can we do this?” to “How do we prove this will work at scale?” The answer lies in modular design, strong observability, and staged adoption. Start with a minimal viable configuration: an LLM-powered task with a simple, auditable workflow. Then incrementally introduce agents only where autonomy and end-to-end outcomes deliver measurable value.

Architecture patterns and integration strategies

Architectural clarity is essential when comparing LLMs, AI workflows, and AI agents. The simplest pattern is a layered stack: an LLM for language tasks, a workflow orchestrator for deterministic steps, and optional agent components for autonomy. Interoperability hinges on clean interfaces: standardized prompts, tool APIs, and a central data store with traceable state changes. Key integration strategies include: (1) prompt-as-a-service with versioned prompts to manage evolution, (2) a workflow engine that handles retries, timeouts, and data normalization, (3) a fundamental memory or toolkit of reusable tools that agents can call, (4) observability hooks (logs, metrics, and explainability signals) to track decisions and actions, and (5) governance policies that cap actions in sensitive contexts.
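Two of these strategies, versioned prompts and retry handling, are easy to illustrate with the standard library alone. The following is a rough sketch under stated assumptions: `PROMPTS`, `render_prompt`, and `run_with_retries` are hypothetical names, the prompt texts are made up, and timeouts and data normalization are deliberately left out.

```python
import time
from typing import Callable, Dict, Optional, Tuple, TypeVar

T = TypeVar("T")

# Hypothetical prompt registry keyed by (name, version) so changes stay traceable.
PROMPTS: Dict[Tuple[str, str], str] = {
    ("summarize", "v1"): "Summarize: {text}",
    ("summarize", "v2"): "Summarize in one sentence: {text}",
}


def render_prompt(name: str, version: str, **fields: str) -> str:
    """Look up a pinned prompt version and fill in its fields."""
    return PROMPTS[(name, version)].format(**fields)


def run_with_retries(step: Callable[[], T], attempts: int = 3, delay_s: float = 0.0) -> T:
    """Call a workflow step, retrying on any exception up to `attempts` times."""
    last_error: Optional[Exception] = None
    for attempt in range(1, attempts + 1):
        try:
            return step()
        except Exception as exc:  # a real engine would catch narrower error types
            last_error = exc
            if attempt < attempts:
                time.sleep(delay_s)
    raise RuntimeError(f"step failed after {attempts} attempts") from last_error


print(render_prompt("summarize", "v2", text="quarterly report"))
```

Pinning callers to an explicit version means a prompt can evolve to `v2` without silently changing the behavior of workflows still validated against `v1`.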

In practice, you might deploy an LLM to draft a user-facing summary, route the draft through a workflow to fetch supporting data, and then hand off to an AI agent for action such as updating records or triggering alerts. Crucially, you should separate concerns: keep language tasks isolated from decision logic, and isolate autonomous actions behind safety checks and human oversight when appropriate. A well-designed architecture also anticipates failure modes: what happens when a tool is unavailable, or when an agent’s action leads to an unintended consequence? Clear rollback paths and user-visible explanations ensure confidence and compliance.
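A rollback path for agent actions might look like the sketch below. It assumes an in-memory record store and a caller-supplied safety check; `RecordStore`, `apply_actions`, and `is_safe` are hypothetical names, and a production system would more likely rely on database transactions or compensating actions.

```python
from typing import Any, Callable, Dict, List, Tuple


class RecordStore:
    """In-memory records with an undo stack for uncommitted updates."""

    def __init__(self) -> None:
        self.data: Dict[str, Any] = {}
        self._undo: List[Tuple[str, Any, bool]] = []

    def update(self, key: str, value: Any) -> None:
        # Remember the previous value (and whether the key existed) before writing.
        self._undo.append((key, self.data.get(key), key in self.data))
        self.data[key] = value

    def commit(self) -> None:
        self._undo.clear()

    def rollback(self) -> None:
        # Undo in reverse order so earlier values are restored last.
        while self._undo:
            key, old_value, existed = self._undo.pop()
            if existed:
                self.data[key] = old_value
            else:
                self.data.pop(key, None)


def apply_actions(
    store: RecordStore,
    actions: List[Tuple[str, Any]],
    is_safe: Callable[[str, Any], bool],
) -> bool:
    """Apply all actions or none: any unsafe or failing action rolls back the batch."""
    try:
        for key, value in actions:
            if not is_safe(key, value):
                raise PermissionError(f"unsafe action on {key!r}")
            store.update(key, value)
    except Exception:
        store.rollback()
        return False
    store.commit()
    return True
```

For example, if an agent proposes closing a ticket and then reassigning its owner, and the safety check rejects the reassignment, the ticket status is restored and the function returns `False` so the workflow can escalate to a human.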

For teams building agentic capabilities, the recommended pattern is gradual escalation: start with instrumented prompts, attach a lightweight rule-based guardrail, then add tool use and limited autonomy. As you mature, you can replace rigid rules with learned policies or hybrid approaches, ensuring that every decision path remains auditable and reversible.
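Gradual escalation can be expressed as an explicit autonomy level that gates each action. The sketch below is illustrative only: the `Autonomy` levels mirror the stages named above, while the action names, the `REQUIRED_LEVEL` table, and the `decide` function are assumptions made for the example.

```python
from enum import IntEnum
from typing import Dict, List, Tuple


class Autonomy(IntEnum):
    PROMPT_ONLY = 0       # instrumented prompts, no side effects
    GUARDRAILED = 1       # prompts plus a rule-based guardrail
    TOOL_USE = 2          # may call read-only tools
    LIMITED_AUTONOMY = 3  # may take bounded actions on its own


# Hypothetical mapping from action name to the minimum level that may run it.
REQUIRED_LEVEL: Dict[str, Autonomy] = {
    "draft_reply": Autonomy.PROMPT_ONLY,
    "lookup_order": Autonomy.TOOL_USE,
    "issue_refund": Autonomy.LIMITED_AUTONOMY,
}


def decide(action: str, level: Autonomy, audit_log: List[Tuple[str, str, str]]) -> str:
    """Return "allow", "escalate", or "deny" and record the decision."""
    required = REQUIRED_LEVEL.get(action)
    if required is None:
        verdict = "deny"      # unknown actions are never run
    elif level >= required:
        verdict = "allow"
    else:
        verdict = "escalate"  # hand off to a human instead of acting
    audit_log.append((action, level.name, verdict))
    return verdict


log: List[Tuple[str, str, str]] = []
print(decide("draft_reply", Autonomy.GUARDRAILED, log))
print(decide("issue_refund", Autonomy.TOOL_USE, log))
```

Because every call appends to `audit_log`, raising a deployment from one level to the next leaves a record of which decisions changed, which keeps the escalation auditable.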

Real-world examples across industries

Across industries, LLMs, AI workflows, and AI agents offer different value propositions. In customer support, LLMs handle initial inquiries with natural language understanding and succinct summaries. AI workflows coordinate data lookups, CRM updates, and ticket routing to ensure consistency and auditability. When the process requires proactive actions, such as credential checks, incident remediation, or dynamic resource allocation, AI agents can autonomously perform sequences of actions within safety boundaries. In healthcare, LLMs assist with documentation while workflows enforce regulatory-compliant data routing, and agents may operate in controlled environments to monitor device telemetry with physician oversight. In finance, language models draft analyses, workflows reconcile data from multiple sources, and agents can execute predefined trades or alerts under governance. The core advantage is alignment: LLMs provide flexible reasoning and content generation, workflows provide structure and repeatability, and agents provide autonomous execution with appropriate controls.

A practical tip is to treat these components as a gradient: begin with LLM-powered interactions, layer in orchestration to guarantee reliability, and only then enable autonomy where required by the business case. By observing real-world outcomes and iterating on interfaces, teams build robust systems that can scale from pilot projects to enterprise-grade automation.

In regulated environments, careful attention to data provenance, access controls, and audit trails is essential. Even when agents operate autonomously, you should maintain human-centred oversight for high-stakes decisions and ensure that explanations accompany actions to satisfy governance and compliance requirements. This approach preserves trust while unlocking the productivity gains of automation.

Observability, governance, and risk management

Observability is the backbone of any stack that combines LLMs, AI workflows, and AI agents. You need end-to-end traces that connect prompts, tool interactions, data inputs, and agent actions. Metrics should cover latency, success rate, tool failure rates, and human-in-the-loop intervention frequency. Governance policies must specify what agents can do, which data they may access, and the thresholds for escalation. Safety controls, such as burnout safeguards, prompt sanitization, and red-teaming exercises, help prevent unsafe or biased outcomes. Privacy considerations are critical when handling sensitive data; implement data minimization, encryption, and access controls to reduce risk. Finally, design for ethics: auditability, fairness, and transparency in how decisions are made. By combining robust observability with strong governance, LLM, AI workflow, and AI agent deployments can scale responsibly while delivering measurable business impact.
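The four metrics named above can be derived from a flat list of trace events. This is a minimal sketch with an assumed schema: `TraceEvent`, its fields, and `summarize` are hypothetical and not tied to any particular tracing library.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class TraceEvent:
    trace_id: str
    kind: str                  # "prompt", "tool", or "action"
    latency_ms: float
    ok: bool
    human_intervened: bool = False


def summarize(events: List[TraceEvent]) -> Dict[str, float]:
    """Aggregate latency, success, tool failure, and intervention rates."""
    if not events:
        return {}
    total = len(events)
    tool_events = [e for e in events if e.kind == "tool"]
    tool_failures = sum(1 for e in tool_events if not e.ok)
    return {
        "avg_latency_ms": sum(e.latency_ms for e in events) / total,
        "success_rate": sum(1 for e in events if e.ok) / total,
        "tool_failure_rate": tool_failures / len(tool_events) if tool_events else 0.0,
        "human_intervention_rate": sum(1 for e in events if e.human_intervened) / total,
    }


events = [
    TraceEvent("t1", "prompt", 100.0, True),
    TraceEvent("t1", "tool", 200.0, False),
    TraceEvent("t1", "tool", 300.0, True),
    TraceEvent("t1", "action", 400.0, True, human_intervened=True),
]
print(summarize(events))
```

Sharing a `trace_id` across the prompt, tool, and action events is what lets a reviewer reconstruct a single decision end to end rather than reading three unrelated logs.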

Feature Comparison

| Feature | LLM | AI workflow | AI agent |
|---|---|---|---|
| Core function | Language generation, analysis, and reasoning in natural language | Orchestrates multi-step processes and tool calls with defined data flow | Perceives, reasons, and acts to achieve goals; can operate autonomously |
| Decision authority | Primarily generates outputs based on prompts | Executes deterministic or rule-based routing with human oversight | Makes autonomous decisions within safety and governance boundaries |
| Automation level | Low to moderate automation; generation-focused | Moderate automation; repeatable orchestration | High automation; end-to-end action across tools and systems |
| Data requirements | Text data; prompts; contextual signals | Structured inputs and tool interfaces; state management | Sensed data, history, and tool responses; memory and context handling |
| Latency | Higher due to generation cycles | Moderate; depends on step count | Variable; can be real-time with proper optimization |
| Cost considerations | Prompt length and API usage drive costs | Orchestration platforms and tool usage add costs | Compute, memory, and tooling costs; governance overhead |

Positives

- Clarifies role boundaries between reasoning, orchestration, and action

- Promotes modular, scalable automation across teams

- Enables reuse of LLMs across multiple workflows and agents

- Supports end-to-end automation when governance and observability are in place

What's Bad

- Increased integration and maintenance overhead

- Complex debugging and traceability challenges in multi-step setups

- Potential safety, governance, and privacy risks with autonomous agents

- Cost and latency considerations when scaling across tools

AI agents offer the strongest potential for autonomous end-to-end automation in complex environments, but LLMs and AI workflows remain essential for flexible reasoning and controlled orchestration.

Choose LLMs for language tasks requiring nuance and speed. Use AI workflows to standardize and scale processes. Deploy AI agents when autonomous action is needed, with governance, observability, and safety controls to ensure responsible operation.

Questions & Answers

What is the difference between an LLM, an AI workflow, and an AI agent?

An LLM is a language model focused on text generation and reasoning. An AI workflow is an orchestration pattern that sequences tasks and tools. An AI agent combines perception, reasoning, and action to autonomously execute goals within defined constraints.

LLMs handle language tasks, workflows manage steps, agents act autonomously within rules.

When should I start with an LLM vs an AI workflow?

Start with an LLM for exploratory, text-heavy tasks. Introduce an AI workflow when you need reliable, repeatable processes with clear data paths and governance.

Begin with language tasks, then add structured workflows for reliability.

What are typical risks of AI agents?

Autonomous agents can take unintended actions if not properly constrained. Risks include data leakage, unsafe tool use, and governance gaps. Implement safety rails, monitoring, and rollback plans.

Agents can misbehave if not watched; set guardrails and alerts.

Can AI workflows operate without LLMs?

Yes. AI workflows can orchestrate data processing and tool calls using rule-based logic or non-language AI models. LLMs are optional in such stacks if language tasks aren’t required.

Yes, workflows can operate without language models when language tasks aren’t needed.

How do I measure success for an AI agent?

Measuring success involves outcome-focused metrics such as task completion rate, time to resolution, error rate, and governance compliance. Include observability signals and auditability.

Track how often agents finish tasks correctly and safely.

Is this approach suitable for real-time decisions?

Real-time suitability depends on latency, tool availability, and safety controls. LLMs can be a bottleneck; optimizing prompts and using edge deployments help with responsiveness.

Real-time use is possible with the right architecture and safeguards.

Key Takeaways

- Map tasks to the right abstraction: language, orchestration, or autonomous agent

- Prioritize governance and observability for agent-driven automation

- Begin with LLMs and simple workflows; scale to agents as needed

- Evaluate latency and cost before scaling automation

- Design for auditability and safe rollback in all layers