LangChain vs AI Agent: A Practical Comparison Guide

A rigorous, analytical comparison of LangChain and generic AI agents, covering architecture, use cases, costs, governance, and implementation guidance to help teams choose the right path.

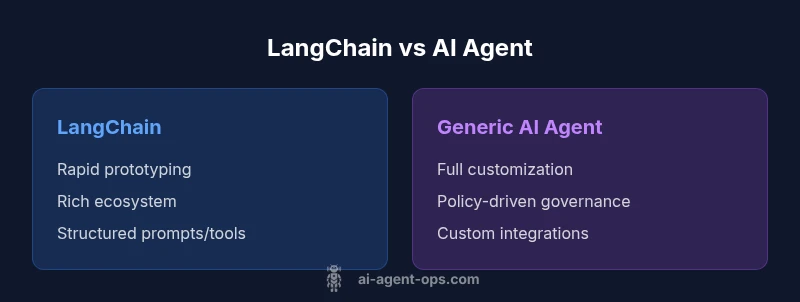

When choosing between LangChain and a custom AI agent, the tradeoff is clear: LangChain accelerates prototyping and standard workflows with a modular suite of agents, tools, and integrations, while a custom AI agent architecture offers deeper customization, governance, and end-to-end control. This comparison highlights the core tradeoffs so engineering teams can choose based on speed, scalability, and compliance needs.

Context and Definitions

In modern AI software development, two paths dominate: using a framework like LangChain to assemble agentic workflows, or building a bespoke AI agent architecture tailored to specific governance, data, and runtime constraints. For teams weighing LangChain against a custom AI agent, the distinction is not merely about tooling; it reflects different philosophies of software architecture, different team capabilities, and different expectations for long-term maintainability. LangChain provides ready-made components and a rich ecosystem designed to accelerate common agent-based tasks, while a custom AI agent approach emphasizes explicit control over data flows, policy enforcement, and integration choices. Ai Agent Ops notes that the choice typically hinges on velocity versus customization, with tradeoffs in maintenance burden and vendor dependence. Understanding these definitions helps set realistic expectations for deployment, testing, and scaling in production environments.

LangChain in Practice: Features, Tools, and Typical Workflows

LangChain is best understood as an opinionated toolkit that guides developers through building, testing, and deploying agentic AI workflows. It offers modular components for prompt design, memory management, tool integration (APIs, databases, filesystems), and orchestration patterns that reduce boilerplate. In practice, teams assemble agents that can chain calls, reason about user inputs, fetch external data, and perform actions in a governed loop. The strength of LangChain lies in its ecosystem: curated connectors, a community of example projects, and documented patterns for common challenges like long-running tasks or multi-step decision making. For many teams, LangChain lowers the barrier to start prototyping agent-based solutions, allowing focus on business logic rather than plumbing.

AI Agent Architecture: Building Blocks and Tradeoffs

A generic AI agent approach emphasizes the fundamental building blocks: perception (input handling), planning (strategy selection), execution (actioning commands), and reflection (learning from outcomes). When you design an AI agent from scratch or with a bespoke framework, you gain granular control over data governance, latency budgets, and integration semantics. This path supports specialized workflows, strict security postures, and tailored monitoring. However, the tradeoffs include higher initial development time, more complex testing matrices, and the need for in-house expertise across orchestration, tooling, and deployment. Ai Agent Ops observes that teams pursuing this route must invest in robust observability, auditability, and change management to succeed at scale.
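The four building blocks can be sketched in plain Python. This is a minimal illustration under stated assumptions, not a reference design: the `Agent` class, its method names, and the `echo` tool are hypothetical, planning is reduced to keyword matching, and reflection is reduced to recording outcomes for later review.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Agent:
    """Hypothetical four-stage agent: perceive, plan, execute, reflect."""
    tools: Dict[str, Callable[[str], str]]
    history: List[dict] = field(default_factory=list)

    def perceive(self, raw_input: str) -> str:
        # Perception: normalize case and whitespace in the raw input.
        return " ".join(raw_input.strip().lower().split())

    def plan(self, observation: str) -> str:
        # Planning: pick the first tool whose name appears in the input.
        for name in self.tools:
            if name in observation:
                return name
        return "fallback"

    def execute(self, tool_name: str, observation: str) -> str:
        # Execution: call the chosen tool, or return a default answer.
        tool = self.tools.get(tool_name)
        return tool(observation) if tool else "no tool matched"

    def reflect(self, observation: str, tool_name: str, result: str) -> None:
        # Reflection (simplified): record the outcome so runs can be audited.
        self.history.append({"input": observation, "tool": tool_name, "result": result})

    def run(self, raw_input: str) -> str:
        observation = self.perceive(raw_input)
        tool_name = self.plan(observation)
        result = self.execute(tool_name, observation)
        self.reflect(observation, tool_name, result)
        return result


agent = Agent(tools={"echo": lambda text: f"echo: {text}"})
answer = agent.run("  Echo   hello  ")
```

Keeping each stage as its own method is what gives a bespoke design its control points: a latency budget can wrap `execute`, and an audit requirement can be met inside `reflect`, without touching the other stages.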

Architecture and Data Flows: LangChain vs Custom Agent Orchestration

LangChain’s architecture emphasizes composability: prompts, memory, tools, and agents connect through well-defined interfaces. Data flows typically follow a predictable pattern: user input → prompt/template → tool invocation → result aggregation → response. In custom agent architectures, developers tailor data models, security boundaries, and policy engines, and data provenance, access control, and audit logs often require bespoke solutions. When deciding between LangChain and a custom agent, teams should map out data gravity: where data originates, how it travels, and who can access it. A hybrid approach is common: use LangChain for rapid prototyping while layering governance and custom adapters where specialized workflows demand it.
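The flow from user input to response can be traced in a few lines of framework-free Python. This is a rough sketch, not LangChain code: `PROMPT`, `fake_search`, `fake_db`, and `handle` are hypothetical stand-ins, and the tools return canned strings instead of calling real services.

```python
from string import Template
from typing import Callable, Dict

# Prompt/template stage: a fixed template with one placeholder.
PROMPT = Template("Answer the question using tool results.\nQuestion: $question")


def fake_search(query: str) -> str:
    # Stand-in for a real search connector.
    return f"search hit for '{query}'"


def fake_db(query: str) -> str:
    # Stand-in for a real database lookup.
    return f"db row for '{query}'"


TOOLS: Dict[str, Callable[[str], str]] = {"search": fake_search, "db": fake_db}


def handle(user_input: str) -> dict:
    prompt = PROMPT.substitute(question=user_input)                    # prompt/template
    results = [(name, tool(user_input)) for name, tool in TOOLS.items()]  # tool invocation
    aggregated = "; ".join(f"{name}: {out}" for name, out in results)     # result aggregation
    # Response: the source names are returned too, as a simple provenance record.
    return {"prompt": prompt, "sources": [name for name, _ in results], "response": aggregated}


out = handle("refund policy")
```

Returning the list of sources alongside the response is one lightweight way to start answering the provenance question of where each piece of data came from.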

Performance, Security, and Governance Considerations

Performance considerations include latency budgets, throughput, and how deterministic the execution path is. LangChain can introduce overhead through its abstraction layers, though its tooling and caching strategies offset some of that cost. Security concerns center on data exposure, prompt injection risks, and tool access controls. With LangChain, governance is typically facilitated by centralized templates and policy modules, but bespoke agents enable tighter control when policy enforcement must be baked into every decision point. Ai Agent Ops highlights that a clear governance model (who can deploy, modify, or shut down an agent) significantly affects risk posture and reliability in production.
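Tool access control at every decision point can look like the following sketch. It is an assumption-laden illustration: `GovernedToolbox`, `PolicyViolation`, the role names, and the tools are all hypothetical, and a production system would back the permissions and audit log with durable storage.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set


class PolicyViolation(Exception):
    """Raised when a role tries to call a tool it is not allowed to use."""


@dataclass
class GovernedToolbox:
    tools: Dict[str, Callable[[str], str]]
    permissions: Dict[str, Set[str]]  # role -> names of tools that role may call
    audit_log: List[dict] = field(default_factory=list)

    def call(self, role: str, tool_name: str, argument: str) -> str:
        allowed = tool_name in self.permissions.get(role, set())
        # Every attempt is logged, whether or not it is allowed.
        self.audit_log.append({"role": role, "tool": tool_name, "allowed": allowed})
        if not allowed:
            raise PolicyViolation(f"{role} may not call {tool_name}")
        return self.tools[tool_name](argument)


toolbox = GovernedToolbox(
    tools={"read": lambda doc: f"read {doc}", "delete": lambda doc: f"deleted {doc}"},
    permissions={"analyst": {"read"}},
)
result = toolbox.call("analyst", "read", "report")
```

Because the check and the log entry happen in the same place as the tool call, an agent cannot reach a tool without leaving an audit record, which is the property regulated teams usually care about.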

Ecosystem, Tooling, and Community Support

LangChain benefits from a thriving ecosystem of connectors, templates, and community modules that accelerate development and experimentation. The breadth of tutorials, sample projects, and community support can shorten time-to-value for common agent use cases. Conversely, a generic AI agent approach may leverage standard tooling (LLMs, vector stores, orchestration engines) but requires more custom integration work to achieve parity with LangChain’s out-of-the-box patterns. The Ai Agent Ops team recommends evaluating the sustainability of each path: maintainability of dependencies, frequency of updates, and availability of skilled engineers who can extend or replace components as needs evolve.

When to Use LangChain: Decision Criteria and Scenarios

LangChain is often the right choice when speed-to-value matters and the project fits common agent-based patterns: API calls, data retrieval, and simple decision policies can be prototyped quickly with less bespoke code. It shines in environments where rapid experimentation, clear templates, and an active ecosystem reduce risk. For highly customized governance, data-sensitive deployments, or organizations with niche compliance requirements, a bespoke AI agent architecture may be more effective. Ai Agent Ops emphasizes a staged approach: start with LangChain for prototyping, then gradually replace or augment components with custom modules as governance, latency, or data-control needs dictate.

Practical Implementation Guidelines: Getting Started with LangChain

If you are leaning toward LangChain, begin with a minimum viable agent workflow: define the use case, choose a core toolset, and implement a simple agent loop to handle a user prompt and a single tool. Expand gradually by adding memory, multi-tool orchestration, and robust error handling. Documentation and templates from LangChain, plus community examples, provide concrete patterns for adding complexity incrementally. In parallel, establish a lightweight governance framework (versioned prompts, access controls, and test harnesses) to ensure safe experimentation. Throughout, stay aligned with business goals and metrics to drive disciplined adoption.
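Before wiring in any framework, the shape of a single-tool step with versioned prompts and basic error handling can be sketched in plain Python. Everything here is hypothetical and for illustration only: the `PROMPTS` registry, the `lookup` prompt name, and `run_once` are not LangChain APIs.

```python
from typing import Callable, Dict, Optional, Tuple

# Versioned prompt templates keyed by (name, version); contents are illustrative.
PROMPTS: Dict[Tuple[str, int], str] = {
    ("lookup", 1): "Look up: {query}",
    ("lookup", 2): "Look up the following and cite the source: {query}",
}


def latest_version(name: str) -> int:
    # The highest registered version number for a prompt name.
    return max(version for prompt_name, version in PROMPTS if prompt_name == name)


def run_once(query: str, tool: Callable[[str], str], version: Optional[int] = None) -> dict:
    """One pass of a single-tool workflow: render the prompt, call the tool, report."""
    chosen = version if version is not None else latest_version("lookup")
    prompt = PROMPTS[("lookup", chosen)].format(query=query)
    try:
        output, ok = tool(query), True
    except Exception as exc:
        # Error handling: a tool failure becomes data instead of crashing the loop.
        output, ok = f"tool failed: {exc}", False
    return {"prompt_version": chosen, "prompt": prompt, "output": output, "ok": ok}
```

Recording the prompt version in every result makes it possible to compare behavior across prompt revisions in a test harness and to pin an older version if a new one regresses.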

Common Pitfalls and How to Avoid Them

Common pitfalls when choosing between LangChain and a custom AI agent include over-reliance on a single framework, neglecting data governance, and underestimating testing complexity for multi-step agents. To avoid these, adopt a modular design principle: separate business logic from orchestration, implement clear data provenance, and build comprehensive test suites that simulate real-user scenarios. Monitor performance and failures with robust logging and alerting. Finally, plan for long-term maintenance, including dependency management, security reviews, and regular architectural reviews to prevent drift from the initial architectural goals.
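Separating business logic from orchestration can be as simple as depending on a narrow interface. The sketch below is one possible shape, with hypothetical names throughout (`Orchestrator`, `summarize_ticket`, `FakeOrchestrator`); a real adapter would wrap whichever framework or custom runtime the team uses.

```python
from typing import List, Protocol


class Orchestrator(Protocol):
    """The only thing business logic knows about the agent runtime."""

    def run(self, prompt: str) -> str: ...


def summarize_ticket(ticket_text: str, orchestrator: Orchestrator) -> str:
    # Business rule: empty tickets never reach the agent runtime.
    if not ticket_text.strip():
        return "empty ticket"
    return orchestrator.run(f"Summarize this support ticket: {ticket_text}")


class FakeOrchestrator:
    """Test double that records prompts instead of calling a model."""

    def __init__(self) -> None:
        self.prompts: List[str] = []

    def run(self, prompt: str) -> str:
        self.prompts.append(prompt)
        return "summary"
```

With this split, the business rule can be tested without any model or framework installed, and swapping the runtime later means writing a new adapter rather than rewriting the logic.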

Next Steps and Roadmap Considerations for Teams

For teams planning a transition or split between LangChain and a bespoke agent approach, set a phased roadmap: (1) prototype with LangChain to validate feasibility, (2) inventory reusable components, (3) segment governance and data-control needs, (4) incrementally replace or extend LangChain components with custom modules where appropriate, (5) invest in instrumentation and security posture. This roadmap aligns with best practices in agent orchestration and helps organizations balance speed, control, and resilience as they scale their AI agent initiatives.

Comparison

| Feature | LangChain | Generic AI Agent |
|---|---|---|
| Ease of getting started | Low barrier with templates and examples | Higher upfront effort and integration work |
| Flexibility and customization | Good for standard patterns, customizable via plugins | Maximum customization at every layer |
| Ecosystem and tooling | Rich connectors, community modules, rapid prototyping | Core tooling requires bespoke integration |
| Governance and compliance | Template-driven governance, easier to audit | Custom governance must be built from scratch |
| Performance and latency | Low to moderate overhead, optimized tooling | Potentially tighter control but higher complexity |
| Security posture | Managed security patterns, role-based access | Custom security model tailored to org |
| Maintenance and updates | Active maintenance, frequent updates | Maintenance depends on in-house team |
| Best use case | Rapid proof-of-concept, standard workflows | Complex, regulated environments with unique needs |

Positives

- Speeds up prototyping and reduces boilerplate with ready-made agents and tools

- Offers strong ecosystem support and extensive documentation for common patterns

- Keeps teams focused on business logic rather than wiring across tools

Negatives

- Potential vendor or ecosystem lock-in if heavily using the framework

- Learning curve for mastering advanced features and best practices

- Dependency on community updates which may affect long-term stability

LangChain is the better starting point for rapid prototyping and standard agent workflows; a custom AI agent architecture is preferable when governance, customization, and strict data control are non-negotiables.

Choose LangChain to accelerate development and leverage a mature ecosystem. Opt for a bespoke AI agent approach if your project demands tight governance, unique data flows, and end-to-end control over every component.

Questions & Answers

What is LangChain and how does it relate to AI agents?

LangChain is a framework designed to simplify building agent-based workflows by providing modular components and tools. It helps developers assemble prompts, memory, and tool calls into agent patterns, reducing boilerplate and accelerating prototyping. In many teams, LangChain acts as a bridge between ideation and production for agent-driven applications.

LangChain helps you build agent workflows faster with ready-made patterns and tools.

What defines a generic AI agent architecture?

A generic AI agent architecture centers on perception, planning, execution, and reflection. It emphasizes governance, data control, and customizable orchestration layers. This approach suits organizations needing deep customization, strict compliance, or unique business logic.

An AI agent architecture is built around perception, planning, and execution with strong governance.

When should I choose LangChain over a bespoke agent?

Choose LangChain for rapid prototyping, standard workflows, and faster time-to-value. Opt for a bespoke agent when governance, data privacy, and specialized integrations demand custom architecture and continuous control.

Use LangChain to prototype fast; go bespoke if governance and data controls are your priority.

Can LangChain be replaced with a custom solution later?

Yes, many teams start with LangChain and progressively replace components with custom modules as requirements mature. This approach preserves momentum while enabling deeper control where needed.

You can start with LangChain and swap in custom parts as your needs grow.

What are common risks when choosing LangChain?

Risks include potential over-reliance on the framework, vendor-specific constraints, and maintenance considerations if the ecosystem shifts. Mitigate by modular design and clear exit strategies.

Risks involve framework dependency and maintenance; plan for modularity and exits.

Is production-ready LangChain feasible for regulated industries?

Production in regulated industries is feasible with proper governance, auditing, and secure data handling. Consider implementing custom policy layers and rigorous testing to meet compliance.

Production with LangChain is possible if you build strong governance and tests.

Key Takeaways

- Start with LangChain to validate feasibility quickly

- Map data flows and governance early to avoid later rewrites

- Balance speed with control by using a hybrid approach when needed

- Invest in testing and observability from day one

- Leverage Ai Agent Ops insights to guide architecture decisions