Comparing AI Agents: A Thorough Side-by-Side Guide

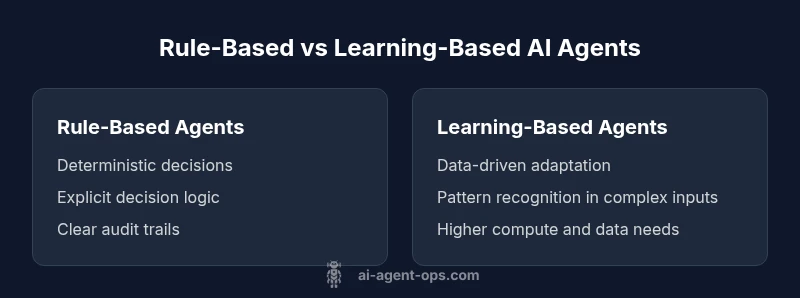

Explore a rigorous, objective comparison of rule-based versus learning-based AI agents, covering architecture, governance, data needs, safety, maintenance, and deployment to help teams choose the right approach for their use cases.

TL;DR: When comparing AI agents, start with governance, data availability, and risk tolerance, then evaluate architecture, safety, and maintenance. A structured, side-by-side approach reveals which design pattern best fits your use case, which helps with budget, compliance, and speed to value. See the comparison table below for the detailed trade-offs.

Foundational goals of comparing AI agents

Comparing AI agents is about more than selecting a single best technology. It is a disciplined process that weighs architecture, governance, data requirements, safety, and maintenance against business objectives and risk tolerance. The Ai Agent Ops team emphasizes starting with a clearly defined problem, success criteria, and constraints before weighing options. When teams frame outcomes such as faster decision cycles, auditable decisions, or safer human-in-the-loop control, they create a foundation for a fair, transparent evaluation. In practice, this means mapping decision points, input types, output expectations, and the regulatory or security requirements that shape the design space. The result is a robust decision framework that can be applied across projects, vendors, and internal experiments, reducing bias and accelerating progress. In the backdrop of evolving agentic AI, the goal remains to enable smarter, faster automation while keeping risk within acceptable bounds.

According to Ai Agent Ops, starting with a crisp problem statement and measurable criteria helps teams avoid feature-spotting and focuses the comparison on what truly matters for long-term value. This article uses a structured lens to illuminate how Rule-Based and Learning-Based AI Agents differ in architecture, governance, and operational realities. By keeping the focus on outcomes, you can translate insights into concrete next steps for pilots, procurement, and platform choices.

Key dimensions for evaluating AI agents

When you compare AI agents, use a structured set of criteria to keep the analysis objective. Key dimensions include:

- Architecture and decision logic: how decisions are made, whether rules are explicit or learned, and how predictable outcomes are across inputs.

- Data requirements: data volume, quality, labeling needs, and data governance implications.

- Governance and compliance: risk controls, audit trails, and regulatory obligations that constrain design choices.

- Safety and explainability: visibility into decisions, guardrails, and the ability to explain results to humans or regulators.

- Performance and latency: how fast decisions are made, throughput under load, and reliability under edge cases.

- Maintainability and upgrade cycles: ease of updates, monitoring needs, and the process for retraining or refreshing rules.

- Integration and ecosystem: compatibility with existing tools, APIs, and data pipelines.

- Total cost of ownership and ROI: upfront and ongoing costs, including compute, maintenance, and potential savings from automation.

For each dimension, evaluate Rule-Based Agents and Learning-Based Agents against the same criteria to keep the comparison fair and actionable.

Architecture and data flows: modular vs end-to-end

Architecture is the central differentiator in comparing AI agents. Rule-Based Agents typically rely on modular pipelines: input processing, decision rules, action adapters, and logging. This modularity makes behavior easy to audit and change in small, well-defined steps. Learning-Based Agents, by contrast, often operate end-to-end or rely on learned components embedded within a broader system. They can adapt to novel inputs, but their internal logic is less transparent, which raises explainability and governance concerns.
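The modular pipeline described above can be sketched in a few lines. This is a minimal illustration, not a reference design: the `Rule` and `RuleBasedAgent` names, the refund scenario, and the thresholds are all hypothetical and chosen only to show the input processing, decision rules, and logging stages as separate, auditable steps.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Rule:
    name: str                          # reason code written to the audit log
    condition: Callable[[dict], bool]  # explicit, human-written predicate
    action: str                        # action label handed to an adapter


@dataclass
class RuleBasedAgent:
    rules: list[Rule]
    default_action: str = "escalate_to_human"
    log: list[dict] = field(default_factory=list)

    def preprocess(self, raw: dict) -> dict:
        # Input processing: normalise keys so every rule sees the same shape.
        return {key.lower(): value for key, value in raw.items()}

    def decide(self, raw: dict) -> str:
        request = self.preprocess(raw)
        # Decision rules: the first matching rule wins, so order is part of the policy.
        for rule in self.rules:
            if rule.condition(request):
                return self._record(request, rule.name, rule.action)
        return self._record(request, "no_rule_matched", self.default_action)

    def _record(self, request: dict, reason: str, action: str) -> str:
        # Logging: every decision keeps its input and the rule that produced it.
        self.log.append({"input": request, "reason": reason, "action": action})
        return action


# Hypothetical rules for a refund workflow (thresholds are placeholders).
agent = RuleBasedAgent(rules=[
    Rule("refund_small", lambda r: "amount" in r and r["amount"] <= 50, "auto_approve"),
    Rule("refund_large", lambda r: "amount" in r and r["amount"] > 500, "manual_review"),
])
```

Calling `agent.decide({"Amount": 20})` returns an action and appends a log entry naming the rule that fired, which is the property that makes this style straightforward to audit.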

In practice, a hybrid approach often emerges: a rule-based supervisor governs a learned module, providing safety nets and fallback behavior. Evaluating these architectures requires mapping data flows from data ingestion to final action, identifying where rules or models inject bias, and assessing end-to-end latency under realistic workloads. As you compare AI agents, consider how data moves through each component, where failures would propagate, and how observability supports rapid debugging. An architecture that balances explicit governance with adaptive capability tends to perform more reliably in uncertain environments.
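One way to picture the hybrid pattern is a thin rule layer wrapped around a model call. The sketch below assumes a hypothetical model interface that returns an action and a confidence value; `toy_model`, the allowed action set, the amount limit, and the confidence threshold are illustrative placeholders rather than recommended settings.

```python
from typing import Callable, Tuple

# Hypothetical stand-in for a learned component: returns (action, confidence).
Model = Callable[[dict], Tuple[str, float]]

ALLOWED_ACTIONS = {"approve", "reject", "manual_review"}


def supervised_decision(request: dict, model: Model, min_confidence: float = 0.8) -> dict:
    # Hard rule evaluated before the model is consulted at all.
    if request.get("amount", 0) > 10_000:
        return {"action": "manual_review", "source": "rule:amount_limit"}

    action, confidence = model(request)

    # Guardrails on the model output: unknown or low-confidence answers fall back.
    if action not in ALLOWED_ACTIONS:
        return {"action": "manual_review", "source": "fallback:unknown_action"}
    if confidence < min_confidence:
        return {"action": "manual_review", "source": "fallback:low_confidence"}
    return {"action": action, "source": "model"}


def toy_model(request: dict) -> Tuple[str, float]:
    # Placeholder logic so the sketch runs; a real component would be trained.
    if request.get("amount", 0) < 100:
        return ("approve", 0.95)
    return ("approve", 0.6)
```

Recording the `source` of each decision shows how often the supervisor overrides the model, which is useful evidence when assessing where failures propagate.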

From Ai Agent Ops’ perspective, the choice between modular and end-to-end designs should hinge on the organization’s tolerance for risk, the maturity of data pipelines, and the need for auditable decisions. The goal is a design that is both robust and adaptable to changing business requirements.

Safety, transparency, and governance considerations

Safety and governance are non-negotiable when comparing AI agents for real-world use. Rule-Based Agents shine on safety due to their explicit logic, deterministic outputs, and easier auditing. Learning-Based Agents can achieve superior performance but require rigorous guardrails, continuous monitoring, and robust data governance to prevent drift and unintended behavior. Transparency is essential for audits and regulatory compliance; thus, explainability tools, reason codes, and traceable decision logs should be built into the evaluation framework.
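As a rough illustration of what a traceable decision log entry with a reason code might contain, the sketch below builds one JSON record per decision. The field names, the `decision_record` helper, and the choice to store a hash of the inputs rather than the inputs themselves are assumptions made for this example; real audit requirements vary by domain.

```python
import hashlib
import json
from datetime import datetime, timezone


def decision_record(agent_version: str, inputs: dict, action: str, reason_code: str) -> str:
    # Hash the canonicalised inputs so a log entry can be matched to source data
    # without copying potentially sensitive fields into the log itself.
    canonical = json.dumps(inputs, sort_keys=True).encode("utf-8")
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent_version": agent_version,
        "input_sha256": hashlib.sha256(canonical).hexdigest(),
        "action": action,
        "reason_code": reason_code,
    }
    return json.dumps(record, sort_keys=True)
```

The same record shape works for both agent types: a rule-based agent can log the rule that fired, while a learning-based agent might log a model version plus whatever explanation artifact its tooling produces.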

A practical governance approach includes defining who can modify rules or retrain models, establishing approval workflows, and implementing access controls for data and artifacts. It also involves assessing privacy implications, data provenance, and the risk of model leakage. For teams pursuing agentic AI, establishing clear fail-safes, redundancy, and human-in-the-loop paths reduces risk while preserving operational agility. In this space, Ai Agent Ops emphasizes documenting decision rationales and baseline safety criteria early in the assessment process.

Ai Agent Ops analysis shows that strong governance correlates with higher trust and lower long-term risk, especially in regulated industries. This is not a risk-free choice; it is a disciplined effort to balance autonomy with accountability.

Evaluation frameworks and scoring approaches

A rigorous evaluation uses a structured scoring framework rather than ad-hoc impressions. Start by defining a rubric that maps each criterion to a standardized scale (for example, 1–5 or 0–100) and assign weights reflecting business priorities. Common criteria include accuracy, reliability, explainability, latency, data requirements, maintainability, and safety/compliance. For each agent type, score against the rubric and aggregate results into a transparent scorecard. Document assumptions, test scenarios, and any caveats. A well-documented scoring process reduces bias and makes it easier to justify decisions to stakeholders. In practice, run parallel pilots, capture benchmarks under representative workloads, and use blind assessments where possible to isolate the contribution of architecture from data quality. This structured approach is central to comparing AI agents in a credible, repeatable way.
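A weighted rubric of this kind reduces to a weighted average. The sketch below assumes a 1–5 scale; the criteria subset, the weights, and both sets of scores are invented placeholders that show the mechanics only and are not measurements of any real system.

```python
def weighted_score(scores: dict[str, float], weights: dict[str, float]) -> float:
    # Both dictionaries must cover the same criteria so no score is silently dropped.
    if set(scores) != set(weights):
        raise ValueError("scores and weights must cover the same criteria")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(scores[c] * weights[c] for c in weights) / total_weight


# Placeholder weights reflecting a hypothetical compliance-heavy priority set.
WEIGHTS = {"accuracy": 3, "explainability": 3, "latency": 1, "maintainability": 2, "safety": 3}

# Placeholder 1-5 scores; in a real evaluation these come from pilot results.
rule_based = {"accuracy": 3, "explainability": 5, "latency": 5, "maintainability": 4, "safety": 5}
learning_based = {"accuracy": 5, "explainability": 2, "latency": 3, "maintainability": 3, "safety": 3}

scorecard = {
    "rule_based": round(weighted_score(rule_based, WEIGHTS), 2),
    "learning_based": round(weighted_score(learning_based, WEIGHTS), 2),
}
```

Changing the weights can flip the ranking, which is why the weights should be agreed and documented before any scores are collected.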

As you evolve your evaluation framework, consider creating a living document that captures lessons learned, test cases, and updated metrics to reflect changing business needs and regulatory landscapes.

Deployment life cycle and maintenance considerations

Deployment choices profoundly influence total cost and long-term value. Rule-Based Agents tend to require fewer retraining events, with updates focusing on rule edits, audit logs, and monitoring rules for drift. Learning-Based Agents typically demand regular retraining, model evaluation, and data pipeline maintenance to stay current with evolving inputs. A robust deployment plan includes versioning, rollback strategies, feature flags, and comprehensive monitoring for drift, latency, and failure modes. Implement automated testing for both regression and integration issues, and ensure observability across data quality, model behavior, and downstream systems. In addition, plan governance checks for updates, including impact assessments, risk reviews, and stakeholder approvals. The deployment life cycle should also address on-call responsibilities, post-deployment hotfixes, and continuous improvement loops that help maintain alignment with business goals over time.
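The versioning, rollback, and drift-monitoring pieces of such a plan can be sketched as follows. This is a deliberately simple illustration: the `VersionRegistry` class and `should_roll_back` function are hypothetical, and comparing average success rates across two windows is only one of many possible drift signals.

```python
from statistics import mean


def should_roll_back(baseline: list[float], recent: list[float], max_drop: float = 0.05) -> bool:
    # Flags a rollback when the recent average success rate falls more than
    # `max_drop` below the baseline average.
    if not baseline or not recent:
        raise ValueError("both windows need at least one observation")
    return mean(baseline) - mean(recent) > max_drop


class VersionRegistry:
    """Keeps an ordered history of deployed versions (rule sets or models)."""

    def __init__(self, initial: str):
        self.history = [initial]

    @property
    def active(self) -> str:
        return self.history[-1]

    def deploy(self, version: str) -> None:
        self.history.append(version)

    def roll_back(self) -> str:
        if len(self.history) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self.history.pop()
        return self.active
```

A monitoring job could call `should_roll_back` on a schedule and trigger `roll_back` only after the approval steps that the governance process requires.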

From a practical perspective, teams that invest in reproducible environments, clear rollback plans, and proactive monitoring typically experience smoother transitions when comparing AI agents across pilots and production phases.

Cost, ROI, and risk management

Understanding cost and ROI in comparing AI agents requires a holistic view. Rule-Based Agents generally incur lower ongoing compute costs if rules are simple and updates are infrequent, but maintenance costs can rise if business rules become complex or require frequent audits. Learning-Based Agents can offer substantial automation benefits and adaptability but often come with higher data, compute, and maintenance costs. The true ROI includes not only immediate efficiency gains but also long-term risks, such as model drift, regulatory exposure, and the cost of failed decisions. A comprehensive evaluation should include direct costs (infrastructure, licenses, personnel) and indirect costs (training, governance, risk mitigation). Build scenarios that estimate total cost of ownership over the desired horizon, and compare against expected gains in accuracy, speed, or safety. Finally, document risk exposures for each option so executives can make informed, defensible choices during budget cycles.
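A total-cost-of-ownership scenario over a fixed horizon can start as simple arithmetic. The sketch below assumes constant annual costs and gains with no discounting or risk adjustment, and the figures in the usage note are placeholders rather than benchmarks.

```python
def total_cost_of_ownership(upfront: float, annual_running: float, years: int) -> float:
    # Direct and indirect running costs are assumed to be folded into one annual figure.
    if years < 0:
        raise ValueError("years must be non-negative")
    return upfront + annual_running * years


def net_benefit(annual_gain: float, upfront: float, annual_running: float, years: int) -> float:
    # Expected gains over the horizon minus everything spent to obtain them.
    return annual_gain * years - total_cost_of_ownership(upfront, annual_running, years)
```

For example, with a placeholder upfront cost of 100,000, running costs of 20,000 per year, and gains of 90,000 per year, `net_benefit(90_000, 100_000, 20_000, 3)` evaluates to 110,000 over three years. Running the same calculation for each option with pessimistic and optimistic inputs gives a range rather than a single number.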

In Ai Agent Ops’s framework, align ROI calculations with governance and safety benchmarks to avoid overrating performance while underweighting risk. The aim is a balanced assessment that supports durable, compliant automation rather than short-term wins.

Practical decision scenarios and best-practice guidance

To translate theory into action, consider common decision scenarios when choosing between rule-based and learning-based AI agents. Scenario A might involve a well-defined, stable process with strong compliance needs, where a rule-based approach offers clear audit trails and predictable outcomes. Scenario B could be a high-velocity, data-rich domain where rapid adaptation and pattern recognition are paramount, favoring learning-based architectures. A hybrid approach, where a rule-based supervisor governs a learned component, often delivers a practical balance between safety and adaptability. In every case, establish governance gates, perform parallel pilots, and evolve your scoring rubric as you gather evidence. Ensure that your team documents learnings, clarifies ownership for data and rules, and maintains clear handoffs between developers, operators, and business stakeholders. This disciplined approach reduces risk and accelerates value realization when comparing AI agents across projects.

Ai Agent Ops recommends starting with a pilot program that tests a minimal viable architecture, then incrementally expands scope as criteria are met and governance is validated.

Real-world caveats and common pitfalls

Despite best intentions, teams often encounter pitfalls when comparing AI agents. Misalignment between business goals and evaluation criteria can lead to bias toward one architecture. Overemphasis on short-term performance at the expense of long-term safety or regulatory compliance can create hidden costs. Vendor lock-in, toolchain fragmentation, and data silos undermine portability and hinder future evolution. Another frequent issue is assuming that more data automatically yields better results; data quality and labeling quality matter as much as quantity. To avoid these traps, maintain a transparent, reproducible evaluation process, predefine success criteria, and ensure cross-functional involvement from data, security, product, and governance teams. A disciplined, evidence-driven approach to comparing AI agents reduces risk and accelerates successful outcomes beyond isolated pilots.

Authoritative sources and further reading

For readers seeking deeper context, consult foundational sources on AI risk, governance, and systems engineering. Notable authorities include NIST’s AI Risk Management Framework for governance and risk assessment, Stanford HAI’s ethics and policy perspectives, and MIT CSAIL’s research on autonomy and agent design. These sources help ground the practical guidance in this article and provide a basis for extending the comparison to emerging architectures and regulatory requirements.

Comparison

| Feature | Rule-Based Agents | Learning-Based Agents |
|---|---|---|
| Decision Logic | Explicit, rule-based decisions | Data-driven, learned mappings |
| Adaptability | Less adaptable to novel inputs | High adaptability to changing contexts |
| Maintenance | Predictable updates to rules | Ongoing retraining and data curation |
| Data Requirements | Lower data needs in narrow domains | High data needs for broader generalization |
| Safety & Compliance | Easier to audit, stronger guardrails | Harder to certify without robust controls |
| Latency & Cost | Typically lower compute, faster per-task | Higher compute cost, potential latency tradeoffs |
| Best For | Stable, regulated processes | Dynamic, complex environments |

Positives

- Clarifies trade-offs and design decisions

- Easier to audit and explain to stakeholders

- Lower upfront risk in regulated domains

- Faster initial setup for simple tasks

- Clear maintenance roadmap and ownership

Negatives

- Can oversimplify complex behaviors

- May underperform in novel situations without guardrails

- Risk of brittle rules as domains evolve

- Hybrid architectures add coordination complexity

Neither approach is universally best; choose based on risk tolerance, data availability, and velocity of change.

A balanced decision framework helps teams pick the architecture that aligns with governance, data strategy, and operational needs. When in doubt, start with a pilot that tests a minimal viable approach and iteratively adjust scope based on measurable criteria. The Ai Agent Ops team recommends prioritizing safety and auditability alongside performance.

Questions & Answers

What is the core difference between rule-based and learning-based AI agents?

Rule-based agents operate on explicit, human-defined rules, offering transparency and auditability. Learning-based agents rely on data to learn patterns and can adapt to new situations, but their internal logic is less transparent. Both have roles depending on the risk, data, and governance context.

Rule-based agents are transparent and predictable, while learning-based agents adapt with data but can be harder to interpret.

When is it appropriate to start with a rule-based agent?

Rule-based agents are well-suited for stable, high-regulation environments where decisions must be auditable and explainable. If your domain has clear rules and limited variability, starting with a rule-based approach reduces risk and accelerates governance.

Start with rules when things are stable and regulators require explainability.

What metrics should you use to evaluate AI agents?

Key metrics include accuracy or success rate, latency, stability, explainability scores, data quality, and governance compliance. Weight these by business priorities and run parallel pilots to gather evidence under realistic workloads.

Use a balanced scorecard that covers performance, safety, and governance.

How do governance and compliance affect agent selection?

Governance requirements drive the need for audit trails, access controls, and fail-safes. In regulated domains, stronger guardrails may favor rule-based designs or hybrid approaches with clear responsibility assignment.

Governance can tip the scale toward safer, auditable designs.

Is a hybrid approach feasible and beneficial?

Yes. Hybrid architectures combine the strengths of both worlds: a rule-based supervisor can govern a learned component to maintain safety while preserving adaptability. This often requires careful integration and clear ownership.

Hybrid designs can balance safety and adaptability.

What are common pitfalls to avoid when comparing AI agents?

Avoid bias by using structured scoring, ensure data quality and governance are not overlooked, and beware vendor lock-in. Document assumptions and test plans openly to prevent misalignment between stakeholders.

Plan, document, and test to dodge common mistakes.

What is a practical timeline for evaluating AI agents?

Pilot phases typically span data collection, baseline testing, governance validation, and stakeholder reviews. Build in iterative milestones and decision gates to progressively advance from proof-of-concept to production.

Start small, iterate, and maintain clear milestones.

Key Takeaways

- Define decision criteria before evaluation

- Prioritize governance and safety alongside performance

- Use a structured scoring rubric for fairness

- Consider hybrid architectures for balance

- Pilot early and iterate with cross-functional teams