Compare AI Agents for Coding: A Practical Guide

A balanced, evidence-based guide to comparing AI agents for coding, focusing on code generation, orchestration, and governance. Learn evaluation criteria, deployment patterns, and best practices to boost developer productivity in 2026.

According to Ai Agent Ops, when you compare AI agents for coding, prioritize agents that specialize in code generation, testing, and workflow orchestration. Quick wins come from evaluating accuracy, latency, and integration with your development stack. This guide helps you compare agents across goals, data access, and governance so you can pick the right tool for your team's needs.

What is the landscape for coding AI agents?

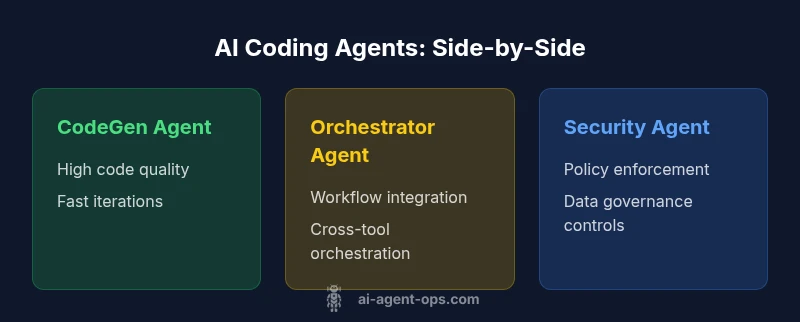

AI agents for coding are autonomous software entities designed to participate in software development tasks. They can propose code, run tests, orchestrate toolchains, and enforce policies. The landscape today includes three common archetypes: code-generation agents that write or refactor code; workflow/orchestrator agents that coordinate tasks across IDEs, CI/CD, and issue trackers; and security/compliance agents that enforce governance and data protections. For teams, the key is to balance creative speed with reliability and risk. According to Ai Agent Ops, the ecosystem is evolving toward modular, interoperable agents that can be composed into end-to-end pipelines. This shift makes it easier to adapt to changing requirements and reduces vendor lock-in. When you start comparing AI agents for coding, map your current bottlenecks, data flows, and governance constraints to the capabilities of each agent archetype.

Core capabilities to evaluate

When you compare AI agents for coding, focus on the core capabilities that determine actual performance in real development contexts. Look for high-quality code generation that reduces manual edits, robust testing and linting support, intelligent debugging helpers, and the ability to orchestrate multiple tools (IDE, version control, CI/CD, chat ops). Evaluate how well an agent handles refactoring suggestions, doc generation, and adherence to team conventions. Also consider the agent’s ability to learn from your codebase while respecting privacy and access controls. Finally, assess how easily the agent can be integrated into existing pipelines, how it handles errors, and whether it provides explainable recommendations to your developers.

Evaluation criteria: accuracy, latency, explainability, safety

A rigorous evaluation of coding AI agents requires a structured approach. Start with accuracy: how often do generated blocks compile and pass tests? Then consider latency: what is the end-to-end turnaround for a given task, such as generating a function or fixing a bug? Explainability matters for audits and onboarding; you should be able to trace a suggested change to a rationale or data source. Safety focuses on preventing leakage of sensitive information, avoiding unsafe patterns, and enforcing coding standards. Balance these criteria against your team’s risk tolerance and regulatory requirements. Ai Agent Ops emphasizes using real-world tasks tailored to your tech stack to avoid synthetic benchmarks that overstate capabilities.
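The accuracy and latency questions above can be turned into numbers once each agent attempt is recorded. The following is a minimal sketch, assuming a hypothetical `TaskResult` record that your own harness would fill in; the field names and the nearest-rank percentile are illustrative choices, not part of any specific tool.

```python
import math
from dataclasses import dataclass
from statistics import median


@dataclass(frozen=True)
class TaskResult:
    """One agent attempt at one task (hypothetical record shape)."""
    task_id: str
    compiled: bool
    tests_passed: bool
    latency_s: float  # end-to-end seconds from request to usable output


def summarize(results: list[TaskResult]) -> dict[str, float]:
    """Return compile rate, test pass rate, and latency statistics."""
    if not results:
        raise ValueError("no results to summarize")
    n = len(results)
    latencies = sorted(r.latency_s for r in results)
    # Nearest-rank 95th percentile; the index always stays within bounds.
    p95_index = math.ceil(0.95 * n) - 1
    return {
        "compile_rate": sum(r.compiled for r in results) / n,
        # A task only counts as passed if it compiled and its tests passed.
        "pass_rate": sum(r.compiled and r.tests_passed for r in results) / n,
        "median_latency_s": median(latencies),
        "p95_latency_s": latencies[p95_index],
    }


if __name__ == "__main__":
    # Placeholder data for illustration only.
    sample = [
        TaskResult("fix-null-check", True, True, 1.0),
        TaskResult("add-endpoint", True, False, 2.0),
        TaskResult("refactor-parser", False, False, 3.0),
        TaskResult("write-migration", True, True, 10.0),
    ]
    print(summarize(sample))
```

Reporting a tail latency alongside the median helps surface the slow cases that a single average would hide.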

Data access and privacy considerations

Data access policies are a foundational factor when you compare AI agents for coding. Clarify what data the agent can access, whether it can read private repos, and how it handles credentials. Prefer agents with strict least-privilege access and role-based controls, plus robust audit logs showing who accessed what and when. Consider how model updates might affect sensitive data and whether the vendor provides in-house data handling guarantees or on-prem deployment options. Establish a data retention policy and a plan for revoking access if a developer leaves the team. Privacy-by-design should be a non-negotiable criterion in your evaluation process.
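To make least-privilege access and audit logging concrete, here is a small sketch of a deny-by-default permission check that records every decision. The role names, permission strings, and `AuditLog` class are hypothetical; a real deployment would rely on your identity provider and a tamper-resistant log store.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical role-to-permission map. Anything not listed is denied.
ROLE_PERMISSIONS: dict[str, frozenset[str]] = {
    "codegen-agent": frozenset({"repo:read"}),
    "orchestrator-agent": frozenset({"repo:read", "ci:trigger"}),
}


@dataclass
class AuditLog:
    """In-memory audit trail: who tried what, on which resource, and when."""
    entries: list[dict] = field(default_factory=list)

    def record(self, actor: str, action: str, resource: str, allowed: bool) -> None:
        self.entries.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
            "resource": resource,
            "allowed": allowed,
        })


def authorize(role: str, action: str, resource: str, log: AuditLog) -> bool:
    """Allow the action only if the role explicitly grants it; log either way."""
    allowed = action in ROLE_PERMISSIONS.get(role, frozenset())
    log.record(role, action, resource, allowed)
    return allowed


if __name__ == "__main__":
    log = AuditLog()
    print(authorize("codegen-agent", "repo:read", "payments-service", log))
    print(authorize("codegen-agent", "repo:write", "payments-service", log))
    print(len(log.entries))
```

Logging denied attempts as well as granted ones is what lets an audit answer "who accessed what and when" after the fact.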

Integration and deployment architecture

Effective integration is a major differentiator when you compare AI agents for coding. Look for well-documented APIs, SDKs, and plug-ins that fit your stack (IDE, CI/CD, issue trackers, chat platforms). Assess whether agents can run in your preferred environment (cloud, on-prem, or hybrid) and how they scale with team size. A good deployment pattern involves containerized workers, clear ownership, and automated drift detection to ensure agents stay aligned with policies. Consider resilience requirements: retry logic, circuit breakers, and graceful fallbacks in case of outages. The goal is to create a cohesive automation layer that complements human developers rather than adding operational complexity.
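The resilience requirements mentioned above (circuit breakers and graceful fallbacks) can be sketched in a few lines. This is an illustrative, single-threaded example; the `CircuitBreaker` class, its thresholds, and the injectable `clock` parameter are assumptions made for the sketch, not a specific library's API.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitBreaker:
    """Stop calling a failing agent for a cool-down period and use a fallback."""

    def __init__(self, max_failures: int = 3, reset_after_s: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_failures = max_failures
        self.reset_after_s = reset_after_s
        self._clock = clock
        self._failures = 0
        self._opened_at: Optional[float] = None

    def call(self, primary: Callable[[], T], fallback: Callable[[], T]) -> T:
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self.reset_after_s:
                return fallback()  # circuit open: do not touch the agent
            # Cool-down elapsed: allow one trial call (half-open state).
            self._opened_at = None
            self._failures = self.max_failures - 1
        try:
            result = primary()
        except Exception:
            self._failures += 1
            if self._failures >= self.max_failures:
                self._opened_at = self._clock()  # open the circuit
            return fallback()
        self._failures = 0  # a success closes the circuit fully
        return result


if __name__ == "__main__":
    breaker = CircuitBreaker(max_failures=2, reset_after_s=5.0)

    def flaky_agent() -> str:
        raise TimeoutError("agent endpoint unavailable")  # simulated outage

    for _ in range(3):
        print(breaker.call(flaky_agent, lambda: "fallback: ask a human reviewer"))
```

Passing the clock in as a parameter keeps the breaker testable without real waiting; a production version would also need locking if it is shared across threads.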

Governance and compliance for coding agents

Governance features are a critical axis for any comparison of AI agents for coding. Look for policy enforcement capabilities that restrict dangerous operations, access controls that separate duties, and detailed audit trails for changes and decisions. Compliance support should cover data handling standards, code provenance, and actionable risk flags for suspicious activity. Your evaluation should also include how easily you can define and enforce coding standards, security requirements, and license terms across all agents in the stack. A well-governed setup reduces risk and accelerates adoption among engineering leaders.
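One way to picture policy enforcement that restricts dangerous operations is a gate that inspects each command an agent proposes before it runs. The sketch below assumes a hypothetical allow-list and a single force-push rule; real policies would be richer and would typically live in configuration rather than code.

```python
import shlex

# Hypothetical allow-list: the only programs an agent may invoke.
ALLOWED_PROGRAMS = frozenset({"git", "pytest", "ruff"})
# Hypothetical flags that turn an allowed "git push" into a denied one.
# Simplification: other variants (for example "--force-with-lease") are not covered.
FORCE_FLAGS = frozenset({"--force", "-f"})


def evaluate_command(command: str) -> tuple[bool, str]:
    """Return (allowed, reason) for a shell command proposed by an agent."""
    try:
        tokens = shlex.split(command)
    except ValueError:
        return False, "unparseable command"  # e.g. unbalanced quotes
    if not tokens:
        return False, "empty command"
    program, args = tokens[0], tokens[1:]
    if program not in ALLOWED_PROGRAMS:
        return False, f"program '{program}' is not on the allow-list"
    if program == "git" and "push" in args and FORCE_FLAGS.intersection(args):
        return False, "force-push is denied by policy"
    return True, "allowed"


if __name__ == "__main__":
    for proposed in ["pytest -q", "rm -rf /", "git push --force origin main"]:
        print(proposed, "->", evaluate_command(proposed))
```

An allow-list is used here instead of a deny-list because it fails closed: a command nobody anticipated is rejected by default, and the returned reason can feed the audit trail.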

Performance testing: benchmarks you can run

Performance testing is essential to validate your choices when you compare AI agents for coding. Create representative test suites that mirror your real work: bug fixes, feature scaffolding, and performance profiling tasks. Measure end-to-end time, error rates, and the need for human review. Use shadow testing where possible to compare agent outputs against human equivalents without impacting production. Document biases that emerge under certain tasks and adjust prompts, context windows, or tool integrations accordingly. This disciplined approach yields reliable signals about suitability for your projects.
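As a rough illustration of shadow testing, the sketch below tallies how agent output compares with the human solution for the same task, without anything being merged. The `ShadowRun` record and the outcome labels are hypothetical; how you decide "passed" and "needed review" depends on your own test suite and review process.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class ShadowRun:
    """Agent and human results for the same task (hypothetical record shape)."""
    task_id: str
    human_passed: bool
    agent_passed: bool
    agent_needed_review: bool  # a reviewer had to edit the agent's output


def shadow_report(runs: list[ShadowRun]) -> dict:
    """Count outcome categories and the share of agent runs needing review."""
    if not runs:
        raise ValueError("no shadow runs to report on")
    outcomes: Counter = Counter()
    for run in runs:
        if run.agent_passed and run.human_passed:
            outcomes["both_pass"] += 1
        elif run.agent_passed:
            outcomes["agent_only_pass"] += 1
        elif run.human_passed:
            outcomes["human_only_pass"] += 1
        else:
            outcomes["both_fail"] += 1
    review_rate = sum(r.agent_needed_review for r in runs) / len(runs)
    return {"outcomes": dict(outcomes), "agent_review_rate": review_rate}


if __name__ == "__main__":
    # Placeholder data for illustration only.
    runs = [
        ShadowRun("bugfix-1", True, True, False),
        ShadowRun("scaffold-2", True, False, True),
        ShadowRun("profile-3", True, True, True),
    ]
    print(shadow_report(runs))
```

Tasks that land in the "human only" category are a natural place to look for the task-specific biases mentioned above.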

Cost considerations and pricing ranges

Cost is a practical constraint when you compare AI agents for coding. Expect pricing models to vary by usage, licensing terms, and the level of support required. Compare not only per-task or per-seat fees but also potential hidden costs like data egress, training data access, and long-term maintenance. Build a total-cost-of-ownership picture that accounts for onboarding time, governance overhead, and integration efforts. Use pricing ranges to frame decisions, but avoid fixed numbers without vendor context. The goal is to identify the best value within your budget and risk tolerance.
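A total-cost-of-ownership picture is simple arithmetic once the inputs are listed. The sketch below assumes a hypothetical `CostInputs` structure that splits recurring from one-time costs; every number in the usage example is a placeholder, not a vendor price.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CostInputs:
    """Hypothetical cost inputs, all in the same currency."""
    seats: int
    per_seat_monthly: float       # licence fee per developer per month
    usage_monthly: float          # metered usage, data egress, and similar
    governance_monthly: float     # review, audit, and policy upkeep
    onboarding_one_time: float    # training and ramp-up
    integration_one_time: float   # wiring into IDE, CI/CD, trackers


def total_cost_of_ownership(costs: CostInputs, months: int) -> float:
    """Sum recurring costs over the period plus one-time costs."""
    if months <= 0:
        raise ValueError("months must be positive")
    recurring = (
        costs.seats * costs.per_seat_monthly
        + costs.usage_monthly
        + costs.governance_monthly
    ) * months
    one_time = costs.onboarding_one_time + costs.integration_one_time
    return recurring + one_time


if __name__ == "__main__":
    # Placeholder figures for illustration; replace with quotes from your vendors.
    example = CostInputs(
        seats=10, per_seat_monthly=20.0, usage_monthly=100.0,
        governance_monthly=50.0, onboarding_one_time=1000.0,
        integration_one_time=2000.0,
    )
    print(total_cost_of_ownership(example, months=12))
```

Running the same function with low and high estimates for each input gives the pricing range the paragraph above recommends, rather than a single fixed number.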

Real-world use cases by role

Developers benefit from instant code suggestions, automated test generation, and refactoring recommendations. Platform engineers gain from orchestrated toolchains and consistent deployment pipelines. Product managers see faster iteration cycles and clearer impact analyses. Security teams appreciate governance features that prevent data leaks and enforce compliance. When you compare AI agents for coding, map these roles to your organization’s workflows and select agents that complement the skills of your teams rather than duplicating effort.

Practical evaluation checklist for teams

Use a checklist to standardize your evaluation when you compare AI agents for coding. Confirm clear ownership and documented responsibilities. Verify data access boundaries and the presence of audit trails. Validate integration points with your core tools and simulate a typical sprint to assess real-world utility. Ensure you have a rollback plan and a feedback loop for continuous improvement. Finally, document success criteria and align them with your strategic goals so stakeholders can track progress over time.

Risk management and security best practices

Security and risk management are foundational. Ensure agents use encrypted channels, rotate credentials, and support least-privilege access. Implement a secure development lifecycle for AI tooling, including code reviews for agent-generated changes and independent testing. Maintain an incident response plan for missteps or data exposure and conduct regular security audits. By prioritizing risk management, you reduce the chance of costly surprises and boost confidence in automation across the organization.

Vendor comparison strategies

When evaluating vendors, seek interoperability and clear versioning of models and prompts. Favor solutions with open APIs, documented data handling policies, and transparent performance claims. Request reference customers and third-party assessments to validate claims. A rigorous comparison strategy also includes a side-by-side evaluation of SLAs, support channels, and roadmap alignment with your long-term AI strategy.
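A side-by-side vendor evaluation is often easier to discuss once it is reduced to a weighted score. The sketch below is one possible approach, not a prescribed method: the criteria names, weights, vendor labels, and 1-to-5 ratings are all placeholders your team would replace with its own judgments.

```python
def weighted_scores(
    weights: dict[str, float],
    ratings: dict[str, dict[str, float]],
) -> dict[str, float]:
    """Return the weighted average rating per option across all criteria."""
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    scores: dict[str, float] = {}
    for option, by_criterion in ratings.items():
        missing = weights.keys() - by_criterion.keys()
        if missing:
            raise KeyError(f"{option} is missing ratings for: {sorted(missing)}")
        scores[option] = (
            sum(weights[c] * by_criterion[c] for c in weights) / total_weight
        )
    return scores


if __name__ == "__main__":
    # Placeholder weights and 1-to-5 ratings, for illustration only.
    weights = {"sla": 2.0, "support": 1.0, "roadmap": 1.0}
    ratings = {
        "vendor_a": {"sla": 4, "support": 2, "roadmap": 3},
        "vendor_b": {"sla": 3, "support": 5, "roadmap": 4},
    }
    scores = weighted_scores(weights, ratings)
    print(scores)
    print(max(scores, key=scores.get))
```

Agreeing on the weights before collecting ratings helps keep the comparison from being tuned toward a preferred vendor.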

Implementation patterns: modular agents vs monoliths

A modular approach typically wins when you compare AI agents for coding, because it lets you compose specialized agents for different tasks. Modules can be upgraded independently and integrated into end-to-end pipelines. A monolithic agent may offer simplicity but risks vendor lock-in and slower adaptation to new tooling. Start with a minimal viable module set, then incrementally add capabilities as your team gains confidence and governance matures.
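The modular pattern can be illustrated with a shared interface that every agent implements, so modules can be swapped or upgraded independently. This is a sketch under stated assumptions: the `Agent` protocol, the two stub classes, and the dictionary-based context are hypothetical stand-ins for real agents, which would call a model or a tool instead of returning canned values.

```python
from typing import Protocol


class Agent(Protocol):
    """Minimal contract every module in the pipeline agrees to (hypothetical)."""
    name: str

    def run(self, context: dict) -> dict: ...


class CodeGenStub:
    """Stand-in for a code-generation agent."""
    name = "codegen"

    def run(self, context: dict) -> dict:
        return {**context, "patch": f"# TODO: implement {context['task']}"}


class PolicyCheckStub:
    """Stand-in for a governance agent that vets the generated patch."""
    name = "policy"

    def run(self, context: dict) -> dict:
        approved = "secret" not in context.get("patch", "").lower()
        return {**context, "approved": approved}


def run_pipeline(agents: list[Agent], context: dict) -> dict:
    """Pass the context through each agent in order and record the route taken."""
    trace: list[str] = []
    for agent in agents:
        context = agent.run(context)
        trace.append(agent.name)
    return {**context, "trace": trace}


if __name__ == "__main__":
    result = run_pipeline([CodeGenStub(), PolicyCheckStub()], {"task": "parse config"})
    print(result)
```

Because the pipeline depends only on the `Agent` contract, replacing one stub with a different vendor's module does not require changes to the others.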

Change management and developer onboarding

Adoption hinges on people and processes as much as technology. Provide training that maps to real development tasks and create clear policies for when to intervene. Establish lightweight governance gates to help developers understand expectations around code quality, data handling, and security. Use onboarding checklists that align with your organization's values and risk posture, and collect feedback to refine the agent ecosystem over time.

Case studies (anonymous examples)

In anonymized deployments, teams often report faster feature delivery and improved test coverage after introducing codemod and orchestration agents. Other organizations note better governance and fewer security concerns when strict access controls and audit trails are in place. While results vary, the common thread is a disciplined approach to evaluation, phased rollout, and continuous learning from each sprint.

Future trends in coding agents

The field is moving toward tighter integration with development environments, improved explainability, and stronger governance controls. Expect more capability to customize agents for domain-specific tasks, better data privacy guarantees, and scalable orchestration that respects team boundaries. As models evolve, successful teams will prioritize interoperability, modular architectures, and transparent policies to maximize ROI while minimizing risk.

Feature Comparison

| Feature | CodeGen Agent | Orchestrator Agent | Security/Compliance Agent |
|---|---|---|---|
| Code generation quality | high | medium | medium |
| Latency (response time) | low | medium | low |
| Integration complexity | low | medium | high |
| Data privacy & access control | strong | adequate | strong |
| Governance features | robust | partial | robust |
| Best for | heavy code generation | end-to-end dev automation | regulated environments |

Positives

- Accelerates code development and reduces repetitive tasks

- Clarifies responsibilities by function (codegen, orchestration, governance)

- Facilitates modular architectures and easier upgrades

- Supports repeatable pipelines across teams

What's Bad

- Potential tool fragmentation if agents aren't integrated

- Higher total cost of ownership with multiple agents

- Security and privacy risks if data access isn't tightly controlled

Specialized AI agents for coding deliver the best value when you match capabilities to your workflow.

If you prioritize high-quality code generation, fast orchestration, or strong governance, choose the agent type that aligns with that need; a mixed setup often yields the best results.

Questions & Answers

What is the difference between a code-generation agent and an orchestrator agent?

CodeGen focuses on producing code and refactoring; Orchestrator coordinates tasks across tools, pipelines, and environments. Both roles complement human developers when integrated with clear governance.

CodeGen writes code, while the Orchestrator wires tools together.

How should I measure the accuracy of AI coding agents?

Use real-world tasks with predefined success criteria, verify against regression tests, and incorporate peer reviews to assess correctness and maintainability.

Test them on real tasks and get human feedback.

Are governance features essential when evaluating coding agents?

Yes. Governance features like access controls, audit trails, and policy enforcement help manage risk, ensure compliance, and protect code and data.

Governance helps you stay in control of what the agent can do.

Can I mix agents from different vendors?

Yes, if interfaces are well-defined and governance is enforced. Interoperability is key to preventing vendor lock-in and enabling flexible architectures.

You can mix and match as long as things fit together.

What about costs and pricing models?

Expect tiered pricing with usage-based elements and possible setup fees. Consider total cost of ownership, including integration and governance overhead.

Costs vary; plan for scale and governance needs.

How do I start a pilot project for coding agents?

Define a narrow scope, establish success metrics, and run a controlled pilot with a small team before broader rollout.

Start small, measure outcomes, then expand.

Key Takeaways

- Define your top coding objective before evaluating agents

- Choose modular agents to reduce lock-in

- Pilot on a low-risk project to validate outcomes

- Ensure governance and data handling rules are in place

- Monitor integration quality and provide ongoing feedback loops