AI Agent for Test Case Generation: A Practical How-To

A comprehensive guide showing developers how to use an AI agent to automatically generate robust test cases, validate coverage, and integrate the workflow into CI/CD with governance.

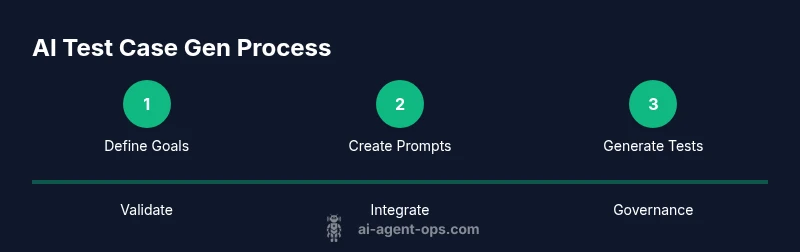

This how-to shows how to use an AI agent to generate robust test cases. You'll define goals, choose tooling, craft prompts, orchestrate generation, validate outcomes, and integrate the workflow into CI/CD with governance. The guide emphasizes practical steps, safe automation, and measurable quality.

What is an AI agent for test case generation?

An AI agent for test case generation is a software entity that combines goal-directed reasoning with generative capabilities to produce test cases from input specifications, user stories, or API contracts. Unlike simple scripted test creators, an AI agent can plan a sequence of actions, ask clarifying questions, and iteratively refine outputs based on feedback from validators and metrics. This combination of planning, generation, and validation enables teams to scale test design, uncover edge cases, and adapt to evolving requirements without losing traceability.

In practice, an AI agent operates within an environment that provides the input specs, the target framework, and the desired coverage criteria. It then selects appropriate test formats, writes test code, and suggests complementary test data. Governance layers ensure outputs meet standards for readability, maintainability, and non-functional requirements. For developers and product teams exploring AI-powered automation, this approach blends human oversight with machine-assisted creativity to improve both speed and quality.

Key concepts to keep in mind are goal-driven planning, constraint-aware generation, feedback loops from validators, and audit trails for reproducibility.

Why use AI agents for test case generation?

Adopting AI agents for test case generation offers several advantages. First, automation scales the production of test cases across multiple features, APIs, and edge scenarios that humans might miss. Second, AI agents can quickly generate diverse inputs, boundary conditions, and negative tests, expanding coverage beyond conventional test suites. Third, the cycle from spec to tests can be shortened, freeing engineers to focus on design decisions and critical risk areas. Fourth, AI-suggested test ideas can surface integration and data-flow issues early, reducing late-stage defects. Fifth, when governed properly, AI agents apply consistent test style and naming conventions, improving maintainability across teams. These benefits depend on solid governance, careful prompt design, and ongoing monitoring to prevent drift or biased test generation; without them, generated tests can add flakiness rather than remove it.

To harness these benefits, teams should start with a narrow scope, then expand the agent’s domain as confidence grows. This approach keeps projects manageable while delivering tangible improvements in test quality and velocity.

Core components of an effective AI agent workflow

An effective AI agent workflow for test case generation typically includes four core components: a planner, a generator, a validator, and an orchestrator. The planner interprets input specs and defines goals such as coverage criteria and test types (functional, edge, performance). The generator writes test cases in the target framework, including code snippets, inputs, and assertions. The validator checks that generated tests meet coverage and quality thresholds, and can run a subset of tests to confirm syntactic correctness and runtime behavior. The orchestrator coordinates the flow, handles versioning, and ensures reproducibility by logging prompts, outputs, and evaluation results. Together, these components enable end-to-end automation with human-in-the-loop checkpoints. Supporting components like a prompts library, a data catalog, and a governance layer help maintain consistency and traceability across generations.

Operational practices such as modular prompts, version-controlled templates, and clear evaluation metrics are essential for long-term success.

Designing prompts and prompt strategies

Prompt design is the backbone of reliable AI-driven test generation. Effective prompts combine system instructions, role definitions, task descriptions, and example inputs with guardrails that prevent unsafe or non-compliant outputs. A typical prompting strategy includes: (1) a system prompt that sets the agent’s goals and constraints; (2) a role prompt that defines the agent as a test designer; (3) a task prompt describing the input spec and desired coverage; (4) few-shot examples illustrating preferred test structures; and (5) an evaluation prompt that asks the agent to check its output against the coverage goals. Treat that self-check as a first filter only; actual coverage must still be measured by running the tests with a coverage tool. Iterative prompt refinement is critical: start with simple prompts, analyze failures, then add clarifying constraints or examples. Structured templates (and, where useful, asking the model to explain its reasoning) make outputs easier to interpret, but reproducibility comes from versioning prompts and logging model settings, not from prompt style alone. Include guardrails that rule out brittle or unsafe test logic, and request an explicit test data format compatible with your framework.
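One way to assemble the five layers is as a list of chat messages. The sketch below assumes the generic `role`/`content` message shape used by many chat-completion APIs; check your provider's format (some accept only one system message, in which case merge the system and role prompts). The prompt wording and `build_messages` are hypothetical. Few-shot pairs are placed before the task so that the spec is the last thing the model reads.

```python
from typing import Dict, List, Tuple

SYSTEM_PROMPT = (
    "You write pytest test modules only. Do not call external services, "
    "read real credentials, or modify files outside the test directory."
)
ROLE_PROMPT = "Act as a senior test designer who favors small, deterministic tests."
EVAL_PROMPT = (
    "After the code, list each spec behavior and the test name that covers it; "
    "mark any behavior that has no test as UNCOVERED."
)


def build_messages(spec_text: str, coverage_goal: str,
                   examples: List[Tuple[str, str]]) -> List[Dict[str, str]]:
    """Assemble the five prompt layers as generic role/content chat messages."""
    messages = [
        {"role": "system", "content": SYSTEM_PROMPT},  # (1) goals and constraints
        {"role": "system", "content": ROLE_PROMPT},    # (2) role definition
    ]
    for example_spec, example_tests in examples:       # (4) few-shot pairs
        messages.append({"role": "user", "content": example_spec})
        messages.append({"role": "assistant", "content": example_tests})
    task = f"Spec:\n{spec_text}\n\nCoverage goal: {coverage_goal}"  # (3) task
    messages.append({"role": "user", "content": task + "\n\n" + EVAL_PROMPT})  # (5)
    return messages


msgs = build_messages(
    "POST /orders creates an order; quantity must be 1..100.",
    "every documented status code",
    [("GET /ping returns 200.", "def test_ping_ok(): ...")],
)
```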

A practical pattern is to separate the prompt into a static template and a variable context block so you can reuse the same design across multiple features.
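A minimal sketch of the static-template/variable-context split, using Python's standard `string.Template`. The template wording and the `render_prompt` helper are assumptions for illustration; the static part would live in your version-controlled prompts library while the context block changes per feature.

```python
from string import Template
from typing import List

# Static part: reviewed, versioned, and reused across features.
STATIC_TEMPLATE = Template(
    "Generate $framework tests for the feature described below.\n"
    "Required test types: $test_types.\n"
    "Name tests test_<behavior>_<condition> and keep each test independent.\n"
    "--- CONTEXT ---\n"
    "$context\n"
)


def render_prompt(framework: str, test_types: List[str], context: str) -> str:
    """Fill the static template with the per-feature context block."""
    return STATIC_TEMPLATE.substitute(
        framework=framework,
        test_types=", ".join(test_types),
        context=context,  # substituted values are not re-parsed, so "{id}" or "$" are safe
    )


prompt = render_prompt("pytest", ["functional", "boundary"], "Endpoint: GET /orders/{id}")
```

`Template.substitute` raises `KeyError` when a placeholder is left unfilled, so a template and its context cannot silently drift apart.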

Tooling and integration options

Choosing the right toolchain for AI-driven test generation depends on your tech stack and CI/CD practices. Common choices include a language model provider (such as OpenAI or an open-source alternative) for generation, a test framework (e.g., pytest, JUnit) for execution, and a code repository with CI pipelines (GitHub Actions, GitLab CI). Integration points should include a generator service or module that accepts specs, a validator module that assesses coverage and correctness, and an orchestration layer that logs outputs and maintains provenance. For data handling, ensure your setup supports test data masking and synthetic data generation when necessary. A robust pipeline also includes monitoring and alerting for failures, prompt drift, or degraded test quality. Finally, design your tooling to support multi-language tests and easy onboarding for new team members, with clear documentation and templates.

Security considerations matter: protect API keys, limit access, and audit prompt changes to maintain governance.
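As a small illustration of keeping keys out of code and logs, the sketch below reads the key from an environment variable (most CI systems can inject secrets this way) and redacts it before anything is logged. The variable name `LLM_API_KEY` and both helpers are hypothetical.

```python
import os


def load_api_key(var_name: str = "LLM_API_KEY") -> str:
    """Read the model API key from the environment (e.g., a CI secret), never from source."""
    key = os.environ.get(var_name, "").strip()
    if not key:
        raise RuntimeError(f"{var_name} is not set; store it as a CI secret, not in the repo")
    return key


def redact(text: str, secret: str) -> str:
    """Remove the key from anything that will be logged (prompts, errors, audit records)."""
    return text.replace(secret, "[REDACTED]") if secret else text
```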

Data considerations: test data, coverage, and risk

Data is the lifeblood of test generation. High-quality, representative data helps the AI agent produce relevant tests and avoid brittle or irrelevant cases. Start with a data catalog that maps input schemas to test categories (positive, negative, boundary, null cases). When privacy is a concern, use synthetic data or de-identified samples. Coverage metrics such as statement, branch, and path coverage can guide generation goals, while mutation testing can reveal weaknesses in the generated suite. Watch for drift: as the application changes, prompts, templates, and catalog entries may need updates to reflect new behaviors or APIs. To reduce risk, implement guardrails that limit generation to approved test types and enforce naming conventions, code style, and test structure. Finally, keep a registry with lineage tracking so every generated test can be traced back to its input spec and prompts.
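A data catalog can be as simple as a mapping from schema fields to constraints, from which the four test categories are derived mechanically. The sketch below assumes integer fields with inclusive `minimum`/`maximum` bounds and a `nullable` flag; the field names and the `(field, category, value, expected_valid)` tuple shape are hypothetical. The resulting cases can be passed to the agent as required inputs or used to check that the generated suite touches every category.

```python
from typing import Any, Dict, List, Tuple

# Hypothetical catalog: input schema fields mapped to their constraints.
CATALOG: Dict[str, Dict[str, Any]] = {
    "quantity": {"type": "integer", "minimum": 1, "maximum": 100, "nullable": False},
    "discount": {"type": "integer", "minimum": 0, "maximum": 50, "nullable": True},
}

Case = Tuple[str, str, Any, bool]  # (field, category, value, expected_valid)


def cases_for_field(name: str, rules: Dict[str, Any]) -> List[Case]:
    """Derive positive, boundary, negative, and null cases for one integer field."""
    lo, hi = rules["minimum"], rules["maximum"]
    return [
        (name, "positive", (lo + hi) // 2, True),
        (name, "boundary", lo, True),
        (name, "boundary", hi, True),
        (name, "boundary", lo - 1, False),
        (name, "boundary", hi + 1, False),
        (name, "negative", "not-a-number", False),
        (name, "null", None, rules.get("nullable", False)),
    ]


def cases_from_catalog(catalog: Dict[str, Dict[str, Any]]) -> List[Case]:
    cases: List[Case] = []
    for name, rules in catalog.items():
        if rules.get("type") == "integer":  # other types would need their own rules
            cases.extend(cases_for_field(name, rules))
    return cases


cases = cases_from_catalog(CATALOG)
```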

A disciplined data strategy connects test generation choices to real-world risk and quality objectives.

Validation and governance: how to verify and audit AI-generated tests

Validation and governance are essential to trusted AI-assisted test generation. Validation should combine automated checks (syntax, runtime, isolation, and quick coverage estimates) with human review for edge cases and domain-specific nuances. Establish acceptance criteria such as minimum line and branch (or path) coverage thresholds and readability standards. Maintain an auditable trail of prompts, inputs, outputs, and evaluation results to enable rollback or inspection. Implement a human-in-the-loop stage for high-risk features or critical APIs, and use versioned templates to track changes over time. Regular audits should examine prompt drift, test flakiness, and alignment with regulatory or compliance requirements. Clear ownership and SLAs for governance activities help keep the process sustainable as the project scales.
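The audit trail and acceptance criteria can both be mechanical. This sketch writes one JSON Lines record per generation (prompt, output, their SHA-256 hashes, template version, and metrics) and applies a coverage gate. The record fields, function names, and the 80%/70% thresholds are placeholders, and the coverage numbers are assumed to come from a real coverage run rather than from the model.

```python
import hashlib
import json
import time
from typing import Dict


def text_digest(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def audit_record(prompt: str, output: str, template_version: str,
                 metrics: Dict[str, float]) -> dict:
    """One auditable entry: what was asked, what came back, and how it scored."""
    return {
        "timestamp": time.time(),
        "template_version": template_version,
        "prompt": prompt,
        "prompt_sha256": text_digest(prompt),
        "output": output,
        "output_sha256": text_digest(output),
        "metrics": metrics,
    }


def append_audit(path: str, record: dict) -> None:
    """Append as JSON Lines so the trail is easy to diff, grep, and replay."""
    with open(path, "a", encoding="utf-8") as fh:
        fh.write(json.dumps(record, sort_keys=True) + "\n")


def passes_gate(metrics: Dict[str, float], min_line: float = 0.80,
                min_branch: float = 0.70) -> bool:
    """Acceptance gate; thresholds are placeholders for your own criteria."""
    return (metrics.get("line_coverage", 0.0) >= min_line
            and metrics.get("branch_coverage", 0.0) >= min_branch)
```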

Remember that governance is not a barrier but a safety net that preserves quality and trust in automated test design.

Common challenges and how to troubleshoot

AI-generated test cases can run into several problems. Prompt drift can cause outputs to diverge from the intended domain; brittle inputs, such as hard-coded timestamps or reliance on external services, can produce flaky tests; and misalignment with business rules can produce irrelevant tests. Address these issues by tightening prompts, adding explicit constraints, and feeding validator results back into the next generation round. For new features, start with a minimal prompt and a narrow scope, then expand incrementally rather than overwhelming the agent. Regularly review sample outputs to detect bias, coverage gaps, or unsafe logic. If generation stalls or produces inconsistent results, simplify the prompt, provide more concrete examples, or lower the sampling temperature to make outputs more consistent. Establish clear fallback rules: if the AI cannot produce reliable tests within a set time or number of attempts, revert to a human-in-the-loop process or a proven baseline set of tests. Finally, measure impact with metrics such as defect detection rate, test suite growth, and time-to-coverage improvements to justify ongoing investment.

A practical end-to-end example: from spec to tests

Take a REST API endpoint as a concrete example. Start with the API spec (paths, methods, request/response schemas) and a goal of covering 90% of the logical branches. The AI agent receives the spec, the target test framework, and prompts describing the desired test types (functional, boundary, and negative). It generates test cases with inputs, expected outcomes, and assertions. A validator runs the generated tests, checks syntax and basic coverage, and returns feedback. The agent then refines its prompt context to fill any gaps, regenerates, and finally hands off the tests to the repository as a PR-ready change. Throughout the process, every test, prompt, and decision is logged for traceability, and governance gates ensure alignment with standards. In practice, this pattern accelerates test design while preserving rigor and maintainability.
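The deterministic parts of this example (enumerating operations, boundary inputs, and documented status codes) do not need a model at all, and they give the validator a checklist against which to judge the agent's output. The sketch below walks an OpenAPI-style dictionary; `SPEC` is a hypothetical fragment, and the code deliberately ignores `$ref` resolution, path-level parameters, and `exclusiveMinimum`/`exclusiveMaximum`, which a real tool would need to handle.

```python
from typing import Any, Dict, List, Tuple

HTTP_METHODS = {"get", "put", "post", "delete", "options", "head", "patch", "trace"}


def operations(spec: Dict[str, Any]) -> List[Tuple[str, str, Dict[str, Any]]]:
    """List (METHOD, path, operation) for every operation in an OpenAPI-style dict."""
    ops = []
    for path, item in spec.get("paths", {}).items():
        for key, op in item.items():
            if key.lower() in HTTP_METHODS:  # skip path-level keys such as "parameters"
                ops.append((key.upper(), path, op))
    return ops


def integer_boundary_cases(spec: Dict[str, Any]) -> List[Dict[str, Any]]:
    """Boundary inputs for integer parameters that declare minimum and/or maximum."""
    cases = []
    for method, path, op in operations(spec):
        for param in op.get("parameters", []):
            schema = param.get("schema", {})
            if schema.get("type") != "integer":
                continue
            name, op_id = param["name"], f"{method} {path}"
            if "minimum" in schema:
                lo = schema["minimum"]
                cases.append({"op": op_id, "param": name, "value": lo, "valid": True})
                cases.append({"op": op_id, "param": name, "value": lo - 1, "valid": False})
            if "maximum" in schema:
                hi = schema["maximum"]
                cases.append({"op": op_id, "param": name, "value": hi, "valid": True})
                cases.append({"op": op_id, "param": name, "value": hi + 1, "valid": False})
    return cases


def response_checklist(spec: Dict[str, Any]) -> List[str]:
    """One line per documented status code; each should map to at least one test."""
    return [f"{m} {p} -> {code}" for m, p, op in operations(spec)
            for code in op.get("responses", {})]


SPEC = {  # hypothetical spec fragment
    "paths": {
        "/orders/{id}": {
            "parameters": [],  # path-level key, not an operation
            "get": {
                "parameters": [{"name": "id", "in": "path", "required": True,
                                "schema": {"type": "integer", "minimum": 1}}],
                "responses": {"200": {"description": "Order found"},
                              "404": {"description": "No such order"}},
            },
        }
    }
}

checklist = integer_boundary_cases(SPEC)
```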

Tools & Materials

- AI platform or LLM access (API key or hosted model; ensure rate limits align with team needs)

- Test framework (e.g., pytest, JUnit, or an equivalent in your language)

- CI/CD integration tool (e.g., GitHub Actions, Jenkins; supports workflow automation)

- Test specification source (OpenAPI, Swagger, user stories, or requirements documents)

- Prompts library (templates, guardrails, and evaluation prompts for consistency)

- Data governance and masking tools (optional but recommended when working with production data)

Steps

Estimated time: 2-6 hours

Step 1: Define testing goals and constraints

Articulate the feature scope, expected quality levels, and measurable coverage targets. Identify non-functional goals (security, performance) and regulatory constraints. Document acceptance criteria to guide AI behavior.

Tip: Write concrete acceptance criteria before drafting prompts to reduce ambiguity.

Step 2: Map AI agent roles and data flow

Outline the planner, generator, validator, and orchestrator components. Define data inputs (specs, examples), outputs (tests, logs), and the feedback loop.

Tip: Keep role responsibilities modular to simplify maintenance.

Step 3: Create prompts and guardrails

Develop a prompt template with a system prompt, role prompt, task prompt, and example IO. Add guardrails to restrict unsafe or irrelevant outputs and ensure alignment with your framework.

Tip: Test prompts on small specs first and iterate based on results.

Step 4: Generate initial test cases

Load the input spec and run the AI agent to produce a first batch of tests. Include a variety of functional, boundary, and negative cases with clear assertions.

Tip: Prefer shorter, well-structured tests to improve readability and maintainability.

Step 5: Validate and filter outputs

Run generated tests through a validation harness to check syntax, coverage, and expected outcomes. Flag failures for human review and prompt refinement.

Tip: Automate as much validation as possible, but keep a human in the loop for edge cases.

Step 6: Iterate prompts and generation

Use feedback from validation to refine prompts and templates. Re-run generation to improve coverage and reduce duplicates.

Tip: Track prompt changes in a version-controlled prompts library.

Step 7: Integrate into CI/CD

Embed the test-generation workflow into your CI/CD pipeline so new specifications auto-generate tests on change events, with PR-based reviews.

Tip: Maintain a clear separation between generated tests and hand-authored tests.

Step 8: Governance, auditing, and maintenance

Set ownership, review cadence, and gating rules. Regularly audit prompts, outputs, and coverage metrics to prevent drift.
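Prompt audits can be partly automated by fingerprinting each template at review time and flagging any that have changed since. This sketch (hypothetical names) compares SHA-256 hashes of the current templates against the approved set; a CI check could fail whenever the returned list is non-empty, forcing a review before the changed prompts are used.

```python
import hashlib
from typing import Dict, List


def fingerprint(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def unapproved_changes(templates: Dict[str, str], approved: Dict[str, str]) -> List[str]:
    """Names of prompt templates whose current text differs from the approved fingerprint.

    `templates` maps template name -> current text; `approved` maps name -> SHA-256
    recorded at the last governance review. New templates count as unapproved.
    """
    return sorted(name for name, text in templates.items()
                  if approved.get(name) != fingerprint(text))
```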

Tip: Schedule quarterly governance reviews to keep prompts current.

Questions & Answers

What is an AI agent for test case generation?

An AI agent for test case generation is a guided AI component that plans, creates, and validates test cases from input specifications. It combines goal-driven reasoning with generation to automate parts of the testing process while preserving auditability and governance.

How do I ensure quality in AI-generated tests?

Quality is ensured through structured prompts, automated validation, human-in-the-loop for high-risk areas, and governance that tracks prompts and outputs. Regular reviews of coverage metrics and test readability help maintain standards.

What should be included in prompts for test generation?

Prompts should include system instructions, role definitions, task descriptions, example IO, constraints, and evaluation prompts. Separate static templates from dynamic context to enable reuse and easier refinement.

Can AI-generated tests replace human-written tests?

AI-generated tests should augment, not replace, human-written tests. Use human review for edge cases, domain rules, and safety checks, ensuring the final suite reflects both automation benefits and expert judgment.

How do I integrate AI test generation into CI/CD?

Integrate by adding a generator-and-validator stage in your pipeline, triggering on spec changes or PRs. Ensure generated tests land in a separate directory with PR-based reviews and clear provenance.

What about data privacy and test data in AI work?

Use synthetic data or masked inputs when generating tests. Maintain data governance to prevent leakage of sensitive information and to comply with privacy regulations.
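A minimal masking sketch, assuming emails are the sensitive field: each address is replaced with a stable pseudonym derived from a salted SHA-256 hash, so the same input always masks to the same value and tests stay deterministic. The regex is deliberately simple and will not match every valid address, and this is pseudonymization rather than full anonymization; keep the salt secret. `mask_emails` is a hypothetical helper.

```python
import hashlib
import re

EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def mask_emails(text: str, salt: str) -> str:
    """Replace each email with a stable pseudonym on the reserved .test domain."""
    def replace(match: re.Match) -> str:
        digest = hashlib.sha256((salt + match.group(0)).encode("utf-8")).hexdigest()[:10]
        return f"user_{digest}@example.test"

    return EMAIL_RE.sub(replace, text)
```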

Key Takeaways

- Define clear testing goals and acceptance criteria

- Design modular AI agent roles for maintainability

- Use structured prompts with guardrails and examples

- Integrate validation and governance into CI/CD

- Monitor prompts and outputs to prevent drift