Cool AI Agents to Build: A Practical Guide

Learn how to design, implement, and scale AI agents for smarter automation. This guide covers architectures, safety practices, evaluation, and step-by-step workflows that help developers and leaders ship reliable agents quickly. According to Ai Agent Ops, practical agent design starts with clear goals, measurable constraints, and iterative testing.

Defining cool AI agents to build

According to Ai Agent Ops, a cool AI agent is a modular software unit that can observe, reason, and act within a defined domain. The value comes not from clever prompts alone but from the combination of a well-scoped objective, robust data access, and a reliable decision loop. In practice, define a concrete use case, boundary conditions, and success metrics before writing code. Consider common domains such as automation, data synthesis, and decision support, and map each domain to a small but useful set of agent capabilities. Document guardrails (what the agent should never do) and decide how you will measure success: latency, accuracy, confidence, and auditability. Start small: a single agent with a focused task is easier to test and govern than a sprawling system, and it makes it easier to learn from failures and iterate quickly.
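One way to make the objective, guardrails, and success metrics concrete before writing any agent logic is to record them in a small data structure. The sketch below is only an illustration: the `AgentSpec` class, its field names, and the example values are assumptions, not part of any framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentSpec:
    """Written-down scope for one agent (all field names are illustrative)."""

    objective: str                     # the single task the agent owns
    domain: str                        # e.g. "automation" or "decision support"
    forbidden_actions: frozenset[str]  # guardrails: what the agent must never do
    max_latency_s: float               # success metric: response-time budget
    min_accuracy: float                # success metric: fraction of correct outcomes

    def allows(self, action: str) -> bool:
        """Return True if the action is not on the guardrail list."""
        return action not in self.forbidden_actions

    def meets_targets(self, latency_s: float, accuracy: float) -> bool:
        """Check measured results against the agreed success metrics."""
        return latency_s <= self.max_latency_s and accuracy >= self.min_accuracy


# Hypothetical example: an agent that only summarizes support tickets.
spec = AgentSpec(
    objective="Summarize new support tickets",
    domain="decision support",
    forbidden_actions=frozenset({"delete_ticket", "email_customer"}),
    max_latency_s=5.0,
    min_accuracy=0.9,
)
```

Keeping the spec immutable (`frozen=True`) means the boundaries cannot be changed silently at runtime; changing them requires a visible code change that can be reviewed.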

Core architectures for agentic AI

There are several patterns teams use to structure AI agents. A planner-driven architecture uses a reasoning module to decide next actions, then delegates execution to a diverse set of tools. A multi-agent orchestration pattern coordinates several autonomous agents to handle complex tasks in parallel. A tool-using agent integrates external APIs and data sources to fetch information or perform actions in the real world. Each pattern has trade-offs in latency, reliability, and complexity. When choosing an approach, start with a minimal viable core (a single agent with a defined task) and add orchestration or tool integration as needed. Emphasize modular interfaces and clean separation between reasoning, planning, and action. This makes the system easier to test and extend.
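To illustrate the separation between reasoning and action in a planner-driven, tool-using agent, here is a minimal sketch. The planner is a rule-based stand-in (a real one might call a language model), and the tool names `word_count` and `shout` are invented for the example.

```python
from typing import Callable

# A tool is any callable that takes a string argument and returns a string.
Tool = Callable[[str], str]


def word_count(text: str) -> str:
    return str(len(text.split()))


def shout(text: str) -> str:
    return text.upper()


# Tool registry: adding a tool does not require touching the planner loop.
TOOLS: dict[str, Tool] = {"word_count": word_count, "shout": shout}


def plan(request: str) -> list[tuple[str, str]]:
    """Reasoning step: turn a request into (tool_name, argument) pairs.

    Rule-based placeholder that expects requests shaped like "tool: argument".
    """
    command, _, argument = request.partition(":")
    command = command.strip()
    if command not in TOOLS:
        return []  # no plan: the request is outside the agent's domain
    return [(command, argument.strip())]


def act(steps: list[tuple[str, str]]) -> list[str]:
    """Action step: execute each planned step with the matching tool."""
    return [TOOLS[name](argument) for name, argument in steps]


def run_agent(request: str) -> list[str]:
    return act(plan(request))
```

Because `plan` and `act` only share the list of steps, each can be tested on its own, and the planner can later be swapped for a more capable one without changing the executor.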

Data strategy and safety considerations

Data quality directly impacts agent performance. Prioritize clean, labeled, and diverse data sources, and implement data validation at the input boundary. Safety is not an afterthought; it should be baked into the agent's policy and fail-safe behaviors. Implement guardrails such as action limits, confidence thresholds, and observable logging so you can detect and correct drift. Use sandboxed environments for testing, and keep a strict separation between experimentation data and production data. Finally, design for transparency: maintain clear decision logs and provide explainable traces of why the agent chose a particular action.
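The guardrails named above (input validation, action limits, confidence thresholds, and logging) can be sketched in a few lines. The `Guardrails` class, its default values, and the required `task` field are assumptions chosen for illustration.

```python
import logging
from dataclasses import dataclass

logger = logging.getLogger("agent.guardrails")


@dataclass
class Guardrails:
    max_actions: int = 5         # action limit per run (assumed value)
    min_confidence: float = 0.7  # confidence threshold (assumed value)
    actions_taken: int = 0

    def validate_input(self, payload: dict) -> bool:
        """Input-boundary check: require a non-empty string 'task' field."""
        task = payload.get("task")
        return isinstance(task, str) and bool(task.strip())

    def permit(self, action: str, confidence: float) -> bool:
        """Decide whether a proposed action may run, and log the reason."""
        if self.actions_taken >= self.max_actions:
            logger.warning("blocked %s: action limit reached", action)
            return False
        if confidence < self.min_confidence:
            logger.warning(
                "blocked %s: confidence %.2f below threshold", action, confidence
            )
            return False
        self.actions_taken += 1
        logger.info("allowed %s (confidence %.2f)", action, confidence)
        return True
```

Every decision, allowed or blocked, produces a log line with its reason, which is the raw material for the decision traces described above.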

Practical examples you can prototype

Consider a few starter agent archetypes you can prototype in a weekend:

- Task automation agent: handles repetitive workflows with minimal human input.

- Research assistant agent: gathers information, summarizes findings, and suggests next steps.

- Customer support agent: triages inquiries and routes them to the right human or bot pathway.

- Code helper agent: suggests edits, checks for style, and points out potential bugs.

Each example demonstrates different patterns (planner, tool use, orchestration) and helps you learn how components interact. Start with a simple prompt and a single tool; then gradually add data sources and policies as you validate usefulness.
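As a starting point for the customer support archetype, the sketch below routes inquiries with a plain keyword table. This is a deliberately simple stand-in for whatever classifier or model you eventually use; the keywords and queue names are hypothetical.

```python
import re

# Hypothetical routing table: keyword -> destination queue.
ROUTES = {
    "refund": "billing_team",
    "invoice": "billing_team",
    "password": "self_service_bot",
    "crash": "engineering_oncall",
}
DEFAULT_ROUTE = "human_triage"


def triage(inquiry: str) -> str:
    """Return the queue for an inquiry; unknown topics go to a human."""
    # Lowercase and split into words so punctuation does not hide a keyword.
    words = set(re.findall(r"[a-z]+", inquiry.lower()))
    for keyword, queue in ROUTES.items():
        if keyword in words:
            return queue
    return DEFAULT_ROUTE
```

Falling back to a human queue when nothing matches keeps the first prototype safe: the agent only acts on cases it recognizes.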

Evaluation and experimentation workflows

A practical evaluation plan includes defining objective metrics (accuracy, latency, resource use) and setting up controlled experiments. Use synthetic or sandbox data to test edge cases and to stress-test guardrails. Implement A/B-style comparisons where feasible, tracking both objective metrics and user-centric outcomes such as satisfaction or perceived usefulness. Maintain a decision log that records what worked, what didn’t, and why. This documentation becomes essential as you scale and involve more teams in governance.
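A small harness is often enough to get an evaluation loop started. The sketch below measures accuracy and mean latency on synthetic cases and compares two variants side by side; the `evaluate` function, the two toy variants, and the test cases are all illustrative assumptions.

```python
import time
from dataclasses import dataclass
from typing import Callable

Agent = Callable[[str], str]


@dataclass
class EvalResult:
    accuracy: float
    mean_latency_s: float


def evaluate(agent: Agent, cases: list[tuple[str, str]]) -> EvalResult:
    """Run the agent on (input, expected) pairs; report accuracy and latency."""
    if not cases:
        raise ValueError("at least one test case is required")
    correct = 0
    total_time = 0.0
    for prompt, expected in cases:
        start = time.perf_counter()
        output = agent(prompt)
        total_time += time.perf_counter() - start
        correct += output == expected
    return EvalResult(correct / len(cases), total_time / len(cases))


# Synthetic cases, including edge cases (empty input, stray whitespace).
CASES = [("hello", "HELLO"), ("", ""), ("  hi ", "HI")]


def variant_a(text: str) -> str:
    return text.upper()


def variant_b(text: str) -> str:
    return text.strip().upper()


# A/B-style comparison on identical cases.
result_a = evaluate(variant_a, CASES)
result_b = evaluate(variant_b, CASES)
```

Here `variant_a` fails the whitespace case while `variant_b` passes all three, which is the kind of difference worth recording in the decision log along with the reason.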

Getting started: building a minimal viable agent

Begin with a minimal viable agent (MVA) that has a single clear objective and a bounded environment. Create a lightweight architecture: a reasoning component (planner), a core action executor, and a data access layer. Implement guardrails and basic logging, then run the agent against a sandbox scenario that mimics real tasks. Iterate quickly by adjusting objectives and tools based on observed results. The MVA mindset helps teams ship tangible value sooner while learning how to scale safely.
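A minimal viable agent along these lines might look like the following sketch, which wires a data access layer, a planner, and a guarded executor around one objective. The stock-reordering scenario, the class and function names, and the limits are invented for illustration.

```python
import logging

log = logging.getLogger("mva")


class SandboxStore:
    """Data access layer backed by in-memory sandbox data, not production."""

    def __init__(self, stock: dict[str, int]) -> None:
        self._stock = dict(stock)

    def low_items(self, threshold: int) -> list[str]:
        return sorted(item for item, qty in self._stock.items() if qty < threshold)


def plan_reorders(store: SandboxStore, threshold: int) -> list[str]:
    """Planner: decide which actions would meet the single objective."""
    return [f"reorder:{item}" for item in store.low_items(threshold)]


def execute(actions: list[str], max_actions: int = 3) -> list[str]:
    """Executor with a simple guardrail: never run more than max_actions."""
    done = []
    for action in actions[:max_actions]:
        log.info("executing %s", action)  # basic logging for later review
        done.append(action)  # a real executor would call a tool here
    if len(actions) > max_actions:
        log.warning("skipped %d actions over the limit", len(actions) - max_actions)
    return done


# Sandbox scenario: two of three items are below the reorder threshold.
store = SandboxStore({"pens": 2, "paper": 40, "ink": 0})
completed = execute(plan_reorders(store, threshold=5))
```

Call `logging.basicConfig(level=logging.INFO)` before running if you want to see the log lines. Swapping `SandboxStore` for a real data source later should not require changes to the planner or executor.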

Scaling, governance, and future directions

As you grow from MVA to more capable agents, invest in governance, observability, and modular design. Establish clear ownership for components, maintainable interfaces, and a centralized policy library to ensure consistency across agents. Plan for future directions such as multi-agent collaboration, advanced planning, and dynamic tool discovery. Focus on reproducibility and auditing so your agents remain trustworthy as their complexity increases. The Ai Agent Ops team suggests documenting decision boundaries early and revisiting them on a regular cadence to adapt to new requirements.
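A centralized policy library can start as a simple registry that every agent consults instead of hard-coding its own limits. The sketch below is one possible shape; the `Policy` fields, the registry functions, and the example policies are assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    name: str
    max_actions: int
    min_confidence: float
    owner: str  # team accountable for this policy


# Central registry: agents look policies up here instead of hard-coding limits.
POLICY_LIBRARY: dict[str, Policy] = {}


def register(policy: Policy) -> None:
    """Add a policy; refuse silent overwrites so changes stay auditable."""
    if policy.name in POLICY_LIBRARY:
        raise ValueError(f"policy {policy.name!r} already registered")
    POLICY_LIBRARY[policy.name] = policy


def policy_for(agent_name: str, default: str = "default") -> Policy:
    """Return the agent-specific policy, falling back to the shared default."""
    return POLICY_LIBRARY.get(agent_name, POLICY_LIBRARY[default])


register(Policy("default", max_actions=3, min_confidence=0.8, owner="platform"))
register(Policy("support_triage", max_actions=10, min_confidence=0.6, owner="support"))
```

Recording an `owner` on each policy ties the library to the component ownership described above: when a limit needs revisiting, it is clear who decides.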

Tools & Materials

- Laptop or workstation (with at least 16 GB RAM; modern CPU or GPU as available)

- Python runtime 3.11+ (create isolated environments with venv or Poetry)

- Code editor (VS Code or equivalent; enable linting and extensions)

- Git and container tooling (Git for versioning; Docker Desktop for containerized tests)

- Sandbox API access or test data (use a test API or mock data to prototype safely)

- Documentation and logging setup (structured logs and tracing for observability)

- Data generation tools (synthetic data generators to simulate tasks)

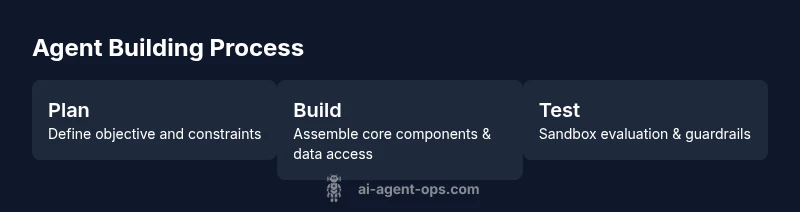

Steps

Estimated time: 6-9 hours

1. Define objective and constraints

Set the agent's explicit goal and boundary constraints. Capture success criteria and failure modes to guide design decisions.

Tip: Write a one-page objective and a guardrail list before coding.

2. Choose architecture pattern

Select a base pattern (planner, tool-using agent, or orchestration) that matches your task and team skills. Begin with a single core agent.

Tip: Start simple and avoid premature orchestration.

3. Assemble core components

Build a minimal reasoning loop, an action executor, and a basic data access layer. Ensure clean interfaces between components.

Tip: Define input/output contracts first to simplify integration.

4. Integrate tools and data sources

Wire in essential tools and data providers. Validate connectors with mock data and guardrails against misuse.

Tip: Keep tool connections modular for easy replacement.

5. Add safety and observability

Attach guardrails, logging, and monitoring. Ensure decisions are explainable and auditable.

Tip: Use explicit confidence thresholds and action limits.

6. Test in a sandbox environment

Run scenarios that mimic real tasks. Break down failures to understand root causes and iterate quickly.

Tip: Document all test cases and outcomes.

7. Prototype and iterate

Release a small, tangible MVP and collect feedback. Refine objectives, data sources, and tools based on results.

Tip: Prioritize learning from real usage over perfection.

8. Plan for deployment and governance

Define rollout steps, monitoring, and a governance plan for scaling. Prepare for future multi-agent collaboration.

Tip: Create a living playbook for agent development.
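The advice in the steps on assembling components and integrating tools (define input/output contracts first, keep connectors modular, validate with mock data) can be sketched as follows. The request and response types, the `Connector` protocol, and the mock data are hypothetical names chosen for this example.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class AgentRequest:
    task: str
    context: str = ""


@dataclass(frozen=True)
class AgentResponse:
    result: str
    confidence: float


class Connector(Protocol):
    """Contract every data source or tool connector is expected to satisfy."""

    def fetch(self, query: str) -> str: ...


class MockConnector:
    """Stand-in connector that serves canned data for sandbox tests."""

    def __init__(self, canned: dict[str, str]) -> None:
        self._canned = canned

    def fetch(self, query: str) -> str:
        return self._canned.get(query, "")


def handle(request: AgentRequest, connector: Connector) -> AgentResponse:
    """Answer from the connector; report zero confidence when nothing is found."""
    data = connector.fetch(request.task)
    return AgentResponse(result=data, confidence=1.0 if data else 0.0)
```

Because `handle` depends only on the `Connector` contract, a production connector can replace `MockConnector` later without changes to the agent logic.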

Questions & Answers

What are AI agents and how do they differ from simple bots?

An AI agent combines observation, reasoning, and action within a defined domain. Unlike basic bots, agents plan and adapt, using data sources and tools to achieve goals. They operate under guardrails and are designed for modularity and governance.

AI agents observe, reason, and act within a defined domain, using data and tools to reach goals. They’re more flexible and governance-ready than simple bots.

What is a minimal viable AI agent and how do I start?

A minimal viable agent has a single defined objective, a small toolset, and basic guardrails. Start by prototyping in a sandbox, verify that it completes the task, and gradually add data sources and tools as you validate success.

Begin with a simple, well-scoped agent in a sandbox, then expand as you confirm results.

What safety checks should I implement for AI agents?

Implement guardrails, confidence scoring, and audit logs. Enforce action limits and fail-safe behavior to prevent harmful or unintended outcomes. Regularly review logs to detect drift.

Add guardrails, confidence thresholds, and clear logs so you can spot and fix issues quickly.
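For the audit-log part of this answer, one lightweight option is to write each decision as a JSON line and compute simple statistics over the log during reviews. The record fields and the `blocked_rate` helper below are assumptions, shown only to make the idea concrete.

```python
import json
from datetime import datetime, timezone


def audit_record(
    agent: str, action: str, confidence: float, allowed: bool, reason: str
) -> str:
    """Serialize one decision as a JSON line suitable for an append-only log."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent": agent,
        "action": action,
        "confidence": confidence,
        "allowed": allowed,
        "reason": reason,
    }
    return json.dumps(record, sort_keys=True)


def blocked_rate(lines: list[str]) -> float:
    """Share of logged decisions that were blocked.

    A value that rises over time may be one signal of drift worth reviewing.
    """
    records = [json.loads(line) for line in lines]
    if not records:
        return 0.0
    return sum(not r["allowed"] for r in records) / len(records)
```

In practice the lines would be appended to a file or log pipeline; the review step then reads them back and tracks values such as the blocked rate from one period to the next.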

How long does it take to build a basic agent?

Time varies with scope, data access, and tooling. A focused MVP can be assembled in a few coding sessions, followed by iterative testing and governance setup.

A focused MVP can come together in a few sessions, with iteration and governance following.

Which tools or frameworks are recommended for prototyping?

Use a planner–executor pattern with a modular interface, and a lightweight framework to connect to data sources. Preference should be given to well-documented, community-supported toolkits and clear testing environments.

Choose a modular toolkit with good docs and test environments to prototype effectively.

How should I evaluate AI agent performance?

Define objective metrics, run controlled experiments, and compare outcomes. Track both automated metrics and user-centric impact to guide improvements over time.

Set clear metrics and run controlled tests to guide improvements.

Key Takeaways

- Define clear objectives and guardrails first

- Start with a minimal viable agent and iterate

- Prioritize observability and safety from day one

- Use modular, testable architectures for scalability

- Document decisions to support governance