AI Agent Frameworks Comparison

A data-driven, analytical comparison of AI agent frameworks to help developers, product teams, and leaders select the best platform for agentic automation and scalable workflows.

AI agent frameworks vary in orchestration, safety, and integration capabilities. This quick comparison highlights the core differences so you can choose the right tool for agentic automation. According to Ai Agent Ops, the best framework is the one that fits your data flows, latency requirements, governance needs, and your team’s skill set.

What an AI agent frameworks comparison is and why it matters

An AI agent frameworks comparison surveys the software foundations that enable autonomous agents to sense, decide, and act within a given domain. It isn't about a single product but about a family of approaches that vary in architecture, governance, and integration capabilities. For developers and leaders, understanding this landscape helps avoid vendor lock-in and accelerates automation initiatives. This article uses the comparison to help teams map their needs to concrete architectural decisions. According to Ai Agent Ops, the best practice is to start with your data topology, decision cadence, and safety requirements before evaluating any framework. This framing helps you move beyond marketing claims to actionable evaluation criteria.

Why the landscape matters for modern automation

The rise of agentic AI has shifted the focus from monolithic software to modular, composable agents. A thoughtful ai agent frameworks comparison reveals how different frameworks handle context, memory, and action. Key drivers include latency tolerance, data sovereignty, and the breadth of integrations. When teams perform a rigorous comparison, they uncover trade-offs between openness and governance, speed of iteration and reliability, as well as the depth of plugin ecosystems. Ai Agent Ops emphasizes that your choice will influence development velocity, risk posture, and long-term maintainability. This section outlines the dimensions that typically anchor a robust ai agent frameworks comparison.

Core dimensions to compare when evaluating frameworks

A structured ai agent frameworks comparison centers on five core dimensions: architecture, orchestration, memory and context, extensibility, and governance. Each dimension affects how agents perceive their environment, plan actions, and learn over time. Architecture covers whether a framework uses modular components or an all-in-one stack. Orchestration deals with how tasks are scheduled and how decisions flow from perception to action. Memory and context focus on retaining relevant information across conversations and sessions. Extensibility looks at plugin ecosystems and connectors to external tools. Governance encompasses access control, audit trails, and safety controls. This section provides a framework to rate each dimension for the options you’re evaluating, with practical signals to watch for during testing.
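One way to make the five-dimension rating concrete is a small weighted scoring sheet. The sketch below is only an illustration: the weights, the 1 to 5 rating scale, and the ratings for the two example frameworks are all placeholder assumptions, not measurements or recommendations.

```python
# Illustrative weights for the five dimensions; these values are assumptions,
# not recommendations. Adjust them to reflect your own priorities.
WEIGHTS = {
    "architecture": 0.20,
    "orchestration": 0.20,
    "memory_context": 0.20,
    "extensibility": 0.15,
    "governance": 0.25,
}


def weighted_score(ratings: dict[str, int], weights: dict[str, float] = WEIGHTS) -> float:
    """Combine 1-5 ratings per dimension into one weighted average."""
    missing = set(weights) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    for name in weights:
        if not 1 <= ratings[name] <= 5:
            raise ValueError(f"rating for {name} must be between 1 and 5")
    total_weight = sum(weights.values())
    return sum(ratings[name] * w for name, w in weights.items()) / total_weight


# Hypothetical ratings (placeholders, not results from any real evaluation).
candidates = {
    "Framework Alpha": {
        "architecture": 5, "orchestration": 4, "memory_context": 4,
        "extensibility": 5, "governance": 3,
    },
    "Framework Beta": {
        "architecture": 3, "orchestration": 4, "memory_context": 3,
        "extensibility": 3, "governance": 5,
    },
}

# Rank candidates from highest to lowest weighted score.
ranking = sorted(candidates, key=lambda name: weighted_score(candidates[name]), reverse=True)
for name in ranking:
    print(f"{name}: {weighted_score(candidates[name]):.2f}")
```

Changing the weights changes the ranking, which is the point: the sheet makes your priorities explicit instead of leaving them implicit in the discussion.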

Architecture patterns you’ll encounter in an AI agent frameworks comparison

Within an ai agent frameworks comparison, you’ll see several architectural archetypes. Some frameworks favor a microservices-like approach with clearly separated components for perception, reasoning, and action; others opt for a plug-in driven, single-agent core that loads external capabilities via adapters. Evaluators should map their domain requirements to these patterns: modularity supports experimentation and risk containment, while monolithic architectures may simplify onboarding and governance. A practical signal is how easily you can swap out a single module (e.g., planner or memory store) without rewriting large parts of the system. Ai Agent Ops notes that teams should prefer architectures with explicit interfaces and versioned contracts to minimize integration churn over time.
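The "swap a single module" signal can be tested with a small interface check. The sketch below assumes a hypothetical `Planner` interface and two toy planners; none of these names come from a real framework. It shows how an explicit interface lets the agent core stay unchanged when the planner is replaced.

```python
from typing import Protocol


class Planner(Protocol):
    """Explicit contract: any planner turns a goal into ordered steps."""

    def plan(self, goal: str) -> list[str]: ...


class KeywordPlanner:
    """Toy planner that always searches, then summarizes."""

    def plan(self, goal: str) -> list[str]:
        return [f"search:{goal}", f"summarize:{goal}"]


class SingleStepPlanner:
    """Toy planner that answers in one step."""

    def plan(self, goal: str) -> list[str]:
        return [f"answer:{goal}"]


class Agent:
    """Agent core that depends only on the Planner contract."""

    def __init__(self, planner: Planner) -> None:
        self.planner = planner

    def run(self, goal: str) -> list[str]:
        # A real agent would call tools here; this sketch just records steps.
        return [f"executed {step}" for step in self.planner.plan(goal)]


# Swapping the planner requires no change to Agent.
print(Agent(KeywordPlanner()).run("refund policy"))
print(Agent(SingleStepPlanner()).run("refund policy"))
```

If a candidate framework makes this kind of substitution hard, that is a hint its components are more tightly coupled than its documentation suggests.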

State and memory management: keeping context relevant

An effective AI agent frameworks comparison hinges on how each framework handles state. Some platforms maintain rich, local memory per agent and use event-sourced histories to reconstruct decisions. Others provide a lighter-weight context store with shorter-lived sessions, which can limit cross-session learning but simplify compliance. The right choice depends on your domain: real-time decision systems may demand fast, bounded memory, while complex, multi-turn tasks require longer context retention. From Ai Agent Ops’ perspective, the best options offer configurable memory policies, clear data lifecycle rules, and reliable rollback capabilities to recover from missteps without derailing the user experience.
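To illustrate what a configurable memory policy might look like, here is a minimal sketch of a bounded store with a size cap and a time-to-live. The class name `BoundedMemory` and its parameters are hypothetical; real frameworks expose their own memory APIs, so treat this only as a model of the trade-off between bounded and long-lived context.

```python
import time
from collections import OrderedDict
from typing import Any, Callable, Optional


class BoundedMemory:
    """Keeps at most max_items entries and expires them after ttl_seconds."""

    def __init__(self, max_items: int, ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._max_items = max_items
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so the policy can be tested
        self._items: "OrderedDict[str, tuple[float, Any]]" = OrderedDict()

    def remember(self, key: str, value: Any) -> None:
        # Re-inserting moves the key to the newest position.
        self._items.pop(key, None)
        self._items[key] = (self._clock(), value)
        # Evict the oldest entries once the size cap is exceeded.
        while len(self._items) > self._max_items:
            self._items.popitem(last=False)

    def recall(self, key: str) -> Optional[Any]:
        entry = self._items.get(key)
        if entry is None:
            return None
        stored_at, value = entry
        if self._clock() - stored_at > self._ttl:
            # Expired entries are dropped on read (a simple lifecycle rule).
            del self._items[key]
            return None
        return value


memory = BoundedMemory(max_items=2, ttl_seconds=60.0)
memory.remember("customer_tier", "gold")
print(memory.recall("customer_tier"))
```

A small `max_items` and short `ttl_seconds` approximate the session-scoped style; larger values approximate longer context retention, at the cost of more data to govern.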

Extensibility: plugins, connectors, and ecosystem health

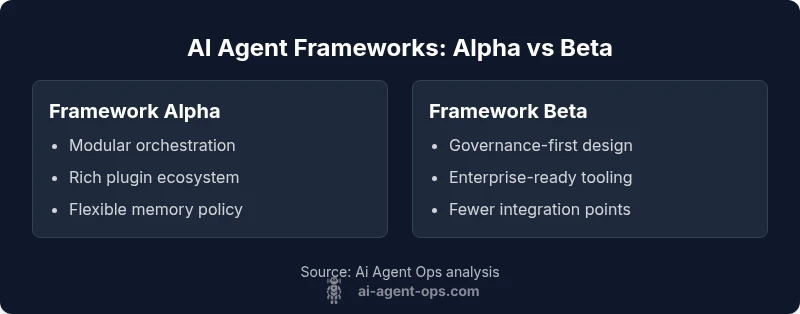

A strong ai agent frameworks comparison highlights extensibility. Look for plugin ecosystems, open connectors, and standardized plugin interfaces so you can add capabilities without re-architecting the core. Framework Alpha in our example emphasizes a thriving ecosystem of adapters for popular data stores, LLMs, and tooling platforms. Framework Beta prioritizes governance and security controls but offers fewer third-party integrations. Practical signals include documented SDKs, a clear plugin versioning strategy, and a marketplace with peer reviews. Ai Agent Ops cautions that a weak ecosystem can turn long-term maintenance into a headache as your automation needs evolve.
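A plugin versioning strategy is easier to evaluate with a concrete rule in mind. The sketch below assumes a semver-like convention (same major version, plugin minor no newer than the host) and a hypothetical `PluginRegistry`; it is not the API of any particular framework.

```python
from typing import Callable


class IncompatiblePluginError(Exception):
    """Raised when a plugin targets an API version the host cannot serve."""


class PluginRegistry:
    def __init__(self, host_api_version: tuple[int, int]) -> None:
        self.host_api_version = host_api_version
        self._plugins: dict[str, Callable[[str], str]] = {}

    def register(self, name: str, built_for: tuple[int, int],
                 handler: Callable[[str], str]) -> None:
        major, minor = built_for
        host_major, host_minor = self.host_api_version
        # Assumed rule: majors must match, and the plugin must not rely on
        # a minor version newer than what the host provides.
        if major != host_major or minor > host_minor:
            raise IncompatiblePluginError(
                f"{name} targets API {major}.{minor}, "
                f"host provides {host_major}.{host_minor}"
            )
        self._plugins[name] = handler

    def call(self, name: str, payload: str) -> str:
        return self._plugins[name](payload)


registry = PluginRegistry(host_api_version=(2, 3))
registry.register("shout", built_for=(2, 1), handler=lambda text: text.upper())
print(registry.call("shout", "ticket closed"))
```

When reviewing a real ecosystem, check whether the framework enforces a rule like this at load time or leaves compatibility to trial and error.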

Safety, governance, and ethical considerations in an AI agent frameworks comparison

Governance and safety are central to any ai agent frameworks comparison intended for production use. Look for robust access controls, immutable audit logs, and formal verification pathways for critical decision points. Safety features may include guardrails, containment strategies, and human-in-the-loop options for high-stakes tasks. The best frameworks also provide governance templates and compliance mappings to help organizations meet regulatory requirements. Ai Agent Ops emphasizes that teams should prioritize transparent decision paths and reproducible experiments to build trust with users and stakeholders.
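As a rough illustration of what a tamper-evident audit trail involves, the sketch below chains each log entry to the previous one with a SHA-256 hash. This is one common technique, shown under assumptions: the `AuditLog` class and its field names are hypothetical, and a hash chain only makes tampering detectable; it does not by itself make storage immutable.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash that precedes the first entry


def _digest(record: dict) -> str:
    """Stable SHA-256 over a record's JSON form (keys sorted)."""
    encoded = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


class AuditLog:
    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, actor: str, action: str, detail: str) -> None:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        record = {"actor": actor, "action": action,
                  "detail": detail, "prev_hash": prev_hash}
        # The hash covers the entry body plus the previous entry's hash.
        record["hash"] = _digest(record)
        self.entries.append(record)

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry fails."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append("agent-1", "tool_call", "lookup_order")
log.append("reviewer", "approve", "issue_refund")
print(log.verify())
```

During evaluation, ask each vendor or project how its audit log detects after-the-fact edits and where the verification step runs.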

Performance metrics and benchmarking in an AI agent frameworks comparison

Benchmarking performance requires consistent metrics across the evaluated frameworks. Focus on latency (per decision), throughput (decisions per second under load), memory usage, and reliability under simulated workloads. A thorough ai agent frameworks comparison includes synthetic benchmarks, real-world pilots, and long-running stress tests to reveal stability under drift. Ai Agent Ops suggests defining a baseline and then testing for degradation under concurrent tasks, external tool failures, and data variability. Document results meticulously to guide the final decision and future optimization.
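A minimal harness for the latency and throughput metrics could look like the sketch below. The `benchmark` function and the stand-in `decide` callable are assumptions for illustration; in practice you would wrap each candidate framework's decision call and feed it your own representative inputs.

```python
import math
import statistics
import time
from typing import Any, Callable, Sequence


def benchmark(decide: Callable[[Any], Any], inputs: Sequence[Any],
              warmup: int = 5) -> dict[str, float]:
    """Measure per-decision latency and overall throughput for one candidate."""
    if not inputs:
        raise ValueError("inputs must not be empty")
    # Warm-up calls are excluded so cold-start cost does not skew the numbers.
    for item in inputs[:warmup]:
        decide(item)

    latencies = []
    start = time.perf_counter()
    for item in inputs:
        t0 = time.perf_counter()
        decide(item)
        latencies.append(time.perf_counter() - t0)
    elapsed = time.perf_counter() - start

    latencies.sort()
    # Nearest-rank 95th percentile.
    p95_index = max(0, math.ceil(0.95 * len(latencies)) - 1)
    return {
        "count": float(len(latencies)),
        "p50_s": statistics.median(latencies),
        "p95_s": latencies[p95_index],
        "throughput_per_s": len(latencies) / elapsed if elapsed > 0 else float("inf"),
    }


def fake_decide(prompt: str) -> str:
    """Stand-in for a framework call; replace with the real decision function."""
    return prompt[::-1]


print(benchmark(fake_decide, [f"task {i}" for i in range(200)]))
```

Run the same inputs through every candidate, and repeat the run several times, so that differences reflect the frameworks rather than noise in the test machine.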

Open-source vs commercial options: trade-offs in an AI agent frameworks comparison

The choice between open-source and commercial offerings often dominates an AI agent frameworks comparison. Open-source options tend to offer flexibility, faster iteration, and no vendor lock-in, but may require in-house expertise for maintenance and security hardening. Commercial platforms usually provide enterprise-grade governance, support, and easier onboarding at scale, yet come with licensing costs and potential lock-in. In practice, many teams adopt a hybrid approach: an open-source core with commercial governance layers for risk-sensitive deployments. Ai Agent Ops notes that a transparent roadmap and community activity are reliable indicators of long-term viability for any framework.

Migration, maintenance, and lifecycle planning in an AI agent frameworks comparison

Long-term viability is a key axis in any ai agent frameworks comparison. Consider how updates impact compatibility, how migration paths are documented, and what maintenance typically entails. Favor frameworks with clear deprecation timelines, robust versioning, and community or vendor support for critical upgrades. A sound lifecycle plan includes data retention strategies, backward compatibility guarantees, and test suites that cover regression risk. Ai Agent Ops underscores the importance of aligning upgrade cycles with your product roadmap to avoid disruptive changes during peak development windows.

How to run a rigorous side-by-side evaluation (a practical framework)

A structured evaluation protocol for ai agent frameworks comparison begins with a shared evaluation plan. Define a representative workload, establish success criteria, and create a controlled test environment with repeatable scenarios. Run baseline tests to capture cold-start costs, then measure performance under steady state and peak load. Compare observability features, error handling, and failure modes across candidates. Ai Agent Ops recommends documenting caveats for each framework to ensure stakeholders understand the context of the results and any potential biases in the test setup.
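Success criteria are easier to apply consistently when they are written down as data before testing starts. The sketch below assumes a hypothetical `Criterion` record and made-up thresholds; it simply checks measured values against the agreed limits and treats anything unmeasured as a failure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    metric: str
    threshold: float
    higher_is_better: bool


def evaluate(measured: dict[str, float], criteria: list[Criterion]) -> dict[str, bool]:
    """Return pass/fail per criterion; a missing metric counts as a fail."""
    results = {}
    for criterion in criteria:
        value = measured.get(criterion.metric)
        if value is None:
            results[criterion.metric] = False
        elif criterion.higher_is_better:
            results[criterion.metric] = value >= criterion.threshold
        else:
            results[criterion.metric] = value <= criterion.threshold
    return results


# Hypothetical thresholds agreed in the shared evaluation plan.
criteria = [
    Criterion("p95_latency_s", threshold=2.0, higher_is_better=False),
    Criterion("task_success_rate", threshold=0.90, higher_is_better=True),
]

# Placeholder measurements for one candidate (not real results).
measured = {"p95_latency_s": 1.4, "task_success_rate": 0.85}
print(evaluate(measured, criteria))
```

Fixing the criteria up front also makes the caveats easier to document, because any threshold changed after seeing results is visible in the plan's history.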

Industry use cases and decision hints for an AI agent frameworks comparison

Real-world decision hints emerge when you map use cases to framework strengths. For customer support automation, a framework with strong memory, context handling, and robust integration ecosystems may excel. For compliance-heavy domains, prioritize governance, auditing, and secure plugin management. In our analysis, industries like finance and healthcare often favor enterprise-grade governance and security features, while startups may prioritize rapid experimentation and modularity. An effective ai agent frameworks comparison translates business objectives into concrete technical criteria that drive the final choice.

Pre-purchase checklist for AI agent frameworks (final guardrails)

Before selecting, assemble a practical checklist: assess data connectivity, memory policies, plugin ecosystem health, governance capabilities, and vendor or community support. Validate integration with your existing toolchain, including data lakes, message buses, and identity providers. Ensure the framework supports predictable rollout plans, rollback strategies, and measurable success criteria. Ai Agent Ops recommends creating a lightweight pilot that mirrors real workloads to surface gaps early and avoid costly rework later.

Comparison

| Feature | Framework Alpha | Framework Beta |
|---|---|---|
| Orchestration model | Modular, event-driven with clear interfaces | Centralized, monolithic flow with fewer moving parts |
| State management | Rich local memory with event sourcing | Simplified, session-scoped memory |
| Extensibility | Thriving plugin ecosystem and adapters | Fewer integrations, stronger built-in controls |
| Pricing model | Tiered, usage-based (varies by tier) | Enterprise-focused with governance emphasis (varies by tier) |
| Best for | Teams needing flexibility and rapid iteration | Organizations prioritizing governance and risk controls |

Positives

- Supports rapid experimentation and extensibility

- Clear separation of concerns reduces maintenance risk

- Strong plugin ecosystems enable rapid capability growth

- Open ecosystems promote community collaboration

- Suitable for teams balancing agility with governance

What's Bad

- Open ecosystems can lead to integration fatigue if not managed

- Governance-focused platforms may slow down initial experimentation

- Migration between frameworks can be non-trivial

Framework Alpha is the preferred choice for flexibility; Framework Beta excels in governance and enterprise readiness

If your priority is customization and speed to market, Framework Alpha wins. If you need strong governance, risk management, and enterprise-scale support, Framework Beta is the safer long-term bet. The Ai Agent Ops team’s assessment supports a dual-path approach depending on organizational needs.

Questions & Answers

What is an AI agent framework, and why should I care?

An AI agent framework provides the structure, tooling, and runtime components for building autonomous agents. It defines perception, reasoning, action, and interaction with external systems. A solid framework accelerates development, ensures safety, and streamlines maintenance. In any ai agent frameworks comparison, the goal is to identify which framework best fits your domain and team skills.

An AI agent framework helps you build autonomous agents faster by providing the core tools and rules they need to operate.

Open-source vs. commercial: which path is better for startups?

Open-source options offer flexibility and community-driven innovation, which is great for experimentation. Commercial platforms provide governance, support, and enterprise-ready features that reduce risk as you scale. In a balanced ai agent frameworks comparison, startups often start with open-source for learning, then layer in commercial governance for production.

Open-source is great for learning, but for production at scale, commercial options can save you headaches.

How should I benchmark AI agent frameworks?

Define a representative workload, establish success criteria, and run repeatable tests to compare latency, throughput, and reliability. Include safety checks, plugin load tests, and failure scenarios. Document results clearly to support a data-driven decision in your ai agent frameworks comparison.

Set up a repeatable test plan and compare speed, reliability, and safety across frameworks.

What governance features matter most?

Key governance features include access controls, audit trails, reproducible experiments, and safe default configurations. A strong governance layer helps meet regulatory requirements and reduces risk during scaling. In most AI agent framework comparisons, governance quality determines long-term suitability for enterprises.

Look for audits, access control, and documented compliance mappings.

Can I integrate these frameworks with my existing tools?

Most frameworks offer connectors and adapters to common data stores, messaging systems, and AI services. Successful ai agent frameworks comparisons hinge on the depth and quality of these integrations, not just the number of available plug-ins. Start by mapping critical toolchain gaps and testing end-to-end workflows.

Check if your current tools have ready-made connectors or if you’ll need custom adapters.

What about safety and fail-safes in these frameworks?

Safety mechanisms include guardrails, human-in-the-loop options, and containment strategies. A trustworthy ai agent frameworks comparison will weigh how easily you can pause, override, or terminate an agent when unexpected behavior occurs. Prioritize frameworks with transparent decision paths and robust logging.

Ensure you have guardrails and a clear human-in-the-loop process.
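To make "pause, override, or terminate" testable, the sketch below models an agent loop that checks a stop switch before every step and asks for approval before high-risk ones. The names `AgentController`, `run_steps`, and the example steps are hypothetical and only illustrate the control points to look for in a real framework.

```python
from typing import Callable


class AgentController:
    """Operator-facing switch that the agent loop checks before every step."""

    def __init__(self) -> None:
        self.stopped = False

    def stop(self) -> None:
        self.stopped = True


def run_steps(steps: list[tuple[str, bool]], controller: AgentController,
              approve: Callable[[str], bool]) -> list[str]:
    """Run (name, high_risk) steps, honoring the stop switch and approvals."""
    log = []
    for name, high_risk in steps:
        if controller.stopped:
            # Terminate: nothing after this point is executed.
            log.append(f"halted before {name}")
            break
        if high_risk and not approve(name):
            # Human-in-the-loop: a rejected step is skipped, not retried.
            log.append(f"skipped {name} (not approved)")
            continue
        log.append(f"ran {name}")
    return log


steps = [("lookup_order", False), ("issue_refund", True), ("send_email", False)]

# A reviewer who rejects every high-risk step.
print(run_steps(steps, AgentController(), approve=lambda name: False))
```

When comparing frameworks, check whether equivalent hooks exist at the step level, and whether a stop request takes effect before the next external action rather than after the whole task completes.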

Key Takeaways

- Align data flows with framework capabilities

- Prioritize memory and context management

- Evaluate plugin ecosystems early

- Plan governance and security from day one

- Run structured pilots before committing to a platform