AI Agent Instructions Template: A Practical How-To

A practical guide to creating an AI agent instructions template that clarifies goals, context, constraints, and evaluation criteria for reliable agentic AI workflows. Learn structure, examples, testing, and governance.

By the end of this guide, you will be able to draft an AI agent instructions template tailored to your agent type. It covers goals, context, constraints, prompts, and evaluation criteria, plus safety and governance. Use this blueprint to accelerate development, keep teams consistent, and reduce misalignment in agentic workflows.

What is an AI agent instructions template?

According to Ai Agent Ops, an AI agent instructions template is a formal specification that translates a task into actionable signals for an autonomous system. It combines goals, context, constraints, inputs, outputs, and evaluation metrics to align agent behavior with business objectives. A good template is modular, reusable, and auditable, so teams can version-control prompts just like code. When designed well, the template reduces ambiguity, speeds up onboarding of new engineers, and provides a solid baseline for governance and safety checks. The result is a repeatable framework that can be adapted to different agent types, from single-task bots to multi-agent orchestration platforms.

Core elements of a template

A robust AI agent instructions template typically includes: goals and success criteria; context and background; constraints and guardrails; inputs, signals, and available tools; outputs and formatting; evaluation plan and feedback loops; safety, compliance, and privacy considerations; versioning and change history. Each element should map to a concrete part of the prompt. When you keep these components separate, teams can swap in new tools or adjust policies without rewriting the entire prompt. The template should be modular, with sections that can be activated or deactivated depending on the task.
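One way to picture the modular structure is a small data model in which each element is a section that can be switched on or off. This is a minimal sketch, not a standard format: the `Section` and `AgentTemplate` classes, their fields, and the sample text are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Section:
    """One modular block of the template (hypothetical structure)."""
    title: str
    body: str
    enabled: bool = True


@dataclass
class AgentTemplate:
    """Hypothetical container for the template elements."""
    name: str
    version: str
    sections: list[Section] = field(default_factory=list)

    def render(self) -> str:
        """Join only the enabled sections into a single prompt string."""
        parts = [f"# {self.name} (v{self.version})"]
        for section in self.sections:
            if section.enabled:
                parts.append(f"## {section.title}\n{section.body}")
        return "\n\n".join(parts)


template = AgentTemplate(
    name="report-data-check",
    version="1.0.0",
    sections=[
        Section("Goals", "Verify data integrity for a report."),
        Section("Context", "Data comes from Source A."),
        Section("Constraints", "Do not modify source data."),
        # Deactivated for this task; it stays in the template for reuse.
        Section("Safety", "Never include personal data in the output.", enabled=False),
    ],
)

prompt = template.render()
```

Because each section is a separate object, a policy change touches one `Section` and leaves the rest of the prompt untouched.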

Designing for different agent roles

Templates should accommodate diverse agent portfolios: task-oriented agents that perform precise actions, conversational agents that manage dialogue, and orchestration agents that coordinate multiple subsystems. For each role, define success criteria, trigger signals, and fallback behaviors. Multi-agent systems require clear handoffs and auditable decision logs so that chain-of-thought reasoning can be reviewed. Ai Agent Ops emphasizes modularity so teams can reuse core blocks while swapping in domain-specific constraints. This approach reduces cognitive load and supports rapid scaling as new agent types are introduced.
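The idea of reusing core blocks while swapping in role-specific ones could be sketched as a dictionary merge. The block names, the role keys, and every piece of text below are illustrative assumptions rather than a prescribed vocabulary.

```python
# Shared defaults that every role inherits (illustrative text).
CORE_BLOCKS = {
    "success_criteria": "Task completed and logged.",
    "trigger_signals": "A new request arrives.",
    "fallback": "Escalate to a human reviewer.",
}

# Per-role overrides; only the blocks that differ are listed.
ROLE_OVERRIDES = {
    "task": {"success_criteria": "Action executed exactly once with a confirmation."},
    "conversational": {"fallback": "Ask the user a clarifying question."},
    "orchestration": {
        "trigger_signals": "A sub-agent reports completion or failure.",
        "handoff": "Record which agent receives the task and why.",
    },
}


def build_role_template(role: str) -> dict[str, str]:
    """Merge shared core blocks with the overrides for one role."""
    if role not in ROLE_OVERRIDES:
        raise ValueError(f"Unknown role: {role}")
    # Later keys win, so role-specific text replaces the shared default.
    return {**CORE_BLOCKS, **ROLE_OVERRIDES[role]}


orchestrator = build_role_template("orchestration")
```

The merge returns a new dictionary, so the shared defaults are never mutated when a role customizes them.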

Structuring prompts and constraints for reliability

Reliability comes from precise prompts and bounded constraints. Start with a high-level objective, then add guardrails like hard stop conditions and safe-fallback prompts if the agent encounters ambiguity. Specify input formats (CSV, JSON, or natural language), required tools, and expected outputs with concrete schemas. Include a scoring rubric for evaluation and a feedback loop to refine prompts over time. Always separate policy from task logic so you can adjust governance without rewriting core prompts. Finally, document any assumptions to avoid drift as team members change.
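As a rough illustration of a concrete output schema combined with a hard stop and a safe fallback, the sketch below validates an agent's reply and gives up after a fixed number of attempts. `OUTPUT_SCHEMA`, `POLICY`, `run_with_guardrails`, and `fake_agent` are hypothetical names; a real deployment would call an actual model where `fake_agent` stands in.

```python
import json

# Hypothetical output schema: field name -> required Python type.
OUTPUT_SCHEMA = {"status": str, "anomalies": list}

# Policy is kept apart from task logic so governance can change it independently.
POLICY = {"max_attempts": 2, "fallback_message": "Unable to complete safely; escalating."}


def validate_output(raw: str) -> dict:
    """Parse the agent's raw reply and check it against OUTPUT_SCHEMA."""
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise ValueError("Output must be a JSON object")
    for key, expected_type in OUTPUT_SCHEMA.items():
        if not isinstance(data.get(key), expected_type):
            raise ValueError(f"Field '{key}' missing or not {expected_type.__name__}")
    return data


def run_with_guardrails(call_agent, task: str) -> dict:
    """Retry until the output validates, then hard-stop with the fallback."""
    for _ in range(POLICY["max_attempts"]):
        try:
            return validate_output(call_agent(task))
        except ValueError:  # json.JSONDecodeError is a subclass of ValueError
            continue
    return {"status": "fallback", "anomalies": [], "message": POLICY["fallback_message"]}


def fake_agent(task: str) -> str:
    """Stand-in for a real model call; always returns malformed output."""
    return "not json"


result = run_with_guardrails(fake_agent, "Check the report for duplicates")
```

Here the attempt limit lives in `POLICY`, so tightening it does not require touching the validation or task code.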

Practical examples and templates you can reuse

To illustrate, consider a short-form template for a data-check task:

- Goal: Verify data integrity for a report.

- Context: Data comes from Source A; ensure no duplicates.

- Constraints: Do not modify source data; flag anomalies.

- Output: JSON with fields {status, anomalies}.

- Steps: 1) Ingest data, 2) Run duplicate check, 3) Compile anomalies.

For more complex workflows, use a long-form template with sections for goals, context, prompts, guardrails, and evaluation criteria. Reuse modules across teams by tagging blocks with version numbers and use cases.
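The data-check task in the short-form template could be carried out by a helper like the one below. It is only a sketch: the `check_duplicates` function, the `id` key, the sample rows, and the shape of each anomaly record are assumptions; the template itself only fixes the top-level fields `status` and `anomalies`.

```python
import json
from collections import Counter


def check_duplicates(rows: list[dict], key: str) -> dict:
    """Flag values of `key` that appear more than once, without modifying `rows`."""
    counts = Counter(row[key] for row in rows)
    anomalies = [
        {"type": "duplicate", "key": key, "value": value, "count": count}
        for value, count in counts.items()
        if count > 1
    ]
    return {"status": "fail" if anomalies else "pass", "anomalies": anomalies}


# Hypothetical sample standing in for data ingested from Source A.
rows = [
    {"id": 1, "amount": 10},
    {"id": 2, "amount": 25},
    {"id": 1, "amount": 10},
]

report = check_duplicates(rows, key="id")
print(json.dumps(report))
```

The function only reads the rows and returns a new report, which matches the constraint that source data must not be modified.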

Testing, validation, and iteration

Testing a template starts with unit tests that verify each module produces the expected signal. Conduct integration tests where the agent executes a real scenario and logs decisions for audit. Use synthetic edge cases to reveal failure modes, then adjust prompts and constraints accordingly. Establish a regression suite so updates don’t break existing workflows. Finally, maintain an evidence log showing how each version improved outcomes, safety, or efficiency.
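A unit test and a small regression suite for one template module might look like the following, using Python's standard `unittest` module. The `render_constraints` function and the golden cases are hypothetical; in practice the expected strings would come from a previously approved template version.

```python
import unittest


def render_constraints(constraints: list[str]) -> str:
    """Hypothetical module under test: renders the constraints block."""
    return "Constraints:\n" + "\n".join(f"- {c}" for c in constraints)


# Golden outputs, assumed to be captured from an approved template version.
REGRESSION_CASES = [
    (["Do not modify source data"], "Constraints:\n- Do not modify source data"),
    ([], "Constraints:\n"),  # synthetic edge case: no constraints supplied
]


class ConstraintBlockTests(unittest.TestCase):
    def test_each_constraint_is_listed(self):
        rendered = render_constraints(["Flag anomalies", "Do not modify source data"])
        self.assertIn("- Flag anomalies", rendered)
        self.assertIn("- Do not modify source data", rendered)

    def test_regression_cases(self):
        for constraints, expected in REGRESSION_CASES:
            with self.subTest(constraints=constraints):
                self.assertEqual(render_constraints(constraints), expected)
```

Saved in a test file, this can be run with `python -m unittest`; each template update then has to pass the same golden cases before it is merged.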

Collaboration, governance, and versioning

Teams should maintain a central repository of templates with clear ownership and version history. Use semantic versioning for blocks (major, minor, patch) and require code-like reviews for updates. Document rationale for changes, mapping each change to business outcomes and risk considerations. Governance should include privacy and security checks, ensuring that templates comply with policy and legal requirements. This approach fosters trust and accelerates cross-functional adoption.
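Semantic versioning for template blocks can be sketched with a small helper. The mapping of change types to examples in the comments, the `bump_version` function, and the changelog fields are assumptions chosen for illustration, not an established policy.

```python
def bump_version(version: str, change: str) -> str:
    """Return the next semantic version for a template block."""
    major, minor, patch = (int(part) for part in version.split("."))
    if change == "major":  # assumed: breaking change, e.g. a renamed output field
        return f"{major + 1}.0.0"
    if change == "minor":  # assumed: compatible addition, e.g. a new optional section
        return f"{major}.{minor + 1}.0"
    if change == "patch":  # assumed: wording fix with no behavioral change
        return f"{major}.{minor}.{patch + 1}"
    raise ValueError(f"Unknown change type: {change}")


# Hypothetical changelog entry linking a change to its rationale and reviewer.
changelog_entry = {
    "block": "constraints",
    "from": "1.2.3",
    "to": bump_version("1.2.3", "minor"),
    "rationale": "Added a guardrail for ambiguous inputs.",
    "reviewed_by": "template-owners",
}
```

Storing the rationale next to the version number keeps the reason for each change visible during reviews.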

Quick-start checklist to implement today

- Define a minimal viable template with goals, context, and a single constraint.

- Create a reusable block you can attach to multiple agent types.

- Set up a versioned repository and a review process.

- Draft evaluation criteria and a simple test scenario.

- Include guardrails and a plan for safety reviews.

Tools & Materials

- Editor or IDE (VS Code, JetBrains, or a web-based editor; enable markdown preview)

- Version control (Git or another VCS to track template changes)

- AI platform access (access to your agent deployment environment or sandbox)

- Template library (a repo or wiki for modular blocks and examples)

- Risk and governance checklist (privacy, safety, and compliance evaluation form)

- Documentation tooling (tools for documentation generation and changelogs)

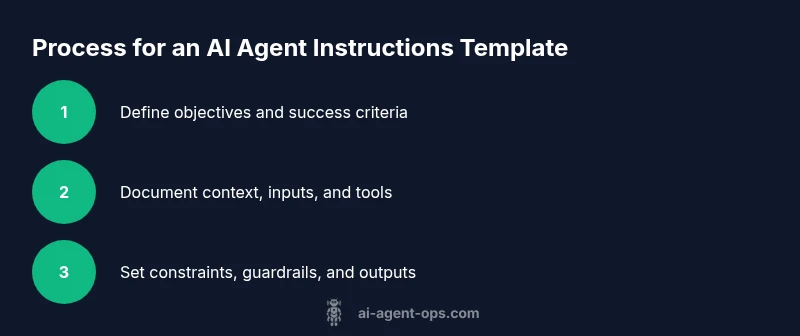

Steps

Estimated total time: 60-90 minutes

Step 1: Define the objective

Clearly state the task the agent should accomplish and how success will be measured. Include at least one concrete outcome and a success metric.

Tip: Keep objectives task-centric and observable to ease evaluation.

Step 2: Identify context and background

Provide essential context, data sources, and assumptions the agent must honor. Clarify what is known and what remains uncertain.

Tip: Document sources to support traceability and audits.

Step 3: Specify inputs and tools

List all inputs the agent can access and the tools or APIs it may call. Include allowed formats and any required authentication notes.

Tip: Limit tools to prevent scope creep and reduce risk.

Step 4: Set constraints and guardrails

Define hard constraints, safety guards, and fallback prompts if the agent hits ambiguity or a policy violation.

Tip: Use explicit STOP conditions and safe prompts for edge cases.

Step 5: Draft outputs and formatting

Specify the exact schema, data types, and formatting for outputs. Include examples to illustrate the expected shape.

Tip: Prefer structured outputs to simplify downstream consumption.

Step 6: Define evaluation criteria

Create a rubric or scoring method to judge success. Include both qualitative and quantitative measures.

Tip: Link evaluation directly to business objectives.

Step 7: Add governance and safety notes

Document privacy, security, and compliance requirements. Include policies for data handling and ethical considerations.

Tip: Make governance a required section, not an afterthought.

Step 8: Version and review

Publish the template with a version number and assign ownership. Schedule regular reviews and updates.

Tip: Use pull requests and changelogs to track rationale.

Step 9: Test in a sandbox

Run the template against representative scenarios to surface gaps, then iterate.

Tip: Capture logs to inform future improvements.
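Two of the steps above lend themselves to a quick sketch: limiting tool access and scoring a run against a rubric. The tool names, rubric criteria, point weights, and the logged run below are all illustrative assumptions, not real results.

```python
# Hypothetical allowlist: the only tools this agent may call.
ALLOWED_TOOLS = {"read_csv", "run_duplicate_check"}

# Hypothetical rubric: criterion -> points (the points sum to 100).
RUBRIC = {"correct_status": 50, "valid_schema": 30, "no_disallowed_tools": 20}


def disallowed_calls(tool_calls: list[str]) -> list[str]:
    """Return the tool calls that fall outside the allowlist."""
    return [name for name in tool_calls if name not in ALLOWED_TOOLS]


def score_run(checks: dict[str, bool]) -> int:
    """Sum the points for every passed criterion; a missing one counts as failed."""
    return sum(points for name, points in RUBRIC.items() if checks.get(name, False))


# One logged sandbox run (illustrative values).
tool_calls = ["read_csv", "send_email"]
checks = {
    "correct_status": True,
    "valid_schema": True,
    "no_disallowed_tools": not disallowed_calls(tool_calls),
}
score = score_run(checks)
```

In this sample run the agent called a tool outside the allowlist, so it loses the points for that criterion even though its output was otherwise correct.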

Questions & Answers

What is an AI agent instructions template?

An AI agent instructions template is a structured blueprint that defines goals, context, constraints, inputs, outputs, and evaluation criteria for AI agents. It enables consistent behavior across tasks and teams.

How is it different from a standard prompt?

A template includes modular blocks, governance notes, and an evaluation plan, whereas a typical prompt focuses on a single instruction. Templates support reuse and auditing across scenarios.

What are common pitfalls to avoid?

Avoid vague objectives, unclear context, and unbounded tool access. Without guardrails, agents may drift or produce unsafe outputs.

How do you test templates effectively?

Use a sandbox, run representative tasks, collect logs, and compare outcomes against your evaluation rubric. Iterate on prompts and constraints based on results.

Can templates scale to multiple agents?

Yes. Use a core framework of shared blocks with agent-specific overrides. Versioning and governance help maintain consistency as you scale.

Key Takeaways

- Define clear, measurable objectives.

- Use modular blocks for reuse.

- Test early in a sandbox and iterate.

- Version templates with changelogs.

- Governance and safety must be documented.