OpenAI Agent SDK vs LangChain: A Practical Comparison for AI Agents

A rigorous, objective comparison of the OpenAI Agent SDK and LangChain to guide developers and leaders in choosing the right framework for AI agent workflows, tooling, and deployment.

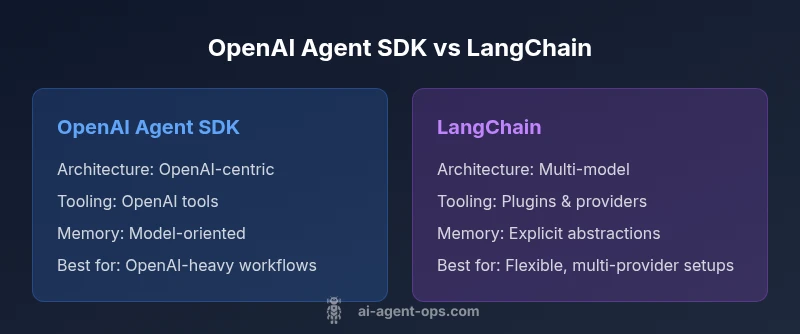

OpenAI Agent SDK and LangChain are two leading options for building AI agents. The OpenAI Agent SDK provides tight integration with OpenAI tooling and suits teams deeply invested in OpenAI models. LangChain offers a broader orchestration framework with multi-model tools and plugins. The choice between them hinges on your model strategy and workflow.

Scope and Definitions

Comparing the OpenAI Agent SDK with LangChain starts with clarifying what each option offers. The OpenAI Agent SDK is a toolkit from OpenAI that streamlines the creation, testing, and deployment of autonomous agents that rely on OpenAI models and official tooling. LangChain, by contrast, is a broad framework that unifies prompts, memory, tools, and agents across multiple model providers. According to Ai Agent Ops, the distinction is less about capability and more about ecosystem alignment and orchestration philosophy. If your goals include tight integration with OpenAI's proprietary tooling, the OpenAI Agent SDK often provides lower friction and stronger guarantees for model behavior. If your priority is multi-model orchestration, rich plugin ecosystems, and experimentation, LangChain tends to offer more flexibility.

Core Philosophies: Focus vs. Flexibility

The OpenAI Agent SDK is designed around a philosophy of deep, dependable integration with OpenAI's own models and tooling. This tends to reduce the surface area for integration problems when you're committed to OpenAI's ecosystem. LangChain follows a philosophy of abstraction and interoperability, emphasizing a plug-and-play approach to models, tools, and memory strategies. For teams that want to mix providers and components (e.g., combining an OpenAI model with a different vendor's vector database or tool), LangChain's design often reduces friction and accelerates experimentation.

Ecosystem Maturity and Community Signals

When comparing the OpenAI Agent SDK with LangChain, consider ecosystem maturity. LangChain has cultivated a broad community with plugins, tutorials, and third-party tools across multiple model providers. OpenAI's SDKs, meanwhile, benefit from official updates and strong integration with OpenAI services such as tools, function calling, and policy controls. Ai Agent Ops notes that a thriving ecosystem can translate into faster troubleshooting, richer examples, and more robust tooling, but it may also introduce more variability in implementation details depending on the plugin version and provider.

Architecture and Modularity

OpenAI Agent SDK tends to emphasize a modular path tightly bound to OpenAI’s model capabilities and official tooling. LangChain emphasizes modularity at a broader level, enabling users to compose agents from a diverse set of components: prompts, memories, tools, validators, and orchestrators. This modularity supports complex agent behaviors but can demand more upfront architectural decisions about data flow, memory management, and error handling. For teams starting with a clear OpenAI-centric workflow, the SDK can offer faster ramp-up; for those pursuing heterogeneous toolchains, LangChain shines in adaptability.

Tooling and Tool Integration

Tool invocation, function calling, and tool management are central to AI agents. The OpenAI Agent SDK delivers a streamlined path to leverage OpenAI tools and official resources with minimal boilerplate. LangChain provides a broader toolkit for integrating external tools, APIs, databases, and custom plugins across model providers. In practice, this means LangChain can accommodate tools outside the OpenAI universe, while the OpenAI SDK might deliver tighter guarantees and easier debugging for OpenAI-specific workflows. Teams should map required tools to the framework that minimizes integration gaps and maintenance overhead.
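To make the idea of tool invocation concrete, here is a minimal, framework-neutral sketch of a tool registry that dispatches a model-requested call by name. It uses neither the OpenAI Agent SDK nor LangChain. The `Tool` and `ToolRegistry` classes, the `add` function, and the call shape (a name plus a JSON-encoded argument string) are assumptions made for illustration only.

```python
import json
from dataclasses import dataclass
from typing import Any, Callable, Dict


@dataclass(frozen=True)
class Tool:
    """A framework-neutral tool description (hypothetical, for illustration)."""
    name: str
    description: str
    func: Callable[..., Any]


class ToolRegistry:
    """Maps tool names to callables and dispatches model-requested calls."""

    def __init__(self) -> None:
        self._tools: Dict[str, Tool] = {}

    def register(self, tool: Tool) -> None:
        if tool.name in self._tools:
            raise ValueError(f"duplicate tool name: {tool.name}")
        self._tools[tool.name] = tool

    def dispatch(self, call: Dict[str, Any]) -> Any:
        """Run a call shaped like {"name": ..., "arguments": "<JSON string>"}."""
        tool = self._tools.get(call["name"])
        if tool is None:
            raise KeyError(f"unknown tool: {call['name']}")
        kwargs = json.loads(call["arguments"])
        return tool.func(**kwargs)


def add(a: int, b: int) -> int:
    """Example tool: add two integers."""
    return a + b


registry = ToolRegistry()
registry.register(Tool("add", "Add two integers.", add))

# Simulate the structured call a model might emit.
result = registry.dispatch({"name": "add", "arguments": '{"a": 2, "b": 3}'})
```

Whichever framework you choose, it is worth listing the tools you need in this name-plus-arguments form first. That makes it easier to see which ones map cleanly onto OpenAI-centric tool calls and which require a broader integration layer.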

Memory, State, and Continuity

Memory management is a critical area for long-running agents. LangChain offers explicit memory abstractions designed to preserve context across turns, sessions, and even disparate runs. The OpenAI SDK supports consistent interactions with OpenAI models and can pair with external memory services, but the approach is often more prescriptive and model-centric. The decision depends on whether your use case benefits from a plug-and-play memory layer (LangChain) or a more integrated memory strategy aligned with OpenAI tooling (OpenAI SDK).
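As a rough illustration of what a memory layer does, the sketch below keeps a sliding window of recent conversation turns. It is not LangChain's memory API or anything from the OpenAI SDK. `WindowMemory` and its methods are hypothetical names, and the role/content message shape is an assumption.

```python
from collections import deque
from typing import Deque, Dict, List


class WindowMemory:
    """Keeps only the most recent `max_turns` user/assistant exchanges."""

    def __init__(self, max_turns: int = 3) -> None:
        # Each turn contributes two messages (user + assistant), so the
        # deque silently drops the oldest turn once the window is full.
        self._messages: Deque[Dict[str, str]] = deque(maxlen=max_turns * 2)

    def add_turn(self, user: str, assistant: str) -> None:
        self._messages.append({"role": "user", "content": user})
        self._messages.append({"role": "assistant", "content": assistant})

    def as_messages(self) -> List[Dict[str, str]]:
        """Return the retained history, oldest first."""
        return list(self._messages)


memory = WindowMemory(max_turns=2)
memory.add_turn("Hi", "Hello!")
memory.add_turn("What is 2+2?", "4")
memory.add_turn("And 3+3?", "6")  # pushes the first turn out of the window

history = memory.as_messages()
```

A windowed buffer like this only covers short-term context. Persistence across sessions or runs would need an external store, which is where the two frameworks' approaches diverge.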

Security, Compliance, and Governance

Operational security and governance matter for production agents. OpenAI’s SDK aligns with OpenAI’s security controls and model safety features, which can simplify risk management for teams already aligned with OpenAI’s policies. LangChain’s broader ecosystem invites diverse tooling, which can complicate governance but offers more flexible risk models if you implement your own controls and reviews. Ai Agent Ops recommends auditing tool provenance and enforcing least privilege when combining third-party plugins with either framework.
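One way to apply least privilege, regardless of framework, is to give each agent an explicit allowlist of tools. The sketch below assumes a hypothetical `ScopedToolbox` class and two made-up ticketing tools. It shows the pattern only and is not a built-in feature of either framework.

```python
from typing import Any, Callable, Dict, FrozenSet


class ScopedToolbox:
    """Exposes only an explicit allowlist of tools to a given agent."""

    def __init__(
        self, tools: Dict[str, Callable[..., Any]], allowed: FrozenSet[str]
    ) -> None:
        unknown = set(allowed) - set(tools)
        if unknown:
            raise ValueError(f"allowlist names unregistered tools: {sorted(unknown)}")
        # Copy only the permitted tools; everything else is unreachable.
        self._tools = {name: tools[name] for name in allowed}

    def call(self, name: str, **kwargs: Any) -> Any:
        if name not in self._tools:
            raise PermissionError(f"tool not permitted for this agent: {name}")
        return self._tools[name](**kwargs)


def read_ticket(ticket_id: int) -> str:
    return f"ticket {ticket_id}: printer offline"


def delete_ticket(ticket_id: int) -> str:
    return f"ticket {ticket_id} deleted"


ALL_TOOLS = {"read_ticket": read_ticket, "delete_ticket": delete_ticket}

# A read-only support agent gets no destructive tools.
support_tools = ScopedToolbox(ALL_TOOLS, allowed=frozenset({"read_ticket"}))
summary = support_tools.call("read_ticket", ticket_id=7)
```

Here a call to `support_tools.call("delete_ticket", ticket_id=7)` raises `PermissionError`, so a prompt-injected request for a destructive tool fails before any code runs.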

Performance and Reliability Considerations

Latency, throughput, and reliability depend on an agent’s architecture and toolchain. OpenAI SDK workflows typically benefit from streamlined calls to OpenAI models and tools, potentially reducing overhead for OpenAI-heavy deployments. LangChain’s orchestration layer can introduce additional software boundaries, but it also enables parallel tool calls, caching strategies, and batch processing across multiple providers. The best choice hinges on your expected traffic patterns, tooling diversity, and the acceptable trade-offs between simplicity and flexibility.
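The parallel tool calls and caching mentioned above can be sketched with the standard library alone. In this example, `fetch_price` is a stand-in for a slow external tool and `CachedTool` is a hypothetical wrapper. Neither comes from the OpenAI Agent SDK or LangChain, and the returned prices are fake values derived from the symbol length.

```python
import asyncio
from typing import Awaitable, Callable, Dict, List

call_count = 0  # counts how often the slow path actually runs


async def fetch_price(symbol: str) -> float:
    """Stand-in for a slow external tool call (hypothetical)."""
    global call_count
    call_count += 1
    await asyncio.sleep(0.05)  # simulated network latency
    return float(len(symbol)) * 10.0


class CachedTool:
    """Caches results per argument so repeated calls skip the slow path.

    Note: two concurrent calls with the same argument could both miss the
    cache; a production version would need to de-duplicate in-flight calls.
    """

    def __init__(self, func: Callable[[str], Awaitable[float]]) -> None:
        self._func = func
        self._cache: Dict[str, float] = {}

    async def __call__(self, arg: str) -> float:
        if arg not in self._cache:
            self._cache[arg] = await self._func(arg)
        return self._cache[arg]


async def main() -> List[float]:
    tool = CachedTool(fetch_price)
    # Independent calls run concurrently instead of one after another.
    first = await asyncio.gather(tool("AAPL"), tool("MSFT"), tool("GO"))
    # A repeat request is served from the cache.
    second = await tool("AAPL")
    return [*first, second]


prices = asyncio.run(main())
```

The three distinct lookups overlap in time, and the fourth request never reaches `fetch_price`. Whether savings like this outweigh the cost of an extra orchestration layer depends on your traffic patterns.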

Use-Case Fit: When to Choose Each

For teams that rely heavily on OpenAI models, want the fastest onboarding, and need strong guarantees around model behavior and policy enforcement, the OpenAI Agent SDK is a natural fit. If your strategy includes multiple models, extensive external tooling, or future migration toward a multi-provider approach, LangChain often provides greater long-term flexibility. Ai Agent Ops emphasizes tailoring your decision to your organization's risk tolerance, talent pool, and operational priorities. A hybrid approach can also be effective: start with the SDK for core flows, then layer LangChain on top for exploratory tools.

Migration Path and Interoperability

Migration considerations matter when choosing between the OpenAI Agent SDK and LangChain. If you start with the SDK and later need broader tool integration, LangChain can be introduced as an orchestration layer on top of existing OpenAI-based components. Conversely, teams beginning with LangChain can gradually align with OpenAI's official tooling if OpenAI-specific guarantees become essential. Planned abstraction layers, clear API contracts, and thorough test suites ease future transitions and reduce vendor lock-in risk.
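An abstraction layer with a clear contract might look like the sketch below. `AgentBackend`, `SdkBackend`, and `LangChainBackend` are hypothetical names, and the two backends are placeholders that return tagged strings instead of calling either framework. Real adapters would wrap the actual library calls behind the same `run` method.

```python
from typing import List, Protocol


class AgentBackend(Protocol):
    """The contract application code depends on (hypothetical)."""

    def run(self, prompt: str) -> str: ...


class SdkBackend:
    """Placeholder where OpenAI Agent SDK calls would live."""

    def run(self, prompt: str) -> str:
        return f"[sdk] {prompt}"


class LangChainBackend:
    """Placeholder where LangChain calls would live."""

    def run(self, prompt: str) -> str:
        return f"[langchain] {prompt}"


def answer(backend: AgentBackend, question: str) -> str:
    """Application code: knows the contract, not the framework."""
    return backend.run(question.strip())


def check_contract(backend: AgentBackend) -> bool:
    """A tiny contract test every backend must pass."""
    reply = backend.run("ping")
    return isinstance(reply, str) and len(reply) > 0


backends: List[AgentBackend] = [SdkBackend(), LangChainBackend()]
results = [answer(b, "  status?  ") for b in backends]
contract_ok = all(check_contract(b) for b in backends)
```

Because `answer` depends only on the `AgentBackend` contract, swapping frameworks means writing one new adapter and rerunning the contract test, not rewriting application code.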

Practical Guidelines and Best Practices

- Start with a clear problem statement: model scope, tools required, and success criteria.

- Map tool types to the most compatible framework: OpenAI-centric tool calls or multi-model toolchains.

- Invest in observability: logging, tracing, and structured metrics across prompts, calls, and tool invocations.

- Prioritize security: enforce access controls, secret management, and prompt hygiene regardless of framework.

- Plan for iteration: choose a framework that accelerates experimentation while maintaining governance discipline.

- Document migration paths early to ease future transitions and reduce operational risk.
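The observability guideline above can be approximated with a small decorator that records a structured event for every tool invocation. This is a minimal sketch. `traced`, `EVENTS`, and `lookup_order` are hypothetical names, and the in-memory list stands in for whatever logging or tracing backend you actually use.

```python
import functools
import json
import time
from typing import Any, Callable, Dict, List

EVENTS: List[Dict[str, Any]] = []  # in-memory sink; swap for a real logger


def traced(kind: str) -> Callable[[Callable[..., Any]], Callable[..., Any]]:
    """Record name, duration, and outcome for each wrapped call."""

    def decorator(func: Callable[..., Any]) -> Callable[..., Any]:
        @functools.wraps(func)
        def wrapper(*args: Any, **kwargs: Any) -> Any:
            start = time.perf_counter()
            status = "ok"
            try:
                return func(*args, **kwargs)
            except Exception:
                status = "error"
                raise
            finally:
                # Runs on both success and failure, so no call goes unrecorded.
                EVENTS.append({
                    "kind": kind,
                    "name": func.__name__,
                    "status": status,
                    "duration_ms": round((time.perf_counter() - start) * 1000, 3),
                })
        return wrapper

    return decorator


@traced("tool")
def lookup_order(order_id: int) -> str:
    if order_id < 0:
        raise ValueError("order_id must be non-negative")
    return f"order {order_id}: shipped"


lookup_order(42)
try:
    lookup_order(-1)
except ValueError:
    pass

# One JSON line per event is easy to ship to most log pipelines.
log_lines = [json.dumps(event) for event in EVENTS]
```

Recording events at the tool boundary keeps the instrumentation independent of the agent framework, which also helps when comparing behavior before and after a migration.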

Comparison

| Feature | OpenAI Agent SDK | LangChain |
|---|---|---|
| Architecture & Modularity | Tightly integrated with OpenAI tooling and model behavior | Broader modularity: prompts, memory, tools, and multi-model orchestration |
| Tool Integration | Strong OpenAI tool integration and function calling | Extensive plugin and multi-provider tool ecosystem |
| Memory & State | Model-centric memory pathways aligned with OpenAI tooling | Explicit memory abstractions for sessions, turns, and pipelines |
| Performance & Latency | Potentially lower overhead within OpenAI-centric workflows | Flexibility to optimize across providers and caches |
| Security & Governance | Aligned with OpenAI security controls and policy guarantees | Broader ecosystem requires explicit governance of plugins |
| Ecosystem & Community | Official OpenAI guidance and tooling updates | Vibrant multi-provider community with plugins and tutorials |
| Best For | Teams committed to OpenAI tooling and enterprise OpenAI features | Teams needing model diversity, plugins, and rapid experimentation |
| Pricing & Support Considerations | Aligned with OpenAI pricing and enterprise support avenues | Varies with provider plugins; broader support options via community |

Positives

- Clear alignment with OpenAI tooling for enterprise workflows

- Faster onboarding if OpenAI-centric deployment is core

- LangChain enables broad multi-model experimentation and plugins

- Strong community resources and templates for both options

- Flexibility to evolve architecture as needs change

Negatives

- SDK may be too prescriptive for non-OpenAI models

- LangChain adds architectural complexity and potential integration overhead

- Vendor lock-in risk with SDK if OpenAI tooling dominates

- Plugin quality and security vary in LangChain ecosystem

Choose based on ecosystem alignment and long-term strategy

If your pipeline revolves around OpenAI tooling and policy controls, OpenAI Agent SDK is a natural fit. If you anticipate multi-model deployments and broad plugin use, LangChain offers superior flexibility. Ai Agent Ops recommends starting with the framework that aligns with your current model strategy and then expanding as needs evolve.

Questions & Answers

What is the primary difference between the OpenAI Agent SDK and LangChain?

The OpenAI Agent SDK is built around OpenAI tooling and model behavior, delivering tight integration for OpenAI-based workflows. LangChain is a more general framework designed for multi-model orchestration, plugins, and broader tool integration. This fundamental distinction shapes implementation choices and long-term flexibility.

OpenAI Agent SDK focuses on OpenAI tooling, while LangChain emphasizes multi-model orchestration and plugins.

Which should I choose for production?

If your production environment relies heavily on OpenAI models with strict policy controls, the SDK offers streamlined deployment and support. If you need model diversity and rapid prototyping with various tools, LangChain provides more flexibility. Consider starting with the SDK and layering LangChain for broader tool integration if needed.

Choose SDK for OpenAI-heavy production; choose LangChain for flexibility and multi-model setups.

Can I use LangChain with the OpenAI Agent SDK?

Yes, you can architect LangChain workflows that leverage OpenAI models and tools. However, compatibility considerations and integration boundaries should be tested early in a staging environment. Plan for governance and maintenance when combining the two.

You can, but test integration and governance carefully.

What about memory and state management?

LangChain offers explicit memory abstractions to preserve context across sessions, turns, and pipelines. The OpenAI SDK supports memory in a model-centric way and often requires external services for long-term persistence. Choose based on how central persistent context is to your agent's behavior.

LangChain has strong memory abstractions; the SDK leans on model-centric state.

Are there security concerns I should consider?

Both approaches require robust secret management, prompt hygiene, and access controls. SDK use aligns with OpenAI security features, while LangChain's plugins demand careful validation and supply-chain checks. Implement baseline governance regardless of choice.

Yes. Secure secret handling, vetted plugins, and strong governance are essential.

Is there a recommended migration path between them?

A staged approach works: start with OpenAI SDK for core flows, then layer LangChain for broader integrations. If moving later, define API contracts and tests to minimize disruption. Planning early reduces migration risk.

Start with SDK, then layer LangChain if you need broader integrations.

Key Takeaways

- Assess model strategy before selecting a framework

- Prioritize tool and memory needs over surface-level features when comparing frameworks

- Plan for governance and security from day one

- Leverage hybrid approaches to balance speed and flexibility

- Invest in observability to simplify future migrations