AI agent pros and cons: a balanced comparison for teams

A comprehensive, data-informed look at the advantages and tradeoffs of AI agents. Learn where AI agents excel, where they struggle, and how to govern them for smarter automation in development and business contexts.

The pros and cons of AI agents hinge on governance, data maturity, and organizational readiness. In brief, AI agents can increase throughput, speed up decision-making, and scale automation, but they introduce complexity, risk, and governance needs that must be managed. The right choice depends on your domain, data quality, and guardrails. With careful planning, teams can realize substantial value while controlling risk.

What AI Agents Are and Why They Matter

AI agents are autonomous software systems that perceive, reason, and act to accomplish tasks with minimal human intervention. They can chain tools, learn from data, and adapt to changing contexts, making them well suited for complex workflows that involve multiple steps, data sources, and decision points. For developers and product teams, AI agents promise to accelerate product development, improve operational efficiency, and enable new automation paradigms. At a high level, the pros and cons of AI agents revolve around capabilities, governance, and the balance between autonomy and control. According to Ai Agent Ops, the value of AI agents grows when teams have clear objectives, reliable data, and guardrails that prevent drift from business goals. This article takes an objective, data-informed approach to comparing options, identifying where AI agents shine, and explaining the tradeoffs you should plan for.

The Core Benefits: Why Teams Invest in AI Agents

The most compelling benefits of AI agents include throughput scaling, faster cycle times, and consistency across tasks. When designed with proper context and safety checks, AI agents can handle repetitive decision points, triage issues, and route tasks with minimal human input. For many teams, this translates into reduced human workload, the ability to reallocate engineering talent to higher-value work, and the potential for 24/7 operations in customer support, data processing, and incident response. Beyond productivity, AI agents can improve data-driven decision making by synthesizing inputs from disparate sources and surfacing actionable insights. Yet, realizing these benefits requires careful data governance, monitoring, and alignment with business objectives. Ai Agent Ops analysis shows that teams commonly see bigger gains when they pair agents with explicit success criteria, continuous feedback loops, and transparent escalation paths.

The Key Drawbacks: Where Things Get Tricky

Despite strong upside, AI agents bring notable challenges. The most significant are complexity and governance overhead: building reliable agents requires integration with data pipelines, toolchains, and security controls. Misalignment between agent behavior and business goals can lead to inefficiencies or inappropriate actions. Data privacy and security concerns arise when agents access sensitive information or operate across multiple environments. There is also the risk of brittle behavior if training data changes or if the system encounters out-of-distribution tasks. Cost considerations—not just upfront but ongoing maintenance, monitoring, and tooling—must be weighed against expected value. Ai Agent Ops emphasizes that the economic case for AI agents hinges on disciplined scoping, measurable pilots, and an explicit plan for governance and risk management.

When to Use AI Agents: Context and Best Practices

Use AI agents when tasks are repetitive and broadly rule-based but still require some adaptability, or when they span multiple tools and data sources. They shine in environments with well-defined success criteria, stable data feeds, and a need for rapid experimentation. Start with a narrow pilot that maps clear inputs, outputs, and escalation rules. Build guardrails that enforce constraints (policy checks, rate limits, privacy controls) and establish monitoring dashboards that alert teams to drift or failures. As maturity grows, expand scope gradually, keeping a transparent trail of decisions and outcomes so stakeholders can assess value and risk. Ai Agent Ops advises teams to document decision boundaries, instrument agent behavior, and maintain a clear rollback plan.
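The guardrails listed above (policy checks, rate limits, escalation rules) can be expressed as a thin check that runs before every tool call. The sketch below is a minimal, hypothetical illustration: `GuardrailPolicy`, `Guardrail`, the tool names, and the keyword-based escalation rule are assumptions for illustration, not part of any specific agent framework.

```python
import time
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical policy object: tool names, limits, and keywords are illustrative.
@dataclass
class GuardrailPolicy:
    allowed_tools: set[str]
    max_calls_per_minute: int
    escalation_keywords: set[str] = field(default_factory=set)  # assumed lowercase

@dataclass
class Decision:
    allowed: bool   # may the agent proceed with this call?
    escalate: bool  # should a human review it?
    reason: str

class Guardrail:
    """Runs a policy check, a rate limit, and an escalation rule before each tool call."""

    def __init__(self, policy: GuardrailPolicy,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.policy = policy
        self.clock = clock  # injectable so the limiter can be tested deterministically
        self._call_times: list[float] = []

    def check(self, tool: str, payload: str) -> Decision:
        # Policy check: only explicitly allowed tools may run.
        if tool not in self.policy.allowed_tools:
            return Decision(False, True, f"tool {tool!r} is not allowed")
        # Rate limit: count previously allowed calls in a sliding 60-second window.
        now = self.clock()
        self._call_times = [t for t in self._call_times if now - t < 60]
        if len(self._call_times) >= self.policy.max_calls_per_minute:
            return Decision(False, False, "rate limit exceeded")
        # Escalation rule: hand sensitive requests to a human instead of acting.
        text = payload.lower()
        if any(word in text for word in self.policy.escalation_keywords):
            return Decision(False, True, "escalation keyword matched")
        self._call_times.append(now)
        return Decision(True, False, "ok")
```

For example, a fresh `Guardrail(GuardrailPolicy({"search_tickets"}, max_calls_per_minute=30, escalation_keywords={"refund"}))` allows `check("search_tickets", "find open bugs")` but flags a payload mentioning a refund for human review. Keyword matching is deliberately simple here; a real policy check would likely need richer rules.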

Evaluation Framework: Cost, Value, and ROI Considerations

Assessment should balance potential efficiency gains with total cost of ownership, including data preparation, tooling, and governance. Define quantitative metrics (throughput, cycle time, error rate) and qualitative outcomes (customer satisfaction, safety, trust). Compare agent-based workflows to baseline automation and to human-only processes. Conduct staged pilots that incrementally increase autonomy while monitoring risks. The goal is to demonstrate concrete improvements in speed, accuracy, or scalability without compromising security or governance. Ai Agent Ops highlights the importance of a clear success criterion for each use case and a path to scale that preserves accountability and explainability.
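To make the quantitative metrics concrete, here is a hedged sketch of how throughput, cycle time, and error rate might be computed from task records and compared against a baseline process. The `TaskRecord` shape and the `summarize` and `compare` helpers are hypothetical; adapt them to whatever your logging actually captures.

```python
from dataclasses import dataclass
from statistics import median

# Hypothetical record shape; real pipelines will log more fields.
@dataclass
class TaskRecord:
    duration_s: float  # wall-clock time from intake to completion
    succeeded: bool    # did the task meet its success criterion?

def summarize(records: list[TaskRecord], window_hours: float) -> dict[str, float]:
    """Throughput (tasks/hour), median cycle time (seconds), and error rate."""
    if not records:
        raise ValueError("no records to summarize")
    failures = sum(1 for r in records if not r.succeeded)
    return {
        "throughput_per_hour": len(records) / window_hours,
        "median_cycle_time_s": median(r.duration_s for r in records),
        "error_rate": failures / len(records),
    }

def compare(agent: dict[str, float], baseline: dict[str, float]) -> dict[str, float]:
    """Relative change vs. baseline; metrics with a zero or missing baseline are skipped."""
    return {k: (agent[k] - baseline[k]) / baseline[k] for k in agent if baseline.get(k)}
```

Note the sign convention: for throughput a positive change is an improvement, while for cycle time and error rate a negative change is.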

Architecture, Governance, and Operational Excellence

Successful AI agents require a solid architecture: modular pipelines, clear interfaces, robust logging, and versioned policies. Governance should cover data access, privacy, bias detection, and escalation procedures. Operational excellence rests on observability—metrics, traces, and alarms that reveal why an agent acted in a certain way. Establish a governance committee, standardized playbooks for incident response, and routine audits of agent performance and data lineage. Integrate with existing security controls, ensure role-based access, and implement privacy-preserving techniques as needed. Ai Agent Ops recommends treating AI agents like any critical system: design for reliability, resilience, and auditable decisions.
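Auditable decisions depend on logging what the agent did, under which policy version, and why. A minimal sketch, assuming a JSON Lines audit log; the field names and the `audit_record` and `append_audit` helpers are illustrative, not a standard schema.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Any

def audit_record(agent_id: str, policy_version: str, action: str,
                 inputs: dict[str, Any], outcome: str, rationale: str) -> str:
    """Serialize one agent decision as a single JSON line for an append-only log."""
    # Canonical JSON (sorted keys) so identical inputs always hash the same way.
    canonical = json.dumps(inputs, sort_keys=True).encode("utf-8")
    record = {
        "ts": datetime.now(timezone.utc).isoformat(),
        "agent_id": agent_id,
        "policy_version": policy_version,  # which versioned policy was in force
        "action": action,
        "inputs_sha256": hashlib.sha256(canonical).hexdigest(),  # lineage without raw data
        "outcome": outcome,
        "rationale": rationale,
    }
    return json.dumps(record, sort_keys=True)

def append_audit(path: str, line: str) -> None:
    """Append one record; each line is an independent JSON document."""
    with open(path, "a", encoding="utf-8") as f:
        f.write(line + "\n")
```

Hashing the inputs rather than storing them keeps the log useful for lineage checks without duplicating sensitive data; whether that tradeoff fits depends on your retention and privacy requirements.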

Industry Use Cases and Scenarios

Across industries, AI agents are applied to customer support routing, workflow orchestration, data extraction, and decision-support tasks. In software development, agents can triage build failures, suggest code changes, or automate repetitive QA steps. In operations, agents can monitor for anomalies, trigger remediation workflows, and coordinate across teams. In business analytics, agents can collect data from multiple sources, perform synthesis, and present ready-to-use insights. While use cases vary, the core pattern remains: identify a multi-step task, design agent roles with guardrails, and measure outcomes against defined objectives. Ai Agent Ops has observed that the most successful deployments share a common framework: a narrow initial scope, end-to-end ownership, and a plan for governance from day one.

Common Pitfalls and How to Avoid Them

A few recurring pitfalls threaten AI agent programs: scope creep, poor data quality, and under-investment in monitoring. To avoid these, maintain a strict problem definition, lock down data sources, and implement continuous feedback loops from users. Another risk area is hidden costs: tool licenses, compute usage, and maintenance can grow if not managed. Establish budgets, track usage, and periodically re-evaluate the value proposition. Finally, avoid reliance on a single agent for critical decisions; incorporate human-in-the-loop escalation when needed and ensure a transparent audit trail for important actions. Ai Agent Ops recommends an iterative, guarded approach to minimize risk while learning what works in your environment.
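Hidden costs are easier to catch when usage is tracked against an explicit budget. The sketch below assumes costs are already attributed per call (for example, compute or license charges) and uses an illustrative 80% warning threshold; `UsageBudget` is a hypothetical helper.

```python
from dataclasses import dataclass, field

@dataclass
class UsageBudget:
    """Hypothetical monthly budget tracker for agent-related spend."""
    monthly_limit_usd: float
    warn_fraction: float = 0.8  # illustrative threshold for an early warning
    spent_usd: float = 0.0
    by_category: dict[str, float] = field(default_factory=dict)

    def record(self, category: str, cost_usd: float) -> str:
        """Add one charge and return 'ok', 'warn', or 'over_budget'."""
        if cost_usd < 0:
            raise ValueError("cost must be non-negative")
        self.spent_usd += cost_usd
        self.by_category[category] = self.by_category.get(category, 0.0) + cost_usd
        if self.spent_usd >= self.monthly_limit_usd:
            return "over_budget"
        if self.spent_usd >= self.warn_fraction * self.monthly_limit_usd:
            return "warn"
        return "ok"
```

Per-category totals make it easier to see which part of the stack drives spend when you periodically re-evaluate the value proposition.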

Getting Started: A Practical Roadmap for Teams

Start with a concrete pilot aligned to a single, well-scoped process. Define inputs, expected outputs, and success criteria. Gather data, configure the agent’s tool connections, and implement guardrails (privacy, security, and escalation). Run the pilot with limited scope, monitor results, and collect feedback from users. Use lessons learned to expand scope gradually, updating governance documents and performance dashboards as you scale. Document the rollout, create a repeatable template for other teams, and keep leadership informed with transparent metrics. Ai Agent Ops emphasizes that a disciplined, incremental approach yields sustainable gains.
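Expanding scope gradually can be made explicit with staged autonomy levels that only advance when pilot results justify it. This is a sketch under stated assumptions: the three stages, the 200-task minimum, and the 2% and 5% error thresholds are illustrative placeholders, not recommended values, and `Autonomy` and `next_stage` are hypothetical names.

```python
from enum import IntEnum

class Autonomy(IntEnum):
    SUGGEST_ONLY = 0       # agent proposes, a human executes
    ACT_WITH_APPROVAL = 1  # agent executes after human approval
    AUTONOMOUS = 2         # agent executes; humans audit samples

def next_stage(current: Autonomy, tasks: int, error_rate: float,
               min_tasks: int = 200, promote_below: float = 0.02,
               demote_above: float = 0.05) -> Autonomy:
    """Move at most one stage per review, based on observed pilot results."""
    # Demote first: poor results roll autonomy back regardless of volume.
    if error_rate > demote_above and current > Autonomy.SUGGEST_ONLY:
        return Autonomy(current - 1)
    # Promote only with enough evidence and a low error rate.
    if tasks >= min_tasks and error_rate < promote_below and current < Autonomy.AUTONOMOUS:
        return Autonomy(current + 1)
    return current
```

Moving one stage at a time, and demoting on poor results, keeps a rollback path built into the rollout.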

Comparison

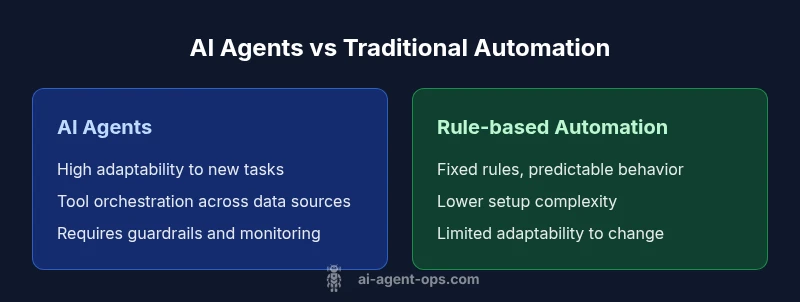

| Feature | AI agents | Rule-based automation |
|---|---|---|
| Setup & maintenance effort | Medium to high | Low to medium |
| Adaptability to new tasks | High with data context | Low; fixed rules only |
| Decision quality and autonomy | High with good data; requires monitoring | Predictable; depends on rule completeness |
| Cost considerations | Higher upfront and ongoing costs | Lower upfront; predictability improves budgeting |
| Governance and risk | Requires guardrails and ongoing governance | Simpler governance but limited flexibility |
| Best for | Complex, evolving processes needing learning | Stable, routine tasks with clear rules |

Positives

- Scales across tasks with minimal human input

- Improves speed and throughput

- Consistency and reduced human error

- 24/7 operations possible in supported domains

- Facilitates data-driven decision making

Negatives

- Requires careful setup and ongoing governance

- Risk of misalignment with business goals

- Data privacy and security considerations

- Higher total cost of ownership if not managed well

AI agents offer strong value when governed properly and scoped tightly

They excel at scale and speed but require guardrails, governance, and careful data handling. A phased, measurable rollout helps realize benefits while limiting risk.

Questions & Answers

What is the main difference between AI agents and traditional automation?

AI agents differ from traditional automation in their ability to perceive, decide, and act across varying tasks with minimal direct input. They can handle less structured problems and adapt to new contexts, whereas traditional automation relies on fixed rules. The result is greater flexibility alongside new governance and safety requirements.

AI agents adapt to changing tasks, while traditional automation follows fixed rules.

What are typical costs and time to implement?

Costs and timelines vary widely with scope, data readiness, and tool choices. Start with a narrow pilot to estimate data needs, integration effort, and governance overhead before expanding. Expect ongoing maintenance and governance activities beyond initial deployment.

Costs vary; begin with a small pilot to gauge effort and governance needs.

How do you measure success of AI agents?

Success is measured by objective outcomes such as throughput gains, cycle-time reductions, accuracy improvements, and user satisfaction. Combine quantitative metrics with governance indicators like drift, reliability, and escalation rates to ensure sustainable value.

Track throughput, speed, accuracy, and user satisfaction while watching for drift.
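Drift and escalation rates can be watched with a simple rolling-window comparison against a baseline. A minimal sketch, assuming each completed task is reduced to a single flag (error or escalation) and that a baseline rate was measured during the pilot; `DriftMonitor` and its defaults are hypothetical.

```python
from collections import deque

class DriftMonitor:
    """Alert when a rolling rate (errors or escalations) rises above its baseline."""

    def __init__(self, baseline_rate: float, window: int = 100,
                 tolerance: float = 0.05) -> None:
        self.baseline_rate = baseline_rate
        self.tolerance = tolerance
        self.events = deque(maxlen=window)  # oldest outcomes fall off automatically

    def observe(self, flagged: bool) -> bool:
        """Record one outcome; return True if an alert should fire."""
        self.events.append(flagged)
        if len(self.events) < self.events.maxlen:
            return False  # wait for a full window before judging
        rate = sum(self.events) / len(self.events)
        return rate > self.baseline_rate + self.tolerance
```

Waiting for a full window avoids alerting on the first few noisy observations; the window size trades detection speed for stability.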

What governance practices are recommended?

Establish policy controls, access governance, data lineage, and escalation procedures. Create an owner for each agent, define decision boundaries, and implement ongoing monitoring with alerting for anomalies or policy violations.

Set clear ownership, boundaries, and monitoring for each agent.

Are there data privacy concerns with AI agents?

Yes. Agents may access sensitive data across systems, so apply minimization, encryption, access control, and privacy-preserving techniques. Regular audits and compliant data handling practices are essential.

Protect data with privacy controls and audits.
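Data minimization can start with an allowlist of fields plus masking of obvious identifiers before anything reaches the agent. The sketch below is illustrative only: `minimize` and the field names are assumptions, and the regular expression catches common email formats but is not a complete PII detector.

```python
import re
from typing import Any

# Heuristic pattern for common email formats; not a complete PII detector.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")

def minimize(record: dict[str, Any], allowed_fields: set[str]) -> dict[str, Any]:
    """Keep only allowlisted fields and mask email addresses in string values."""
    out: dict[str, Any] = {}
    for key, value in record.items():
        if key not in allowed_fields:
            continue  # drop anything the agent does not need
        if isinstance(value, str):
            value = EMAIL_RE.sub("[REDACTED_EMAIL]", value)
        out[key] = value
    return out
```

Dropping fields by default (allowlist rather than blocklist) means a newly added sensitive column is excluded until someone deliberately permits it.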

When should you avoid AI agents?

Avoid deploying AI agents for tasks requiring deep, nuanced human judgment, highly sensitive decisions, or when data quality is poor and governance is weak. In such cases, a more controlled, human-in-the-loop approach is safer.

If judgment, sensitivity, or data quality is uncertain, pause automation.

Key Takeaways

- Anchor pilot projects to clear success metrics

- Guardrails and governance are non-negotiable

- Measure both quantitative and qualitative outcomes

- Prepare for data needs and security considerations

- Adopt an incremental, repeatable rollout framework