Agentic AI: Single vs Multi-Agent Systems – A Practical Guide

A rigorous, data-driven comparison of single-agent versus multi-agent systems in agentic AI, with practical guidance for developers and leaders.

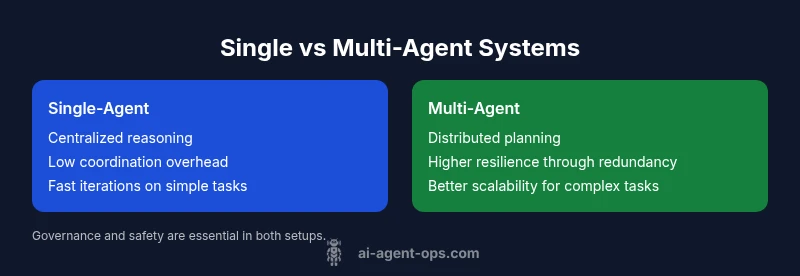

In the field of agentic AI, the choice between a single-agent system and a multi-agent system often hinges on task complexity and governance overhead. A single agent provides simplicity and fast iteration for well-bounded problems, while multi-agent configurations enable distributed planning, parallel exploration, and higher resilience for dynamic workloads. Based on Ai Agent Ops analysis, a pragmatic path is to start with a strong single agent and then add a controlled multi-agent layer to tackle larger, continuously evolving tasks.

Context and Definitions

Agentic AI describes systems where autonomous agents reason, plan, and act to achieve objectives within a shared environment. This article compares single-agent designs, in which one agent shoulders all tasks, with multi-agent systems, in which several agents collaborate, negotiate, and sometimes compete for resources. Choosing between single- and multi-agent systems comes down to a core decision: do you centralize intelligence or distribute it? According to Ai Agent Ops, the choice hinges on task complexity, the need for parallelism, and governance overhead. In practice, teams often start with a strong single agent to validate core workflows and then layer in specialized agents for delegated responsibilities. The aim is to align architecture with business objectives, risk tolerance, and the expected tempo of change. Throughout this guide we reference common patterns, measurable trade-offs, and practical heuristics to help developers, product teams, and business leaders choose wisely. We also discuss how maturity models, monitoring, and safety policies influence every decision, from data interfaces to failure handling. By foregrounding trade-offs, organizations can pace adoption to minimize disruption while enabling scalable automation.

This article is also framed to help developers, product leaders, and executives assess how governance and risk management shape both single-agent and multi-agent architectures. It reflects Ai Agent Ops’s emphasis on pragmatic tooling, observability, and measurable outcomes when selecting agent configurations. The discussion consistently ties back to real-world constraints such as latency, data locality, and policy enforcement, ensuring that the recommended patterns are implementable in modern tech stacks.

Why this comparison matters for developers and leaders

As organizations increasingly rely on agentic AI to automate decisions and actions, the architecture choice directly affects speed, cost, and reliability. A single-agent system offers simplicity, predictable performance, and easier debugging, which translates to faster time-to-market for well-bounded problems. Multi-agent configurations unlock parallel exploration, redundancy, and domain specialization, enabling sophisticated workflows such as distributed planning, robust fault handling, and adaptive collaboration with external services. The Ai Agent Ops team notes that many production teams adopt a staged approach: begin with a single agent that handles core logic, then incrementally introduce additional agents with clearly defined interfaces, governance, and safety constraints. This pattern reduces upfront risk while preserving the opportunity to scale as requirements grow. Developers should also consider operational realities: latency between agents, potential data duplication, and the need for transparent escalation when disagreements arise. The practical takeaway is to treat agent design as an engineering discipline with explicit contracts and measurable goals.

From a governance perspective, the single-to-multi transition often reveals where policy, logging, and human-in-the-loop checks should be embedded early. Ai Agent Ops’s experience indicates that teams that document decision interfaces, error-handling protocols, and escalation paths tend to accelerate adoption while maintaining safety and compliance. This section sets the stage for deeper analysis of when to favor centralization versus distribution across tasks, domains, and teams.

Core architectural differences

Core architecture revolves around where cognition happens, how memory is shared, and how failures propagate. In a single-agent design, all perception, reasoning, and action selection happen within one process or container, sharing a common memory and decision loop. In a multi-agent system, you split cognition across agents, each with its own beliefs, goals, and plans, plus a central or distributed coordinator that mediates interactions. The trade-offs include latency overhead, coordination complexity, and data governance concerns. A central coordinator can simplify orchestration but becomes a bottleneck and a single point of failure; a distributed coordination approach reduces bottlenecks but increases protocol complexity. The article emphasizes that the most successful implementations explicitly model interfaces, allowed inter-agent protocols, and escalation paths to humans when needed. You also need to define how success is measured: a single agent may optimize for speed, while a multi-agent system can optimize for resilience and coverage.

Architectural decisions should also reflect how data flows between perception, deliberation, and action. For single agents, you can optimize for end-to-end latency but at the cost of reduced redundancy. For multi-agent setups, plan for inter-agent contracts, fault containment strategies, and scalable knowledge sharing. The result is not a single silver bullet; it is a spectrum where the right point depends on the organization’s tolerance for complexity, its risk posture, and the pace of change in the environment being automated.
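To make the contrast concrete, the following Python sketch assumes each capability can be modeled as a plain function from task text to result text. `SingleAgent`, `Agent`, and `Coordinator` are hypothetical names for illustration, not the API of any particular framework. The single agent keeps one shared memory, while the coordinator routes each task to a specialized agent with private memory and escalates when no agent owns the task.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Assumption: a capability is modeled as a function from task text to result text.
Skill = Callable[[str], str]


@dataclass
class SingleAgent:
    """One decision loop: every task uses the same process and one shared memory."""
    skills: Dict[str, Skill]
    memory: List[str] = field(default_factory=list)

    def run(self, kind: str, task: str) -> str:
        result = self.skills[kind](task)
        self.memory.append(f"{kind}:{task}->{result}")  # single shared memory
        return result


@dataclass
class Agent:
    """A specialized agent with its own private memory."""
    name: str
    skill: Skill
    memory: List[str] = field(default_factory=list)

    def handle(self, task: str) -> str:
        result = self.skill(task)
        self.memory.append(f"{task}->{result}")  # private to this agent
        return result


@dataclass
class Coordinator:
    """Central coordinator that routes each task kind to the agent registered for it."""
    agents: Dict[str, Agent]

    def run(self, kind: str, task: str) -> str:
        agent = self.agents.get(kind)
        if agent is None:
            # Escalation path: no agent owns this kind of task.
            return f"ESCALATE: no agent for '{kind}'"
        return agent.handle(task)
```

Every request passes through `Coordinator.run`, so in this sketch the coordinator is exactly the bottleneck and single point of failure described earlier; a distributed design would instead have agents negotiate directly.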

Coordination mechanisms in multi-agent systems

Coordination in multi-agent systems often relies on three pillars: communication protocols, shared knowledge bases, and contract-based interfaces. Agents exchange structured messages via well-defined protocols that specify message shape, timing, and failure handling. Some teams use a blackboard pattern to publish and subscribe to world state, while others implement a brokered architecture with explicit contracts between agents. A robust approach includes a termination policy for deadlock, escalation rules for when inter-agent negotiation stalls, and a safety floor—limits on what agents may do without human confirmation. Practical lessons include simulating interactions in sandbox environments, instrumenting tracing to diagnose cross-agent decisions, and maintaining a lightweight ledger of decisions to improve auditability. The aim is to minimize brittle interdependence and ensure that the system continues to function if one agent underperforms or fails.
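The brokered pattern with a deadlock termination policy, a safety floor, and a decision ledger can be sketched as follows. This is a minimal illustration under simplifying assumptions: agents are plain functions that see the previous round's proposals, consensus means every agent proposes the same action, and the action names in `needs_human` as well as the helpers `stubborn` and `follower` are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, FrozenSet, List


@dataclass(frozen=True)
class Message:
    sender: str
    round_no: int
    proposal: str


# Assumption: an agent is a function that sees the previous round's messages and returns a proposal.
Negotiator = Callable[[List[Message]], str]


@dataclass
class Broker:
    agents: Dict[str, Negotiator]
    max_rounds: int = 3  # termination policy: stop negotiating after this many rounds
    needs_human: FrozenSet[str] = frozenset({"delete_data", "transfer_funds"})  # safety floor
    ledger: List[Message] = field(default_factory=list)  # lightweight audit trail

    def negotiate(self) -> str:
        previous: List[Message] = []
        for round_no in range(1, self.max_rounds + 1):
            current = [Message(name, round_no, agent(previous)) for name, agent in self.agents.items()]
            self.ledger.extend(current)
            proposals = {m.proposal for m in current}
            if len(proposals) == 1:  # consensus reached
                decision = proposals.pop()
                if decision in self.needs_human:
                    return f"AWAIT_HUMAN:{decision}"
                return decision
            previous = current
        return "ESCALATE:no_consensus"  # negotiation stalled; hand off to a human


def stubborn(value: str) -> Negotiator:
    """Always proposes the same action."""
    return lambda previous: value


def follower(default: str) -> Negotiator:
    """Adopts the first proposal from the previous round, if there was one."""
    return lambda previous: previous[0].proposal if previous else default
```

For example, `Broker({"a": stubborn("retry"), "b": follower("rollback")}).negotiate()` reaches consensus on `"retry"` in the second round and leaves four entries in the ledger, whereas two agents that never move stop after `max_rounds` and escalate.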

A crucial practice is to define versioned interfaces and evolve contracts gradually to prevent cascading compatibility issues. When agents share a common ontology or data schema, you reduce misinterpretation and negotiation overhead. In mature deployments, you will see automated testing for interaction protocols, including synthetic fault injection to reveal edge cases in coordination. The takeaway is that robust coordination requires discipline in interface design, observability, and governance during every deployment.
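A receiver-side contract check might look like the sketch below. It assumes a versioning convention in which major versions signal breaking changes and minor versions only add optional fields, so receivers ignore unknown fields. `Contract`, `accepts`, and the `task.assign` contract name are hypothetical.

```python
from dataclasses import dataclass
from typing import Any, Dict, FrozenSet, Tuple


@dataclass(frozen=True)
class Contract:
    name: str
    major: int                      # breaking changes bump the major version
    required_fields: FrozenSet[str]


def accepts(contract: Contract, message: Dict[str, Any]) -> Tuple[bool, str]:
    """Receiver-side compatibility check for an inter-agent message."""
    if message.get("contract") != contract.name:
        return False, "wrong contract"
    major = int(str(message.get("version", "0.0")).split(".")[0])
    if major != contract.major:
        return False, "incompatible major version"
    missing = set(contract.required_fields) - set(message)
    if missing:
        return False, f"missing fields: {sorted(missing)}"
    # Unknown extra fields are ignored so that additive minor versions stay compatible.
    return True, "ok"
```

A message stamped `2.3` that carries an extra `priority` field is accepted by a receiver implementing major version 2, while a `1.9` message is rejected as incompatible. Checks like this are natural targets for automated protocol tests.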

Decision making and autonomy granularity

Autonomy comes in degrees. A single agent can make end-to-end decisions, but in a multi-agent setup autonomy is distributed: some agents propose options, others select by consensus, and a coordinator enforces global objectives. The key questions are: who defines the objectives, who tunes the reward signals, and how do we prevent escalation loops? A practical pattern is to separate planning from execution and to restrict high-risk actions behind policy gates. Designers also differentiate between narrow autonomy (delegated tasks with clear success criteria) and full autonomy (long-running plans with dynamic goal changes). The benefits of controlled autonomy include faster adaptation and improved fault containment, while drawbacks include potential inter-agent conflicts and the need for robust conflict resolution strategies.

This section emphasizes setting explicit authority boundaries: assign decision rights, publish policy constraints, and implement confidence thresholds before actions are executed. Clear autonomy levels help teams avoid scope creep and ensure predictable system behavior even when multiple agents operate concurrently. A well-designed autonomy model reduces debugging complexity while leaving room for future expansion as tasks become more intricate.
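Explicit authority boundaries and confidence thresholds can be expressed as a small policy gate that sits between planning and execution. In this sketch the three autonomy levels, the `low`/`high` risk labels, and the threshold values in `MIN_CONFIDENCE` are illustrative assumptions, not recommended settings.

```python
from dataclasses import dataclass
from enum import IntEnum


class Autonomy(IntEnum):
    SUGGEST = 0  # may only propose actions
    NARROW = 1   # may execute low-risk, delegated actions
    FULL = 2     # may execute any permitted action


@dataclass(frozen=True)
class Action:
    name: str
    risk: str          # hypothetical labels: "low" or "high"
    confidence: float  # agent's self-reported confidence in [0, 1]


# Hypothetical policy constraint: minimum confidence required per risk level.
MIN_CONFIDENCE = {"low": 0.6, "high": 0.9}


def gate(agent_level: Autonomy, action: Action) -> str:
    """Decide what happens to a planned action; execution only proceeds on EXECUTE."""
    if agent_level == Autonomy.SUGGEST:
        return "PROPOSE_ONLY"
    if action.risk == "high" and agent_level < Autonomy.FULL:
        return "NEEDS_HUMAN"  # decision right not granted at this level
    if action.confidence < MIN_CONFIDENCE[action.risk]:
        return "NEEDS_HUMAN"  # below the confidence threshold for this risk level
    return "EXECUTE"
```

Because the gate returns a decision instead of performing the action, planning and execution stay separate, and every `NEEDS_HUMAN` result is a natural point to log and escalate.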

Data sharing, learning, and knowledge scope

Single-agent systems tend to have centralized memory, which simplifies data governance but can bottleneck learning. Multi-agent systems distribute knowledge across agents, improve scalability for knowledge-intensive tasks, and enable parallel learning pathways. However, distributed memory raises governance concerns, versioning challenges, and the risk of model drift between agents. Mitigations include centralized knowledge repositories, standardized schemas, and cross-agent training events that refresh shared representations. Teams should establish data locality policies, access controls, and privacy safeguards, especially when agents interact with external services or end-user data. They should also measure information asymmetry between agents and ensure that knowledge persists through system restarts or partial outages.

In practice, you often see a hybrid approach: keep a core knowledge base centralized for policy and critical data while distributing non-sensitive experiential data to agents for local adaptation. This balance improves both governance and performance while avoiding systemic data silos that can cripple collaboration across agents.
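The hybrid approach can be sketched with a central store that agents can read but not write, plus per-agent local experience. `CentralKnowledge` and `LocalAgent` are hypothetical names; the read-only view uses the standard library's `types.MappingProxyType`, which reflects later updates to the underlying dictionary.

```python
from dataclasses import dataclass, field
from types import MappingProxyType
from typing import Dict, List, Mapping


class CentralKnowledge:
    """Centralized, versioned store for policy and critical data."""

    def __init__(self) -> None:
        self._data: Dict[str, str] = {}
        self.version = 0

    def publish(self, key: str, value: str) -> None:
        self._data[key] = value
        self.version += 1  # every policy change gets a new version number

    def view(self) -> Mapping[str, str]:
        # Read-only live view: agents see updates but cannot modify policy.
        return MappingProxyType(self._data)


@dataclass
class LocalAgent:
    name: str
    central: CentralKnowledge
    experience: List[str] = field(default_factory=list)  # non-sensitive, agent-local data

    def observe(self, note: str) -> None:
        self.experience.append(note)

    def policy(self, key: str) -> str:
        return self.central.view()[key]
```

Policy changes published centrally become visible to every agent immediately and bump a version number that can be logged, while each agent's `experience` list stays local. A production system would add persistence and access control, which this sketch omits.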

Governance, safety, and reliability considerations

Governance is a first-order concern in any agentic AI deployment. Single-agent systems allow simpler enforcement of policies but concentrate risk. Multi-agent ecosystems require governance across interfaces, inter-agent protocols, and escalation policies. Safety relies on transparent decision logs, human-in-the-loop options for critical decisions, and mechanisms to detect anomalous agent behavior. Reliability hinges on monitoring, circuit breakers, graceful degradation, and roll-back capabilities. The Ai Agent Ops team emphasizes designing for failure: simulate adverse conditions, test for coordination deadlocks, and define clear SLOs and SLA commitments. You should also allocate resources for auditing and compliance to satisfy industry and regulatory requirements. In practice, governance is not a one-off activity but an ongoing discipline that evolves with the system’s maturity and the breadth of tasks delegated to agents.
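Circuit breakers and graceful degradation can be illustrated with a minimal breaker wrapped around calls to an agent. This is a generic sketch of the well-known circuit-breaker pattern, not a specific library's API; the thresholds are assumptions, and the clock is injectable so the behavior can be tested without waiting.

```python
import time
from typing import Callable, Optional


class CircuitBreaker:
    """After `max_failures` consecutive failures the circuit opens and calls go
    straight to `fallback` until `reset_after` seconds have passed."""

    def __init__(self, max_failures: int = 3, reset_after: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_failures = max_failures
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, fn: Callable[[], str], fallback: Callable[[], str]) -> str:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                return fallback()  # open: degrade gracefully without calling the agent
            # Half-open: allow one trial call; a single further failure reopens the circuit.
            self.opened_at = None
            self.failures = self.max_failures - 1
        try:
            result = fn()
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()
            return fallback()
        self.failures = 0  # success closes the circuit
        return result
```

While the circuit is open, callers receive the fallback (for example, a cached answer or a hand-off to a human) instead of piling more load onto a failing agent.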

A practical takeaway is to codify governance in automation pipelines: policy-as-code, alerting on policy violations, and automated remediation when cross-agent conflicts are detected. This proactive stance helps teams sustain safety and reliability as complexity grows.
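Policy-as-code can be as simple as named rules evaluated against every proposed action before execution. In this sketch the two rules, the `Proposed` record, and the first-writer-wins remediation for conflicting agents are all illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass(frozen=True)
class Proposed:
    agent: str
    action: str
    resource: str
    amount: float = 0.0


# Each rule returns True when a proposed action violates policy (hypothetical rules).
RULES: Dict[str, Callable[[Proposed], bool]] = {
    "refund_limit": lambda p: p.action == "refund" and p.amount > 500,
    "no_prod_delete": lambda p: p.action == "delete" and p.resource.startswith("prod/"),
}


def enforce(batch: List[Proposed]) -> Tuple[List[Proposed], List[str]]:
    """Return (approved actions, alerts) for one batch of cross-agent proposals."""
    approved: List[Proposed] = []
    alerts: List[str] = []
    owner: Dict[str, str] = {}  # resource -> agent that claimed it first in this batch
    for p in batch:
        violated = [name for name, rule in RULES.items() if rule(p)]
        if violated:
            alerts.extend(f"violation:{p.agent}:{name}" for name in violated)
            continue
        if p.resource in owner and owner[p.resource] != p.agent:
            # Automated remediation: first writer wins; the conflicting proposal is deferred.
            alerts.append(f"conflict:{p.resource}:{owner[p.resource]}>{p.agent}")
            continue
        owner[p.resource] = p.agent
        approved.append(p)
    return approved, alerts
```

For a batch containing an over-limit refund, two agents restarting `svc/api`, and a delete under `prod/`, only the first restart is approved, and the other three proposals produce alerts.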

Operational costs and maintenance implications

Cost considerations for agentic AI systems vary with architecture. A single-agent deployment generally entails lower hardware requirements, fewer integration points, and simpler CI/CD pipelines, which translate to lower maintenance costs in early stages. Multi-agent systems incur higher ongoing costs due to inter-agent communication, cross-agent testing, more complex monitoring, and the need for governance tooling. However, the long-term value often justifies the investment when dealing with multi-domain tasks, high-volume workloads, or environments requiring resilience and adaptability. The trade-offs span compute, storage, and human oversight, which makes automated governance across the whole agent network, not just within a single agent, important. Ai Agent Ops’s analysis suggests budgeting for a governance overlay, inter-agent testing infrastructure, and explicit latency budgets across nodes to maintain predictable performance.

Businesses should also quantify the cost of coordination overhead versus the anticipated gains in speed, fault tolerance, and domain coverage. In practice, a staged funding approach—funding a high-value core agent first, then allocating resources for additional agents as metrics justify—helps manage financial risk while delivering early returns.
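Quantifying coordination overhead against expected gains reduces to simple arithmetic once you have estimates. The sketch below assumes gains come only from fewer failures and more hours saved; `MonthlyEstimate`, `net_benefit`, and all figures in the example are hypothetical placeholders, not benchmarks.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MonthlyEstimate:
    run_cost: float     # compute, storage, tooling, and oversight per month
    failures: int       # failed or escalated tasks per month
    hours_saved: float  # human hours saved per month


def net_benefit(single: MonthlyEstimate, multi: MonthlyEstimate,
                cost_per_failure: float, value_per_hour: float) -> float:
    """Gains from fewer failures and extra hours saved, minus the added coordination cost."""
    fault_gain = (single.failures - multi.failures) * cost_per_failure
    speed_gain = (multi.hours_saved - single.hours_saved) * value_per_hour
    coordination_overhead = multi.run_cost - single.run_cost
    return fault_gain + speed_gain - coordination_overhead


# Hypothetical figures: 15 fewer failures at 50 each, 30 extra hours at 40 each, 1500 extra cost.
single = MonthlyEstimate(run_cost=2000, failures=40, hours_saved=100)
multi = MonthlyEstimate(run_cost=3500, failures=25, hours_saved=130)
print(net_benefit(single, multi, cost_per_failure=50, value_per_hour=40))  # 450
```

A positive result supports funding the next agent under a staged approach; a negative one suggests keeping the single agent in place for now.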

Deployment patterns and risk profiles

Deployment strategies differ: single-agent deployments are typically rolled out quickly with fast feedback loops; multi-agent architectures require staged rollouts, feature flags for coordination layers, and robust rollback plans. Risk profiles include single-point failures in the agent, orchestration bugs, and data leakage risks with distributed knowledge. The recommended approach is to define clear success metrics, run phased experiments, and maintain a cross-functional gate that includes safety reviewers. The article references Ai Agent Ops's practical guidance on governance and risk management. Teams should implement progressive exposure, simulate failure modes, and ensure that rollbacks restore a known-good state without cascading effects across agents.

A practical rule of thumb is to begin with a pilot in a controlled environment, measure latency and coordination reliability, then progressively expand while maintaining strict observability around inter-agent interactions. This approach prevents surprises during scale and keeps teams aligned with business goals.
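The pilot-then-expand rule of thumb can be sketched as a progressive exposure loop that rolls back to the last known-good stage on the first breach. The stage percentages, the metric pair, and the thresholds are assumptions for illustration, and `measure` stands in for whatever monitoring a team actually has.

```python
from typing import Callable, List, Tuple

# (p95 latency in ms, coordination success rate in [0, 1]) observed at a given exposure.
Metrics = Tuple[float, float]


def progressive_rollout(stages: List[int], measure: Callable[[int], Metrics],
                        max_p95_ms: float, min_success: float) -> Tuple[int, str]:
    """Expand exposure stage by stage; on the first breach, return to the last known-good stage."""
    known_good = 0  # percent of traffic on the multi-agent path; 0 means single-agent baseline
    for pct in stages:
        p95, success = measure(pct)
        if p95 > max_p95_ms or success < min_success:
            return known_good, f"rolled back at {pct}%"
        known_good = pct
    return known_good, "fully rolled out"
```

Rolling back to the last stage that passed, rather than pushing forward, is what keeps a coordination regression from cascading across agents.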

Decision framework: a step-by-step approach

- Step 1: Map the task landscape and classify tasks as well-bounded or open-ended.

- Step 2: Prototype with a single agent to validate core workflows.

- Step 3: Identify candidates for delegation to additional agents and define precise interfaces.

- Step 4: Design a coordination protocol and decide on centralized vs distributed planning.

- Step 5: Implement governance, logging, and safety checks.

- Step 6: Monitor performance and maintain a staged upgrade path.

The framework emphasizes measurable criteria such as time-to-value, latency budgets, and failure rate, with explicit go/no-go gates at each stage. Ai Agent Ops’s practical guidance reinforces documenting interfaces and establishing a policy-driven guardrail to ensure that the transition from single to multi-agent architectures remains under control and aligned with business objectives.
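The go/no-go gates can be made explicit in code. This sketch checks the three criteria named in the framework (time-to-value, latency budget, failure rate) against hypothetical thresholds and walks the six steps in order, stopping at the first stage that fails; the step labels are shortened paraphrases, and `Gate`, `StageMetrics`, `go_no_go`, and `advance` are hypothetical names.

```python
from dataclasses import dataclass
from typing import List

STEPS = ["map tasks", "single-agent prototype", "delegate to agents",
         "coordination protocol", "governance and safety", "monitor and upgrade"]


@dataclass(frozen=True)
class StageMetrics:
    time_to_value_days: float
    p95_latency_ms: float
    failure_rate: float  # failed tasks / total tasks


@dataclass(frozen=True)
class Gate:
    max_time_to_value_days: float
    latency_budget_ms: float
    max_failure_rate: float


def go_no_go(m: StageMetrics, g: Gate) -> List[str]:
    """Return the failed criteria; an empty list means GO."""
    failed = []
    if m.time_to_value_days > g.max_time_to_value_days:
        failed.append("time_to_value")
    if m.p95_latency_ms > g.latency_budget_ms:
        failed.append("latency_budget")
    if m.failure_rate > g.max_failure_rate:
        failed.append("failure_rate")
    return failed


def advance(results: List[StageMetrics], gate: Gate) -> str:
    """Walk the evaluated stages in order and stop at the first NO-GO."""
    for step, metrics in zip(STEPS, results):
        failed = go_no_go(metrics, gate)
        if failed:
            return f"NO-GO at '{step}': {', '.join(failed)}"
    return "GO"  # every stage evaluated so far passed its gate
```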

Use-case patterns across industries

Industries such as software engineering, logistics, finance, and customer support apply agentic AI architectures differently. For instance, software teams may begin with a single agent for issue triage and then deploy a small multi-agent system to coordinate automated QA, deployment, and monitoring. In logistics, a hybrid approach often begins with a centralized decision agent, then evolves into a multi-agent network for routing optimization and inventory control. The examples illustrate how the same foundational patterns adapt: small, well-defined agents to manage specific domains, and a coordination layer to ensure alignment to business objectives. Across marketing, manufacturing, and healthcare, the core decision remains: start with well-scoped agents, then expand the coordination fabric as complexity and scale demand it.

Comparison

| Feature | Single-agent system | Multi-agent system |
|---|---|---|
| Coordination complexity | Low/none | High; requires negotiation protocols and contracts |
| Scalability | Limited by a single cognitive loop | High scalability through parallelism and specialization |
| Fault tolerance | Lower resilience (single point of failure) | Higher resilience through redundancy and fallback agreements |
| Data sharing/knowledge | Centralized memory and access control | Distributed knowledge with governance across agents |
| Development cost | Lower upfront cost | Higher ongoing maintenance and integration costs |
| Best for | Well-defined, bounded tasks | Complex, dynamic tasks requiring collaboration |

Multi-agent systems: pros

- Better scalability for complex tasks

- Improved fault tolerance through redundancy

- Specialization enables faster problem solving

- Clearer interfaces aid maintainability

Multi-agent systems: cons

- Higher coordination overhead

- Increased governance and orchestration burden

- More complex debugging and testing

- Longer initial time-to-value

Multi-agent systems generally outperform single-agent systems for complex, dynamic workloads; single-agent remains preferable for simple, well-defined tasks.

Choose multi-agent when task diversity and resilience matter. For quick wins, start with a robust single agent and plan a staged transition to multi-agent coordination as needs evolve.

Questions & Answers

What is a single-agent system in agentic AI?

A single-agent system delegates all perception, reasoning, and action to one autonomous agent. It is easier to deploy, debug, and govern but may struggle with very large or dynamic workloads.

What defines a multi-agent system?

A multi-agent system distributes tasks across several agents, each with its own goals and plans, coordinated by a central controller or through a shared protocol. This enables parallel work and resilience but increases orchestration complexity.

When should I start with a single agent?

Start with a single agent for simple, well-defined problems to reduce risk and accelerate validation. Only add agents when requirements demand parallelism or domain specialization.

How do I transition to a multi-agent architecture safely?

Plan a staged migration: define clear interfaces, introduce a light coordination layer, run A/B tests, and monitor governance and safety metrics before expanding.

What governance considerations matter?

Governance should cover access control, decision transparency, policy enforcement, and escalation paths to humans. These policies help prevent unsafe coordination and data leaks.

Are there real-world examples?

Many companies adopt single agents for core automation before expanding to multi-agent setups for advanced orchestration, governance, and resilience. Look for case studies in AI automation and agent orchestration.

Key Takeaways

- Start simple with a strong single agent, then layer multi-agent capabilities as needed

- Invest in explicit interfaces and protocols to reduce coordination chaos

- Prioritize governance, safety, and monitoring from day one

- Treat agent design as an engineering discipline with measurable goals and dashboards

- Plan for staged transitions to balance speed and scalability