AI vs QP: A Comprehensive Comparison for Builders and Leaders

Explore an objective, in-depth comparison of artificial intelligence and quantum processing. Learn where each excels, their limits, and how to plan practical adoption with governance, cost, and roadmap considerations.

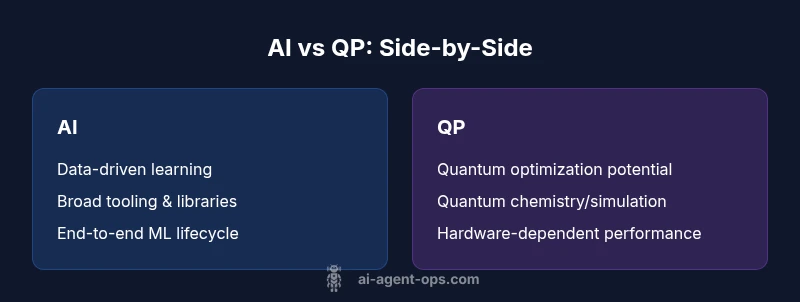

AI vs QP: Artificial intelligence (AI) currently dominates data-driven tasks with scalable tooling, while quantum processing (QP) remains largely experimental but promises breakthroughs for specific optimization and simulation problems. For most teams, AI offers faster ROI and a broader ecosystem; QP is a strategic bet for select problem classes on long timelines. The right choice depends on problem type, available data, and organizational readiness.

Defining AI vs QP

According to Ai Agent Ops, AI and QP are two complementary but distinct technologies. AI refers to computational systems that learn from data to perform tasks, recognize patterns, and make decisions. QP refers to computational approaches that exploit quantum phenomena, such as superposition and entanglement, to solve certain classes of problems potentially more efficiently than classical methods. In practice, AI algorithms run on conventional processors, while QP requires specialized quantum hardware or classical simulators of it. Understanding these differences helps teams set realistic expectations and build roadmaps aligned with business goals. In the Ai Agent Ops context, we emphasize pragmatic adoption: use AI where data-driven decisions are needed now, and plan QP experiments as hardware and algorithms mature. The distinction matters because it shapes budgeting, talent strategy, and project framing. For teams exploring automation, the AI vs QP question becomes a guiding lens: build AI-driven agents today, and watch for quantum-enabled breakthroughs in optimization and simulation.

The distinction is often framed around problem structure: if you can model the task as learning from data and optimizing with established ML techniques, AI is typically the default path. If the problem features combinatorial complexity, exotic state representations, or optimization landscapes where quantum effects could accelerate search, QP starts to look attractive. Ai Agent Ops’s practical stance is to separate what you can do now with AI from what you might unlock later with QP, balancing risk, cost, and time-to-value.
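The problem-structure heuristic can be sketched as a tiny triage function. This is a minimal sketch under stated assumptions: `ProblemProfile`, its boolean fields, `suggest_track`, and the track labels are hypothetical names introduced for illustration, and real triage would weigh many more factors (data availability, budget, hardware access). The "hybrid" outcome reflects the hybrid architectures this article describes; the "classical baseline" fallback for problems that fit neither pattern is an added assumption.

```python
from dataclasses import dataclass


@dataclass
class ProblemProfile:
    # Hypothetical descriptor; the field names are illustrative, not a standard schema.
    name: str
    learns_from_data: bool  # can the task be framed as learning from (labeled) data?
    combinatorial: bool     # is the core a hard combinatorial search or optimization?
    quantum_native: bool    # does it involve quantum states, e.g. molecular simulation?


def suggest_track(p: ProblemProfile) -> str:
    """Map problem structure to a starting track using the heuristic in the text."""
    qp_signal = p.combinatorial or p.quantum_native
    if p.learns_from_data and not qp_signal:
        return "AI"
    if p.learns_from_data and qp_signal:
        # AI handles the data side; the hard subproblem becomes a QP experiment.
        return "hybrid"
    if qp_signal:
        return "QP exploration"
    # Neither signal applies: assume conventional (non-ML) software is the baseline.
    return "classical baseline"


if __name__ == "__main__":
    for profile in [
        ProblemProfile("churn prediction", True, False, False),
        ProblemProfile("fleet routing", False, True, False),
        ProblemProfile("demand forecast + routing", True, True, False),
    ]:
        print(profile.name, "->", suggest_track(profile))
```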

Core Capabilities and Constraints

Artificial intelligence excels at recognizing patterns, understanding language, and forecasting from data. It scales across industries with mature tooling, libraries, and established practices for model training, evaluation, and deployment. Quantum processing, by contrast, targets specific problem classes such as combinatorial optimization, quantum chemistry, and certain simulation tasks where classical methods struggle. QP's potential comes from quantum speedups for particular algorithms, but hardware noise, error rates, and the need for specialized problem encodings limit its current practicality. In practice, hybrid approaches, in which AI handles the data-driven parts and quantum-inspired techniques guide optimization, are common stepping stones. For AI, the key constraints are data quality, model bias, and governance; for QP, they are hardware availability, calibration complexity, and programming overhead. By acknowledging these realities, teams can design experiments and roadmaps that maximize learning while minimizing risk. The strategic takeaway is to separate what you can do today (AI) from what you want to explore tomorrow (QP).
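"Specialized encodings" typically means restating a problem in a form that quantum hardware or algorithms accept. For optimization, a common target is QUBO (quadratic unconstrained binary optimization), a native input format for quantum annealers that can also be mapped onto QAOA-style gate-model algorithms. This is an illustration under assumptions: it uses Max-Cut as the example problem, the helper names are hypothetical, and it solves the QUBO by classical brute force, which takes 2^n evaluations and is only usable for a handful of variables. On real quantum hardware, the same QUBO would be submitted through a vendor SDK instead.

```python
from itertools import product


def maxcut_qubo(edges):
    """Encode Max-Cut as a QUBO: minimize sum of Q[i, j] * x_i * x_j over bits x.

    Edge (i, j) is cut when x_i != x_j, i.e. x_i + x_j - 2*x_i*x_j == 1.
    Maximizing the number of cut edges equals minimizing the negated sum.
    """
    Q = {}
    for i, j in edges:
        a, b = min(i, j), max(i, j)
        Q[(a, a)] = Q.get((a, a), 0) - 1  # linear terms live on the diagonal (x*x == x)
        Q[(b, b)] = Q.get((b, b), 0) - 1
        Q[(a, b)] = Q.get((a, b), 0) + 2  # upper-triangular quadratic term
    return Q


def qubo_energy(Q, x):
    return sum(coeff * x[i] * x[j] for (i, j), coeff in Q.items())


def brute_force(Q, n):
    """Exhaustive search over all 2**n assignments; only feasible for tiny n."""
    best = min(product((0, 1), repeat=n), key=lambda x: qubo_energy(Q, x))
    return best, qubo_energy(Q, best)


if __name__ == "__main__":
    square = [(0, 1), (1, 2), (2, 3), (3, 0)]  # 4-cycle: best cut separates alternating nodes
    assignment, energy = brute_force(maxcut_qubo(square), n=4)
    print(assignment, "cut size =", -energy)
```

The energy of any assignment equals minus its cut size, so the lowest-energy bit string is the best partition.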

Use Case Fit: When to Choose AI or QP

Choosing between AI and QP is not a binary decision; it is a matter of mapping problem structure to tooling. AI shines in data-rich tasks: predictive maintenance, customer sentiment analysis, fraud detection, and autonomous agents that learn from user interactions. These problems benefit from continuous improvement, large labeled datasets, and mature deployment pipelines. Ai Agent Ops analysis shows that in many organizations, AI-first automation accelerates time-to-value, reduces manual toil, and democratizes decision-making. QP, meanwhile, holds promise for select optimization and simulation domains where classical methods hit combinatorial walls or exponential scaling. Potential use cases include complex logistics optimization, materials discovery, and certain financial portfolio problems, provided the problem maps well onto a quantum algorithm and suitable hardware is accessible. Early pilots often use quantum-inspired heuristics to prototype ideas before committing to actual quantum hardware. In hybrid architectures, AI handles the noisy data environment while QP accelerates a constrained subproblem, offering a path to incremental value without a full quantum rollout.
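The text does not name a specific quantum-inspired heuristic, so this sketch assumes classical simulated annealing, the classical counterpart of quantum annealing, as a stand-in. It minimizes a QUBO (quadratic unconstrained binary optimization) objective, the same kind of formulation a quantum annealer would receive, which makes it a cheap way to validate an encoding before paying for hardware time. The function names, cooling schedule, and parameter defaults are illustrative assumptions, not tuned values.

```python
import math
import random


def qubo_energy(Q, x):
    """Energy of bit assignment x under an upper-triangular QUBO dict {(i, j): coeff}."""
    return sum(c * x[i] * x[j] for (i, j), c in Q.items())


def simulated_annealing(Q, n, steps=5000, t_start=2.0, t_end=0.01, seed=0):
    """Classical simulated annealing with single-bit flips and geometric cooling."""
    rng = random.Random(seed)
    x = [rng.randint(0, 1) for _ in range(n)]
    e = qubo_energy(Q, x)
    best_x, best_e = x[:], e
    for k in range(steps):
        # Temperature decays geometrically from t_start to t_end.
        t = t_start * (t_end / t_start) ** (k / max(steps - 1, 1))
        i = rng.randrange(n)
        x[i] ^= 1  # propose: flip one bit
        delta = qubo_energy(Q, x) - e
        if delta <= 0 or rng.random() < math.exp(-delta / t):
            e += delta  # accept the move (Metropolis criterion)
            if e < best_e:
                best_x, best_e = x[:], e
        else:
            x[i] ^= 1  # reject: undo the flip
    return best_x, best_e


if __name__ == "__main__":
    # Max-Cut on a 4-cycle written directly as a QUBO: optimum energy is -4 (all 4 edges cut).
    Q = {(0, 0): -2, (1, 1): -2, (2, 2): -2, (3, 3): -2,
         (0, 1): 2, (1, 2): 2, (2, 3): 2, (0, 3): 2}
    print(simulated_annealing(Q, n=4))
```

Recomputing the full energy on every flip keeps the sketch short; a production heuristic would update the energy incrementally.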

Performance, Data, and Compute Considerations

AI and QP have fundamentally different performance envelopes. AI performance scales with data: more diverse, higher-quality data typically yields better models, though model governance and bias remain pressing concerns. Training large models benefits from distributed compute and accelerators; inference can be optimized with quantization and edge deployment, enabling real-time or near-real-time workloads. Quantum processing, by contrast, is sensitive to noise and limited coherence times; even when a quantum computer is available, most tasks require careful encoding, error mitigation, and hybrid decomposition strategies. The upshot is that AI currently provides more reliable, predictable performance across a wide range of tasks, while QP offers rare, hardware-dependent advantages for narrow problem sets. Planning should include a roadmap that allocates budget and talent for data engineering, model risk management, and MLOps on the AI side, alongside exploratory tests, simulator runs, and feasibility studies on the QP side. Expect longer iteration cycles for QP experiments, offset by potential payoffs in problem classes with hard combinatorics.
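To see why noise dominates current QP planning, a common back-of-the-envelope model multiplies per-gate fidelities: if each of N gates succeeds with fidelity f and errors are independent, the circuit's overall fidelity is roughly F ≈ f^N. This sketch assumes that simplified model; `estimated_circuit_fidelity` is a hypothetical helper, not a vendor calibration tool, and it ignores readout error, idle decoherence, and error mitigation.

```python
def estimated_circuit_fidelity(gate_fidelity: float, num_gates: int) -> float:
    """Crude model F ~= f**N: treats every gate error as independent and ignores
    error mitigation, readout error, and idle decoherence (order-of-magnitude only)."""
    if not 0.0 < gate_fidelity <= 1.0:
        raise ValueError("gate_fidelity must be in (0, 1]")
    return gate_fidelity ** num_gates


if __name__ == "__main__":
    for f in (0.99, 0.999, 0.9999):
        row = [f"{estimated_circuit_fidelity(f, n):.3f}" for n in (100, 1_000, 10_000)]
        print(f"gate fidelity {f}: 100 / 1,000 / 10,000 gates ->", " / ".join(row))
```

Under this model, 99.9% per-gate fidelity leaves roughly 37% fidelity after 1,000 gates (0.999^1000 ≈ 0.368), which illustrates why encoding problems into shallow circuits, error mitigation, and hybrid decomposition matter.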

Integration, Ecosystem, and Roadmapping

Organizations integrate AI through mature ML pipelines, data catalogs, experiment tracking, and governance frameworks. The AI ecosystem includes feature stores, scalable training platforms, model registries, and continuous delivery for AI services. QP integration is path-dependent: teams must assess whether their tech stack can accommodate quantum-ready components, such as hybrid algorithms or quantum emulators. A practical roadmapping approach begins with a detailed problem inventory, followed by a risk-adjusted prioritization that assigns AI-first pilots to common business outcomes while maintaining a small, funded QP experimentation track. Cross-functional collaboration is essential: product managers, data engineers, and hardware researchers should co-create evaluation criteria, success metrics, and exit criteria for each initiative. Adoption of AI and QP is likely to be gradual and staged rather than a single, rapid switchover. A governance model aligned with regulatory requirements and ethical standards will help sustain momentum over time. The Ai Agent Ops perspective favors incremental learning: prove value with AI now, then layer in quantum capabilities as they become practical.
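A risk-adjusted prioritization can be sketched as a simple scoring pass over the problem inventory. This is a minimal sketch under assumptions: the `Initiative` fields, the score formula (expected value × probability of success, minus cost), and the 10% QP reserve are illustrative choices, not a method the article prescribes. Real portfolios would also weigh strategic fit, dependencies, and available talent.

```python
from dataclasses import dataclass


@dataclass
class Initiative:
    # Hypothetical inventory entry; all estimates share one (arbitrary) currency unit.
    name: str
    expected_value: float  # estimated value if the initiative succeeds
    success_prob: float    # team's estimate of technical feasibility, 0..1
    cost: float            # estimated pilot cost
    track: str             # "AI" or "QP"


def risk_adjusted_score(item: Initiative) -> float:
    """Expected value discounted by feasibility, minus cost."""
    return item.expected_value * item.success_prob - item.cost


def plan_portfolio(inventory, total_budget: float, qp_share: float = 0.1):
    """Greedily fund initiatives by score, with a small reserved budget for the QP track."""
    budgets = {"AI": total_budget * (1 - qp_share), "QP": total_budget * qp_share}
    funded = []
    for item in sorted(inventory, key=risk_adjusted_score, reverse=True):
        # AI pilots must pay off on expectation; QP pilots are funded for learning value.
        if item.track == "AI" and risk_adjusted_score(item) <= 0:
            continue
        if item.cost <= budgets[item.track]:
            budgets[item.track] -= item.cost
            funded.append(item.name)
    return funded


if __name__ == "__main__":
    inventory = [
        Initiative("churn model", 50, 0.8, 20, "AI"),
        Initiative("doc triage agent", 30, 0.9, 15, "AI"),
        Initiative("moonshot AI", 10, 0.2, 30, "AI"),
        Initiative("route QUBO pilot", 40, 0.1, 8, "QP"),
    ]
    print(plan_portfolio(inventory, total_budget=100))
```

The separate QP budget keeps exploratory quantum work funded even when its expected score is negative, which matches the idea of a small, protected experimentation track.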

Risks, Ethics, and Governance

With AI, risk centers on data privacy, bias, and unintended consequences; with QP, risk centers on hardware reliability, theoretical uncertainty, and the complexity of quantum-classical interfaces. Strong evaluation protocols, transparent reporting, and independent audits help organizations monitor AI models, benchmark progress, and detect drift. For QP, risk management focuses on feasibility milestones, dependence on hardware availability, and a clear view of which quantum algorithms promise a genuine advantage. Ethical considerations include fairness, accountability, and avoiding overhyped claims about capabilities. A responsible approach combines rigorous testing, explainability methods where possible, and staged pilots that scale model governance as AI capabilities mature. A governance framework should also address workforce implications: retraining, upskilling, and ongoing safety reviews to keep pace with evolving AI and QP capabilities. The Ai Agent Ops framework emphasizes planned, incremental adoption with clear go/no-go criteria and continuous risk assessment.
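Detecting drift is one concrete evaluation protocol. As an assumed example (the article does not name a metric), the population stability index (PSI) compares the current distribution of a feature or model score against a baseline; a threshold on it can act as an automated go/no-go signal. The function names are hypothetical, the equal-width binning is a simplification, and the 0.25 threshold is a widely used rule of thumb rather than a standard.

```python
import math


def population_stability_index(baseline, current, bins=10, eps=1e-6):
    """PSI = sum over bins of (a - e) * ln(a / e), with e/a the baseline/current proportions.

    Simplification: equal-width bins over the combined range; many teams instead use
    baseline quantiles. eps avoids log(0) for empty bins.
    """
    lo = min(min(baseline), min(current))
    hi = max(max(baseline), max(current))
    width = (hi - lo) / bins or 1.0  # guard against a zero-width range

    def proportions(values):
        counts = [0] * bins
        for v in values:
            counts[min(int((v - lo) / width), bins - 1)] += 1
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(baseline), proportions(current)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def drift_gate(psi, threshold=0.25):
    """Go/no-go style check on a PSI value; the threshold is a rule of thumb."""
    return "investigate drift" if psi > threshold else "ok"


if __name__ == "__main__":
    baseline = [i / 100 for i in range(100)]
    shifted = [0.5 + i / 100 for i in range(100)]
    psi = population_stability_index(baseline, shifted)
    print(round(psi, 3), drift_gate(psi))
```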

Implementation Strategies and Roadmap

Start with a crisp problem statement and success metrics; design a two-track program: an AI-first track for immediate impact and a longer-term QP track for potential breakthroughs. Practical steps include data pipeline modernization, MLOps integration, and robust evaluation protocols for AI projects, plus feasibility studies, data encoding, and sandbox experiments for QP ideas. Build cross-disciplinary squads: data engineers, ML researchers, and quantum scientists collaborate through shared dashboards and governance rituals. Define pilot sizes, duration, and ROI targets to avoid scope creep. Establish a learning loop: capture lessons from AI pilots, refine use cases, and determine which problem classes deserve quantum exploration. Ensure compliance with security and privacy regulations, and set clear training plans for staff. The result is a measurable, adaptable strategy that evolves from AI-centered delivery to strategic QP exploration as capabilities mature and hardware access becomes more reliable. The path is not binary; it is a spectrum, with AI delivering value today and QP gradually expanding the frontier tomorrow.
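Pilot size, duration, and ROI targets are most useful when written down as explicit exit criteria. This sketch assumes a simple ROI definition, (benefit − cost) / cost, and hypothetical names (`PilotSpec`, `go_no_go`); how benefit is measured is left to each team, and checking the time box and budget before ROI is a design choice rather than a rule from the article.

```python
from dataclasses import dataclass


@dataclass
class PilotSpec:
    # Hypothetical pilot definition: the time box and budget cap guard against scope creep.
    name: str
    max_weeks: int
    budget: float
    roi_target: float  # e.g. 0.2 means benefits must exceed cost by 20%


def roi(benefit: float, cost: float) -> float:
    """Simple return on investment: (benefit - cost) / cost."""
    if cost <= 0:
        raise ValueError("cost must be positive")
    return (benefit - cost) / cost


def go_no_go(spec: PilotSpec, weeks_elapsed: int, cost_to_date: float,
             benefit_to_date: float) -> str:
    """Evaluate a pilot against its predefined exit criteria."""
    if weeks_elapsed > spec.max_weeks or cost_to_date > spec.budget:
        return "stop: time box or budget exceeded"
    if roi(benefit_to_date, cost_to_date) >= spec.roi_target:
        return "go: scale up"
    if weeks_elapsed < spec.max_weeks:
        return "continue"
    return "no-go: ROI target missed"


if __name__ == "__main__":
    spec = PilotSpec("invoice agent", max_weeks=8, budget=50_000, roi_target=0.2)
    print(go_no_go(spec, weeks_elapsed=8, cost_to_date=40_000, benefit_to_date=52_000))
```

For example, a pilot with an 8-week time box and a 0.2 ROI target that has spent 40,000 and measured 52,000 in benefit by week 8 returns "go: scale up", since its ROI is 0.3.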

Comparison

| Feature | AI | QP |
|---|---|---|
| Data efficiency | High with labeled data and transfer learning | Low in general; depends on quantum encodings and problem structure |
| Compute requirements | Mature cloud-based compute for training and inference | Hardware-dependent; constrained by noisy intermediate-scale quantum (NISQ) era limitations |
| Maturity & tooling | Extensive libraries, frameworks, and MLOps | Emerging toolchains; steep learning curve for quantum programming |
| Best for | Broad data-driven tasks (NLP, vision, forecasting) | Optimization, simulation, and certain chemistry problems |
| Implementation complexity | Full end-to-end ML lifecycle | Quantum algorithm design plus hardware considerations |
| Cost to experiment | Moderate to high, driven by data and compute needs | High, due to hardware access costs or expensive simulations |
| Latency & real-time readiness | Low-latency inference in production | Limited real-time capability due to classical-quantum interfacing and hardware constraints |

Positives

- AI enables rapid prototyping and scalable deployment across teams

- QP offers potential exponential speedups for specific problem classes

- Mature AI ecosystems support end-to-end workflows and governance

- Hybrid AI-quantum approaches could unlock new capabilities in the future

What's Bad

- AI can introduce bias and often requires large labeled datasets

- QP hardware is nascent; practical advantage today is limited to niche problems

- Adoption requires specialized talent and longer experimentation cycles

AI remains the practical default for most organizations today; QP is a strategic bet for select optimization problems and long-term R&D.

Prioritize AI-driven value with scalable governance now. Maintain a targeted QP exploration track to capture potential breakthroughs as hardware and algorithms mature.

Questions & Answers

What is the practical difference between AI and QP?

AI focuses on data-driven learning and decision-making using classical hardware; QP targets problems where quantum effects could enable faster solutions on specialized hardware. The two are complementary, not mutually exclusive.

AI handles data-based tasks today, while QP aims at niche problems where quantum improvements may appear in the future.

Can AI and QP be used together?

Yes. Hybrid workflows can let AI process noisy data and extract patterns, while quantum approaches tackle subproblems like optimization. The integration requires careful interface design and governance.

Absolutely—AI and QP can work together in staged, hybrid systems.

Which problems are best for AI today?

Most enterprise problems involving prediction, classification, NLP, and automation are well-suited for AI today, given mature tooling and deployment pipelines.

Think predictive tasks and automation—that's AI's sweet spot today.

Which problems are best for QP today?

QP shows promise in optimization, quantum chemistry, and certain simulations where classical methods struggle. Real-world advantages depend on hardware access and problem encoding.

QP is promising for select optimization and chemistry problems, but hardware access matters.

How should teams start an AI vs QP program?

Begin with AI pilots to demonstrate value, establish data governance, and build MLOps. Simultaneously, run small-scale QP feasibility studies to identify candidate problem classes.

Start with AI pilots and keep a small quantum track ready.

What are common risks when adopting AI or QP?

AI risks include bias, data privacy, and model drift; QP risks involve hardware dependency and higher uncertainty. Use phased pilots, risk controls, and clear exit criteria.

Be mindful of bias and hardware uncertainties as you test both paths.

Key Takeaways

- Prioritize AI-driven value with scalable governance now

- Explore QP only for well-mapped optimization/simulation problems

- Invest in hybrid AI-quantum strategies to hedge bets

- Plan a staged quantum-readiness program to minimize risk and maximize learning