AI Replacing Insurance Agents: A Practical Guide for 2026

Explore how AI can replace or augment insurance agents with a practical, governance-focused roadmap. Learn processes to automate, risk controls, and how to measure impact while preserving trust and compliance.

According to Ai Agent Ops, this guide explains how AI can replace or augment insurance agents, outlining practical steps, governance, and risk management. You'll learn which processes to automate, how to redesign roles, how to shift customer interactions to AI-enabled workflows, and how to measure impact without compromising customer trust or regulatory compliance. By the end, you’ll have an actionable, enterprise-ready roadmap for responsible adoption.

The Promise and Limits of AI in Insurance

AI has the potential to transform many routine, data-heavy tasks within insurance, from underwriting to customer support. When designed thoughtfully, AI can handle repetitive inquiries, triage claims, extract data from documents, and surface relevant policy information to human agents for escalation. This can reduce cycle times, improve consistency, and free human agents to focus on high-value conversations like complex risk assessments and trust-building. However, AI is not a wholesale replacement for every human touchpoint. Empathy, nuanced negotiation, and regulatory decision-making still rely on skilled professionals. The most successful implementations treat AI as a force multiplier for agents and teams rather than a substitute for them. As Ai Agent Ops often notes, alignment with governance, ethics, and customer experience is essential to sustainable adoption.

The Core Premise and How to Read this Guide

This guide takes a practical, step-by-step approach to understanding where AI can replace or augment insurance agents, what to automate first, and how to manage the organizational changes involved. We emphasize governance, data stewardship, and regulatory compliance, plus how to maintain trust with customers who expect clarity and human-centered service. You’ll find clear criteria for prioritizing automation, a phased rollout plan, and metrics that matter for insurers and their customers. Throughout, Ai Agent Ops’s framework for responsible AI informs the recommendations, ensuring steps are actionable in real-world settings.

Key Use Cases Where AI Replaces or Supports Agents

- Automated quotes and underwriting support: AI analyzes risk factors, extracts data from documents, and proposes initial policy terms for human review.

- AI-powered customer service: Chatbots and virtual assistants handle routine inquiries, policy changes, and status updates, freeing agents for complex conversations.

- Document processing and data extraction: OCR and NLP extract information from applications, medical records, and policy documents to speed up processing and reduce errors.

- Claims triage and routine decisions: AI flags straightforward claims for quick handling while routing complex cases to adjusters.

- Agent-enablement tools: AI assists agents with policy explanations, cross-sell opportunities, and research during client meetings.

Caution: The most effective designs preserve human oversight where trust, ethics, and regulatory compliance are critical. The goal is to shift the workload away from repetitive tasks while enhancing the customer experience, not to erode it.
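The claims triage use case, with human oversight preserved, can be sketched as a simple routing rule. The Python below is a minimal illustration rather than a production design: the `Claim` fields, the `route_claim` function, and both thresholds are hypothetical and would need to be set together with claims and compliance teams.

```python
from dataclasses import dataclass

# Hypothetical thresholds for illustration only; real values are a policy decision.
AUTO_HANDLE_MAX_AMOUNT = 2_000.00
MIN_MODEL_CONFIDENCE = 0.90


@dataclass(frozen=True)
class Claim:
    claim_id: str
    amount: float            # claimed amount in the policy currency
    model_confidence: float  # model's confidence in [0, 1] that the claim is routine
    has_injury: bool         # injury claims always go to a human in this sketch


def route_claim(claim: Claim) -> str:
    """Return 'fast_track' for straightforward claims, otherwise 'adjuster'."""
    if claim.has_injury:
        return "adjuster"
    if claim.amount > AUTO_HANDLE_MAX_AMOUNT:
        return "adjuster"
    if claim.model_confidence < MIN_MODEL_CONFIDENCE:
        return "adjuster"
    return "fast_track"


if __name__ == "__main__":
    print(route_claim(Claim("C-1", 450.00, 0.97, False)))
    print(route_claim(Claim("C-2", 450.00, 0.97, True)))
```

The rule defaults to the human path: a claim is fast-tracked only when every check passes, so any uncertainty sends it to an adjuster.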

Architectural patterns and data flows

Successful AI-enabled insurance workflows depend on clear data governance, robust data pipelines, and guardrails for model risk management. Typical patterns include: a centralized data layer that feeds AI services, privacy-preserving data handling (data minimization and encryption), model monitoring for drift, and explainability dashboards so human users can understand AI suggestions. Data provenance and audit trails are essential for regulatory scrutiny. In practice, this means aligning policy data, claims histories, underwriting inputs, and customer interaction records so AI can operate on consistent, high-quality data.

Additionally, integration with existing policy administration systems requires thoughtful API design and security controls. Teams should define who owns data quality, who oversees model performance, and how decisions are surfaced to human users. The result is a transparent, reliable flow from data source to AI inference to human decision.
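One way to make "model monitoring for drift" concrete is the population stability index (PSI), which compares the distribution of a model input or score at training time with what the model sees in production. The sketch below is an assumption-laden example, not a prescribed method: the function name, the equal-width binning, and the `eps` floor for empty bins are illustrative choices.

```python
import math
from typing import List, Sequence


def population_stability_index(
    expected: Sequence[float],
    actual: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """PSI between a baseline sample and a recent sample of one numeric feature."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def shares(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values to edge bins
            counts[idx] += 1
        total = len(values)
        # Floor each share at eps so the logarithm is defined for empty bins.
        return [max(c / total, eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


if __name__ == "__main__":
    baseline = [i / 100 for i in range(100)]
    recent = [0.5 + i / 200 for i in range(100)]
    print(population_stability_index(baseline, baseline))
    print(population_stability_index(baseline, recent))
```

A commonly cited rule of thumb treats a PSI above roughly 0.25 as a significant shift, but the alert threshold is a governance decision and should be agreed with whoever owns model performance.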

Governance, ethics, and regulatory considerations

Automation in insurance raises important governance questions. Organizations should establish guiding principles for fairness, transparency, privacy, and accountability. Key steps include: mapping data usage to regulatory requirements, obtaining customer consent where applicable, implementing bias testing for underwriting or customer-facing AI, and setting up escalation paths to human agents for sensitive decisions. Regular ethics reviews and independent audits help ensure models stay aligned with consumer protection laws and industry standards. Ai Agent Ops emphasizes building governance into the design phase rather than treating it as an afterthought.
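As one illustration of the bias testing mentioned above, the sketch below compares approval rates across groups and reports the ratio of the lowest rate to the highest. Everything here is an assumption for demonstration: the function names, the group labels, and the 0.8 review threshold, which is borrowed from the "four-fifths" rule of thumb in US employment guidance and is not an insurance-specific standard. Which attributes may lawfully be tested, and how, depends on jurisdiction.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def approval_rates(decisions: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Map each group label to its share of approved decisions."""
    totals: Dict[str, int] = defaultdict(int)
    approved: Dict[str, int] = defaultdict(int)
    for group, was_approved in decisions:
        totals[group] += 1
        approved[group] += int(was_approved)
    return {group: approved[group] / totals[group] for group in totals}


def adverse_impact_ratio(rates: Dict[str, float]) -> float:
    """Lowest group approval rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group was approved, so there is no disparity to measure
    return min(rates.values()) / highest


if __name__ == "__main__":
    # Synthetic decisions for illustration only.
    sample = (
        [("group_a", True)] * 8 + [("group_a", False)] * 2
        + [("group_b", True)] * 6 + [("group_b", False)] * 4
    )
    ratio = adverse_impact_ratio(approval_rates(sample))
    print("needs review" if ratio < 0.8 else "within threshold")
```

A low ratio is a signal for human review, not proof of unfair treatment; legitimate rating factors can explain part of a gap, which is why the escalation path to an ethics or compliance review matters.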

Implementation roadmap: from pilot to scale

A practical roadmap starts with a narrow, controlled pilot that targets a specific process (e.g., claims triage or document extraction). Define success metrics early (cycle time reduction, error rate, customer satisfaction, agent workload). Use an iterative approach: design, test, measure, and adjust. As you scale, expand automation to adjacent processes, ensure agents are trained on AI tools, and continuously refine governance policies. Stakeholder alignment across product, compliance, IT, and operations is critical, as is clear change management communications for customers and staff. The end state should preserve a human-centric service model, with AI handling repetitive tasks and humans handling nuance and trust-building.
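To show how a success metric such as cycle time reduction might be computed for a pilot, here is a small sketch. The function names and the sample figures are hypothetical; a real evaluation would also need comparable claim mixes and enough volume to rule out noise.

```python
from statistics import mean
from typing import Dict, Sequence


def percent_change(baseline: float, pilot: float) -> float:
    """Relative change from baseline to pilot, in percent (negative = reduction)."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (pilot - baseline) * 100.0 / baseline


def summarize_pilot(
    baseline_days: Sequence[float], pilot_days: Sequence[float]
) -> Dict[str, float]:
    """Compare mean processing time before and during the pilot."""
    baseline_mean = mean(baseline_days)
    pilot_mean = mean(pilot_days)
    return {
        "baseline_mean_days": baseline_mean,
        "pilot_mean_days": pilot_mean,
        "cycle_time_change_pct": percent_change(baseline_mean, pilot_mean),
    }


if __name__ == "__main__":
    # Made-up processing times in days, for illustration only.
    print(summarize_pilot([10, 12, 8], [6, 9, 6]))
```

The same `percent_change` helper can be applied to error rate or agent workload, so that every pilot metric is reported against the baseline defined before the pilot began.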

Tools & Materials

- Stakeholder map (Identify decision-makers and change champions across departments)

- Data inventory spreadsheet (Catalog sources, data fields, sensitivity, and retention needs)

- Compliance and regulatory checklist (Map applicable laws, guidelines, and audit requirements)

- Ethics and risk assessment template (Evaluate bias, privacy risks, and escalation paths)

- AI tools and access plan (Document chosen platforms, access controls, and integration points)

- Change management plan (Prepare communications, training, and rollout schedules)
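The data inventory in the list above can start as a very small structure. The sketch below is one possible shape, with hypothetical field names and sensitivity labels: it records source, field, sensitivity, and retention for each item, filters the inventory to the fields a given process may use (data minimization), and exports the result as CSV for a spreadsheet.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields
from typing import List, Sequence

# Hypothetical sensitivity scale, ordered from least to most sensitive.
SENSITIVITY_ORDER = ["public", "internal", "personal", "special"]


@dataclass(frozen=True)
class DataField:
    source: str
    field_name: str
    sensitivity: str     # one of SENSITIVITY_ORDER
    retention_days: int


def allowed_fields(inventory: Sequence[DataField], max_sensitivity: str) -> List[DataField]:
    """Keep only fields at or below the sensitivity level a process is cleared for."""
    limit = SENSITIVITY_ORDER.index(max_sensitivity)
    return [f for f in inventory if SENSITIVITY_ORDER.index(f.sensitivity) <= limit]


def to_csv(inventory: Sequence[DataField]) -> str:
    """Serialize the inventory with a header row, ready to open as a spreadsheet."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(DataField)])
    writer.writeheader()
    for item in inventory:
        writer.writerow(asdict(item))
    return buffer.getvalue()


if __name__ == "__main__":
    inventory = [
        DataField("policy_admin", "policy_number", "internal", 3650),
        DataField("claims", "medical_notes", "special", 2555),
        DataField("crm", "email", "personal", 730),
    ]
    print(to_csv(allowed_fields(inventory, "personal")))
```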

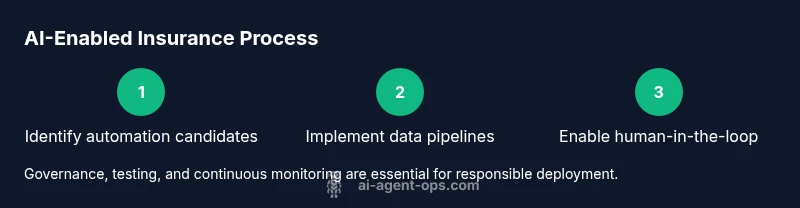

Steps

Estimated time: 6-12 weeks for initial pilots; 6-12 months to scale

Step 1: Define objectives and guardrails

Clarify which processes to automate, expected outcomes, and safety constraints. Establish governance policies upfront to guide data use, privacy, and escalation protocols.

Tip: Document success criteria and alignment with regulatory requirements before starting.

Step 2: Inventory processes and data

Map current workflows and data sources to identify automation candidates. Catalog data fields, quality, and privacy considerations for each candidate process.

Tip: Prioritize processes with predictable inputs and high repeatability.

Step 3: Select AI capabilities and tools

Choose AI capabilities (LLMs, OCR, automation) that fit the selected processes. Plan for integration with existing systems and define data access controls.

Tip: Start with one or two small pilots to validate assumptions.

Step 4: Design governance and risk controls

Implement bias checks, explainability features, and escalation paths. Ensure privacy-by-design and regulatory alignment are baked in from the start.

Tip: Involve compliance early to avoid later rework.

Step 5: Pilot, measure, and adjust

Run a controlled pilot, track the predefined metrics, and collect qualitative feedback from agents and customers. Refine models and workflows based on findings.

Tip: Keep a tight feedback loop with frontline staff.

Step 6: Scale and sustain

Expand automation to additional processes, train staff, and refresh governance as you grow. Build a continuous improvement cadence.

Tip: Establish ongoing monitoring and a clear process for model updates.

Questions & Answers

What does AI replacing insurance agents mean in practice?

In practice, AI can handle repetitive tasks like data extraction, document processing, and initial customer inquiries, while complex decisions and trust-based conversations remain with human agents. It’s a shift toward augmentation rather than immediate elimination.

AI handles routine tasks, while humans focus on complex conversations and trust-building.

Will AI completely replace human agents?

AI is unlikely to fully replace human agents in insurance. The most successful models combine automation with human oversight, preserving empathy and nuanced decision-making for high-stakes cases.

AI complements humans; critical decisions stay human-guided.

Which processes are best for automation in insurance?

Processes with repetitive data handling, document processing, and routine inquiries are ideal for automation. Complex underwriting, negotiation, and relationship-building benefit from a human-in-the-loop approach.

Automation shines on repetitive tasks; keep humans for complex parts.

How can we ensure compliance and trust when using AI?

Establish governance, bias testing, data privacy controls, and escalation paths. Regular audits and transparent explainability support regulatory requirements and customer trust.

Governance and audits build trust in AI-powered processes.

What risks should we monitor with AI in insurance?

Key risks include data privacy, bias in decision-making, model drift, and over-automation that reduces customer empathy. Implement monitoring and controls to mitigate these risks.

Watch for privacy, bias, and drift; keep humans in the loop.

What skills should teams develop when adopting AI agents?

Teams should invest in data literacy, model governance, change management, and user-centric design. Training should cover both technical and ethical aspects of AI in insurance.

Build data, governance, and customer-centric design skills.

Key Takeaways

- Define AI scope and governance before automation.

- Preserve human touch where trust matters most.

- Pilot, measure, and iterate with clear metrics.

- Scale responsibly with ongoing governance and training.