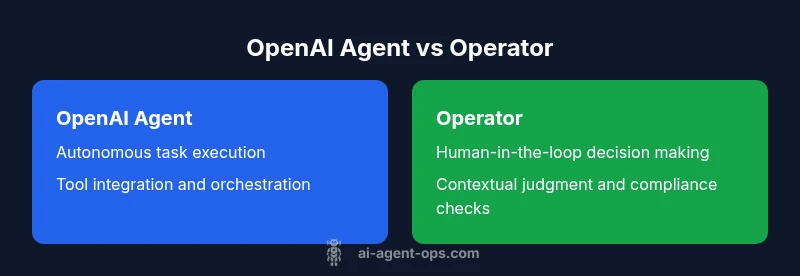

OpenAI Agent vs Operator: A Comprehensive Comparison for AI Workflows

A rigorous 2026 analysis comparing OpenAI agents and operator workflows. Learn definitions, use cases, architecture, governance, and decision criteria to determine when to automate or involve human oversight in AI workflows.

The OpenAI agent vs operator comparison contrasts two ends of the automation spectrum: autonomous, reasoning agents and human-guided workflows. In practice, most teams start with operator-led processes and gradually introduce agent-driven automation to accelerate throughput while maintaining governance. The choice hinges on risk tolerance, data sensitivity, and the desired balance of speed and control.

Defining the terms: OpenAI agent and operator

In the OpenAI ecosystem, "agent" and "operator" mark two ends of a spectrum for automating work. An OpenAI agent typically refers to an autonomous software agent built on top of OpenAI models that can perceive inputs, reason about goals, and take actions through tool calls or API interactions. An operator, by contrast, is a human-in-the-loop worker or a system guided by human decision-makers who approve, adjust, or override automated actions. The distinction matters because it drives architecture choices, governance strategies, and risk management. For developers, product teams, and business leaders exploring AI agents and agentic AI workflows, the choice shapes data flows, logging requirements, and reliability controls. As of 2026, many organizations pursue hybrid patterns that blend autonomous agents with oversight to balance speed with accountability and safety. Throughout this article, "OpenAI agent vs operator" refers to a continuum from fully automated to human-guided processes. The Ai Agent Ops team emphasizes clear definitions to avoid scope creep and to set expectations for performance, governance, and maintenance.

Core capabilities and limitations

OpenAI agents bring a set of capabilities that enable end-to-end automation, while operators excel at governance and nuanced decision making. An OpenAI agent can perceive inputs, plan a sequence of actions, invoke tools, and adjust its approach based on feedback. This enables rapid iteration, standardization of routine tasks, and reproducible outcomes across multiple domains. However, autonomy increases the risk of drift, unexpected actions, or brittle behavior if guardrails are weak. Operators, on the other hand, provide review and filtering, domain expertise, and context-aware judgment that machines often lack. The best-performing teams typically implement a layered approach: agents handle well-defined, repeatable tasks, while human oversight handles exceptions, high-stakes decisions, and changes in project scope. From a governance perspective, a hybrid model enables traceability: decisions can be audited, and prompts or policies can be adjusted as scenarios evolve. In 2026, the trend is toward agentic AI with strong human-in-the-loop mechanisms that safeguard critical outcomes while preserving speed and scale.
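The perceive, plan, act, and adjust cycle described above can be sketched as a small loop. This is a minimal illustration, not an OpenAI API: `AgentRun`, `run_agent`, `scripted_planner`, and the `lookup` tool are hypothetical names, and the planner is a scripted stand-in for what would be a model call in a real system. Two simple guardrails are shown: a step budget and a tool allow-list, both of which hand control to a human when tripped.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AgentRun:
    goal: str
    max_steps: int = 5  # guardrail: cap the number of actions per run
    history: list[tuple[str, str]] = field(default_factory=list)


def run_agent(
    run: AgentRun,
    plan_next: Callable[[str, list[tuple[str, str]]], tuple[str, str]],
    tools: dict[str, Callable[[str], str]],
) -> str:
    """Loop: plan from goal + feedback, call a tool, record the result.

    Returns "done" when the planner finishes, or "escalate" when a
    guardrail trips and a human operator should take over.
    """
    for _ in range(run.max_steps):
        tool_name, arg = plan_next(run.goal, run.history)
        if tool_name == "finish":
            return "done"
        if tool_name not in tools:  # guardrail: allow-listed tools only
            run.history.append((tool_name, "blocked: tool not allowed"))
            return "escalate"
        result = tools[tool_name](arg)
        run.history.append((tool_name, result))  # feedback for next plan
    return "escalate"  # step budget exhausted


def scripted_planner(goal: str, history: list[tuple[str, str]]) -> tuple[str, str]:
    # Hypothetical stand-in for a model call: look up once, then finish.
    if not history:
        return ("lookup", goal)
    return ("finish", "")


tools = {"lookup": lambda query: f"result for {query}"}
run = AgentRun(goal="order 42 status")
status = run_agent(run, scripted_planner, tools)
```

With the scripted planner, the run performs one lookup and then finishes. A planner that requests a tool outside `tools` ends the run with `"escalate"`, which is where an operator queue would pick it up.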

Use cases by scenario

Different scenarios reveal where an OpenAI agent or an operator delivers more value. For high-volume data processing, an autonomous agent can process inputs, extract insights, and trigger downstream actions with minimal latency. In customer support, agents can triage inquiries and provide first-pass responses, but escalation to a human is essential for complex cases or sensitive information. In research and product development, agents can prototype workflows, run experiments, and summarize results; operators can validate findings, ensure compliance, and interpret ambiguous outcomes. For real-time monitoring, agents excel at anomaly detection and automated remediation, yet operators should review edge cases and override decisions when regulatory or safety constraints apply. In practice, many teams blend both modalities: agents perform routine, rule-based tasks, while operators oversee unusual patterns, approve changes to policies, and govern the data that agents access. The 2026 landscape supports hybrid workflows that balance speed, accuracy, and accountability across domains such as software development, data analytics, and customer experience.

Architecture and integration considerations

Designing a robust agent-and-operator setup requires a clear architecture. Key components include: a well-defined state model to track progress across steps; tool orchestration to call APIs, databases, and external services; a policy framework that prescribes when agents can act autonomously and when human intervention is required; and comprehensive logging for auditing and retraining. Data flows should minimize sensitive information exposure, with strict access controls and encryption both in transit and at rest. For agents, prompts, tools, and memory management shape behavior; operators interact via dashboards, queues, or ticketing systems that feed context back to agents. Interoperability matters: you’ll often need adapters for message queues, issue trackers, or CRM platforms. From a reliability perspective, implement graceful fallbacks, timeouts, and escalation paths. Governance, privacy, and compliance controls should be baked into the architecture from day one, including incident response playbooks and periodic reviews of policies as environments evolve. In 2026, architecture tends toward modular, auditable components that can scale while preserving human oversight where it matters most.
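One way to picture the policy framework and audit logging is a small gate that every proposed action passes through before it runs. The sketch below is an assumption-laden illustration: the `ProposedAction` fields, the risk labels, and the `AUTONOMOUS_TOOLS` allow-list are all hypothetical, and a real deployment would load its policy from configuration rather than hard-code it.

```python
import json
from dataclasses import asdict, dataclass
from enum import Enum


class Route(str, Enum):
    AUTONOMOUS = "autonomous"
    HUMAN_REVIEW = "human_review"


@dataclass(frozen=True)
class ProposedAction:
    tool: str          # name of the tool the agent wants to call
    risk: str          # assumed labels: "low", "medium", "high"
    touches_pii: bool  # whether the call reads or writes personal data


# Hypothetical allow-list of tools the agent may run without approval.
AUTONOMOUS_TOOLS = {"search_docs", "draft_reply"}


def route_action(action: ProposedAction) -> Route:
    """Decide whether an action may run autonomously or needs an operator."""
    if action.touches_pii or action.risk != "low":
        return Route.HUMAN_REVIEW
    if action.tool not in AUTONOMOUS_TOOLS:
        return Route.HUMAN_REVIEW
    return Route.AUTONOMOUS


def audit_record(action: ProposedAction, route: Route) -> str:
    """Serialize the decision as one JSON line for an append-only audit log."""
    return json.dumps({**asdict(action), "route": route.value}, sort_keys=True)


action = ProposedAction(tool="draft_reply", risk="low", touches_pii=False)
print(audit_record(action, route_action(action)))
```

The gate defaults to human review: anything not explicitly low-risk, free of personal data, and on the allow-list is sent to an operator. That keeps new or unclassified tools from running unattended.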

Performance, reliability, and governance

Performance in an agent or operator workflow hinges on how you measure throughput, latency, and accuracy, and on the reliability of tool integrations. Agents can deliver high throughput and consistency once tuned, but require robust guardrails to prevent unintended actions. Operators contribute resilience by catching edge cases and applying domain expertise, especially in high-risk domains such as finance or healthcare. Reliability practices include end-to-end monitoring, alerting on failure modes, and automatic rollback if an action leads to undesirable results. Governance considerations cover data usage, access controls, explainability, and accountability. Maintaining detailed logs, versioned prompts, and decision rationales supports audits and compliance reviews. As teams mature, they often implement a tiered policy where low-stakes tasks run autonomously under firm constraints, while high-stakes decisions require explicit human reviews. This balance improves both safety and productivity, aligning with best practices in agentic AI and broader AI governance frameworks.
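Automatic rollback can be sketched as "apply the change to a copy, check it, and keep the previous state if the check fails". The example below assumes state fits in a flat dictionary and that a health check is available as a plain function. `apply_with_rollback`, `scale_to_zero`, and `has_capacity` are hypothetical names used only for illustration.

```python
from typing import Callable


def apply_with_rollback(
    state: dict,
    change: Callable[[dict], dict],
    is_healthy: Callable[[dict], bool],
    log: list[str],
) -> dict:
    """Apply `change` to a copy of `state`; keep the old state on failure.

    Every outcome is appended to `log` so the decision can be audited.
    """
    snapshot = dict(state)  # shallow copy is enough for a flat dict
    try:
        candidate = change(dict(state))
    except Exception as exc:
        log.append(f"change failed: {exc!r}; kept previous state")
        return snapshot
    if not is_healthy(candidate):
        log.append("health check failed; rolled back")
        return snapshot
    log.append("applied")
    return candidate


def scale_to_zero(cfg: dict) -> dict:
    # Hypothetical agent action that turns out to be unsafe.
    cfg["replicas"] = 0
    return cfg


def has_capacity(cfg: dict) -> bool:
    return cfg["replicas"] >= 1


log: list[str] = []
state = apply_with_rollback({"replicas": 2}, scale_to_zero, has_capacity, log)
```

Here the unsafe change is rejected, so `state` still has two replicas and the log records the rollback. State that lives in external systems would need compensating actions rather than an in-memory snapshot.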

Choosing between OpenAI agent and operator: decision guide

To decide between an OpenAI agent and an operator, start by mapping your automation goals against risk tolerance and governance needs. If speed and scale are paramount and tasks are well-defined with clear safety rails, an agent-driven approach may provide the best returns. If decisions have significant business or legal implications, or if your domain demands nuanced judgment, an operator-led workflow might be preferable, at least initially. Consider your data sensitivity: agents should minimize exposure and maintain auditable traces of actions. Assess integration complexity and total cost of ownership, including compute, tooling, and human resources. A common best practice is a phased, hybrid rollout: deploy agents for low-risk, repeatable tasks, establish strict escalation criteria, and continuously refine prompts, policies, and tooling based on observed outcomes. Finally, anchor your decision in governance: define accountability, monitoring, and continuous improvement loops to support long-term reliability and compliance.
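The criteria above can be written down as a first-pass checklist. The function below is only an illustrative encoding of this section's guidance, not a validated decision model: the four boolean fields and the three outcome labels are assumptions, and real decisions would also weigh integration complexity and total cost of ownership.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskProfile:
    well_defined: bool            # clear rules and safety rails exist
    high_stakes: bool             # significant business or legal impact
    sensitive_data: bool          # task touches regulated or private data
    needs_nuanced_judgment: bool  # domain calls for human interpretation


def recommend_mode(task: TaskProfile) -> str:
    """Return a starting point: 'operator-led', 'agent-driven', or 'hybrid'."""
    if task.high_stakes or task.needs_nuanced_judgment:
        return "operator-led"
    if task.well_defined and not task.sensitive_data:
        return "agent-driven"
    # Everything else: let an agent do the work, with human review in the loop.
    return "hybrid"


invoice_tagging = TaskProfile(
    well_defined=True,
    high_stakes=False,
    sensitive_data=False,
    needs_nuanced_judgment=False,
)
print(recommend_mode(invoice_tagging))
```

Treat the result as the opening position in a phased rollout. A task that starts as operator-led can move toward hybrid once escalation criteria and audit trails are in place.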

Hybrid patterns and governance strategies

Many organizations adopt hybrid patterns to exploit the strengths of both approaches while mitigating downsides. A practical hybrid model assigns autonomous execution to agents for routine, well-scoped tasks while reserving oversight for critical decisions, unusual data patterns, or new workflows. Governance strategies include explicit escalation protocols, human-in-the-loop reviews for high-risk actions, and regular audits of prompts, tool permissions, and data flows. Design strategies also emphasize clear ownership: assign product, security, and legal leads to oversee agent configurations and operator workflows. Testing regimes should cover unit, integration, and end-to-end scenarios with both agents and operators. Finally, adopt a culture of iterative improvement: monitor performance, gather feedback, and adjust policies to reflect evolving risk profiles and business needs. In 2026, the most resilient teams align AI capabilities with organizational governance to sustain trust and productivity.

Comparison

| Feature | OpenAI agent | Operator |
|---|---|---|
| Definition | Autonomous software agent built on OpenAI models that plans actions and executes tasks with tool calls | Human-guided workflow with explicit oversight and decision-making |
| Decision authority | High degree of automation with guardrails | Human review and escalation for critical decisions |
| Efficiency & speed | High throughput and rapid iterations | Slower due to human-in-the-loop processes |
| Error handling & safety nets | Automated retries, predefined safety checks | Manual verification and escalation paths |
| Data handling & privacy | Model-centric data handling with structured logging | Governed data handling with access controls and privacy reviews |
| Integration complexity | Requires tool adapters and orchestration | Often relies on existing workflows and ticketing systems |
| Best for | Repeatable, scalable automation with governance | Complex or high-stakes decisions requiring oversight |
| Cost considerations | Potentially lower operational costs with automation | Labor costs and potentially slower time-to-value |

Positives

- Potentially faster decision cycles with autonomous agents

- Reduces manual workload and human error

- Scales across tasks with less human intervention

- Improved reproducibility through automated workflows

- Easier to audit via structured logs and prompts

Negatives

- Risk of unintended actions without strong guardrails

- Requires robust governance and monitoring

- Possible hidden compute and integration costs

Hybrid strategies often outperform rigid, single-mode approaches.

OpenAI agents excel at speed and scale, while operators provide essential oversight. A blended model tailored to risk, data sensitivity, and governance needs typically yields the best outcomes for 2026 AI workflows.

Questions & Answers

What is an OpenAI agent?

An OpenAI agent is an autonomous tool built on OpenAI models that can perceive inputs, decide on actions, and execute those actions through tool calls or API interactions. It operates with minimal human intervention, but still benefits from governance, safety rails, and monitoring.

An OpenAI agent acts on its own to complete tasks, with safeguards and logs to keep everything under control.

What does an operator mean in this context?

An operator refers to a human-in-the-loop workflow or a system controlled by humans who review, approve, or override automated actions. Operators provide domain expertise and critical judgment in situations where automated decisions alone may be risky.

An operator means a person oversees the process and steps in when automation hits a tricky decision.

When should I use an OpenAI agent vs an operator?

Use an OpenAI agent for routine, high-volume tasks with clear rules and safe boundaries. Use an operator when decisions involve high stakes, regulatory concerns, or nuanced context that requires human judgment. Many teams start with operators and gradually introduce agents for scalable automation.

If the task is simple and repetitive, agents help. If it’s risky or needs judgment, bring in an operator.

What governance considerations matter most?

Governance should cover data privacy, access controls, explainability, logging, and accountability. Establish escalation protocols, review prompts and policies regularly, and implement incident response plans to address unexpected agent behavior.

Governance means having clear rules, logs, and people responsible for decisions and safety.

How do costs compare between the two approaches?

Costs depend on usage patterns, compute, tooling, and human resource requirements. Agents may reduce ongoing labor costs for large-scale tasks but incur compute and integration costs; operators incur labor costs and may slow down value delivery when handling exceptions.

Costs hinge on how much you automate and how much you rely on humans to supervise it.

Can OpenAI agents and operators be used together effectively?

Yes. A hybrid approach combines autonomous agents for routine work with human oversight for critical decisions, exceptions, and policy updates. This pattern balances speed with safety and accountability, and it’s a common best practice in 2026.

Absolutely. Use agents for the boring parts and humans for the tricky ones.

Key Takeaways

- Define risk tolerance before automating

- Hybrid patterns often yield best results

- Invest in governance, logging, and rollback

- Choose based on use-case maturity and data sensitivity