Data Analysis AI Agent: Automating Insights with Agentic AI

A practical guide to data analysis AI agents: how they automate data tasks, how to govern them, and the steps to design, build, and deploy agentic AI workflows for insights.

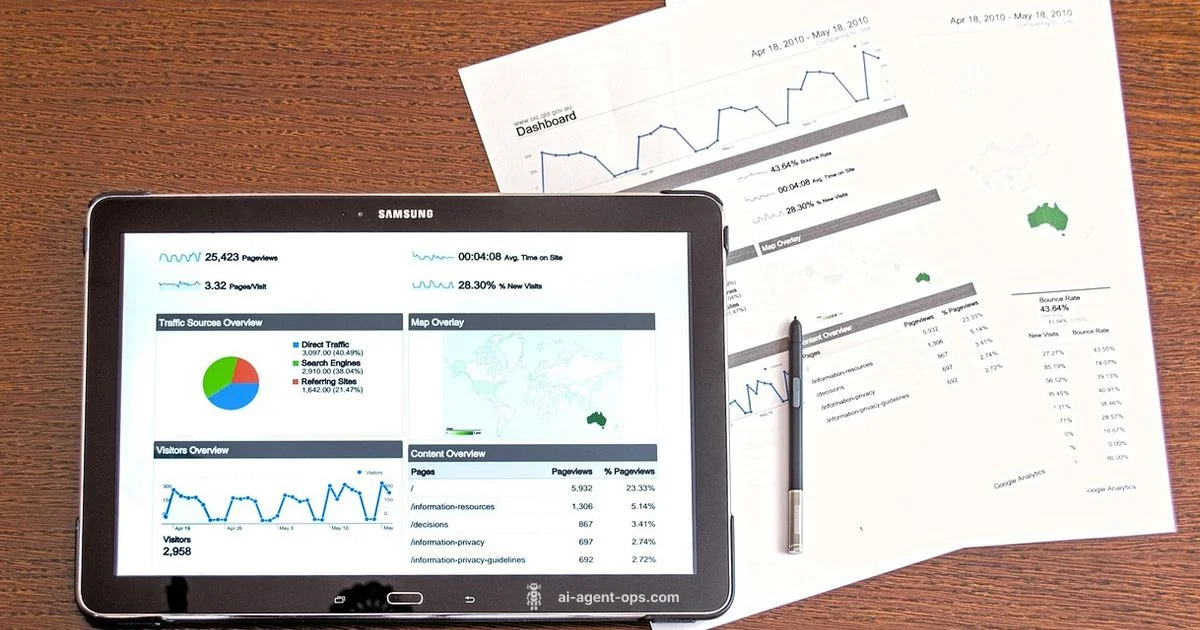


What is a data analysis AI agent and why it matters

A data analysis AI agent is a specialized AI agent that automates end-to-end data tasks, from ingestion and cleaning to statistical analysis and insight generation, within an agentic workflow. For developers, product teams, and business leaders, these agents make analyses faster and more consistent across teams. According to Ai Agent Ops, data analysis AI agents are reshaping how organizations approach data insights by blending data engineering with autonomous decision making. They support recurring analytics, reduce manual toil, and let teams scale data-informed decisions without sacrificing governance. In practice, a data analysis AI agent might connect to data sources, preprocess data, run models, generate summaries, and push results to dashboards or reports, all in service of a single objective. This combination of automation and adaptability is especially valuable in fast-moving environments where data volumes are large and data quality varies. The core value lies in turning raw data into actionable insight with minimal handoffs while keeping an auditable trail of decisions.

How data analysis AI agents work under the hood

At a high level, a data analysis ai agent sits at the intersection of data engineering, analytics, and AI planning. It uses a lightweight memory or state to track context and decisions, a planner to sequence tasks, and a set of tools to perform actions such as querying databases, cleaning data, or generating visualizations. Agents operate with goals and policies that determine when to retry failed tasks, escalate issues, or request human review. The typical toolchain includes data connectors, SQL or API clients, a modeling or statistics engine, and a visualization layer. Governance and security wrappers ensure data access is controlled and auditable. Layered prompts steer the agent toward relevant analyses, while a monitoring layer observes outputs for quality and drift. In practice, many teams adopt a modular pattern that mirrors human workflows: ingestion and validation, exploratory analysis, modeling and evaluation, and reporting and storytelling. AI agents often rely on agent orchestration patterns that blend planning with action execution, enabling end-to-end analytics in an autonomous loop. As Ai Agent Ops notes, this hybrid approach blends rigor with flexibility.
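The loop described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the API of any agent framework: `AnalysisAgent`, the tool names, and the retry policy are hypothetical. A fixed list stands in for the planner's output, a dictionary serves as memory, and a step that keeps failing is escalated for human review instead of being retried indefinitely.

```python
from dataclasses import dataclass, field
from typing import Any, Callable

# A tool reads the shared memory and returns a result (hypothetical signature).
Tool = Callable[[dict[str, Any]], Any]


@dataclass
class AnalysisAgent:
    tools: dict[str, Tool]            # tool registry: name -> callable
    plan: list[str]                   # planner output: ordered tool names
    max_retries: int = 2              # policy: retries before escalating
    memory: dict[str, Any] = field(default_factory=dict)  # context across steps
    log: list[str] = field(default_factory=list)          # simple audit trail

    def run(self) -> dict[str, Any]:
        for step in self.plan:
            # One initial attempt plus max_retries retries.
            for attempt in range(1, self.max_retries + 2):
                try:
                    self.memory[step] = self.tools[step](self.memory)
                    self.log.append(f"{step}: ok (attempt {attempt})")
                    break
                except Exception as exc:  # any tool failure triggers a retry
                    self.log.append(f"{step}: failed (attempt {attempt}): {exc}")
            else:
                # Retries exhausted: stop the run and request human review.
                self.memory["needs_review"] = step
                self.log.append(f"{step}: escalated to human review")
                break
        return self.memory


def query(memory):  # stand-in for a SQL or API call
    return [4.0, 8.0, 6.0]


def summarize(memory):  # stand-in for a statistics engine
    values = memory["query"]
    return sum(values) / len(values)


agent = AnalysisAgent(tools={"query": query, "summarize": summarize},
                      plan=["query", "summarize"])
print(agent.run()["summarize"])  # 6.0
print(agent.log)  # ['query: ok (attempt 1)', 'summarize: ok (attempt 1)']
```

In a real deployment the plan would come from a planner and the tools would wrap database clients, statistics libraries, or charting code; the retry-then-escalate policy is the part this sketch is meant to show.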

Key workflows and patterns

- ETL extended to EDA: The agent performs data extraction, cleaning, transformation, and then automated exploratory data analysis to surface hypotheses.

- Automated modeling and evaluation: The agent selects modeling approaches, runs experiments, and reports on performance and limitations.

- Automated reporting: The agent generates narratives and dashboards, highlighting key insights, caveats, and recommended actions.

- Observability and governance: Each step is logged, tied to data lineage, and auditable for compliance.

- Operational analytics patterns: The agent triggers alerts and recommendations based on thresholds and drift signals.
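As a small illustration of the operational analytics and observability patterns, the sketch below flags drift when the mean of recent values moves more than a chosen number of baseline standard deviations away from the baseline mean, and returns a timestamped entry that can be appended to an audit log. The function name, the default threshold of 3.0, and the sample values are assumptions; this is a deliberately simple heuristic, not a formal statistical test.

```python
import statistics
from datetime import datetime, timezone


def check_drift(baseline: list[float], current: list[float],
                z_threshold: float = 3.0) -> dict:
    """Hypothetical drift check: distance between the current mean and the
    baseline mean, measured in baseline standard deviations."""
    mu = statistics.mean(baseline)
    sigma = statistics.stdev(baseline)  # needs >= 2 points and nonzero spread
    shift = abs(statistics.mean(current) - mu) / sigma
    return {
        "checked_at": datetime.now(timezone.utc).isoformat(),  # for lineage/audit
        "shift_in_sd": round(shift, 2),
        "alert": shift > z_threshold,
    }


audit_log = []
baseline = [10.0, 11.0, 9.0, 10.0, 10.0, 11.0, 9.0]  # made-up reference window
audit_log.append(check_drift(baseline, [10.0, 11.0, 10.0]))
audit_log.append(check_drift(baseline, [14.0, 15.0, 13.0]))
print([(e["shift_in_sd"], e["alert"]) for e in audit_log])
# [(0.41, False), (4.9, True)]
```

Production systems often use dedicated drift metrics per feature; the point here is that every check produces a logged, timestamped record whether or not it raises an alert.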

Building a data analysis AI agent: steps and considerations

1) Define the objective: articulate the business question and success criteria.

2) Map data sources: inventory data assets, access controls, and data quality.

3) Design a task graph: outline the sequence of steps the agent will perform, with decision points and fallback paths.

4) Choose an integration stack: data connectors, analysis tools, modeling capabilities, and visualization targets.

5) Implement guardrails: enforce privacy, bias checks, access controls, and error handling.

6) Test thoroughly: use synthetic and real data to validate outputs, compare results to manual processes, and adjust prompts and tools.

7) Deploy and monitor: start small, publish clear dashboards, and establish feedback loops for continuous improvement.

8) Iterate: periodically review performance, update guardrails, and incorporate new data sources or analytics techniques.
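Step 3 can be made concrete with Python's standard `graphlib` module (Python 3.9+), which orders tasks so that each runs after its dependencies. The task names, the `FALLBACKS` table, and `run_graph` are hypothetical; the sketch shows one way to encode a fallback path: when a task fails and has a registered alternative, the alternative runs instead, and otherwise the failure is raised so a person can decide what to do.

```python
from graphlib import TopologicalSorter

# Hypothetical task graph: each task maps to the set of tasks it depends on.
TASK_GRAPH = {
    "ingest": set(),
    "validate": {"ingest"},
    "explore": {"validate"},
    "model": {"explore"},
    "report": {"explore", "model"},
}

# Hypothetical fallback paths: if a task fails, run this alternative instead.
FALLBACKS = {"model": "baseline_summary"}


def run_graph(tasks: dict, graph: dict, fallbacks: dict) -> list[str]:
    executed = []
    for name in TopologicalSorter(graph).static_order():
        try:
            tasks[name]()
            executed.append(name)
        except Exception:
            alt = fallbacks.get(name)
            if alt is None:
                raise  # decision point: no fallback, surface the failure
            tasks[alt]()
            executed.append(alt)
    return executed


def failing_model():
    raise RuntimeError("model did not converge")


tasks = {name: (lambda: None) for name in TASK_GRAPH}  # no-op stand-ins
tasks["model"] = failing_model
tasks["baseline_summary"] = lambda: None
print(run_graph(tasks, TASK_GRAPH, FALLBACKS))
# ['ingest', 'validate', 'explore', 'baseline_summary', 'report']
```

Keeping the graph as plain data makes it easy to review, version, and reuse across agents, which matters once guardrails and audits are added.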

Benefits and limitations

Benefits include speed, consistency, and the ability to scale analytics across teams without increasing headcount. A data analysis AI agent can take over repetitive tasks and free analysts to focus on higher-value work such as interpretation and strategy. There are limitations, however: data quality and governance requirements still apply, outputs can be biased or misleading if prompts or models are poorly tuned, and results need careful auditing and explainability. Successful deployments emphasize clear objectives, robust data stewardship, and continuous monitoring. In Ai Agent Ops's view, these agents are most effective when they augment human analysts rather than replace them, preserving oversight and accountability across the analytics lifecycle.

Governance, ethics, and trust with data analysis AI agents

Trustworthy deployment rests on data governance, transparency, and reproducibility. Implement access controls, data lineage, and versioning so outputs can be traced to inputs. Require explanations for critical decisions and provide humans with the option to review or override results. Establish performance metrics, incident response plans, and ongoing audits to detect drift or bias. Align the agent's behavior with organizational policies and regulatory requirements. The Ai Agent Ops team emphasizes a phased adoption with incremental governance controls and measurable outcomes to build confidence across stakeholders.
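One way to make outputs traceable to inputs is to store, for every step, a fingerprint of the inputs, the agent version, and whether the result is critical enough to need human sign-off. The record layout, field names, the `publishable` rule, and the example values below are hypothetical, a sketch rather than a prescribed schema. The fingerprint is a SHA-256 hash of the inputs serialized as JSON with sorted keys, so identical data always yields the same value.

```python
import hashlib
import json
from dataclasses import dataclass, replace
from typing import Any, Optional


@dataclass(frozen=True)
class AuditRecord:
    step: str
    input_fingerprint: str          # lineage: hash of the exact inputs used
    agent_version: str              # version of prompts, tools, and models
    output: dict[str, Any]
    critical: bool                  # critical results need human review
    approved_by: Optional[str] = None


def fingerprint(data: Any) -> str:
    # Sorted keys make the hash independent of dictionary ordering.
    canonical = json.dumps(data, sort_keys=True).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()


def record_step(step: str, inputs: Any, output: dict[str, Any],
                version: str, critical: bool = False) -> AuditRecord:
    return AuditRecord(step, fingerprint(inputs), version, output, critical)


def publishable(record: AuditRecord) -> bool:
    # Non-critical results publish directly; critical ones need an approver.
    return not record.critical or record.approved_by is not None


# Illustrative, made-up inputs and outputs.
rec = record_step("churn_forecast", {"table": "customers", "rows": 1200},
                  {"churn_rate": 0.07}, version="v1.3", critical=True)
print(publishable(rec))                      # False: awaiting review
approved = replace(rec, approved_by="analyst-on-call")
print(publishable(approved))                 # True
```

Because records are immutable, an approval produces a new record rather than silently editing the old one, which keeps the history auditable.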

Tooling landscape: common platforms and integration patterns

Data analysis AI agents rely on three broad tool categories: data connectors and ETL/ELT platforms to consolidate and cleanse data; analytics engines and statistical tools to run analyses; and visualization or reporting layers to communicate results. On the orchestration side, agent frameworks provide the scaffolding for planning, memory, and tool usage, enabling reusable task graphs and modular components. Integration patterns range from batch-oriented pipelines to streaming analytics for near-real-time needs. Open architectures and standards make upgrades and governance easier. The trend toward agent orchestration, modular tooling, and explainable analytics is reshaping how organizations implement data-driven decisions at scale.
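The difference between batch and streaming integration can be illustrated with a running average. In this sketch (function names are hypothetical), the batch version waits for the whole dataset, while the streaming version keeps a small running state, updates it with each micro-batch, and emits a fresh result every time, which is what near-real-time dashboards typically need.

```python
from typing import Iterable, Iterator


def batch_mean(values: list[float]) -> float:
    # Batch: the full dataset is available before the analysis runs.
    return sum(values) / len(values)


def streaming_means(batches: Iterable[list[float]]) -> Iterator[float]:
    # Streaming: keep running totals and emit an updated mean per micro-batch.
    count, total = 0, 0.0
    for batch in batches:
        count += len(batch)
        total += sum(batch)
        if count:  # skip output until at least one value has arrived
            yield total / count


print(batch_mean([2.0, 4.0, 6.0, 8.0]))                  # 5.0
print(list(streaming_means([[2.0, 4.0], [6.0, 8.0]])))   # [3.0, 5.0]
```

Once every micro-batch has arrived, the streaming result matches the batch result; the trade-off is that the streaming path must keep its state small and update it incrementally.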

Getting started: a minimal practical blueprint

Start with a narrow objective and a small, trusted data source. Define the agent's goals, data inputs, and expected outputs. Draft a simple task graph that covers data ingestion, basic cleaning, a first exploratory analysis, and a final report. Select a minimal set of tools that you can maintain and audit, and establish guardrails for privacy and error handling. Implement a testing plan with synthetic data and a rollback path. Launch with a non-production dataset and collect feedback from stakeholders. Iterate by expanding data sources and refining prompts and tool choices as you learn what works best in your domain. Following this approach, teams can begin to capture the benefits of data analysis AI agents while maintaining governance and control.
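The blueprint can be sketched end to end with only the Python standard library: ingest a CSV, apply basic cleaning, run a first exploratory summary, and produce a short report that states its caveats. The column names, the synthetic rows, and the function names are made up for illustration, in keeping with the advice to test on synthetic data before touching production sources.

```python
import csv
import io
import statistics

# Synthetic input (made-up values), including a blank and a non-numeric revenue.
SYNTHETIC_CSV = """region,revenue
north,120
south,
north,80
west,abc
south,90
"""


def ingest(text: str) -> list[dict[str, str]]:
    # Stand-in for a connector: parse CSV text into row dictionaries.
    return list(csv.DictReader(io.StringIO(text)))


def clean(rows: list[dict[str, str]]) -> tuple[list[dict], int]:
    # Basic cleaning: keep rows whose revenue parses as a number.
    kept = []
    for row in rows:
        try:
            kept.append({"region": row["region"], "revenue": float(row["revenue"])})
        except ValueError:
            continue  # blank or non-numeric revenue
    return kept, len(rows) - len(kept)


def explore(rows: list[dict]) -> dict[str, float]:
    # First exploratory pass: mean revenue per region.
    by_region: dict[str, list[float]] = {}
    for row in rows:
        by_region.setdefault(row["region"], []).append(row["revenue"])
    return {region: statistics.mean(vals) for region, vals in sorted(by_region.items())}


def report(summary: dict[str, float], dropped: int) -> str:
    # Final report: findings plus an explicit data-quality caveat.
    lines = [f"{region}: mean revenue {value:.1f}" for region, value in summary.items()]
    lines.append(f"Caveat: {dropped} row(s) dropped during cleaning.")
    return "\n".join(lines)


rows, dropped = clean(ingest(SYNTHETIC_CSV))
print(report(explore(rows), dropped))
# north: mean revenue 100.0
# south: mean revenue 90.0
# Caveat: 2 row(s) dropped during cleaning.
```

Note that the "west" region vanishes from the summary because its only row failed cleaning; the caveat line is what tells a reader that something was excluded.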

Questions & Answers

What is a data analysis AI agent?

A data analysis AI agent is an autonomous system that performs data tasks such as ingestion, cleaning, analysis, and reporting. It uses AI planning to sequence actions and produce insights with auditable provenance.

A data analysis AI agent is an autonomous system that handles data tasks and generates insights with traceable steps.

How does a data analysis AI agent differ from a traditional data science workflow?

Traditional workflows rely on manual steps and separate tools. An AI agent combines planning, automation, and tooling to perform end-to-end analytics with minimal human intervention, while still allowing human oversight.

Traditional analytics rely on manual steps. The AI agent automates those steps with built-in planning, though humans can still review results.

What are the core components of a data analysis AI agent?

Key components include an objective-driven planner, a memory state to track context, a toolset for data access and processing, and a presentation layer for insights.

The core is a planner, memory, tools, and a reporting surface.

What governance practices are recommended for data analysis AI agents?

Establish data access controls, maintain data lineage, enable explainability, and monitor outputs for drift. Use audit logs and versioning to ensure reproducibility.

Use strict access controls, data lineage, and audits to keep analytics trustworthy.

Can data analysis AI agents be used for real-time analytics?

Yes. For certain workloads, agents can participate in near-real-time analytics if the data sources and tools support streaming or scheduled updates. They should be configured with latency targets and fallback paths.

They can handle near-real-time analytics if the setup supports streaming and low latency.
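A latency target with a fallback path can be sketched with `concurrent.futures` from the Python standard library: the agent waits up to the target for a fresh result and otherwise returns the last known good value, labeled as such. `answer_within`, the 0.1-second target, and the cached value are hypothetical. A Python thread cannot be cancelled once it is running, so in this sketch the slow query still finishes in the background.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from concurrent.futures import TimeoutError as FutureTimeout

_executor = ThreadPoolExecutor(max_workers=2)


def answer_within(task, latency_target_s: float, fallback):
    """Return (result, "fresh") if task() finishes within the latency target,
    otherwise (fallback, "fallback")."""
    future = _executor.submit(task)
    try:
        return future.result(timeout=latency_target_s), "fresh"
    except FutureTimeout:
        return fallback, "fallback"


def slow_query():
    time.sleep(0.5)  # simulate a lagging stream source
    return {"orders_last_minute": 17}


cached = {"orders_last_minute": 15}  # last known good value (made up)
print(answer_within(slow_query, latency_target_s=0.1, fallback=cached))
# ({'orders_last_minute': 15}, 'fallback')
print(answer_within(lambda: {"orders_last_minute": 16}, 1.0, cached))
# ({'orders_last_minute': 16}, 'fresh')
```

Returning the "fresh" or "fallback" label alongside the value lets dashboards show when a number is stale instead of passing it off as current.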

What are common pitfalls when deploying data analysis AI agents?

Common pitfalls include assuming data quality is perfect, underestimating governance needs, over-relying on prompts without guardrails, and insufficient monitoring after deployment.

Watch out for data quality gaps and lack of governance and monitoring.

What skills are needed to build a data analysis AI agent?

Essential skills include data engineering, analytics, prompt design, and knowledge of AI agent frameworks. Cross-disciplinary collaboration between data scientists, engineers, and product teams is important.

It helps to have data engineering and analytics skills plus knowledge of AI agent tooling.

Key Takeaways

- Define the objective before building the agent

- Design a modular, reusable task graph

- Implement governance and auditing from day one

- Start small and iterate with real data

- Prioritize reproducibility and explainability