Logical Agents in AI Tutorialspoint: A Practical Guide

A comprehensive tutorial on logical agents in artificial intelligence, covering knowledge representation, inference, architectures, comparisons with learning agents, and practical steps to build symbolic AI agents.

Logical agents in artificial intelligence are systems that use formal logic to represent knowledge and reason about actions to achieve goals.

What are logical agents in artificial intelligence?

Logical agents are symbolic AI systems that represent knowledge in formal logic and use inference to decide which actions will reach their stated goals. Tutorials on the topic, such as those on Tutorialspoint, show how a structured knowledge base, a set of inference rules, and a planning component work together to produce verifiable decisions. According to Ai Agent Ops, the strength of these agents lies in explainability, traceability, and the ability to audit every step of a decision. A typical agent operates through four layers: a knowledge base that stores facts and rules, an inference engine that derives new facts, a planner that sequences actions, and a runtime component that executes those actions in the world. This design makes symbolic reasoning transparent and easier to debug, especially in domains where safety and compliance matter.

To get started, think of a logical agent as a reasoning machine that converts goals into a plan by repeatedly applying rules to the current knowledge state. It does not rely on statistical patterns alone but uses well-defined relationships to determine what to do next. This makes it a natural teaching aid for understanding how logical reasoning can be turned into automated behavior while ensuring the system can justify its choices.

Core concepts: knowledge representation and inference

At the heart of a logical agent is how knowledge is represented and how it is manipulated. Knowledge is typically expressed in propositional or first-order logic, sometimes enriched with description logics for more expressive domains. Rules are written as implications: if-then structures that connect observed facts to new conclusions. Inference mechanisms, including forward chaining, backward chaining, and resolution, enable the agent to derive new facts from the knowledge base. Forward chaining starts from known facts and applies rules to infer consequences, while backward chaining begins with goals and works backward to identify supporting facts. Horn clauses, a restricted form in which each clause has at most one positive literal, keep inference efficient: for propositional Horn clauses, entailment can be decided in time linear in the size of the knowledge base. As Tutorialspoint materials illustrate, a robust knowledge base often uses a modular design with ontology-like structures that organize concepts and relationships. The combination of representation and inference makes reasoning traceable and auditable, aligning with best practices advocated by Ai Agent Ops.
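To make the two chaining strategies concrete, here is a minimal sketch over propositional Horn rules. The weather and driving facts, the `RULES` list, and both function names are hypothetical and chosen only for illustration; real systems would add variables and unification for first-order rules.

```python
# Each Horn rule is (premises, conclusion): the conclusion follows when all premises hold.
RULES = [
    ({"rain"}, "wet_ground"),
    ({"wet_ground", "freezing"}, "icy_road"),
    ({"icy_road"}, "drive_slowly"),
]
FACTS = {"rain", "freezing"}


def forward_chain(facts, rules):
    """Data-driven: apply rules repeatedly until no new fact appears."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            if premises <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


def backward_chain(goal, facts, rules, visiting=frozenset()):
    """Goal-driven: prove `goal` by recursively proving the premises of a matching rule."""
    if goal in facts:
        return True
    if goal in visiting:  # guard against infinite recursion on cyclic rules
        return False
    for premises, conclusion in rules:
        if conclusion == goal and all(
            backward_chain(p, facts, rules, visiting | {goal}) for p in premises
        ):
            return True
    return False


derived = forward_chain(FACTS, RULES)
print(sorted(derived))
print(backward_chain("drive_slowly", FACTS, RULES))  # provable from the facts
print(backward_chain("snow", FACTS, RULES))          # no fact or rule supports it
```

Forward chaining computes everything that follows from the facts, which suits a knowledge base that must stay current. Backward chaining only explores rules relevant to the query, which tends to be cheaper when a single goal is of interest.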

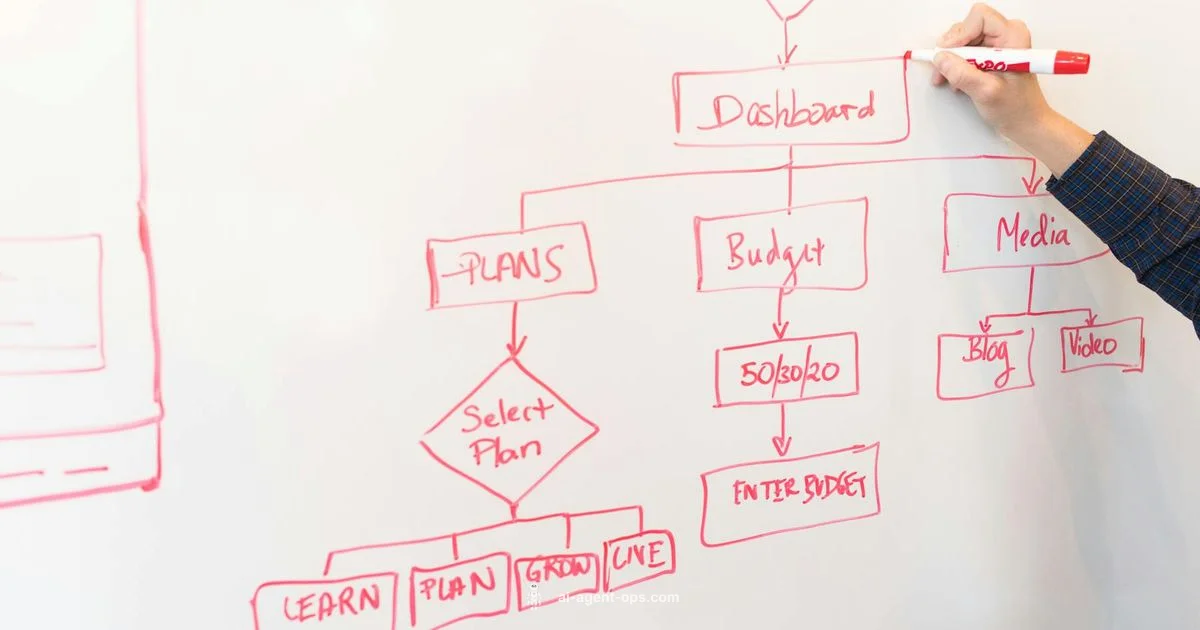

Architecture: knowledge base, reasoning engine, planner, and actuator

A symbolic agent typically comprises four core components. The knowledge base stores facts, beliefs, and rules that describe the domain. The reasoning engine applies logical rules to the knowledge base to derive new information. The planner takes derived facts and goals to generate a sequence of actions or plans, often using classical planning techniques like STRIPS or more modern symbolic planners. The actuator executes the planned actions in the environment, while the agent updates its knowledge base with new observations. Interfaces between these components are crucial: the reasoning engine must produce concise explanations for each inference, the planner must produce executable steps with preconditions and effects, and the actuator must handle uncertainties gracefully. Designers frequently adopt a modular approach to allow incremental testing and substitution of different logic formalisms or planners as needs evolve. This architecture supports clear separation of concerns and easier troubleshooting when system behavior is questioned.
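The four components can be sketched as small classes wired into a single sense-reason-plan-act step. Everything here is an assumption made for illustration: the thermostat domain, the class and method names, the lookup-table planner (a real planner would search over preconditions and effects), and the actuator that simply reports a canned observation.

```python
from dataclasses import dataclass, field


@dataclass
class KnowledgeBase:
    facts: set = field(default_factory=set)
    rules: list = field(default_factory=list)  # (premises, conclusion) pairs

    def tell(self, observation):
        self.facts.add(observation)


class ReasoningEngine:
    def infer(self, kb):
        """Forward-chain to a fixpoint and return one explanation per new fact."""
        explanations = []
        changed = True
        while changed:
            changed = False
            for premises, conclusion in kb.rules:
                if premises <= kb.facts and conclusion not in kb.facts:
                    kb.facts.add(conclusion)
                    explanations.append(f"{conclusion} because {sorted(premises)}")
                    changed = True
        return explanations


class Planner:
    # Hypothetical table: (derived fact, goal) -> action sequence.
    PLAYBOOK = {("needs_heat", "comfortable"): ["turn_on_heater"]}

    def plan(self, kb, goal):
        for (fact, target), actions in self.PLAYBOOK.items():
            if target == goal and fact in kb.facts:
                return list(actions)
        return []


class Actuator:
    def execute(self, action):
        """Stand-in for real-world effects: report an observation for the action."""
        return {"turn_on_heater": "heater_on"}.get(action, "unknown_effect")


def run_step(kb, goal, percepts):
    """One agent cycle: observe, infer, plan, act, then record the results."""
    for p in percepts:
        kb.tell(p)
    explanations = ReasoningEngine().infer(kb)
    actions = Planner().plan(kb, goal)
    for action in actions:
        kb.tell(Actuator().execute(action))
    return explanations, actions


kb = KnowledgeBase(rules=[({"temp_low", "occupied"}, "needs_heat")])
explanations, actions = run_step(kb, "comfortable", ["temp_low", "occupied"])
print(explanations)
print(actions)
print(sorted(kb.facts))
```

Because each component exposes one narrow method, any of them can be swapped out (for example, replacing the lookup planner with a search-based one) without touching the others.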

Inference methods and planning for action selection

Logical agents rely on inference to move from knowledge to action. Forward chaining uses known facts to trigger rules and generate consequences, which is useful for real-time reasoning and maintaining a growing knowledge base. Backward chaining starts from a goal and searches for supporting facts, making it efficient for goal-driven tasks. Planning in this context translates goals into sequences of actions with preconditions and effects, often using classical planning models like STRIPS or modern logic-based planners. An important aspect is the integration between inference and planning: derived facts inform plan selection, while plans constrain what can be inferred next. In Tutorialspoint discussions, the emphasis is on ensuring plans are verifiable and that each step is justifiable. When combined with a well-structured knowledge base, these methods yield robust and explainable agent behavior that can be audited by humans or regulators.
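A STRIPS-style action can be modeled as a set of preconditions plus an add list and a delete list, and a plan can then be found by searching over states. The sketch below uses plain breadth-first search, which is not what production planners do but is easy to verify by hand. The door-and-key domain and all identifiers are hypothetical.

```python
from collections import deque
from typing import NamedTuple


class Action(NamedTuple):
    name: str
    preconditions: frozenset  # facts that must hold before the action
    add: frozenset            # facts the action makes true
    delete: frozenset         # facts the action makes false


def plan(initial, goal, actions):
    """Breadth-first search over states; returns a shortest action sequence or None."""
    start = frozenset(initial)
    frontier = deque([(start, [])])
    seen = {start}
    while frontier:
        state, steps = frontier.popleft()
        if goal <= state:
            return steps
        for a in actions:
            if a.preconditions <= state:
                nxt = (state - a.delete) | a.add
                if nxt not in seen:
                    seen.add(nxt)
                    frontier.append((nxt, steps + [a.name]))
    return None


ACTIONS = [
    Action("pick_up_key", frozenset({"at_door", "key_on_floor"}),
           frozenset({"has_key"}), frozenset({"key_on_floor"})),
    Action("unlock_door", frozenset({"at_door", "has_key", "door_locked"}),
           frozenset({"door_unlocked"}), frozenset({"door_locked"})),
    Action("walk_through", frozenset({"at_door", "door_unlocked"}),
           frozenset({"inside"}), frozenset({"at_door"})),
]

initial = {"at_door", "key_on_floor", "door_locked"}
print(plan(initial, frozenset({"inside"}), ACTIONS))
```

Each step in the returned plan can be justified by pointing at the preconditions that held in the state where it was applied, which is what makes this style of planning straightforward to audit.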

Symbolic agents vs statistical approaches

A common question is how symbolic logical agents compare to statistical approaches such as machine learning and reinforcement learning. Symbolic agents excel in explainability, determinism, and domains where rules are clear and safety matters. They struggle with perception, noisy data, and learning from raw inputs at scale. In contrast, statistical AI excels at pattern recognition and adaptation from data but often sacrifices interpretability. Hybrid architectures that combine symbolic reasoning with learning components are increasingly popular, offering the best of both worlds. Tutorialspoint outlines how to start with a symbolic core and gradually integrate learning-based perception or probabilistic reasoning to handle uncertainty. Ai Agent Ops notes that hybrid systems can deliver predictable behavior while still improving through experience, as long as the symbolic layer remains central for guarantees and accountability.
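One way to picture a hybrid design is a learned perception component that emits label confidences, a threshold that turns confident labels into symbolic facts, and explicit rules that make the final decision. In the sketch below the classifier is replaced by a hard-coded stub, and the labels, the threshold value, and the rules are all hypothetical.

```python
def perceive(frame_id):
    """Hypothetical stand-in for a learned classifier: returns label confidences."""
    fake_outputs = {
        "frame_1": {"pedestrian": 0.97, "green_light": 0.91},
        "frame_2": {"pedestrian": 0.40, "green_light": 0.95},
    }
    return fake_outputs[frame_id]


def to_facts(confidences, threshold=0.8):
    """Only labels at or above the threshold become symbolic facts."""
    return {label for label, p in confidences.items() if p >= threshold}


def decide(facts):
    """Symbolic layer: explicit, ordered rules keep the final decision auditable."""
    if "pedestrian" in facts:
        return "stop", "rule: pedestrian detected -> stop"
    if "green_light" in facts:
        return "go", "rule: green light and no pedestrian -> go"
    return "stop", "rule: default to stop when nothing is confirmed"


for frame in ("frame_1", "frame_2"):
    action, reason = decide(to_facts(perceive(frame)))
    print(frame, action, reason)
```

Note that the threshold silently discards the 0.40 pedestrian detection in the second frame. In a safety-relevant setting that choice would itself need justification, which illustrates why adding a statistical layer complicates the guarantees the symbolic core can offer.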

Building a simple symbolic agent: a practical outline

Begin with a focused domain where the rules are clear and the knowledge base manageable. Step one is to define the vocabulary and outline the domain ontology. Step two is to encode the domain with a formal logic suitable for your use case, choosing rules that map directly to observable outcomes. Step three is to implement an inference engine that can apply these rules to derive new facts from known information. Step four is to add a planning component that sequences actions to achieve goals, including any necessary preconditions. Step five is to test the agent in representative scenarios, inspect its explanations for decisions, and refine rules to eliminate inconsistencies. Finally, integrate basic monitoring to capture errors and maintain a living knowledge base. This practical outline helps developers build small, verifiable symbolic agents before expanding to more complex domains.
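The outline above can be tried on a toy domain. The sketch below covers steps one to three and step five: it names its rules, records which rule produced each derived fact, and prints a justification tree for a conclusion. The planning step is left out to keep it short. The loan-screening vocabulary and the rule names `R1` and `R2` are hypothetical.

```python
# Steps 1-2: vocabulary and named rules for a toy screening domain.
RULES = {
    "R1": ({"income_verified", "low_debt"}, "creditworthy"),
    "R2": ({"creditworthy", "id_checked"}, "approve"),
}


def infer(facts, rules):
    """Step 3: forward chaining that records which rule produced each derived fact."""
    known = set(facts)
    why = {}  # derived fact -> (rule name, sorted premises)
    changed = True
    while changed:
        changed = False
        for name, (premises, conclusion) in rules.items():
            if premises <= known and conclusion not in known:
                known.add(conclusion)
                why[conclusion] = (name, sorted(premises))
                changed = True
    return known, why


def explain(fact, why, depth=0):
    """Step 5: return the justification tree for `fact` as indented lines."""
    indent = "  " * depth
    if fact not in why:
        return [f"{indent}{fact} (given)"]
    rule, premises = why[fact]
    lines = [f"{indent}{fact} (by {rule})"]
    for p in premises:
        lines.extend(explain(p, why, depth + 1))
    return lines


known, why = infer({"income_verified", "low_debt", "id_checked"}, RULES)
print("\n".join(explain("approve", why)))
```

Inspecting trees like this during testing makes it easier to spot a rule that fires for the wrong reason before the rule base grows.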

Challenges and limitations

Despite their advantages, symbolic agents face challenges in real-world deployments. Computational complexity can limit scalability, especially in rich domains with many interacting rules, and expressive formalisms raise decidability concerns: entailment in full first-order logic is only semidecidable, so a query about a sentence that is not entailed may never terminate. The frame problem, which asks how to represent what remains unchanged when actions occur, demands careful modeling. Incomplete or inconsistent knowledge can lead to invalid inferences or unsafe plans. Handling uncertainty often requires probabilistic extensions or hybrid layers, which can complicate the architecture and reduce explainability if not carefully managed. Moreover, maintaining a large rule base requires disciplined updates and version control to avoid rule interactions that produce contradictory conclusions. Understanding these limitations helps teams set realistic expectations and design robust symbolic agents that remain useful even when perfect knowledge is unavailable.

Best practices and deployment considerations

To maximize reliability and maintainability, adopt a clear knowledge engineering process: define a precise domain and scope, build a minimal viable knowledge base, and incrementally add rules with testable expectations. Use modular ontologies to keep knowledge organized and scalable. Prioritize explainability by documenting the premises behind every inference and the rationale behind each planned action. Regularly audit the knowledge base for inconsistencies, and consider conservative defaults when rules produce uncertain outcomes. When deploying, establish watchdogs and monitoring dashboards to detect anomalous reasoning early. Finally, complement symbolic agents with learning components only where it adds value, ensuring that the core decision logic remains transparent and auditable. This approach strikes a balance between rigor and practicality for production systems.
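A basic consistency audit can be sketched by deriving everything the rules allow and flagging any atom that ends up both asserted and negated. The sketch assumes literals are strings with a leading `~` for negation, which is a convention chosen here and not a standard. The plant-control facts and the `safe_to_act` policy (refuse to act whenever any contradiction exists) are likewise hypothetical.

```python
def negate(literal):
    """A leading '~' marks negation (a convention assumed for this sketch)."""
    return literal[1:] if literal.startswith("~") else "~" + literal


def closure(facts, rules):
    """Forward-chain to a fixpoint over (premises, conclusion) rules."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            if premises <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


def audit(facts, rules):
    """Return the atoms that are derived both positively and negatively."""
    known = closure(facts, rules)
    return sorted(a for a in known if not a.startswith("~") and negate(a) in known)


def safe_to_act(action, facts, rules):
    """Conservative default: act only if the action is derived and the audit is clean."""
    return action in closure(facts, rules) and not audit(facts, rules)


RULES = [
    ({"valve_open"}, "flow"),
    ({"pump_off"}, "~flow"),
    ({"flow"}, "start_mixer"),
]

print(audit({"valve_open", "pump_off"}, RULES))
print(safe_to_act("start_mixer", {"valve_open", "pump_off"}, RULES))
print(safe_to_act("start_mixer", {"valve_open"}, RULES))
```

Running an audit like this in continuous integration, against a set of representative fact combinations, is one inexpensive way to catch rule interactions before deployment.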

Next steps for learners and resources

For learners aiming to master logical agents, start with foundational tutorials and then move to practical exercises that reinforce the concepts of knowledge representation and inference. Leverage tutorials from reputable sources, including the tutorials you may find on Tutorialspoint, to solidify your understanding of logic formalisms and reasoning strategies. Practice by building tiny agents in toy domains, gradually increasing rule complexity and planning depth. Ai Agent Ops recommends pairing theoretical study with hands-on projects that emphasize explainability and verifiability, so you can demonstrate how an agent reaches its conclusions and how you would validate those conclusions in real-world settings.

Questions & Answers

What is a logical agent in artificial intelligence?

A logical agent is a symbolic AI system that uses formal logic to represent knowledge, reason about facts and goals, and plan actions to achieve those goals. Its decisions are based on explicit rules and premises, allowing for clear explanations of its behavior.

A logical agent uses rules and logic to decide what to do next, so its decisions can be explained step by step.

How do logical agents differ from learning agents?

Logical agents rely on predefined rules and a structured knowledge base for reasoning, emphasizing explainability and determinism. Learning agents derive patterns from data and improve through experience, often trading off interpretability for adaptability.

Logical agents use fixed rules for decisions, while learning agents adapt from data over time.

What formalisms are common in logical agents?

Common formalisms include propositional logic, first-order logic, and Horn clauses. These enable structured representation of facts and rules, along with inference mechanisms like forward and backward chaining.

Propositional and first-order logic with rules are typical for symbolic reasoning.

Can symbolic logic scale to real-world tasks?

Symbolic logic scales well for well-defined domains but can struggle with noisy data and very large rule bases. Hybrid approaches that combine logic with learning can improve scalability while preserving explainability.

Symbolic logic works well in clear domains, but may need hybrid approaches for large real-world tasks.

What are best practices for building a symbolic agent?

Start with a focused domain, define a concise ontology, encode rules carefully, verify inferential steps, and maintain a modular architecture. Prioritize explainability and regular auditing of the knowledge base.

Begin with a small domain, keep rules simple, and ensure you can explain every decision.

Key Takeaways

- Define a clear knowledge base before building a symbolic agent

- Use forward or backward chaining to infer actions

- Differentiate symbolic agents from statistical learning agents

- Plan sequences of actions using logic-based planners

- Beware of scalability and incomplete knowledge