AI Agent Explanation: A Practical Guide for Explainable AI

Learn how AI agent explanation clarifies decisions, boosts trust, and supports governance, with definitions, styles, and evaluation methods for transparent AI agents.

AI agent explanation is a method of clarifying how an AI agent makes decisions by describing its inputs, internal processes, and resulting actions.

What AI agent explanation is and why it matters

AI agent explanation is the practice of making clear how an AI agent arrives at its decisions. It describes what data the agent used, what internal reasoning steps or rules it followed, and why it took the chosen action. This clarity is essential for developers, product teams, and business leaders who rely on AI agents to automate tasks, coordinate workflows, and operate in regulated environments. A good AI agent explanation helps users trust the system, facilitates debugging, and supports governance by enabling auditability and accountability. The Ai Agent Ops team emphasizes that explanations should be tailored to the audience, not just generated as a technical artifact; end users, operators, and executives all need different levels of detail. At its core, an explanation answers the question: what did the agent see, think, and do, and why did it choose this path? In practice, effective explanations begin with a clear purpose and a defined audience. They blend technical detail with human-friendly language and include caveats about uncertainty.

The spectrum of explainability in AI agents

Explainability ranges from simple, high-level summaries to detailed, traceable reasoning. For some stakeholders, a concise, human-readable rationale suffices; for others, a full chain of thought or step-by-step justification may be necessary. There are tradeoffs: richer explanations can expose sensitive data or reveal vulnerabilities, while sparse explanations may leave users uncertain. When designing AI agent explanations, teams should define the audience first and select an explanation style that preserves usefulness without compromising safety or performance. Common styles include rule-based summaries, feature attributions, decision logs, and narrative justifications. Each style has benefits and limits, and they can be combined to serve different needs in real time or during post hoc analysis. In addition, organizations should consider how explanations affect latency, storage, and privacy, especially in edge deployments or regulated industries. Ai Agent Ops recommends iterative testing with real users to validate usefulness.
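One way to serve several audiences from a single decision is to capture the explanation once and render it at different levels of detail. The sketch below is a minimal illustration, not a prescribed design: the `ExplanationRecord` class, the `render` function, and the three audience labels are hypothetical names chosen for this example.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class ExplanationRecord:
    """One decision, captured once and rendered differently per audience."""
    action: str
    summary: str                                     # one-sentence rationale
    steps: List[str] = field(default_factory=list)   # ordered decision log
    confidence: Optional[float] = None               # optional, in [0, 1]


def render(record: ExplanationRecord, audience: str) -> str:
    """Return the explanation at the level of detail the audience needs."""
    if audience == "end_user":
        # Concise, human-readable rationale only.
        return f"{record.action}: {record.summary}"
    if audience == "executive":
        # Summary plus a confidence figure; no step-level detail.
        conf = "unknown" if record.confidence is None else f"{record.confidence:.0%}"
        return f"{record.action} (confidence {conf}): {record.summary}"
    if audience == "operator":
        # Full step-by-step trail for debugging and audits.
        lines = [f"{record.action}: {record.summary}"]
        lines += [f"  {i}. {step}" for i, step in enumerate(record.steps, start=1)]
        return "\n".join(lines)
    raise ValueError(f"unknown audience: {audience!r}")
```

Keeping the stored record separate from its rendering means the detailed trail can stay behind access controls while end users see only the summary.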

Techniques for building effective AI agent explanations

There are several practical approaches to explainability:

- Feature attributions: highlight which inputs most influenced a decision.

- Decision logs: record the steps or rules used by the agent.

- Case-based narratives: describe a concrete example and the reasoning path.

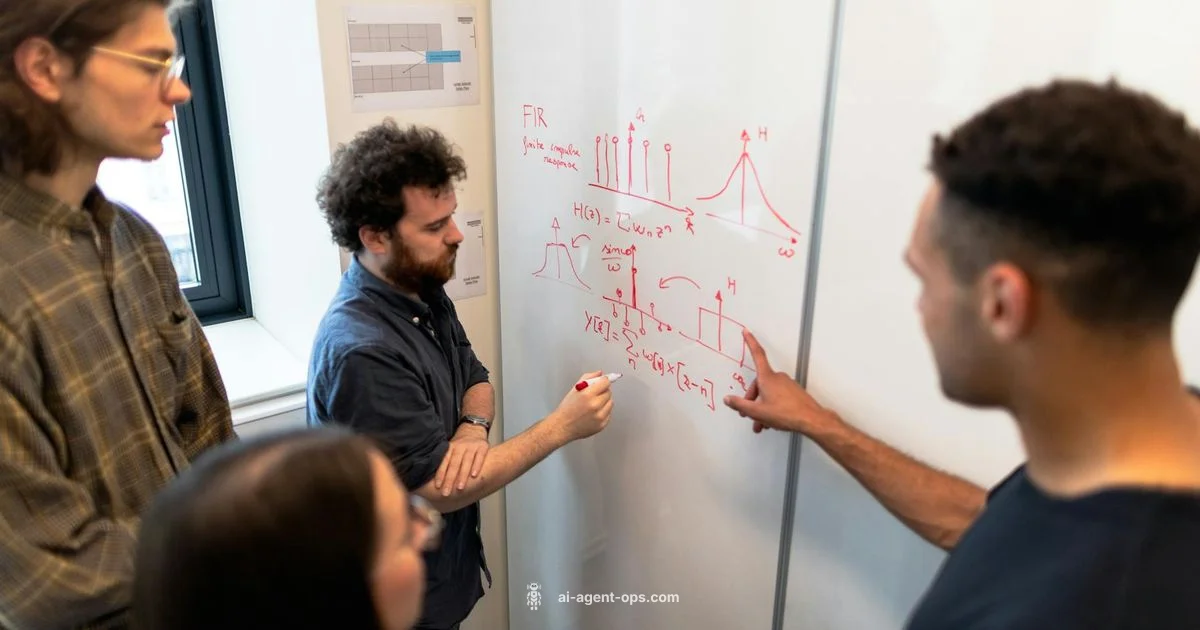

- Visual dashboards: present concise, digestible summaries of decisions and outcomes.

- Post hoc rationales: provide rationale after a decision, while noting limitations.

Keep explanations faithful to the model; avoid unfounded or overly confident statements. The right mix depends on the agent type, data sensitivity, and regulatory requirements. Ai Agent Ops emphasizes fidelity over flair, ensuring explanations reflect actual processes rather than desired narratives. When possible, provide both user-friendly summaries and technical trails to support advanced analysis.
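As an illustration of feature attribution, the sketch below handles the simplest case: an agent whose decision score is a weighted sum of its inputs. For such a linear score, each feature's contribution is its weight times its distance from a baseline, so the attribution is exact. That does not hold for nonlinear models, which need dedicated attribution methods. The function name and the example feature names are assumptions made for this example.

```python
def linear_attributions(weights, inputs, baseline=None):
    """Rank features by their contribution to a linear score.

    Assumes score = sum(weights[f] * inputs[f]). The contribution of
    feature f relative to a baseline is weights[f] * (inputs[f] - baseline[f]).
    This is exact only for linear scoring; it is not a general method.
    """
    if baseline is None:
        baseline = {name: 0.0 for name in weights}
    contributions = {
        name: weights[name] * (inputs[name] - baseline[name])
        for name in weights
    }
    # Largest absolute influence first, keeping the sign so readers can
    # see whether a feature pushed the score up or down.
    return sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
```

With weights `{"error_count": 0.5, "account_age_years": -0.1, "open_tickets": 0.3}` and inputs `{"error_count": 4, "account_age_years": 2, "open_tickets": 1}`, the ranking comes out as `error_count`, then `open_tickets`, then `account_age_years`.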

Practical guidelines for constructing explainable agents

- Start with the users and their goals; define what needs to be explained and to whom.

- Choose one or two explanation styles per scenario to avoid cognitive overload.

- Build lightweight, observable explanation hooks into the agent's decision pipeline.

- Use visualizations and concise language; avoid jargon where possible.

- Validate explanations with real users and adjust based on feedback.

- Document the limitations; explain what is not known or uncertain.

- Align explainability with governance, compliance, and risk management.

This approach makes AI agent explanation actionable rather than theoretical, helping teams deploy agents with confidence.
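A lightweight explanation hook can be as small as a decorator around each decision step. The sketch below assumes a convention in which every step returns an `(action, rationale)` pair; the `explained` decorator, the in-memory `DECISION_LOG`, and the `choose_step` rule are all hypothetical and stand in for whatever the agent's pipeline actually uses.

```python
import functools
import time

DECISION_LOG = []  # in-memory for this sketch; a real system would persist it


def explained(step_name):
    """Decorator that records what a decision step saw, did, and why."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            # The wrapped step must return (action, rationale).
            action, rationale = func(*args, **kwargs)
            DECISION_LOG.append({
                "step": step_name,
                "inputs": {"args": args, "kwargs": kwargs},
                "action": action,
                "rationale": rationale,
                "timestamp": time.time(),
            })
            return action  # callers see only the action
        return wrapper
    return decorator


@explained("choose_troubleshooting_step")
def choose_step(issue, past_ticket_count):
    """Toy rule: escalate repeated connectivity problems."""
    if "offline" in issue.lower() and past_ticket_count >= 2:
        return "escalate", "repeated connectivity issue across past tickets"
    return "suggest_restart", "no prior pattern; start with the cheapest fix"
```

Calling `choose_step("Device offline again", 3)` returns `"escalate"` and appends one record to `DECISION_LOG`. Because the rationale is produced by the same branch that selects the action, the logged explanation reflects the rule that actually fired rather than a justification written afterwards.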

Real-world examples and caveats

Consider a customer support agent that suggests a troubleshooting step. An explanation might summarize relevant inputs (customer issue, past tickets, available tools) and show a brief rationale (recent patterns indicate X). In a medical setting, the bar is higher: the explanation should present supporting evidence and uncertainty levels. In finance, explanations should include audit trails and data provenance. Always beware of overclaiming. A common pitfall is post hoc rationalization, where explanations appear plausible but do not truthfully reflect the reasoning process. Another danger is leaking sensitive data through explanations, which can violate privacy or regulatory constraints. The best AI agent explanations balance transparency with security and practicality.
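One guard against leaking sensitive data is to mask known sensitive fields before an explanation leaves the system. The sketch below assumes explanations are nested dictionaries and lists; the `SENSITIVE_KEYS` set and the `redact` function are hypothetical. It matches on field names only, so it will not catch sensitive values embedded in free-text rationales, which need separate handling.

```python
SENSITIVE_KEYS = frozenset({"email", "phone", "account_number"})  # example list only


def redact(explanation, sensitive_keys=SENSITIVE_KEYS, mask="[REDACTED]"):
    """Return a copy of a nested explanation with sensitive fields masked.

    Walks dicts and lists recursively and leaves the original untouched.
    """
    if isinstance(explanation, dict):
        return {
            key: mask if key in sensitive_keys
            else redact(value, sensitive_keys, mask)
            for key, value in explanation.items()
        }
    if isinstance(explanation, list):
        return [redact(item, sensitive_keys, mask) for item in explanation]
    return explanation  # scalars pass through unchanged
```

Returning a masked copy instead of mutating the record keeps the full trail available for authorized audits while the user-facing version omits the sensitive fields.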

Measuring success and governance around AI agent explanations

Quantitative metrics for explainability are still evolving. Useful measures include fidelity (how closely explanations reflect actual reasoning), usefulness (how well explanations help users achieve goals), and stability (consistency across similar decisions). Qualitative methods such as user interviews and task success studies provide context. Governance considerations include access controls, data provenance, and versioning of explanations so teams can track changes over time. Ai Agent Ops notes that explainability is not a one-off feature but a continuous practice that scales with more complex agentic workflows. Organizations should embed explainability into development cycles, testing, and compliance reviews to ensure responsible use of AI.
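Fidelity and stability can be approximated with simple proxies. The sketch below makes two assumptions that go beyond the definitions above: fidelity is estimated as the share of cases where the action implied by the explanation matches the action the agent actually took, and stability is estimated as the Jaccard overlap between the features cited for two similar decisions. Both function names are hypothetical, and neither proxy replaces user studies.

```python
def fidelity(agent_actions, explained_actions):
    """Share of cases where the explanation implies the agent's real action."""
    if len(agent_actions) != len(explained_actions):
        raise ValueError("sequences must have the same length")
    if not agent_actions:
        raise ValueError("need at least one case")
    matches = sum(a == e for a, e in zip(agent_actions, explained_actions))
    return matches / len(agent_actions)


def stability(features_a, features_b):
    """Jaccard overlap between the features cited for two similar decisions.

    1.0 means both explanations cite the same features; 0.0 means none shared.
    """
    a, b = set(features_a), set(features_b)
    if not a and not b:
        return 1.0  # two empty explanations are trivially consistent
    return len(a & b) / len(a | b)
```

For example, `fidelity(["a", "b", "a", "c"], ["a", "b", "c", "c"])` is 0.75, and `stability(["x", "y", "z"], ["y", "z", "w"])` is 0.5. Tracking these numbers per explanation version gives governance reviews something concrete to compare over time.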

Questions & Answers

What is AI agent explanation?

AI agent explanation is the practice of clarifying how an AI agent arrives at its decisions, describing inputs, reasoning steps, and outcomes. It helps users understand, trust, and govern automated behavior.

AI agent explanation clarifies how decisions are made, including inputs and reasoning, to build trust and governance.

Why is explainability important for AI agents?

Explainability improves transparency, safety, and accountability, making it easier to audit agent behavior, comply with regulations, and earn stakeholder trust.

Explainability improves transparency, safety, and accountability for AI agents.

How do you implement AI agent explanations?

Start with the audience, choose a couple of explanation styles, integrate explanation hooks into the decision pipeline, and test with real users to refine usefulness and fidelity.

Begin with the user, pick a couple of explanation styles, and test with real users.

What are common pitfalls in explanations?

Post hoc rationalization and data leakage through explanations are common pitfalls. Always verify that explanations reflect actual reasoning and protect sensitive data.

Watch out for post hoc rationalization and protect data when explaining decisions.

How can you measure explainability?

Fidelity, usefulness, and stability are key metrics, complemented by user studies and governance checks to ensure explanations remain accurate and actionable.

Measure fidelity, usefulness, and stability with user studies and governance checks.

Do explanations affect performance?

Providing explanations can add latency or storage requirements. Design explanations to be lightweight for real-time use and offer deeper trails for post hoc analysis when needed.

Explanations may add latency; plan for lightweight real-time explanations and deeper post hoc options.

Key Takeaways

- Define audience before building explanations

- Balance fidelity with usability

- Combine explanation styles, but limit each scenario to one or two

- Validate with real users regularly

- Embed governance and privacy controls