MCP Host vs AI Agent: An Analytical Comparison

An analytical comparison of MCP host and AI agent architectures, covering governance, autonomy, latency, and integration trade-offs for developers and leaders exploring agentic workflows.

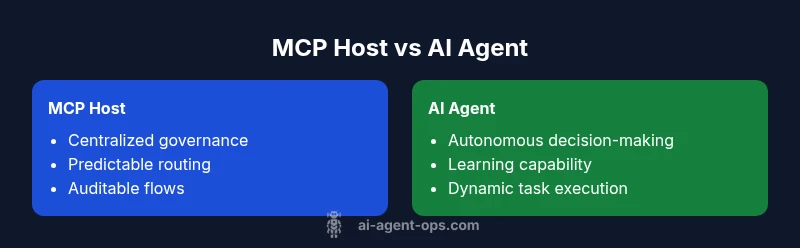

In short, the MCP host vs AI agent question pits centralized orchestration against autonomous execution. An MCP host offers predictable routing, governance, and traceability, while an AI agent delivers adaptive decision-making and task automation. The best choice depends on whether your priority is control and compliance or dynamic, self-directed workflows; hybrid patterns are increasingly common in modern systems.

Context: Why this comparison matters for developers and business leaders

According to Ai Agent Ops, the rise of agentic AI has sharpened the tension between centralized orchestration and autonomous agents. The MCP host vs AI agent question becomes salient when teams design systems that must balance strict governance with flexible execution. When teams evaluate automation lanes, the decision often hinges on how much control they need over data, policy enforcement, and human-in-the-loop safeguards. This article uses that comparison to unpack practical considerations, trade-offs, and hybrid patterns that organizations can adopt to move faster without sacrificing governance. The discussion references common architectural patterns, deployment realities, and risk factors that every development team should weigh before committing to one approach or a blended model. The Ai Agent Ops team emphasizes that both elements can coexist in a mature, agentic workflow, enabling resilient and scalable automation while preserving visibility and accountability.

The core definitions: MCP host and AI agent

To compare an MCP host with an AI agent effectively, we first clarify the core concepts. An MCP host typically acts as a centralized control plane that coordinates messages, enforces policies, and provides observability across distributed components. It excels at predictable routing, standardized interfaces, and auditable flows. An AI agent, by contrast, embodies autonomous decision-making: a software entity that perceives its environment, reasons about goals, and acts to achieve them, often drawing on what it has learned from prior outcomes. The distinction matters because architecture choices influence how work gets done, who is responsible for outcomes, and how quickly the system can adapt to new tasks. In many organizations, teams use a hybrid approach to combine human governance with agentic execution, especially in regulated industries where missteps carry outsized risk.

Key architectural differences: structure, interfaces, and safety

When you compare an MCP host with an AI agent, the architectural differences become apparent in four key dimensions: control surface, interface design, failure modes, and integration patterns. The MCP host generally defines strict, point-to-point or pipeline-based interfaces that mandate clear contracts and versioned schemas. AI agents rely on more dynamic interfaces, often with probabilistic outputs and confidence scores that require additional validation layers. Safety models differ as well: MCP hosts emphasize compliance controls, auditing, and deterministic behavior, while AI agents emphasize guardrails, containment, and runtime monitoring. The result is a spectrum between fully centralized orchestration and distributed, autonomous action. A pragmatic approach is to design a layered stack where an MCP host provides governance and routing, while AI agents handle execution within well-bounded policies and safety constraints. The Ai Agent Ops perspective is that governance should scale with autonomy, not diminish it, and that observation should drive iterative improvements across both layers.
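The layered stack described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a reference implementation: the `Host` class, the `AgentResult` type, the 0.8 confidence threshold, and the `summarize_agent` stand-in are all hypothetical names chosen for the example.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class AgentResult:
    output: str
    confidence: float  # 0.0 to 1.0, as reported by the agent


# Hypothetical agent signature: takes a task payload, returns a result.
Agent = Callable[[str], AgentResult]


class Host:
    """Toy governance layer: routes tasks and validates agent output."""

    def __init__(self, min_confidence: float = 0.8) -> None:
        self.min_confidence = min_confidence
        self.routes: Dict[str, Agent] = {}

    def register(self, task_type: str, agent: Agent) -> None:
        self.routes[task_type] = agent

    def handle(self, task_type: str, payload: str) -> str:
        agent = self.routes.get(task_type)
        if agent is None:
            # Deterministic behavior: unknown task types never reach an agent.
            return "rejected: no route for task type"
        result = agent(payload)
        if result.confidence < self.min_confidence:
            # Validation layer for probabilistic output.
            return "escalated: confidence below threshold"
        return result.output


def summarize_agent(payload: str) -> AgentResult:
    # Stand-in for a model call; the confidence rule is made up for the demo.
    confidence = 0.9 if len(payload.split()) >= 3 else 0.3
    return AgentResult(output=payload.upper(), confidence=confidence)


host = Host()
host.register("summarize", summarize_agent)
print(host.handle("summarize", "review this contract"))
print(host.handle("summarize", "hi"))
print(host.handle("delete", "anything"))
```

The host owns the contract (registered task types and a validation threshold), while the agent is free to produce whatever it likes inside that boundary.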

Control, governance, and compliance: who is responsible for outcomes?

Control and governance are often the deciding factor in the MCP host vs AI agent debate. MCP hosts offer explicit control points: policy engines, role-based access controls, versioned deployments, and centralized audit trails. These features make it easier to demonstrate compliance and to reproduce outcomes. AI agents introduce new questions around accountability: who is responsible for decisions if an agent acts autonomously? Organizations will likely need hybrid governance models that combine static policies with dynamic risk assessments, runtime monitoring, and escalation paths. Implementing explainability hooks, action logging, and tamper-evident records helps bridge the gap between autonomy and accountability. The Ai Agent Ops framework argues for transparent decision logs and modular safety policies that travel with agents as they move across systems.
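One common way to make an action log tamper-evident is to chain each record to the hash of the previous one, so that editing an earlier entry invalidates everything after it. The sketch below assumes an in-memory list and hypothetical helper names (`append_record`, `verify`); a production log would also need durable storage and protection of the latest hash.

```python
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # placeholder hash for the first record


def _digest(prev_hash: str, entry: Dict[str, str]) -> str:
    # Canonical JSON so the same entry always hashes the same way.
    body = json.dumps(entry, sort_keys=True)
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_record(log: List[dict], entry: Dict[str, str]) -> None:
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"entry": entry, "prev": prev_hash, "hash": _digest(prev_hash, entry)})


def verify(log: List[dict]) -> bool:
    prev_hash = GENESIS
    for record in log:
        expected = _digest(prev_hash, record["entry"])
        if record["prev"] != prev_hash or record["hash"] != expected:
            return False
        prev_hash = record["hash"]
    return True


log: List[dict] = []
append_record(log, {"agent": "a1", "action": "read_invoice"})
append_record(log, {"agent": "a1", "action": "send_email"})
print(verify(log))
```

Changing any stored entry after the fact makes `verify` return `False`, which is the property auditors usually care about.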

Latency, throughput, and scalability: performance implications of MCP host vs AI agent

Performance considerations differentiate the two paradigms at scale. An MCP host centralizes routing and policy checks, which can streamline throughput and reduce variance in latency when traffic patterns are predictable. However, centralized bottlenecks can arise under peak load or when complex policies are enforced, potentially increasing response times. AI agents introduce variability due to model inference, local reasoning, and discovery steps. They can scale horizontally but may require careful resource management, caching, and asynchronous designs to avoid cascading delays. A pragmatic approach is to combine a fast-path MCP host for routine flows with dedicated agentic lanes for tasks that benefit from autonomy, while ensuring end-to-end SLAs cover both pathways. The Ai Agent Ops emphasis on measurable latency and bounded decision windows helps teams design for reliable performance in both modes.
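A bounded decision window can be approximated with a timeout around the agentic lane and a deterministic fallback on the fast path. The following is a sketch only: `agent_lane`, `fast_path`, and the 50 ms window are hypothetical, and `asyncio.sleep` stands in for model inference.

```python
import asyncio


async def agent_lane(task: str, delay_s: float) -> str:
    # Stand-in for model inference with variable latency.
    await asyncio.sleep(delay_s)
    return f"agent:{task}"


def fast_path(task: str) -> str:
    # Deterministic fallback handled by the host.
    return f"host:{task}"


async def handle(task: str, delay_s: float, window_s: float = 0.05) -> str:
    try:
        # Give the agent a bounded window to respond.
        return await asyncio.wait_for(agent_lane(task, delay_s), timeout=window_s)
    except asyncio.TimeoutError:
        return fast_path(task)


print(asyncio.run(handle("classify", delay_s=0.0)))
print(asyncio.run(handle("classify", delay_s=0.5)))
```

With this shape, the end-to-end SLA is bounded by the window plus the fast-path cost, regardless of how long inference would have taken.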

Data handling, security, and privacy implications

Data handling is a critical axis in the MCP host vs AI agent discussion. MCP hosts typically enable centralized data governance: uniform encryption, traceable access, and auditable data lineage. AI agents may access diverse data sources and process sensitive information in decentralized contexts, which may call for strategies such as join-then-check or homomorphic encryption to maintain privacy. Hybrid architectures can route sensitive data through the MCP host for governance, while allowing agents to operate on de-identified or synthetic data when possible. Risk management frameworks require clear data ownership, consent controls, and robust incident response plans that span both orchestration and agent layers. The Ai Agent Ops guidance emphasizes implementing data minimization, role-based data exposure, and end-to-end encryption to preserve trust across the stack.
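As one illustration of routing sensitive data through the host, the sketch below replaces sensitive fields with keyed hashes before a record is handed to an agent. The field names in `SENSITIVE_FIELDS` and the `deidentify` helper are assumptions made for the example. Keyed hashing is pseudonymization rather than full anonymization, so it reduces exposure but does not remove re-identification risk on its own.

```python
import hashlib
import hmac
from typing import Dict

# Hypothetical list of fields the host treats as sensitive.
SENSITIVE_FIELDS = {"email", "ssn"}


def deidentify(record: Dict[str, str], key: bytes) -> Dict[str, str]:
    """Return a copy of the record with sensitive values replaced by tokens."""
    out: Dict[str, str] = {}
    for field, value in record.items():
        if field in SENSITIVE_FIELDS:
            # Keyed hash: stable per key, so joins still work downstream.
            token = hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()
            out[field] = token[:16]
        else:
            out[field] = value
    return out


record = {"email": "a@example.com", "plan": "pro"}
safe = deidentify(record, key=b"host-held-secret")
print(safe["plan"], len(safe["email"]))
```

Because the key stays with the host, agents can correlate records by token without ever seeing the underlying value.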

Learning, adaptation, and cost considerations

A central advantage of AI agents is their capacity to learn from outcomes and improve over time. This capability introduces new budgeting concerns, such as model training costs, data curation, and drift management. In MCP-hosted environments, learning cycles are typically external, with governance patches applied through controlled releases. Cost comparisons should account for hidden expenses like compliance overhead, tooling for monitoring, and the ongoing need to maintain safe escalation policies. A hybrid approach can separate concerns: the MCP host handles policy enforcement and risk management, while AI agents experiment with optimization strategies within restricted sandboxes. Ai Agent Ops recommends a staged adoption plan that tracks performance improvements against governance overhead to determine ROI over time.

When to choose an MCP host: controlled environments and predictable workflows

MCP hosts excel where the primary requirements are strict governance, auditable decision paths, and predictable end-to-end flows. Regulated industries, high-SLA environments, and organizations with legacy systems benefit from this pattern because it reduces the surface area for unexpected behavior. The MCP host provides reproducible results, easier root-cause analysis, and straightforward integration with existing monitoring and compliance tooling. In short, choose an MCP host when control, explainability, and compliance are non-negotiable and when autonomy is either limited or tightly scoped by policy.

When to choose an AI agent: dynamic tasks and autonomous workflows

AI agents shine when the work involves uncertain environments, evolving goals, or complex decision-making that benefits from learning. If speed to value, adaptability, and reduced manual intervention are priorities, an AI agent can accelerate delivery and unlock new capabilities. The trade-off is increased governance overhead, potential drift, and a need for sophisticated safety and monitoring. The best practice is to start with a pilot on a well-defined use case, establish guardrails, and iterate as you collect data on reliability, safety, and impact. The Ai Agent Ops framework advocates for strong instrumentation and incremental autonomy to manage risk while reaping the productivity gains.

Hybrid patterns: orchestrating agents with a host for balanced outcomes

A pragmatic path for many teams is to blend both paradigms. Use an MCP host as the backbone for policy, routing, and auditability, and plug AI agents behind safe boundaries where autonomy adds value. Hybrid patterns enable rapid experimentation while preserving governance and compliance. Design considerations include: clear escalation rules, bounded autonomy within sandboxed environments, robust observability across both layers, and a unified security model. The goal is to minimize conflict between centralized control and autonomous action by aligning incentives, data access, and risk thresholds across the stack. This approach often yields the best ROI when teams are transitioning toward more agentic workflows while retaining necessary governance.

Practical adoption patterns and common pitfalls

Adopting a combined MCP host and AI agent stack requires careful planning and staged execution. Start with governance-first pilots, identify KPIs for both control and autonomy, and document escalation paths. Pitfalls to watch for include overconstraining autonomy, underestimating data governance needs, and underinvesting in monitoring or explainability. A practical pattern is to deploy a minimal viable integration that routes non-sensitive tasks through the host while gradually introducing autonomous agents with limited scope. Regular audits, scenario testing, and red-teaming help surface hidden risks early. The Ai Agent Ops team stresses that iterative experimentation, paired with rigorous governance, is the most reliable pathway to scalable, agentic automation.

Implementation tips and guidelines for teams

To operationalize the choice between an MCP host and an AI agent, teams should define a joint architecture blueprint that covers data flows, decision boundaries, and escalation rules. Establish a policy engine that can be updated without redeployments, implement explainability features for agent decisions, and ensure robust observability with end-to-end tracing. Invest in modular components: a governance layer on top of the MCP host, a safety layer for agents, and a clear interface contract between them. Finally, create a feedback loop that assesses performance against KPIs, updates risk assessments, and refines safety policies. A disciplined rollout, guided by Ai Agent Ops principles, helps organizations avoid common missteps and accelerate learning.
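A policy engine that can change without a redeployment usually keeps its rules as data rather than code. The sketch below assumes a hypothetical JSON policy document with two keys, `allowed_actions` and `max_risk`; the `PolicyEngine` class and the three decision labels are illustrative choices, not a standard.

```python
import json
from dataclasses import dataclass
from typing import FrozenSet


@dataclass
class PolicyEngine:
    allowed_actions: FrozenSet[str] = frozenset()
    max_risk: float = 0.0

    def load(self, policy_json: str) -> None:
        # Swap in a new policy at runtime; no code change or restart needed.
        doc = json.loads(policy_json)
        self.allowed_actions = frozenset(doc["allowed_actions"])
        self.max_risk = float(doc["max_risk"])

    def decide(self, action: str, risk: float) -> str:
        if action not in self.allowed_actions:
            return "deny"
        if risk > self.max_risk:
            return "escalate"  # hand off to a human reviewer
        return "allow"


engine = PolicyEngine()
engine.load('{"allowed_actions": ["read"], "max_risk": 0.5}')
print(engine.decide("read", 0.2), engine.decide("read", 0.9), engine.decide("write", 0.1))

# Later, operations widen the policy without touching the code.
engine.load('{"allowed_actions": ["read", "write"], "max_risk": 0.5}')
print(engine.decide("write", 0.1))
```

Keeping the default policy empty means a freshly started engine denies everything until a policy is explicitly loaded, which is a conservative starting point for pilots.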

Industry considerations, benchmarks, and future trends

Across industries, the MCP host vs AI agent debate reflects broader trends toward agentic AI and automation orchestration. Organizations are increasingly seeking scalable governance models that can support autonomous decisions without sacrificing accountability. Benchmarking efforts focus on throughput, latency, accuracy of agent decisions, and the cost of governance overhead. Looking ahead, expect tighter integration between orchestration layers and agentic components, richer safety frameworks, and more standardized interfaces that reduce integration friction. Staying aligned with evolving standards and best practices, as outlined by Ai Agent Ops, will help teams navigate transitions smoothly.
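Benchmarks like these are easier to compare when both pathways emit the same per-task record. The sketch below is one possible shape, assuming a hypothetical `TaskRecord` with latency, completion, and escalation fields, and using the nearest-rank method for the 95th percentile.

```python
import math
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class TaskRecord:
    latency_ms: float
    completed: bool
    escalated: bool


def summarize(records: List[TaskRecord]) -> Dict[str, float]:
    if not records:
        raise ValueError("need at least one record")
    n = len(records)
    latencies = sorted(r.latency_ms for r in records)
    rank = math.ceil(0.95 * n)  # nearest-rank 95th percentile
    return {
        "completion_rate": sum(r.completed for r in records) / n,
        "escalation_rate": sum(r.escalated for r in records) / n,
        "p95_latency_ms": latencies[rank - 1],
    }


records = [
    TaskRecord(100.0, True, False),
    TaskRecord(200.0, True, True),
    TaskRecord(300.0, True, False),
    TaskRecord(400.0, False, False),
]
print(summarize(records))
```

Running the same summary over host-only and agent-assisted traffic gives a like-for-like view of latency against escalation overhead.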

References

For readers seeking external validation and deeper understanding, consult authoritative sources that discuss AI governance, agentic systems, and orchestration patterns. You can review foundational guidelines from national standards bodies and leading academic centers, as well as cutting-edge findings from major publications. These references provide context for the ongoing evolution of MCP-hosted orchestration and agent-driven automation, and help teams design more resilient architectures in practice.

Authoritative references:

- https://www.nist.gov/topics/artificial-intelligence

- https://ai.stanford.edu/

- https://www.nature.com/subjects/artificial-intelligence

Comparison

| Feature | MCP Host | AI Agent |
|---|---|---|
| Control and governance | Centralized policy enforcement and auditing | Distributed autonomy with guardrails |
| Decision autonomy | Low to moderate; human-in-the-loop common | High; goal-directed actions and self-direction |
| Latency and throughput | Predictable routing; potential bottlenecks under load | Inference-driven latency; scalable with resources |
| Maintenance and updates | Controlled updates via governance pipelines | Continuous learning requiring model management |
| Failure handling | Deterministic failover and rollback | Probabilistic decisions require monitoring and containment |
| Data handling and security | Centralized data governance and access control | Data locality concerns; need for privacy-preserving designs |
| Best for | Regulated environments, high predictability | Dynamic workloads, rapid experimentation |

Positives

- Clear governance with predictable SLAs

- Stronger data control and compliance

- Easier debugging and traceability

- Lower risk of unintended actions

What's Bad

- Less adaptability to unknown tasks

- Higher operational overhead for orchestration

- Potential latency due to centralized routing

Hybrid orchestration with a host plus agent layers is the most flexible path

Use an MCP host for governance and routing, add AI agents for autonomous execution within safe boundaries. This combo balances control with adaptability and scales more robustly as needs evolve.

Questions & Answers

What is an MCP host and how does it relate to AI agents?

An MCP host coordinates messages and policies in a centralized way, while AI agents operate with autonomy to decide and act. Understanding their roles helps teams design hybrid architectures that balance control with adaptive automation.

An MCP host keeps things predictable, and AI agents handle autonomous tasks. Together they offer a balanced approach to automation.

Which architecture is better for regulated industries?

Regulated environments typically benefit from the governance and auditability of an MCP host. Autonomous agents can be used in bounded contexts with strong guardrails and escalation paths to maintain compliance.

Regulated sectors often prefer governance-heavy hosts, with agents used in safe, controlled contexts.

Can MCP hosts and AI agents be deployed together?

Yes. A common pattern is to use the MCP host for orchestration and policy enforcement, while AI agents handle complex decision-making within predefined constraints. This setup aims to maximize reliability and speed to value.

Absolutely—start with a shared layer and expand autonomy gradually.

What metrics matter when comparing these approaches?

Consider governance coverage, decision latency, reliability, and the cost of maintaining guardrails. Also track task completion rate, user escalation frequency, and the impact of automation on throughput.

Look at governance, latency, reliability, and overall automation ROI.

What are common risks of AI agents in production?

Risks include unintended actions, data leakage, model drift, and explainability gaps. Mitigate with strong guardrails, logging, and containment strategies plus a clear escalation path.

Key risks are unintended actions and drift—guardrails and logs help a lot.

What should a migration plan look like?

Start with a small, high-value use case, implement robust observability, and layer guardrails around agent decisions. Progressively broaden autonomy as you prove reliability and governance alignment.

Begin with a small pilot, add guardrails, then expand autonomy as you prove it safe.

Key Takeaways

- Define clear decision boundaries between host and agent layers

- Prioritize governance when data sensitivity is high

- Pilot autonomous tasks in controlled sandboxes

- Invest in observability across both components

- Plan for hybrid patterns to maximize ROI